Compare commits

9 Commits: devin/1750 ... lg-mcp-cre

| Author | SHA1 | Date | |
|---|---|---|---|
| | 4a7c21f0e7 | | |
| | 060c486948 | | |
| | 8b176d0598 | | |
| | c96d4a6823 | | |
| | 59032817c7 | | |
| | e9d8a853ea | | |
| | 463ea2b97f | | |
| | ec2903e5ee | | |
| | 4364585ebc | | |

45

.github/workflows/mkdocs.yml

vendored

@@ -1,45 +0,0 @@

name: Deploy MkDocs

on:
  release:
    types: [published]

permissions:
  contents: write

jobs:
  deploy:
    runs-on: ubuntu-latest

    steps:
      - name: Checkout code
        uses: actions/checkout@v4

      - name: Setup Python
        uses: actions/setup-python@v5
        with:
          python-version: '3.10'

      - name: Calculate requirements hash
        id: req-hash
        run: echo "::set-output name=hash::$(sha256sum requirements-doc.txt | awk '{print $1}')"

      - name: Setup cache
        uses: actions/cache@v4
        with:
          key: mkdocs-material-${{ steps.req-hash.outputs.hash }}
          path: .cache
          restore-keys: |
            mkdocs-material-

      - name: Install Requirements
        run: |
          sudo apt-get update &&
          sudo apt-get install pngquant &&
          pip install mkdocs-material mkdocs-material-extensions pillow cairosvg

        env:
          GH_TOKEN: ${{ secrets.GH_TOKEN }}

      - name: Build and deploy MkDocs
        run: mkdocs gh-deploy --force

@@ -134,7 +134,7 @@

|

||||

"tools/web-scraping/stagehandtool",

|

||||

"tools/web-scraping/firecrawlcrawlwebsitetool",

|

||||

"tools/web-scraping/firecrawlscrapewebsitetool",

|

||||

"tools/web-scraping/firecrawlsearchtool"

|

||||

"tools/web-scraping/oxylabsscraperstool"

|

||||

]

|

||||

},

|

||||

{

|

||||

|

||||

@@ -124,7 +124,7 @@ from crewai_tools import CrewaiEnterpriseTools

|

||||

enterprise_tools = CrewaiEnterpriseTools(

|

||||

actions_list=["gmail_find_email"] # only gmail_find_email tool will be available

|

||||

)

|

||||

gmail_tool = enterprise_tools[0]

|

||||

gmail_tool = enterprise_tools["gmail_find_email"]

|

||||

|

||||

gmail_agent = Agent(

|

||||

role="Gmail Manager",

|

||||

|

||||

BIN docs/images/crewai_traces.gif (Normal file, 10 MiB)

BIN docs/images/maxim_agent_tracking.png (Normal file, 1.3 MiB)

BIN docs/images/maxim_alerts_1.png (Normal file, 1.1 MiB)

BIN docs/images/maxim_dashboard_1.png (Normal file, 617 KiB)

BIN docs/images/maxim_playground.png (Normal file, 1.2 MiB)

BIN docs/images/maxim_trace_eval.png (Normal file, 845 KiB)

BIN docs/images/maxim_versions.png (Normal file, 1.3 MiB)

@@ -6,11 +6,11 @@ icon: plug

|

||||

|

||||

## Overview

|

||||

|

||||

The [Model Context Protocol](https://modelcontextprotocol.io/introduction) (MCP) provides a standardized way for AI agents to provide context to LLMs by communicating with external services, known as MCP Servers.

|

||||

The `crewai-tools` library extends CrewAI's capabilities by allowing you to seamlessly integrate tools from these MCP servers into your agents.

|

||||

This gives your crews access to a vast ecosystem of functionalities.

|

||||

The [Model Context Protocol](https://modelcontextprotocol.io/introduction) (MCP) provides a standardized way for AI agents to provide context to LLMs by communicating with external services, known as MCP Servers.

|

||||

The `crewai-tools` library extends CrewAI's capabilities by allowing you to seamlessly integrate tools from these MCP servers into your agents.

|

||||

This gives your crews access to a vast ecosystem of functionalities.

|

||||

|

||||

We currently support the following transport mechanisms:

|

||||

We currently support the following transport mechanisms:

|

||||

|

||||

- **Stdio**: for local servers (communication via standard input/output between processes on the same machine)

|

||||

- **Server-Sent Events (SSE)**: for remote servers (unidirectional, real-time data streaming from server to client over HTTP)

|

||||

@@ -52,27 +52,27 @@ from mcp import StdioServerParameters # For Stdio Server

|

||||

# Example server_params (choose one based on your server type):

|

||||

# 1. Stdio Server:

|

||||

server_params=StdioServerParameters(

|

||||

command="python3",

|

||||

command="python3",

|

||||

args=["servers/your_server.py"],

|

||||

env={"UV_PYTHON": "3.12", **os.environ},

|

||||

)

|

||||

|

||||

# 2. SSE Server:

|

||||

server_params = {

|

||||

"url": "http://localhost:8000/sse",

|

||||

"url": "http://localhost:8000/sse",

|

||||

"transport": "sse"

|

||||

}

|

||||

|

||||

# 3. Streamable HTTP Server:

|

||||

server_params = {

|

||||

"url": "http://localhost:8001/mcp",

|

||||

"url": "http://localhost:8001/mcp",

|

||||

"transport": "streamable-http"

|

||||

}

|

||||

|

||||

# Example usage (uncomment and adapt once server_params is set):

|

||||

with MCPServerAdapter(server_params) as mcp_tools:

|

||||

print(f"Available tools: {[tool.name for tool in mcp_tools]}")

|

||||

|

||||

|

||||

my_agent = Agent(

|

||||

role="MCP Tool User",

|

||||

goal="Utilize tools from an MCP server.",

|

||||

@@ -85,44 +85,95 @@ with MCPServerAdapter(server_params) as mcp_tools:

|

||||

```

|

||||

This general pattern shows how to integrate tools. For specific examples tailored to each transport, refer to the detailed guides below.
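As a rough illustration of the dictionary-style configuration used for the remote transports above, the sketch below builds the `url`/`transport` dictionary and rejects unknown transport names. The helper name `make_remote_server_params` is hypothetical and not part of `crewai-tools`; only the two keys and the two transport strings are taken from the example above.

```python
from urllib.parse import urlparse

# Transport names taken from the example above; the helper itself is hypothetical.
REMOTE_TRANSPORTS = {"sse", "streamable-http"}


def make_remote_server_params(url: str, transport: str) -> dict:
    """Build a server_params dict for a remote MCP server."""
    if transport not in REMOTE_TRANSPORTS:
        raise ValueError(f"Unsupported transport: {transport!r}")
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https") or not parsed.netloc:
        raise ValueError(f"Expected an http(s) URL, got: {url!r}")
    return {"url": url, "transport": transport}


sse_params = make_remote_server_params("http://localhost:8000/sse", "sse")
http_params = make_remote_server_params("http://localhost:8001/mcp", "streamable-http")
```

Validating the transport name up front is a design choice of this sketch: it surfaces a typo such as `"streamable_http"` before any connection attempt is made.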

|

||||

|

||||

## Filtering Tools

|

||||

|

||||

```python

|

||||

with MCPServerAdapter(server_params) as mcp_tools:

|

||||

print(f"Available tools: {[tool.name for tool in mcp_tools]}")

|

||||

|

||||

my_agent = Agent(

|

||||

role="MCP Tool User",

|

||||

goal="Utilize tools from an MCP server.",

|

||||

backstory="I can connect to MCP servers and use their tools.",

|

||||

tools=[mcp_tools["tool_name"]],  # Pass only the selected tool to your agent

|

||||

reasoning=True,

|

||||

verbose=True

|

||||

)

|

||||

# ... rest of your crew setup ...

|

||||

```
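The snippet above picks a single tool by name. When several tools are needed, the same idea can be sketched with plain Python. This example does not use `MCPServerAdapter`: `StubTool` and `pick_tools` are hypothetical stand-ins, and the only assumption carried over from the code above is that each tool exposes a `name` attribute.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class StubTool:
    """Stand-in for an MCP-backed tool; only the `name` attribute matters here."""
    name: str


def pick_tools(tools: Iterable, wanted: Iterable[str]) -> List:
    """Return the tools whose names are in `wanted`, preserving the original order."""
    wanted_set = set(wanted)
    selected = [tool for tool in tools if tool.name in wanted_set]
    missing = wanted_set - {tool.name for tool in selected}
    if missing:
        # Fail loudly instead of silently handing the agent fewer tools.
        raise KeyError(f"Tools not offered by the server: {sorted(missing)}")
    return selected


available = [StubTool("search"), StubTool("fetch"), StubTool("summarize")]
selected = pick_tools(available, ["summarize", "search"])
print([tool.name for tool in selected])
```

The resulting list could then be passed as `tools=selected` when constructing an agent.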

|

||||

|

||||

## Using with CrewBase

|

||||

|

||||

To use MCP server tools within a `CrewBase` class, call the `get_mcp_tools()` method. Provide the server configurations via the `mcp_server_params` attribute, either as a single configuration or as a list of multiple server configurations.

|

||||

|

||||

```python

|

||||

@CrewBase

|

||||

class CrewWithMCP:

|

||||

# ... define your agents and tasks config file ...

|

||||

|

||||

mcp_server_params = [

|

||||

# Streamable HTTP Server

|

||||

{

|

||||

"url": "http://localhost:8001/mcp",

|

||||

"transport": "streamable-http"

|

||||

},

|

||||

# SSE Server

|

||||

{

|

||||

"url": "http://localhost:8000/sse",

|

||||

"transport": "sse"

|

||||

},

|

||||

# StdIO Server

|

||||

StdioServerParameters(

|

||||

command="python3",

|

||||

args=["servers/your_stdio_server.py"],

|

||||

env={"UV_PYTHON": "3.12", **os.environ},

|

||||

)

|

||||

]

|

||||

|

||||

@agent

|

||||

def your_agent(self):

|

||||

return Agent(config=self.agents_config["your_agent"], tools=self.get_mcp_tools())  # you can also filter which tools are available

|

||||

|

||||

# ... rest of your crew setup ...

|

||||

```

|

||||

## Explore MCP Integrations

|

||||

|

||||

<CardGroup cols={2}>

|

||||

<Card

|

||||

title="Stdio Transport"

|

||||

icon="server"

|

||||

<Card

|

||||

title="Stdio Transport"

|

||||

icon="server"

|

||||

href="/mcp/stdio"

|

||||

color="#3B82F6"

|

||||

>

|

||||

Connect to local MCP servers via standard input/output. Ideal for scripts and local executables.

|

||||

</Card>

|

||||

<Card

|

||||

title="SSE Transport"

|

||||

icon="wifi"

|

||||

<Card

|

||||

title="SSE Transport"

|

||||

icon="wifi"

|

||||

href="/mcp/sse"

|

||||

color="#10B981"

|

||||

>

|

||||

Integrate with remote MCP servers using Server-Sent Events for real-time data streaming.

|

||||

</Card>

|

||||

<Card

|

||||

title="Streamable HTTP Transport"

|

||||

icon="globe"

|

||||

<Card

|

||||

title="Streamable HTTP Transport"

|

||||

icon="globe"

|

||||

href="/mcp/streamable-http"

|

||||

color="#F59E0B"

|

||||

>

|

||||

Utilize flexible Streamable HTTP for robust communication with remote MCP servers.

|

||||

</Card>

|

||||

<Card

|

||||

title="Connecting to Multiple Servers"

|

||||

icon="layer-group"

|

||||

<Card

|

||||

title="Connecting to Multiple Servers"

|

||||

icon="layer-group"

|

||||

href="/mcp/multiple-servers"

|

||||

color="#8B5CF6"

|

||||

>

|

||||

Aggregate tools from several MCP servers simultaneously using a single adapter.

|

||||

</Card>

|

||||

<Card

|

||||

title="Security Considerations"

|

||||

icon="lock"

|

||||

<Card

|

||||

title="Security Considerations"

|

||||

icon="lock"

|

||||

href="/mcp/security"

|

||||

color="#EF4444"

|

||||

>

|

||||

@@ -132,7 +183,7 @@ This general pattern shows how to integrate tools. For specific examples tailore

|

||||

|

||||

Check out this repository for full demos and examples of MCP integration with CrewAI! 👇

|

||||

|

||||

<Card

|

||||

<Card

|

||||

title="GitHub Repository"

|

||||

icon="github"

|

||||

href="https://github.com/tonykipkemboi/crewai-mcp-demo"

|

||||

@@ -147,7 +198,7 @@ Always ensure that you trust an MCP Server before using it.

|

||||

</Warning>

|

||||

|

||||

#### Security Warning: DNS Rebinding Attacks

|

||||

SSE transports can be vulnerable to DNS rebinding attacks if not properly secured.

|

||||

SSE transports can be vulnerable to DNS rebinding attacks if not properly secured.

|

||||

To prevent this:

|

||||

|

||||

1. **Always validate Origin headers** on incoming SSE connections to ensure they come from expected sources

|

||||

@@ -159,6 +210,6 @@ Without these protections, attackers could use DNS rebinding to interact with lo

|

||||

For more details, see [Anthropic's MCP Transport Security docs](https://modelcontextprotocol.io/docs/concepts/transports#security-considerations).
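The Origin check recommended above could look roughly like the following on the server side. This is a minimal, framework-independent sketch: `ALLOWED_ORIGINS` and `is_allowed_origin` are hypothetical names, the allow-list values are placeholders, and rejecting requests that carry no `Origin` header is a deliberately conservative choice of this example rather than a requirement stated in the source.

```python
from typing import Optional
from urllib.parse import urlsplit

# Hypothetical allow-list; replace with the origins your clients actually use.
ALLOWED_ORIGINS = {"http://localhost:8000", "https://app.example.com"}


def is_allowed_origin(origin_header: Optional[str], allowed=ALLOWED_ORIGINS) -> bool:
    """Return True only when the Origin header exactly matches an allowed origin."""
    if not origin_header:
        return False  # conservative: no Origin header means no access
    try:
        parts = urlsplit(origin_header)
    except ValueError:
        return False  # malformed header value
    if parts.scheme not in ("http", "https") or not parts.netloc:
        return False
    # Compare scheme://host[:port] only, case-insensitively.
    normalized = f"{parts.scheme}://{parts.netloc}".lower()
    return normalized in allowed
```

An SSE endpoint would call this before opening the stream and respond with an error status when it returns `False`.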

|

||||

|

||||

### Limitations

|

||||

* **Supported Primitives**: Currently, `MCPServerAdapter` primarily supports adapting MCP `tools`.

|

||||

* **Supported Primitives**: Currently, `MCPServerAdapter` primarily supports adapting MCP `tools`.

|

||||

Other MCP primitives like `prompts` or `resources` are not directly integrated as CrewAI components through this adapter at this time.

|

||||

* **Output Handling**: The adapter typically processes the primary text output from an MCP tool (e.g., `.content[0].text`). Complex or multi-modal outputs might require custom handling if not fitting this pattern.
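To make the `.content[0].text` pattern mentioned above concrete, here is a small sketch of extracting the first text item from a result-like object. It is not the adapter's actual implementation: `StubContent`, `StubResult`, and `first_text` are hypothetical stand-ins that only mirror the shape implied by `.content[0].text`.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class StubContent:
    """Stand-in for one content item of a tool result."""
    type: str
    text: Optional[str] = None


@dataclass
class StubResult:
    """Stand-in for a tool result exposing a `content` list."""
    content: List[StubContent] = field(default_factory=list)


def first_text(result) -> Optional[str]:
    """Return the text of the first text-bearing content item, or None."""
    for item in getattr(result, "content", None) or []:
        text = getattr(item, "text", None)
        if isinstance(text, str):
            return text
    return None


mixed = StubResult(content=[StubContent(type="image"), StubContent(type="text", text="42 results")])
print(first_text(mixed))
```

Scanning for the first text item, rather than indexing `content[0]` directly, is one way to tolerate results whose first item is an image or another non-text payload.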

|

||||

|

||||

@@ -30,18 +30,29 @@ Set your Langfuse API keys and configure OpenTelemetry export settings to send t

|

||||

|

||||

```python

|

||||

import os

|

||||

import base64

|

||||

|

||||

# Get keys for your project from the project settings page: https://cloud.langfuse.com

|

||||

os.environ["LANGFUSE_PUBLIC_KEY"] = "pk-lf-..."

|

||||

os.environ["LANGFUSE_SECRET_KEY"] = "sk-lf-..."

|

||||

os.environ["LANGFUSE_HOST"] = "https://cloud.langfuse.com" # 🇪🇺 EU region

|

||||

# os.environ["LANGFUSE_HOST"] = "https://us.cloud.langfuse.com" # 🇺🇸 US region

|

||||

|

||||

|

||||

# Your OpenAI key

|

||||

os.environ["OPENAI_API_KEY"] = "sk-proj-..."

|

||||

```

|

||||

With the environment variables set, we can now initialize the Langfuse client. `get_client()` reads the credentials from the environment variables.

|

||||

|

||||

LANGFUSE_PUBLIC_KEY="pk-lf-..."

|

||||

LANGFUSE_SECRET_KEY="sk-lf-..."

|

||||

LANGFUSE_AUTH=base64.b64encode(f"{LANGFUSE_PUBLIC_KEY}:{LANGFUSE_SECRET_KEY}".encode()).decode()

|

||||

|

||||

os.environ["OTEL_EXPORTER_OTLP_ENDPOINT"] = "https://cloud.langfuse.com/api/public/otel" # EU data region

|

||||

# os.environ["OTEL_EXPORTER_OTLP_ENDPOINT"] = "https://us.cloud.langfuse.com/api/public/otel" # US data region

|

||||

os.environ["OTEL_EXPORTER_OTLP_HEADERS"] = f"Authorization=Basic {LANGFUSE_AUTH}"

|

||||

|

||||

# your openai key

|

||||

os.environ["OPENAI_API_KEY"] = "sk-..."

|

||||

```python

|

||||

from langfuse import get_client

|

||||

|

||||

langfuse = get_client()

|

||||

|

||||

# Verify connection

|

||||

if langfuse.auth_check():

|

||||

print("Langfuse client is authenticated and ready!")

|

||||

else:

|

||||

print("Authentication failed. Please check your credentials and host.")

|

||||

```
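The hunk above also shows the manual OTLP setup, in which the public and secret keys are combined into a Basic credential for `OTEL_EXPORTER_OTLP_HEADERS`. A minimal sketch of that encoding step follows; the keys are placeholders and `basic_auth_header` is a hypothetical helper name, not a Langfuse function.

```python
import base64


def basic_auth_header(public_key: str, secret_key: str) -> str:
    """Build an `Authorization=Basic ...` value from a key pair."""
    # HTTP Basic credentials are base64("<user>:<password>").
    token = base64.b64encode(f"{public_key}:{secret_key}".encode()).decode()
    return f"Authorization=Basic {token}"


# Placeholder keys for illustration only.
header = basic_auth_header("pk-lf-example", "sk-lf-example")
```

Base64 is an encoding, not encryption, so the resulting header should be treated as a secret just like the keys themselves.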

|

||||

|

||||

### Step 3: Initialize OpenLit

|

||||

|

||||

@@ -1,28 +1,107 @@

|

||||

---

|

||||

title: Maxim Integration

|

||||

description: Start Agent monitoring, evaluation, and observability

|

||||

icon: bars-staggered

|

||||

title: "Maxim Integration"

|

||||

description: "Start Agent monitoring, evaluation, and observability"

|

||||

icon: "infinity"

|

||||

---

|

||||

|

||||

# Maxim Integration

|

||||

# Maxim Overview

|

||||

|

||||

Maxim AI provides comprehensive agent monitoring, evaluation, and observability for your CrewAI applications. With Maxim's one-line integration, you can easily trace and analyse agent interactions, performance metrics, and more.

|

||||

|

||||

## Features

|

||||

|

||||

## Features: One Line Integration

|

||||

### Prompt Management

|

||||

|

||||

- **End-to-End Agent Tracing**: Monitor the complete lifecycle of your agents

|

||||

- **Performance Analytics**: Track latency, tokens consumed, and costs

|

||||

- **Hyperparameter Monitoring**: View the configuration details of your agent runs

|

||||

- **Tool Call Tracking**: Observe when and how agents use their tools

|

||||

- **Advanced Visualisation**: Understand agent trajectories through intuitive dashboards

|

||||

Maxim's Prompt Management capabilities enable you to create, organize, and optimize prompts for your CrewAI agents. Rather than hardcoding instructions, leverage Maxim’s SDK to dynamically retrieve and apply version-controlled prompts.

|

||||

|

||||

<Tabs>

|

||||

<Tab title="Prompt Playground">

|

||||

Create, refine, experiment with, and deploy your prompts via the playground. Organize your prompts using folders and versions, experiment with real-world cases by linking tools and context, and deploy based on custom logic.

|

||||

|

||||

Easily experiment across models by [**configuring models**](https://www.getmaxim.ai/docs/introduction/quickstart/setting-up-workspace#add-model-api-keys) and selecting the relevant model from the dropdown at the top of the prompt playground.

|

||||

|

||||

<img src='https://raw.githubusercontent.com/akmadan/crewAI/docs_maxim_observability/docs/images/maxim_playground.png'> </img>

|

||||

</Tab>

|

||||

<Tab title="Prompt Versions">

|

||||

As teams build their AI applications, a big part of experimentation is iterating on the prompt structure. In order to collaborate effectively and organize your changes clearly, Maxim allows prompt versioning and comparison runs across versions.

|

||||

|

||||

<img src='https://raw.githubusercontent.com/akmadan/crewAI/docs_maxim_observability/docs/images/maxim_versions.png'> </img>

|

||||

</Tab>

|

||||

<Tab title="Prompt Comparisons">

|

||||

Iterating on prompts as your AI application evolves requires experiments across models, prompt structures, and more. To help you compare versions and make informed decisions about changes, the comparison playground shows results side by side.

|

||||

|

||||

## **Why use Prompt comparison?**

|

||||

|

||||

Prompt comparison combines multiple single Prompts into one view, enabling a streamlined approach for various workflows:

|

||||

|

||||

1. **Model comparison**: Evaluate the performance of different models on the same Prompt.

|

||||

2. **Prompt optimization**: Compare different versions of a Prompt to identify the most effective formulation.

|

||||

3. **Cross-Model consistency**: Ensure consistent outputs across various models for the same Prompt.

|

||||

4. **Performance benchmarking**: Analyze metrics like latency, cost, and token count across different models and Prompts.

|

||||

</Tab>

|

||||

</Tabs>

|

||||

|

||||

### Observability & Evals

|

||||

|

||||

Maxim AI provides comprehensive observability & evaluation for your CrewAI agents, helping you understand exactly what's happening during each execution.

|

||||

|

||||

<Tabs>

|

||||

<Tab title="Agent Tracing">

|

||||

Track your agent’s complete lifecycle, including tool calls, agent trajectories, and decision flows effortlessly.

|

||||

|

||||

<img src='https://raw.githubusercontent.com/akmadan/crewAI/docs_maxim_observability/docs/images/maxim_agent_tracking.png'> </img>

|

||||

</Tab>

|

||||

<Tab title="Analytics + Evals">

|

||||

Run detailed evaluations on full traces or individual nodes with support for:

|

||||

|

||||

- Multi-step interactions and granular trace analysis

|

||||

- Session Level Evaluations

|

||||

- Simulations for real-world testing

|

||||

|

||||

<img src='https://raw.githubusercontent.com/akmadan/crewAI/docs_maxim_observability/docs/images/maxim_trace_eval.png'> </img>

|

||||

|

||||

<CardGroup cols={3}>

|

||||

<Card title="Auto Evals on Logs" icon="e" href="https://www.getmaxim.ai/docs/observe/how-to/evaluate-logs/auto-evaluation">

|

||||

<p>

|

||||

Evaluate captured logs automatically from the UI based on filters and sampling

|

||||

|

||||

</p>

|

||||

</Card>

|

||||

<Card title="Human Evals on Logs" icon="hand" href="https://www.getmaxim.ai/docs/observe/how-to/evaluate-logs/human-evaluation">

|

||||

<p>

|

||||

Use human evaluation or ratings to assess the quality of your logs.

|

||||

|

||||

</p>

|

||||

</Card>

|

||||

<Card title="Node Level Evals" icon="road" href="https://www.getmaxim.ai/docs/observe/how-to/evaluate-logs/node-level-evaluation">

|

||||

<p>

|

||||

Evaluate any component of your trace or log to gain insights into your agent’s behavior.

|

||||

|

||||

</p>

|

||||

</Card>

|

||||

</CardGroup>

|

||||

---

|

||||

</Tab>

|

||||

<Tab title="Alerting">

|

||||

Set thresholds on **errors**, **cost**, **token usage**, **user feedback**, and **latency**, and get real-time alerts via Slack or PagerDuty.

|

||||

|

||||

<img src='https://raw.githubusercontent.com/akmadan/crewAI/docs_maxim_observability/docs/images/maxim_alerts_1.png'> </img>

|

||||

</Tab>

|

||||

<Tab title="Dashboards">

|

||||

Visualize Traces over time, usage metrics, latency & error rates with ease.

|

||||

|

||||

<img src='https://raw.githubusercontent.com/akmadan/crewAI/docs_maxim_observability/docs/images/maxim_dashboard_1.png'> </img>

|

||||

</Tab>

|

||||

</Tabs>

|

||||

|

||||

## Getting Started

|

||||

|

||||

### Prerequisites

|

||||

|

||||

- Python version >=3.10

|

||||

|

||||

- Python version \>=3.10

|

||||

- A Maxim account ([sign up here](https://getmaxim.ai/))

|

||||

- Generate Maxim API Key

|

||||

- A CrewAI project

|

||||

|

||||

### Installation

|

||||

@@ -30,16 +109,14 @@ Maxim AI provides comprehensive agent monitoring, evaluation, and observability

|

||||

Install the Maxim SDK via pip:

|

||||

|

||||

```shell

|

||||

pip install "maxim-py>=3.6.2"

|

||||

pip install maxim-py

|

||||

```

|

||||

|

||||

Or add it to your `requirements.txt`:

|

||||

|

||||

```

|

||||

maxim-py>=3.6.2

|

||||

maxim-py

|

||||

```

|

||||

|

||||

|

||||

### Basic Setup

|

||||

|

||||

### 1. Set up environment variables

|

||||

@@ -64,18 +141,15 @@ from maxim.logger.crewai import instrument_crewai

|

||||

|

||||

### 3. Initialise Maxim with your API key

|

||||

|

||||

```python

|

||||

# Initialize Maxim logger

|

||||

logger = Maxim().logger()

|

||||

|

||||

```python {8}

|

||||

# Instrument CrewAI with just one line

|

||||

instrument_crewai(logger)

|

||||

instrument_crewai(Maxim().logger())

|

||||

```

|

||||

|

||||

### 4. Create and run your CrewAI application as usual

|

||||

|

||||

```python

|

||||

|

||||

# Create your agent

|

||||

researcher = Agent(

|

||||

role='Senior Research Analyst',

|

||||

@@ -105,7 +179,8 @@ finally:

|

||||

maxim.cleanup() # Ensure cleanup happens even if errors occur

|

||||

```

|

||||

|

||||

That's it! All your CrewAI agent interactions will now be logged and available in your Maxim dashboard.

|

||||

|

||||

That's it\! All your CrewAI agent interactions will now be logged and available in your Maxim dashboard.

|

||||

|

||||

Check this Google Colab Notebook for a quick reference - [Notebook](https://colab.research.google.com/drive/1ZKIZWsmgQQ46n8TH9zLsT1negKkJA6K8?usp=sharing)

|

||||

|

||||

@@ -113,40 +188,44 @@ Check this Google Colab Notebook for a quick reference - [Notebook](https://cola

|

||||

|

||||

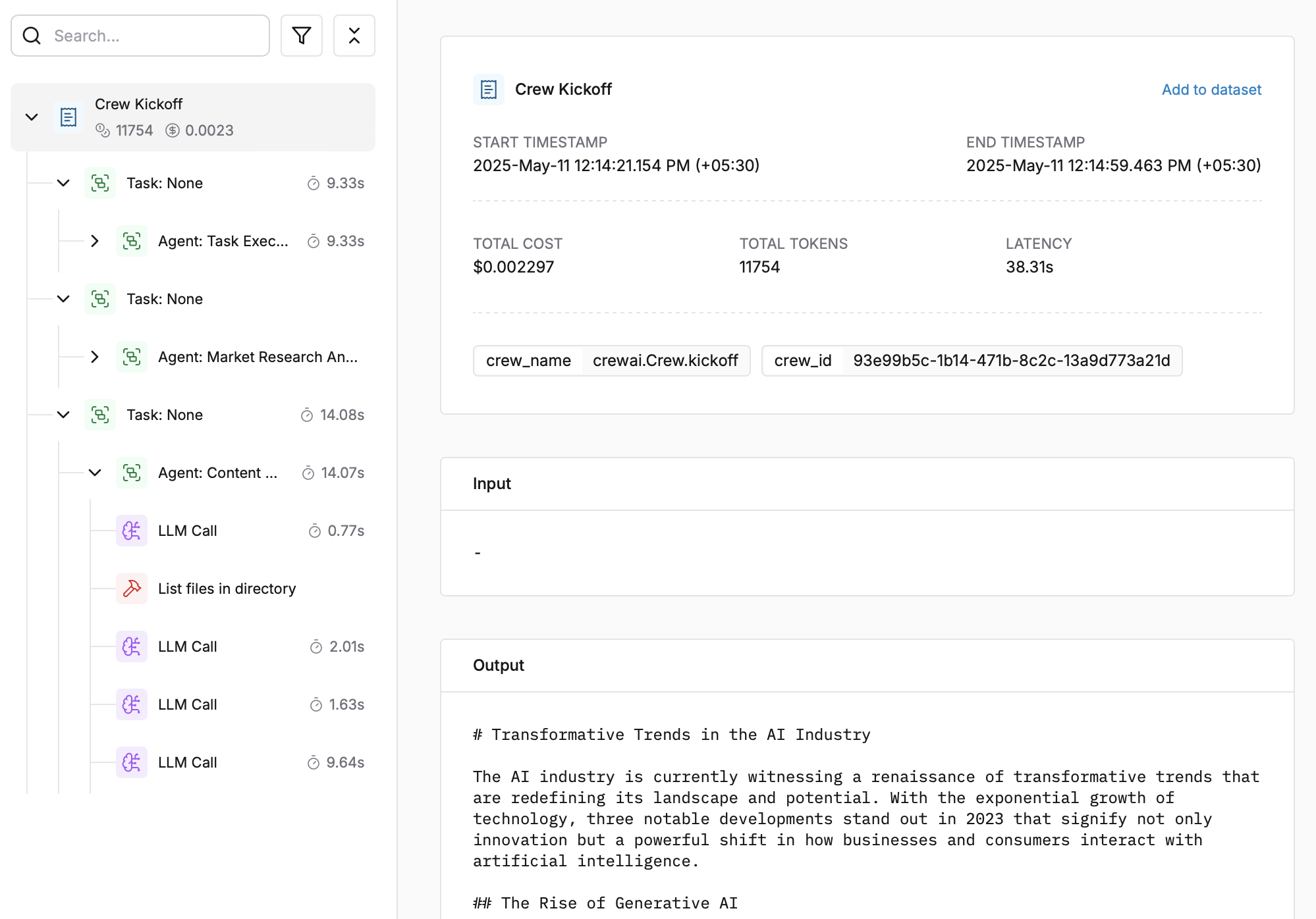

After running your CrewAI application:

|

||||

|

||||

|

||||

|

||||

1. Log in to your [Maxim Dashboard](https://getmaxim.ai/dashboard)

|

||||

1. Log in to your [Maxim Dashboard](https://app.getmaxim.ai/login)

|

||||

2. Navigate to your repository

|

||||

3. View detailed agent traces, including:

|

||||

- Agent conversations

|

||||

- Tool usage patterns

|

||||

- Performance metrics

|

||||

- Cost analytics

|

||||

- Agent conversations

|

||||

- Tool usage patterns

|

||||

- Performance metrics

|

||||

- Cost analytics

|

||||

|

||||

<img src='https://raw.githubusercontent.com/akmadan/crewAI/docs_maxim_observability/docs/images/crewai_traces.gif'> </img>

|

||||

|

||||

## Troubleshooting

|

||||

|

||||

### Common Issues

|

||||

|

||||

- **No traces appearing**: Ensure your API key and repository ID are correc

|

||||

- Ensure you've **called `instrument_crewai()`** ***before*** running your crew. This initializes logging hooks correctly.

|

||||

- **No traces appearing**: Ensure your API key and repository ID are correct

|

||||

- Ensure you've **called `instrument_crewai()`** **_before_** running your crew. This initializes logging hooks correctly.

|

||||

- Set `debug=True` in your `instrument_crewai()` call to surface any internal errors:

|

||||

|

||||

```python

|

||||

instrument_crewai(logger, debug=True)

|

||||

```

|

||||

|

||||

|

||||

```python

|

||||

instrument_crewai(logger, debug=True)

|

||||

```

|

||||

- Configure your agents with `verbose=True` to capture detailed logs:

|

||||

|

||||

```python

|

||||

|

||||

agent = CrewAgent(..., verbose=True)

|

||||

```

|

||||

|

||||

|

||||

```python

|

||||

agent = CrewAgent(..., verbose=True)

|

||||

```

|

||||

- Double-check that `instrument_crewai()` is called **before** creating or executing agents. This might be obvious, but it's a common oversight.

|

||||

|

||||

### Support

|

||||

## Resources

|

||||

|

||||

If you encounter any issues:

|

||||

|

||||

- Check the [Maxim Documentation](https://getmaxim.ai/docs)

|

||||

- Maxim Github [Link](https://github.com/maximhq)

|

||||

<CardGroup cols="3">

|

||||

<Card title="CrewAI Docs" icon="book" href="https://docs.crewai.com/">

|

||||

Official CrewAI documentation

|

||||

</Card>

|

||||

<Card title="Maxim Docs" icon="book" href="https://getmaxim.ai/docs">

|

||||

Official Maxim documentation

|

||||

</Card>

|

||||

<Card title="Maxim Github" icon="github" href="https://github.com/maximhq">

|

||||

Maxim Github

|

||||

</Card>

|

||||

</CardGroup>

|

||||

@@ -56,6 +56,10 @@ These tools enable your agents to interact with the web, extract data from websi

|

||||

<Card title="Stagehand Tool" icon="hand" href="/tools/web-scraping/stagehandtool">

|

||||

Intelligent browser automation with natural language commands.

|

||||

</Card>

|

||||

|

||||

<Card title="Oxylabs Scraper Tool" icon="globe" href="/tools/web-scraping/oxylabsscraperstool">

|

||||

Access web data at scale with Oxylabs.

|

||||

</Card>

|

||||

</CardGroup>

|

||||

|

||||

## **Common Use Cases**

|

||||

@@ -100,4 +104,4 @@ agent = Agent(

|

||||

- **JavaScript-Heavy Sites**: Use `SeleniumScrapingTool` for dynamic content

|

||||

- **Scale & Performance**: Use `FirecrawlScrapeWebsiteTool` for high-volume scraping

|

||||

- **Cloud Infrastructure**: Use `BrowserBaseLoadTool` for scalable browser automation

|

||||

- **Complex Workflows**: Use `StagehandTool` for intelligent browser interactions

|

||||

- **Complex Workflows**: Use `StagehandTool` for intelligent browser interactions

|

||||

|

||||

236

docs/tools/web-scraping/oxylabsscraperstool.mdx

Normal file

@@ -0,0 +1,236 @@

|

||||

---

|

||||

title: Oxylabs Scrapers

|

||||

description: >

|

||||

Oxylabs Scrapers let you easily access information from the respective sources. The available sources are:

|

||||

- `Amazon Product`

|

||||

- `Amazon Search`

|

||||

- `Google Search`

|

||||

- `Universal`

|

||||

icon: globe

|

||||

---

|

||||

|

||||

## Installation

|

||||

|

||||

Get the credentials by creating an Oxylabs Account [here](https://oxylabs.io).

|

||||

```shell

|

||||

pip install 'crewai[tools]' oxylabs

|

||||

```

|

||||

Check [Oxylabs Documentation](https://developers.oxylabs.io/scraping-solutions/web-scraper-api/targets) to get more information about API parameters.

|

||||

|

||||

# `OxylabsAmazonProductScraperTool`

|

||||

|

||||

### Example

|

||||

|

||||

```python

|

||||

from crewai_tools import OxylabsAmazonProductScraperTool

|

||||

|

||||

# make sure OXYLABS_USERNAME and OXYLABS_PASSWORD variables are set

|

||||

tool = OxylabsAmazonProductScraperTool()

|

||||

|

||||

result = tool.run(query="AAAAABBBCC")

|

||||

|

||||

print(result)

|

||||

```
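The example above relies on `OXYLABS_USERNAME` and `OXYLABS_PASSWORD` being present in the environment. A small pre-flight check, sketched with the standard library only, can catch a missing variable before the tool is constructed. `missing_credentials` is a hypothetical helper and not part of `crewai_tools` or the Oxylabs SDK.

```python
import os
from typing import List, Mapping

# Variable names taken from the comment in the example above.
REQUIRED_VARS = ("OXYLABS_USERNAME", "OXYLABS_PASSWORD")


def missing_credentials(env: Mapping[str, str] = os.environ) -> List[str]:
    """Return the names of required variables that are unset or empty."""
    return [name for name in REQUIRED_VARS if not env.get(name)]


missing = missing_credentials()
if missing:
    print(f"Set these variables before creating the tool: {', '.join(missing)}")
```

Passing the environment as a parameter keeps the helper easy to test with a plain dictionary.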

|

||||

|

||||

### Parameters

|

||||

|

||||

- `query` - 10-symbol ASIN code.

|

||||

- `domain` - domain localization for Amazon.

|

||||

- `geo_location` - the _Deliver to_ location.

|

||||

- `user_agent_type` - device type and browser.

|

||||

- `render` - enables JavaScript rendering when set to `html`.

|

||||

- `callback_url` - URL to your callback endpoint.

|

||||

- `context` - Additional advanced settings and controls for specialized requirements.

|

||||

- `parse` - returns parsed data when set to true.

|

||||

- `parsing_instructions` - define your own parsing and data transformation logic that will be executed on an HTML scraping result.
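Since `query` is described above as a 10-symbol ASIN code, a quick format check before calling the tool can avoid a wasted request. The sketch below assumes an ASIN consists of exactly 10 uppercase letters or digits; the source only states the length, so the character set is an assumption, and `looks_like_asin` is a hypothetical helper.

```python
import re

# Assumption: an ASIN is exactly 10 uppercase letters or digits.
ASIN_PATTERN = re.compile(r"[A-Z0-9]{10}")


def looks_like_asin(value: str) -> bool:
    """Return True when `value` has the assumed ASIN shape."""
    return ASIN_PATTERN.fullmatch(value) is not None


for candidate in ("B000000000", "B00000000", "b000000000"):
    print(candidate, looks_like_asin(candidate))
```

This only checks the shape of the string; it does not confirm that the product exists.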

|

||||

|

||||

### Advanced example

|

||||

|

||||

```python

|

||||

from crewai_tools import OxylabsAmazonProductScraperTool

|

||||

|

||||

# make sure OXYLABS_USERNAME and OXYLABS_PASSWORD variables are set

|

||||

tool = OxylabsAmazonProductScraperTool(

|

||||

config={

|

||||

"domain": "com",

|

||||

"parse": True,

|

||||

"context": [

|

||||

{

|

||||

"key": "autoselect_variant",

|

||||

"value": True

|

||||

}

|

||||

]

|

||||

}

|

||||

)

|

||||

|

||||

result = tool.run(query="AAAAABBBCC")

|

||||

|

||||

print(result)

|

||||

```

|

||||

|

||||

# `OxylabsAmazonSearchScraperTool`

|

||||

|

||||

### Example

|

||||

|

||||

```python

|

||||

from crewai_tools import OxylabsAmazonSearchScraperTool

|

||||

|

||||

# make sure OXYLABS_USERNAME and OXYLABS_PASSWORD variables are set

|

||||

tool = OxylabsAmazonSearchScraperTool()

|

||||

|

||||

result = tool.run(query="headsets")

|

||||

|

||||

print(result)

|

||||

```

|

||||

|

||||

### Parameters

|

||||

|

||||

- `query` - Amazon search term.

|

||||

- `domain` - domain localization for Amazon.

|

||||

- `start_page` - starting page number.

|

||||

- `pages` - number of pages to retrieve.

|

||||

- `geo_location` - the _Deliver to_ location.

|

||||

- `user_agent_type` - device type and browser.

|

||||

- `render` - enables JavaScript rendering when set to `html`.

|

||||

- `callback_url` - URL to your callback endpoint.

|

||||

- `context` - Additional advanced settings and controls for specialized requirements.

|

||||

- `parse` - returns parsed data when set to true.

|

||||

- `parsing_instructions` - define your own parsing and data transformation logic that will be executed on an HTML scraping result.

|

||||

|

||||

### Advanced example

|

||||

|

||||

```python

|

||||

from crewai_tools import OxylabsAmazonSearchScraperTool

|

||||

|

||||

# make sure OXYLABS_USERNAME and OXYLABS_PASSWORD variables are set

|

||||

tool = OxylabsAmazonSearchScraperTool(

|

||||

config={

|

||||

"domain": 'nl',

|

||||

"start_page": 2,

|

||||

"pages": 2,

|

||||

"parse": True,

|

||||

"context": [

|

||||

{'key': 'category_id', 'value': 16391693031}

|

||||

],

|

||||

}

|

||||

)

|

||||

|

||||

result = tool.run(query='nirvana tshirt')

|

||||

|

||||

print(result)

|

||||

```

|

||||

|

||||

# `OxylabsGoogleSearchScraperTool`

|

||||

|

||||

### Example

|

||||

|

||||

```python

|

||||

from crewai_tools import OxylabsGoogleSearchScraperTool

|

||||

|

||||

# make sure OXYLABS_USERNAME and OXYLABS_PASSWORD variables are set

|

||||

tool = OxylabsGoogleSearchScraperTool()

|

||||

|

||||

result = tool.run(query="iPhone 16")

|

||||

|

||||

print(result)

|

||||

```

|

||||

|

||||

### Parameters

|

||||

|

||||

- `query` - search keyword.

|

||||

- `domain` - domain localization for Google.

|

||||

- `start_page` - starting page number.

|

||||

- `pages` - number of pages to retrieve.

|

||||

- `limit` - number of results to retrieve in each page.

|

||||

- `locale` - `Accept-Language` header value which changes your Google search page web interface language.

|

||||

- `geo_location` - the geographical location that the result should be adapted for. Using this parameter correctly is extremely important to get the right data.

|

||||

- `user_agent_type` - device type and browser.

|

||||

- `render` - enables JavaScript rendering when set to `html`.

|

||||

- `callback_url` - URL to your callback endpoint.

|

||||

- `context` - Additional advanced settings and controls for specialized requirements.

|

||||

- `parse` - returns parsed data when set to true.

|

||||

- `parsing_instructions` - define your own parsing and data transformation logic that will be executed on an HTML scraping result.

|

||||

|

||||

### Advanced example

|

||||

|

||||

```python

|

||||

from crewai_tools import OxylabsGoogleSearchScraperTool

|

||||

|

||||

# make sure OXYLABS_USERNAME and OXYLABS_PASSWORD variables are set

|

||||

tool = OxylabsGoogleSearchScraperTool(

|

||||

config={

|

||||

"parse": True,

|

||||

"geo_location": "Paris, France",

|

||||

"user_agent_type": "tablet",

|

||||

}

|

||||

)

|

||||

|

||||

result = tool.run(query="iPhone 16")

|

||||

|

||||

print(result)

|

||||

```

|

||||

|

||||

# `OxylabsUniversalScraperTool`

### Example

```python
from crewai_tools import OxylabsUniversalScraperTool

# make sure OXYLABS_USERNAME and OXYLABS_PASSWORD variables are set
tool = OxylabsUniversalScraperTool()

result = tool.run(url="https://ip.oxylabs.io")

print(result)
```

### Parameters

- `url` - URL of the website to scrape.
- `user_agent_type` - device type and browser.
- `geo_location` - sets the proxy's geolocation to retrieve data.
- `render` - enables JavaScript rendering when set to `html`.
- `callback_url` - URL to your callback endpoint.
- `context` - additional advanced settings and controls for specialized requirements.
- `parse` - returns parsed data when set to `true`, as long as a dedicated parser exists for the submitted URL's page type.
- `parsing_instructions` - custom parsing and data transformation logic that is executed on the HTML scraping result.

### Advanced example

```python
from crewai_tools import OxylabsUniversalScraperTool

# make sure OXYLABS_USERNAME and OXYLABS_PASSWORD variables are set
tool = OxylabsUniversalScraperTool(
    config={
        "render": "html",
        "user_agent_type": "mobile",
        "context": [
            {"key": "force_headers", "value": True},
            {"key": "force_cookies", "value": True},
            {
                "key": "headers",
                "value": {
                    "Custom-Header-Name": "custom header content",
                },
            },
            {
                "key": "cookies",
                "value": [
                    {"key": "NID", "value": "1234567890"},
                    {"key": "1P JAR", "value": "0987654321"},
                ],
            },
            {"key": "http_method", "value": "get"},
            {"key": "follow_redirects", "value": True},
            {"key": "successful_status_codes", "value": [808, 909]},
        ],
    }
)

result = tool.run(url="https://ip.oxylabs.io")

print(result)
```

216

mkdocs.yml

@@ -1,216 +0,0 @@

|

||||

site_name: crewAI

|

||||

site_author: crewAI, Inc

|

||||

site_description: Cutting-edge framework for orchestrating role-playing, autonomous AI agents. By fostering collaborative intelligence, CrewAI empowers agents to work together seamlessly, tackling complex tasks.

|

||||

repo_name: crewAI

|

||||

repo_url: https://github.com/crewAIInc/crewAI

|

||||

site_url: https://docs.crewai.com

|

||||

edit_uri: edit/main/docs/

|

||||

copyright: Copyright © 2024 crewAI, Inc

|

||||

|

||||

markdown_extensions:

|

||||

- abbr

|

||||

- admonition

|

||||

- pymdownx.details

|

||||

- attr_list

|

||||

- def_list

|

||||

- footnotes

|

||||

- md_in_html

|

||||

- toc:

|

||||

permalink: true

|

||||

- pymdownx.arithmatex:

|

||||

generic: true

|

||||

- pymdownx.betterem:

|

||||

smart_enable: all

|

||||

- pymdownx.caret

|

||||

- pymdownx.emoji:

|

||||

emoji_generator: !!python/name:material.extensions.emoji.to_svg

|

||||

emoji_index: !!python/name:material.extensions.emoji.twemoji

|

||||

- pymdownx.highlight:

|

||||

anchor_linenums: true

|

||||

line_spans: __span

|

||||

pygments_lang_class: true

|

||||

- pymdownx.inlinehilite

|

||||

- pymdownx.keys

|

||||

- pymdownx.magiclink:

|

||||

normalize_issue_symbols: true

|

||||

repo_url_shorthand: true

|

||||

user: joaomdmoura

|

||||

repo: crewAI

|

||||

- pymdownx.mark

|

||||

- pymdownx.smartsymbols

|

||||

- pymdownx.snippets:

|

||||

auto_append:

|

||||

- includes/mkdocs.md

|

||||

- pymdownx.superfences:

|

||||

custom_fences:

|

||||

- name: mermaid

|

||||

class: mermaid

|

||||

format: !!python/name:pymdownx.superfences.fence_code_format

|

||||

- pymdownx.tabbed:

|

||||

alternate_style: true

|

||||

combine_header_slug: true

|

||||

slugify: !!python/object/apply:pymdownx.slugs.slugify

|

||||

kwds:

|

||||

case: lower

|

||||

- pymdownx.tasklist:

|

||||

custom_checkbox: true

|

||||

- pymdownx.tilde

|

||||

theme:

|

||||

name: material

|

||||

language: en

|

||||

icon:

|

||||

repo: fontawesome/brands/github

|

||||

edit: material/pencil

|

||||

view: material/eye

|

||||

admonition:

|

||||

note: octicons/light-bulb-16

|

||||

abstract: octicons/checklist-16

|

||||

info: octicons/info-16

|

||||

tip: octicons/squirrel-16

|

||||

success: octicons/check-16

|

||||

question: octicons/question-16

|

||||

warning: octicons/alert-16

|

||||

failure: octicons/x-circle-16

|

||||

danger: octicons/zap-16

|

||||

bug: octicons/bug-16

|

||||

example: octicons/beaker-16

|

||||

quote: octicons/quote-16

|

||||

|

||||

palette:

|

||||

- scheme: default

|

||||

primary: deep orange

|

||||

accent: deep orange

|

||||

toggle:

|

||||

icon: material/brightness-7

|

||||

name: Switch to dark mode

|

||||

- scheme: slate

|

||||

primary: deep orange

|

||||

accent: deep orange

|

||||

toggle:

|

||||

icon: material/brightness-4

|

||||

name: Switch to light mode

|

||||

features:

|

||||

- announce.dismiss

|

||||

- content.action.edit

|

||||

- content.action.view

|

||||

- content.code.annotate

|

||||

- content.code.copy

|

||||

- content.code.select

|

||||

- content.tabs.link

|

||||

- content.tooltips

|

||||

- header.autohide

|

||||

- navigation.footer

|

||||

- navigation.indexes

|

||||

# - navigation.prune

|

||||

# - navigation.sections

|

||||

# - navigation.tabs

|

||||

- search.suggest

|

||||

- navigation.instant

|

||||

- navigation.instant.progress

|

||||

- navigation.instant.prefetch

|

||||

- navigation.tracking

|

||||

# - navigation.expand

|

||||

- navigation.path

|

||||

- navigation.top

|

||||

- toc.follow

|

||||

- toc.integrate

|

||||

- search.highlight

|

||||

- search.share

|

||||

|

||||

nav:

|

||||

- Home: '/'

|

||||

- Getting Started:

|

||||

- Installing CrewAI: 'getting-started/Installing-CrewAI.md'

|

||||

- Starting a new CrewAI project: 'getting-started/Start-a-New-CrewAI-Project-Template-Method.md'

|

||||

- Core Concepts:

|

||||

- Agents: 'core-concepts/Agents.md'

|

||||

- Tasks: 'core-concepts/Tasks.md'

|

||||

- Tools: 'core-concepts/Tools.md'

|

||||

- Processes: 'core-concepts/Processes.md'

|

||||

- Crews: 'core-concepts/Crews.md'

|

||||

- Collaboration: 'core-concepts/Collaboration.md'

|

||||

- Training: 'core-concepts/Training-Crew.md'

|

||||

- Memory: 'core-concepts/Memory.md'

|

||||

- Planning: 'core-concepts/Planning.md'

|

||||

- Testing: 'core-concepts/Testing.md'

|

||||

- Using LangChain Tools: 'core-concepts/Using-LangChain-Tools.md'

|

||||

- Using LlamaIndex Tools: 'core-concepts/Using-LlamaIndex-Tools.md'

|

||||

- How to Guides:

|

||||

- Create Custom Tools: 'how-to/Create-Custom-Tools.md'

|

||||

- Using Sequential Process: 'how-to/Sequential.md'

|

||||

- Using Hierarchical Process: 'how-to/Hierarchical.md'

|

||||

- Create your own Manager Agent: 'how-to/Your-Own-Manager-Agent.md'

|

||||

- Connecting to any LLM: 'how-to/LLM-Connections.md'

|

||||

- Customizing Agents: 'how-to/Customizing-Agents.md'

|

||||

- Coding Agents: 'how-to/Coding-Agents.md'

|

||||

- Forcing Tool Output as Result: 'how-to/Force-Tool-Ouput-as-Result.md'

|

||||

- Human Input on Execution: 'how-to/Human-Input-on-Execution.md'

|

||||

- Kickoff a Crew Asynchronously: 'how-to/Kickoff-async.md'

|

||||

- Kickoff a Crew for a List: 'how-to/Kickoff-for-each.md'

|

||||

- Replay from a specific task from a kickoff: 'how-to/Replay-tasks-from-latest-Crew-Kickoff.md'

|

||||

- Conditional Tasks: 'how-to/Conditional-Tasks.md'

|

||||

- Agent Monitoring with AgentOps: 'how-to/AgentOps-Observability.md'

|

||||

- Agent Monitoring with LangTrace: 'how-to/Langtrace-Observability.md'

|

||||

- Agent Monitoring with OpenLIT: 'how-to/openlit-Observability.md'

|

||||

- Agent Monitoring with MLflow: 'how-to/mlflow-Observability.md'

|

||||

- Tools Docs:

|

||||

- Browserbase Web Loader: 'tools/BrowserbaseLoadTool.md'

|

||||

- Code Docs RAG Search: 'tools/CodeDocsSearchTool.md'

|

||||

- Code Interpreter: 'tools/CodeInterpreterTool.md'

|

||||

- Composio Tools: 'tools/ComposioTool.md'

|

||||

- CSV RAG Search: 'tools/CSVSearchTool.md'

|

||||

- DALL-E Tool: 'tools/DALL-ETool.md'

|

||||

- Directory RAG Search: 'tools/DirectorySearchTool.md'

|

||||

- Directory Read: 'tools/DirectoryReadTool.md'

|

||||

- Docx Rag Search: 'tools/DOCXSearchTool.md'

|

||||

- EXA Search Web Loader: 'tools/EXASearchTool.md'

|

||||

- File Read: 'tools/FileReadTool.md'

|

||||

- File Write: 'tools/FileWriteTool.md'

|

||||

- Firecrawl Crawl Website Tool: 'tools/FirecrawlCrawlWebsiteTool.md'

|

||||

- Firecrawl Scrape Website Tool: 'tools/FirecrawlScrapeWebsiteTool.md'

|

||||

- Firecrawl Search Tool: 'tools/FirecrgstawlSearchTool.md'

|

||||

- Github RAG Search: 'tools/GitHubSearchTool.md'

|

||||

- Google Serper Search: 'tools/SerperDevTool.md'

|

||||

- JSON RAG Search: 'tools/JSONSearchTool.md'

|

||||

- MDX RAG Search: 'tools/MDXSearchTool.md'

|

||||

- MySQL Tool: 'tools/MySQLTool.md'

|

||||

- NL2SQL Tool: 'tools/NL2SQLTool.md'

|

||||

- PDF RAG Search: 'tools/PDFSearchTool.md'

|

||||

- PG RAG Search: 'tools/PGSearchTool.md'

|

||||

- Scrape Website: 'tools/ScrapeWebsiteTool.md'

|

||||

- Selenium Scraper: 'tools/SeleniumScrapingTool.md'

|

||||

- Spider Scraper: 'tools/SpiderTool.md'

|

||||

- TXT RAG Search: 'tools/TXTSearchTool.md'

|

||||

- Vision Tool: 'tools/VisionTool.md'

|

||||

- Website RAG Search: 'tools/WebsiteSearchTool.md'

|

||||

- XML RAG Search: 'tools/XMLSearchTool.md'

|

||||

- Youtube Channel RAG Search: 'tools/YoutubeChannelSearchTool.md'

|

||||

- Youtube Video RAG Search: 'tools/YoutubeVideoSearchTool.md'

|

||||

- Examples:

|

||||

- Trip Planner Crew: https://github.com/joaomdmoura/crewAI-examples/tree/main/trip_planner"

|

||||

- Create Instagram Post: https://github.com/joaomdmoura/crewAI-examples/tree/main/instagram_post"

|

||||

- Stock Analysis: https://github.com/joaomdmoura/crewAI-examples/tree/main/stock_analysis"

|

||||

- Game Generator: https://github.com/joaomdmoura/crewAI-examples/tree/main/game-builder-crew"

|

||||

- Drafting emails with LangGraph: https://github.com/joaomdmoura/crewAI-examples/tree/main/CrewAI-LangGraph"

|

||||

- Landing Page Generator: https://github.com/joaomdmoura/crewAI-examples/tree/main/landing_page_generator"

|

||||

- Prepare for meetings: https://github.com/joaomdmoura/crewAI-examples/tree/main/prep-for-a-meeting"

|

||||

- Telemetry: 'telemetry/Telemetry.md'

|

||||

- Change Log: 'https://github.com/crewAIInc/crewAI/releases'

|

||||

|

||||

extra_css:

|

||||

- stylesheets/output.css

|

||||

- stylesheets/extra.css

|

||||

|

||||

plugins:

|

||||

- social

|

||||

- search

|

||||

|

||||

extra:

|

||||

analytics:

|

||||

provider: google

|

||||

property: G-N3Q505TMQ6

|

||||

social:

|

||||

- icon: fontawesome/brands/x-twitter

|

||||

link: https://x.com/crewAIInc

|

||||

- icon: fontawesome/brands/github

|

||||

link: https://github.com/crewAIInc/crewAI

|

||||

@@ -74,11 +74,6 @@ dev-dependencies = [

|

||||

"ruff>=0.8.2",

|

||||

"mypy>=1.10.0",

|

||||

"pre-commit>=3.6.0",

|

||||

"mkdocs>=1.4.3",

|

||||

"mkdocstrings>=0.22.0",

|

||||

"mkdocstrings-python>=1.1.2",

|

||||

"mkdocs-material>=9.5.7",

|

||||

"mkdocs-material-extensions>=1.3.1",

|

||||

"pillow>=10.2.0",

|

||||

"cairosvg>=2.7.1",

|

||||

"pytest>=8.0.0",

|

||||

|

||||

@@ -91,9 +91,6 @@ class Agent(BaseAgent):

|

||||

function_calling_llm: Optional[Union[str, InstanceOf[BaseLLM], Any]] = Field(

|

||||

description="Language model that will run the agent.", default=None

|

||||

)

|

||||

fallback_llms: Optional[List[Union[str, InstanceOf[BaseLLM], Any]]] = Field(

|

||||

default=None, description="List of fallback language models to try if the primary LLM fails."

|

||||

)

|

||||

system_template: Optional[str] = Field(

|

||||

default=None, description="System format for the agent."

|

||||

)

|

||||

@@ -177,8 +174,6 @@ class Agent(BaseAgent):

|

||||

self.agent_ops_agent_name = self.role

|

||||

|

||||

self.llm = create_llm(self.llm)

|

||||

if self.fallback_llms:

|

||||

self.fallback_llms = [create_llm(fallback_llm) for fallback_llm in self.fallback_llms]

|

||||

if self.function_calling_llm and not isinstance(

|

||||

self.function_calling_llm, BaseLLM

|

||||

):

|

||||

|

||||

@@ -159,7 +159,6 @@ class CrewAgentExecutor(CrewAgentExecutorMixin):

|

||||

messages=self.messages,

|

||||

callbacks=self.callbacks,

|

||||

printer=self._printer,

|

||||

fallback_llms=getattr(self.agent, 'fallback_llms', None),

|

||||

)

|

||||

formatted_answer = process_llm_response(answer, self.use_stop_words)

|

||||

|

||||

|

||||
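The `fallback_llms` keyword passed to `get_llm_response` in the hunk above implies a try-in-order chain: call the primary model, and move on to the next one if it raises or returns nothing. A minimal sketch of that idea follows, assuming each model is a plain callable that takes a list of messages. `call_with_fallbacks` and the `Model` alias are hypothetical names used for illustration, and this sketch deliberately omits any special handling of authentication errors.

```python
from typing import Callable, Dict, List, Optional, Sequence

# Assumption: a "model" is any callable that maps messages to a reply (or None).
Model = Callable[[List[Dict[str, str]]], Optional[str]]


def call_with_fallbacks(
    primary: Model,
    fallbacks: Optional[Sequence[Model]],
    messages: List[Dict[str, str]],
) -> str:
    """Try the primary model first, then each fallback in order."""
    last_error: Exception = ValueError("No model was called.")
    for model in [primary, *(fallbacks or [])]:
        try:
            answer = model(messages)
        except Exception as exc:
            # Remember the failure and move on to the next model.
            last_error = exc
            continue
        if answer:
            return answer
        # An empty or None reply counts as a failure as well.
        last_error = ValueError("Invalid response from LLM call - None or empty.")
    # Every model failed: surface the most recent error.
    raise last_error
```

Keeping only the last error keeps the sketch short; collecting every failure into a list would give better diagnostics when several models fail for different reasons.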

@@ -94,17 +94,18 @@ def _get_project_attribute(

|

||||

|

||||

attribute = _get_nested_value(pyproject_content, keys)

|

||||

except FileNotFoundError:

|

||||

print(f"Error: {pyproject_path} not found.")

|

||||

console.print(f"Error: {pyproject_path} not found.", style="bold red")

|

||||

except KeyError:

|

||||

print(f"Error: {pyproject_path} is not a valid pyproject.toml file.")

|

||||

console.print(f"Error: {pyproject_path} is not a valid pyproject.toml file.", style="bold red")

|

||||

except tomllib.TOMLDecodeError if sys.version_info >= (3, 11) else Exception as e: # type: ignore

|

||||

print(

|

||||

console.print(

|

||||

f"Error: {pyproject_path} is not a valid TOML file."

|

||||

if sys.version_info >= (3, 11)

|

||||

else f"Error reading the pyproject.toml file: {e}"

|

||||

else f"Error reading the pyproject.toml file: {e}",

|

||||

style="bold red",

|

||||

)

|

||||

except Exception as e:

|

||||

print(f"Error reading the pyproject.toml file: {e}")

|

||||

console.print(f"Error reading the pyproject.toml file: {e}", style="bold red")

|

||||

|

||||

if require and not attribute:

|

||||

console.print(

|

||||
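The hunk above calls `_get_nested_value(pyproject_content, keys)` and treats a `KeyError` as an invalid file, but the helper itself is not shown. One plausible shape for such a lookup is sketched below. `get_nested_value` and `get_attribute` are hypothetical names, and the real helper may be implemented differently.

```python
from functools import reduce
from typing import Any, Dict, List, Optional


def get_nested_value(data: Dict[str, Any], keys: List[str]) -> Any:
    """Walk nested dicts key by key; raises KeyError if a key is missing."""
    return reduce(lambda current, key: current[key], keys, data)


def get_attribute(data: Dict[str, Any], keys: List[str]) -> Optional[Any]:
    """Return the nested value, or None when the path does not exist."""
    try:
        return get_nested_value(data, keys)
    except (KeyError, TypeError):
        # KeyError: missing key. TypeError: the path runs through a non-dict value.
        return None
```

For a parsed `pyproject.toml`, `get_attribute(content, ["project", "name"])` would return the project name, or `None` if either level is absent.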

@@ -137,9 +138,9 @@ def fetch_and_json_env_file(env_file_path: str = ".env") -> dict:

|

||||

return env_dict

|

||||

|

||||

except FileNotFoundError:

|

||||

print(f"Error: {env_file_path} not found.")

|

||||

console.print(f"Error: {env_file_path} not found.", style="bold red")

|

||||

except Exception as e:

|

||||

print(f"Error reading the .env file: {e}")

|

||||

console.print(f"Error reading the .env file: {e}", style="bold red")

|

||||

|

||||

return {}

|

||||

|

||||

@@ -255,50 +256,69 @@ def write_env_file(folder_path, env_vars):

|

||||

|

||||

|

||||

def get_crews(crew_path: str = "crew.py", require: bool = False) -> list[Crew]:

|

||||

"""Get the crew instances from the a file."""

|

||||

"""Get the crew instances from a file."""

|

||||

crew_instances = []

|

||||

try:

|

||||

import importlib.util

|

||||

|

||||

for root, _, files in os.walk("."):

|

||||

if crew_path in files:

|

||||

crew_os_path = os.path.join(root, crew_path)

|

||||

try:

|

||||

spec = importlib.util.spec_from_file_location(

|

||||

"crew_module", crew_os_path

|

||||

)

|

||||

if not spec or not spec.loader:

|

||||

continue

|

||||

module = importlib.util.module_from_spec(spec)

|

||||

# Add the current directory to sys.path to ensure imports resolve correctly

|

||||

current_dir = os.getcwd()

|

||||

if current_dir not in sys.path:

|

||||

sys.path.insert(0, current_dir)

|

||||

|

||||

# If we're not in src directory but there's a src directory, add it to path

|

||||

src_dir = os.path.join(current_dir, "src")

|

||||

if os.path.isdir(src_dir) and src_dir not in sys.path:

|

||||

sys.path.insert(0, src_dir)

|

||||

|

||||

# Search in both current directory and src directory if it exists

|

||||

search_paths = [".", "src"] if os.path.isdir("src") else ["."]

|

||||

|

||||

for search_path in search_paths:

|

||||

for root, _, files in os.walk(search_path):

|

||||

if crew_path in files and "cli/templates" not in root:

|

||||

crew_os_path = os.path.join(root, crew_path)

|

||||

try:

|

||||

sys.modules[spec.name] = module

|

||||

spec.loader.exec_module(module)

|

||||

|

||||

for attr_name in dir(module):

|

||||

module_attr = getattr(module, attr_name)

|

||||

|

||||

try:

|

||||

crew_instances.extend(fetch_crews(module_attr))

|

||||

except Exception as e:

|

||||

print(f"Error processing attribute {attr_name}: {e}")

|

||||

continue

|

||||

|

||||

except Exception as exec_error:

|

||||

print(f"Error executing module: {exec_error}")

|

||||

import traceback

|

||||

|

||||

print(f"Traceback: {traceback.format_exc()}")

|

||||

except (ImportError, AttributeError) as e:

|

||||

if require:

|

||||

console.print(

|

||||

f"Error importing crew from {crew_path}: {str(e)}",

|

||||

style="bold red",

|

||||

spec = importlib.util.spec_from_file_location(

|

||||

"crew_module", crew_os_path

|

||||

)

|

||||

if not spec or not spec.loader:

|

||||

continue

|

||||

|

||||

module = importlib.util.module_from_spec(spec)

|

||||

sys.modules[spec.name] = module

|

||||

|

||||

try:

|

||||

spec.loader.exec_module(module)

|

||||

|

||||

for attr_name in dir(module):

|

||||

module_attr = getattr(module, attr_name)

|

||||

try:

|

||||

crew_instances.extend(fetch_crews(module_attr))

|

||||

except Exception as e:

|

||||

console.print(f"Error processing attribute {attr_name}: {e}", style="bold red")

|

||||

continue

|

||||

|

||||

# If we found crew instances, break out of the loop

|

||||

if crew_instances:

|

||||

break

|

||||

|

||||

except Exception as exec_error:

|

||||

console.print(f"Error executing module: {exec_error}", style="bold red")

|

||||

|

||||

except (ImportError, AttributeError) as e:

|

||||

if require:

|

||||

console.print(

|

||||

f"Error importing crew from {crew_path}: {str(e)}",

|

||||

style="bold red",

|

||||

)

|

||||

continue

|

||||

|

||||

# If we found crew instances in this search path, break out of the search paths loop

|

||||

if crew_instances:

|

||||

break

|

||||

|

||||

if require:

|

||||

if require and not crew_instances:

|

||||

console.print("No valid Crew instance found in crew.py", style="bold red")

|

||||

raise SystemExit

|

||||

|

||||
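The loading pattern used by `get_crews` (build a spec from a file path, register the module, execute it, then scan its attributes) can be shown in isolation. The sketch below uses only the standard library. `load_module_from_path` and `find_instances` are hypothetical helpers, not crewAI functions, and the sketch matches any class rather than `Crew` specifically.

```python
import importlib.util
import sys
from types import ModuleType
from typing import Any, List, Optional


def load_module_from_path(path: str, name: str = "crew_module") -> Optional[ModuleType]:
    """Load a Python file as a module; return None if no loader is available."""
    spec = importlib.util.spec_from_file_location(name, path)
    if not spec or not spec.loader:
        return None
    module = importlib.util.module_from_spec(spec)
    # Register before executing so code that looks the module up by name can find it.
    sys.modules[spec.name] = module
    spec.loader.exec_module(module)
    return module


def find_instances(module: ModuleType, cls: type) -> List[Any]:
    """Collect module-level attributes that are instances of cls."""
    found = []
    for attr_name in dir(module):
        value = getattr(module, attr_name)
        if isinstance(value, cls):
            found.append(value)
    return found
```

Because `exec_module` runs the file's top-level code, any exception raised by that file propagates to the caller, which is why the hunk above wraps the call in a `try` block.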

@@ -318,11 +338,15 @@ def get_crew_instance(module_attr) -> Crew | None:

|

||||

and module_attr.is_crew_class

|

||||

):

|

||||

return module_attr().crew()

|

||||

if (ismethod(module_attr) or isfunction(module_attr)) and get_type_hints(

|

||||

module_attr

|

||||

).get("return") is Crew:

|

||||

return module_attr()

|

||||

elif isinstance(module_attr, Crew):

|

||||

try:

|

||||

if (ismethod(module_attr) or isfunction(module_attr)) and get_type_hints(

|

||||

module_attr

|

||||

).get("return") is Crew:

|

||||

return module_attr()

|

||||

except Exception:

|

||||

return None

|

||||

|

||||

if isinstance(module_attr, Crew):

|

||||

return module_attr

|

||||

else:

|

||||

return None

|

||||

@@ -402,7 +426,8 @@ def _load_tools_from_init(init_file: Path) -> list[dict[str, Any]]:

|

||||

|

||||

if not hasattr(module, "__all__"):

|

||||

console.print(

|

||||

f"[bold yellow]Warning: No __all__ defined in {init_file}[/bold yellow]"

|

||||

f"Warning: No __all__ defined in {init_file}",

|

||||

style="bold yellow",

|

||||

)

|

||||

raise SystemExit(1)

|

||||

|

||||

|

||||

@@ -526,7 +526,6 @@ class LiteAgent(FlowTrackable, BaseModel):

|

||||

messages=self._messages,

|

||||

callbacks=self._callbacks,

|

||||

printer=self._printer,

|

||||

fallback_llms=getattr(self, 'fallback_llms', None),

|

||||

)

|

||||

|

||||

# Emit LLM call completed event

|

||||

|

||||

@@ -1,7 +1,8 @@

|

||||

import inspect

|

||||

import logging

|

||||

from pathlib import Path

|

||||

from typing import Any, Callable, Dict, TypeVar, cast

|

||||

from typing import Any, Callable, Dict, TypeVar, cast, List

|

||||

from crewai.tools import BaseTool

|

||||

|

||||

import yaml

|

||||

from dotenv import load_dotenv

|

||||

@@ -27,6 +28,8 @@ def CrewBase(cls: T) -> T:

|

||||

)

|

||||

original_tasks_config_path = getattr(cls, "tasks_config", "config/tasks.yaml")

|

||||

|

||||

mcp_server_params: Any = getattr(cls, "mcp_server_params", None)

|

||||

|

||||

def __init__(self, *args, **kwargs):

|

||||

super().__init__(*args, **kwargs)

|

||||

self.load_configurations()

|

||||

@@ -64,6 +67,39 @@ def CrewBase(cls: T) -> T:

|

||||

self._original_functions, "is_kickoff"

|

||||

)

|

||||

|

||||

# Add close mcp server method to after kickoff

|

||||

bound_method = self._create_close_mcp_server_method()

|

||||

self._after_kickoff['_close_mcp_server'] = bound_method

|

||||

|

||||

def _create_close_mcp_server_method(self):

|

||||

def _close_mcp_server(self, instance, outputs):

|

||||

adapter = getattr(self, '_mcp_server_adapter', None)

|

||||

if adapter is not None:

|

||||

try:

|

||||

adapter.stop()

|

||||

except Exception as e:

|

||||

logging.warning(f"Error stopping MCP server: {e}")

|

||||

return outputs

|

||||

|

||||

_close_mcp_server.is_after_kickoff = True

|

||||

|

||||

import types

|

||||

return types.MethodType(_close_mcp_server, self)

|

||||

|

||||

def get_mcp_tools(self) -> List[BaseTool]:

|

||||

if not self.mcp_server_params:

|

||||

return []

|

||||

|

||||

from crewai_tools import MCPServerAdapter

|

||||

|

||||

adapter = getattr(self, '_mcp_server_adapter', None)

|

||||

if adapter and isinstance(adapter, MCPServerAdapter):

|

||||

return adapter.tools

|

||||

|

||||

self._mcp_server_adapter = MCPServerAdapter(self.mcp_server_params)

|

||||

return self._mcp_server_adapter.tools

|

||||

|

||||

|

||||

def load_configurations(self):

|

||||

"""Load agent and task configurations from YAML files."""

|

||||

if isinstance(self.original_agents_config_path, str):

|

||||

|

||||
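`get_mcp_tools` above creates its adapter on first use, reuses it on later calls, and relies on an after-kickoff hook to stop it. That lifecycle can be sketched without any MCP dependency. `FakeAdapter` and `ToolHost` are hypothetical stand-ins used for illustration only and do not mirror the real `MCPServerAdapter` API beyond the `tools` attribute and `stop()` method seen in the diff.

```python
from typing import Any, List, Optional


class FakeAdapter:
    """Stand-in for a server adapter (hypothetical, for illustration only)."""

    created = 0  # counts how many adapters have been constructed

    def __init__(self, params: Any) -> None:
        FakeAdapter.created += 1
        self.tools: List[str] = [f"tool-for-{params}"]
        self.stopped = False

    def stop(self) -> None:
        self.stopped = True


class ToolHost:
    """Creates the adapter lazily and reuses it on later calls."""

    def __init__(self, server_params: Optional[Any] = None) -> None:
        self.server_params = server_params
        self._adapter: Optional[FakeAdapter] = None

    def get_tools(self) -> List[str]:
        if not self.server_params:
            return []  # nothing configured, so no adapter is started
        if self._adapter is None:
            self._adapter = FakeAdapter(self.server_params)
        return self._adapter.tools

    def close(self) -> None:
        # Safe to call even if get_tools() was never used.
        if self._adapter is not None:
            self._adapter.stop()
```

Caching the adapter means repeated calls from several agents share one server connection, at the cost of needing an explicit cleanup step.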

@@ -39,7 +39,7 @@ from crewai.tasks.output_format import OutputFormat

|

||||

from crewai.tasks.task_output import TaskOutput

|

||||

from crewai.tools.base_tool import BaseTool

|

||||

from crewai.utilities.config import process_config

|

||||

from crewai.utilities.constants import NOT_SPECIFIED

|

||||

from crewai.utilities.constants import NOT_SPECIFIED, _NotSpecified

|

||||

from crewai.utilities.guardrail import process_guardrail, GuardrailResult

|

||||

from crewai.utilities.converter import Converter, convert_to_model

|

||||

from crewai.utilities.events import (

|

||||

@@ -95,9 +95,9 @@ class Task(BaseModel):

|

||||

agent: Optional[BaseAgent] = Field(

|

||||

description="Agent responsible for execution the task.", default=None

|

||||

)

|

||||

context: Optional[List["Task"]] = Field(

|

||||

context: Union[List["Task"], None, _NotSpecified] = Field(

|

||||

description="Other tasks that will have their output used as context for this task.",

|

||||

default=NOT_SPECIFIED,

|

||||

default=NOT_SPECIFIED

|

||||

)

|

||||

async_execution: Optional[bool] = Field(

|

||||

description="Whether the task should be executed asynchronously or not.",

|

||||
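The `context` field above is typed as `Union[List["Task"], None, _NotSpecified]` with `NOT_SPECIFIED` as its default, which lets the code tell "no value passed" apart from an explicit `None`. A minimal sketch of that sentinel pattern follows. The class here is a simplified stand-in for the one in `crewai.utilities.constants`, and `resolve_context` is a hypothetical function whose fallback behaviour is an assumption made for illustration.

```python
from typing import List, Optional, Union


class _NotSpecified:
    """Sentinel type meaning 'the caller did not pass a value'."""

    def __repr__(self) -> str:
        return "NOT_SPECIFIED"


NOT_SPECIFIED = _NotSpecified()


def resolve_context(
    context: Union[List[str], None, _NotSpecified] = NOT_SPECIFIED,
    default: Optional[List[str]] = None,
) -> List[str]:
    """Distinguish 'not passed' from an explicit None or list."""
    if isinstance(context, _NotSpecified):
        # Nothing was passed: fall back to the default (assumed behaviour).
        return list(default or [])
    if context is None:
        # Explicit None: the caller wants no context at all.
        return []
    return list(context)
```

Checking with `isinstance` rather than `is` keeps the test valid if the sentinel is ever copied or re-created, for example during model validation.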

@@ -158,6 +158,9 @@ class Task(BaseModel):

|

||||

end_time: Optional[datetime.datetime] = Field(

|

||||

default=None, description="End time of the task execution"

|

||||

)

|

||||

model_config = {

|

||||

"arbitrary_types_allowed": True

|

||||

}

|

||||

|

||||

@field_validator("guardrail")

|

||||

@classmethod

|

||||

|

||||

@@ -145,52 +145,27 @@ def get_llm_response(

|

||||

messages: List[Dict[str, str]],

|

||||

callbacks: List[Any],

|

||||

printer: Printer,

|

||||

fallback_llms: Optional[List[Union[LLM, BaseLLM]]] = None,

|

||||

) -> str:

|

||||

"""Call the LLM and return the response, handling any invalid responses and trying fallbacks if available."""

|

||||

llms_to_try = [llm]

|

||||

if fallback_llms:

|

||||

llms_to_try.extend(fallback_llms)

|

||||

|

||||

last_exception = None

|

||||

|

||||

for i, current_llm in enumerate(llms_to_try):

|

||||

try:

|

||||

answer = current_llm.call(

|

||||

messages,

|

||||

callbacks=callbacks,

|

||||

)

|

||||

if not answer:

|

||||

error_msg = "Received None or empty response from LLM call."

|

||||

printer.print(content=error_msg, color="red")

|

||||

if i < len(llms_to_try) - 1:

|

||||

printer.print(content=f"Trying fallback LLM {i+1}...", color="yellow")

|

||||

continue

|

||||

else:

|

||||

raise ValueError("Invalid response from LLM call - None or empty.")

|

||||

return answer

|

||||

except Exception as e:

|

||||

last_exception = e

|

||||

if i == 0:

|

||||

printer.print(content=f"Primary LLM failed: {e}", color="red")

|

||||

else:

|

||||

printer.print(content=f"Fallback LLM {i} failed: {e}", color="red")

|

||||

|

||||

if e.__class__.__module__.startswith("litellm"):

|

||||

error_str = str(e).lower()

|

||||

if any(term in error_str for term in ["authentication", "api key", "unauthorized", "forbidden"]):

|

||||

printer.print(content="Authentication error detected, skipping remaining fallbacks", color="red")

|

||||

raise e

|

||||

|

||||