mirror of https://github.com/crewAIInc/crewAI.git

synced 2026-05-18 23:48:10 +00:00

Compare commits: 7 Commits

v0.51.0...docs/fix-t

| Author | SHA1 | Date | |
|---|---|---|---|
| | 65dd44f70d | | |
| | b120801fc5 | | |
| | 9f85a2a011 | | |
| | b9de1034ed | | |
| | 2d04c70f94 | | |
| | ab47d276db | | |
| | 44e38b1d5e | | |

@@ -27,10 +27,10 @@ If needed you can also tweak the parameters of the DALL-E model by passing them

 ```python
 from crewai_tools import DallETool
 
-dalle_tool = DallETool(model: str = "dall-e-3",
-                       size: str = "1024x1024",
-                       quality: str = "standard",
-                       n: int = 1)
+dalle_tool = DallETool(model="dall-e-3",
+                       size="1024x1024",
+                       quality="standard",
+                       n=1)
 
 Agent(
     ...
@@ -38,4 +38,4 @@ Agent(
 )
 ```
 
-The parameter are based on the `client.images.generate` method from the OpenAI API. For more information on the parameters, please refer to the [OpenAI API documentation](https://platform.openai.com/docs/guides/images/introduction?lang=python).
+The parameters are based on the `client.images.generate` method from the OpenAI API. For more information on the parameters, please refer to the [OpenAI API documentation](https://platform.openai.com/docs/guides/images/introduction?lang=python).
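
The first of the two `DallETool(...)` calls in this hunk mixes type annotations into keyword arguments, which Python rejects at parse time. A minimal sketch of the difference, using only the standard-library `ast` module and keeping both call forms as plain strings (so `crewai_tools` does not need to be installed); the helper name `is_valid_python` is made up for the illustration:

```python
import ast

# Both call forms are kept as source strings, so nothing is imported or executed.
OLD_CALL = 'dalle_tool = DallETool(model: str = "dall-e-3", size: str = "1024x1024")'
NEW_CALL = 'dalle_tool = DallETool(model="dall-e-3", size="1024x1024")'


def is_valid_python(source: str) -> bool:
    """Return True if the source parses as Python, False on a SyntaxError."""
    try:
        ast.parse(source)
    except SyntaxError:
        return False
    return True


if __name__ == "__main__":
    print("annotated form parses:", is_valid_python(OLD_CALL))
    print("keyword form parses:", is_valid_python(NEW_CALL))
```

Only the plain keyword form is something a reader can copy into a script.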

33

docs/tools/FileWriteTool.md

Normal file


@@ -0,0 +1,33 @@

# FileWriterTool Documentation

## Description
The `FileWriterTool` is a component of the crewai_tools package, designed to simplify the process of writing content to files. It is particularly useful in scenarios such as generating reports, saving logs, creating configuration files, and more. This tool supports creating new directories if they don't exist, making it easier to organize your output.

## Installation
Install the crewai_tools package to use the `FileWriterTool` in your projects:

```shell
pip install 'crewai[tools]'
```

## Example
To get started with the `FileWriterTool`:

```python
from crewai_tools import FileWriterTool

# Initialize the tool
file_writer_tool = FileWriterTool()

# Write content to a file in a specified directory
result = file_writer_tool._run('example.txt', 'This is a test content.', 'test_directory')
print(result)
```

## Arguments
- `filename`: The name of the file you want to create or overwrite.
- `content`: The content to write into the file.
- `directory` (optional): The path to the directory where the file will be created. Defaults to the current directory (`.`). If the directory does not exist, it will be created.

## Conclusion
By integrating the `FileWriterTool` into your crews, the agents can execute the process of writing content to files and creating directories. This tool is essential for tasks that require saving output data, creating structured file systems, and more. By adhering to the setup and usage guidelines provided, incorporating this tool into projects is straightforward and efficient.
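
To make the `directory` behaviour concrete, here is a standard-library sketch of the kind of write the tool is described as performing: create the directory if it is missing, then write the file. The function name `write_file` and its return message are assumptions for illustration; this is not the `FileWriterTool` implementation.

```python
import os
import tempfile


def write_file(filename: str, content: str, directory: str = ".") -> str:
    """Hypothetical helper: write content to directory/filename, creating the directory first."""
    os.makedirs(directory, exist_ok=True)  # no error if the directory already exists
    path = os.path.join(directory, filename)
    with open(path, "w", encoding="utf-8") as handle:  # "w" overwrites an existing file
        handle.write(content)
    return f"Content written to {path}"


if __name__ == "__main__":
    # Use a temporary directory so the example leaves nothing behind.
    with tempfile.TemporaryDirectory() as tmp:
        target_dir = os.path.join(tmp, "test_directory")
        print(write_file("example.txt", "This is a test content.", target_dir))
```

Opening with mode `"w"` matches the "create or overwrite" wording used for `filename`.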

42

docs/tools/FirecrawlCrawlWebsiteTool.md

Normal file


@@ -0,0 +1,42 @@

# FirecrawlCrawlWebsiteTool

## Description

[Firecrawl](https://firecrawl.dev) is a platform for crawling any website and converting it into clean markdown or structured data.

## Installation

- Get an API key from [firecrawl.dev](https://firecrawl.dev) and set it in environment variables (`FIRECRAWL_API_KEY`).
- Install the [Firecrawl SDK](https://github.com/mendableai/firecrawl) along with the `crewai[tools]` package:

```
pip install firecrawl-py 'crewai[tools]'
```

## Example

Utilize the FirecrawlCrawlWebsiteTool as follows to allow your agent to load websites:

```python
from crewai_tools import FirecrawlCrawlWebsiteTool

tool = FirecrawlCrawlWebsiteTool(url='firecrawl.dev')
```

## Arguments

- `api_key`: Optional. Specifies the Firecrawl API key. Defaults to the `FIRECRAWL_API_KEY` environment variable.
- `url`: The base URL to start crawling from.
- `page_options`: Optional.
  - `onlyMainContent`: Optional. Only return the main content of the page, excluding headers, navs, footers, etc.
  - `includeHtml`: Optional. Include the raw HTML content of the page. Adds an `html` key to the response.
- `crawler_options`: Optional. Options for controlling the crawling behavior.
  - `includes`: Optional. URL patterns to include in the crawl.
  - `exclude`: Optional. URL patterns to exclude from the crawl.
  - `generateImgAltText`: Optional. Generate alt text for images using LLMs (requires a paid plan).
  - `returnOnlyUrls`: Optional. If true, returns only the URLs as a list in the crawl status. Note: the response will be a list of URLs inside the data, not a list of documents.
  - `maxDepth`: Optional. Maximum depth to crawl. Depth 1 is the base URL, depth 2 includes the base URL and its direct children, and so on.
  - `mode`: Optional. The crawling mode to use. Fast mode crawls 4x faster on websites without a sitemap but may not be as accurate and shouldn't be used on heavily JavaScript-rendered websites.
  - `limit`: Optional. Maximum number of pages to crawl.
  - `timeout`: Optional. Timeout in milliseconds for the crawling operation.

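
The `maxDepth` rule is easiest to see on a toy link graph. The sketch below is not Firecrawl's crawler; `LINKS` and `pages_within_depth` are hypothetical names, and the code only illustrates the counting convention stated in the argument list, where depth 1 is the base URL alone.

```python
from collections import deque
from typing import List

# Hypothetical site structure: each page maps to the pages it links to.
LINKS = {
    "/": ["/docs", "/blog"],
    "/docs": ["/docs/api"],
    "/blog": [],
    "/docs/api": [],
}


def pages_within_depth(base: str, max_depth: int) -> List[str]:
    """Breadth-first walk that counts the base URL as depth 1."""
    seen = {base}
    ordered = [base]
    queue = deque([(base, 1)])
    while queue:
        page, depth = queue.popleft()
        if depth >= max_depth:
            continue  # children of this page would exceed the allowed depth
        for child in LINKS.get(page, []):
            if child not in seen:
                seen.add(child)
                ordered.append(child)
                queue.append((child, depth + 1))
    return ordered


if __name__ == "__main__":
    for depth in (1, 2, 3):
        print(depth, pages_within_depth("/", depth))
```

With this graph, a depth of 2 reaches `/docs` and `/blog` but not `/docs/api`, which needs a depth of 3.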

38

docs/tools/FirecrawlScrapeWebsiteTool.md

Normal file


@@ -0,0 +1,38 @@

# FirecrawlScrapeWebsiteTool

## Description

[Firecrawl](https://firecrawl.dev) is a platform for crawling any website and converting it into clean markdown or structured data.

## Installation

- Get an API key from [firecrawl.dev](https://firecrawl.dev) and set it in environment variables (`FIRECRAWL_API_KEY`).
- Install the [Firecrawl SDK](https://github.com/mendableai/firecrawl) along with the `crewai[tools]` package:

```
pip install firecrawl-py 'crewai[tools]'
```

## Example

Utilize the FirecrawlScrapeWebsiteTool as follows to allow your agent to load websites:

```python
from crewai_tools import FirecrawlScrapeWebsiteTool

tool = FirecrawlScrapeWebsiteTool(url='firecrawl.dev')
```

## Arguments

- `api_key`: Optional. Specifies the Firecrawl API key. Defaults to the `FIRECRAWL_API_KEY` environment variable.
- `url`: The URL to scrape.
- `page_options`: Optional.
  - `onlyMainContent`: Optional. Only return the main content of the page, excluding headers, navs, footers, etc.
  - `includeHtml`: Optional. Include the raw HTML content of the page. Adds an `html` key to the response.
- `extractor_options`: Optional. Options for LLM-based extraction of structured information from the page content.
  - `mode`: The extraction mode to use. Currently supports 'llm-extraction'.
  - `extractionPrompt`: Optional. A prompt describing what information to extract from the page.
  - `extractionSchema`: Optional. The schema for the data to be extracted.
- `timeout`: Optional. Timeout in milliseconds for the request.

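
As a rough illustration of how the nested options fit together, the sketch below assembles `page_options` and `extractor_options` dictionaries using only the key names from the argument list. The helper `build_scrape_options`, the prompt text, the schema contents, and the 30000 ms default are made up for the example, and how the dictionaries are handed to the tool is left open because the page does not show it.

```python
from typing import Any, Dict


def build_scrape_options(
    prompt: str, schema: Dict[str, Any], timeout_ms: int = 30000
) -> Dict[str, Any]:
    """Hypothetical helper that groups the documented keys into one dictionary."""
    return {
        "page_options": {"onlyMainContent": True, "includeHtml": False},
        "extractor_options": {
            "mode": "llm-extraction",  # the only mode the argument list mentions
            "extractionPrompt": prompt,
            "extractionSchema": schema,
        },
        "timeout": timeout_ms,  # milliseconds, as in the argument list
    }


if __name__ == "__main__":
    # Illustrative JSON-Schema-style description of the fields to extract.
    schema = {
        "type": "object",
        "properties": {"title": {"type": "string"}},
        "required": ["title"],
    }
    options = build_scrape_options("Extract the page title.", schema)
    print(options["extractor_options"]["mode"])
```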

35

docs/tools/FirecrawlSearchTool.md

Normal file


@@ -0,0 +1,35 @@

# FirecrawlSearchTool

## Description

[Firecrawl](https://firecrawl.dev) is a platform for crawling any website and converting it into clean markdown or structured data.

## Installation

- Get an API key from [firecrawl.dev](https://firecrawl.dev) and set it in environment variables (`FIRECRAWL_API_KEY`).
- Install the [Firecrawl SDK](https://github.com/mendableai/firecrawl) along with the `crewai[tools]` package:

```
pip install firecrawl-py 'crewai[tools]'
```

## Example

Utilize the FirecrawlSearchTool as follows to allow your agent to load websites:

```python
from crewai_tools import FirecrawlSearchTool

tool = FirecrawlSearchTool(query='what is firecrawl?')
```

## Arguments

- `api_key`: Optional. Specifies the Firecrawl API key. Defaults to the `FIRECRAWL_API_KEY` environment variable.
- `query`: The search query string to be used for searching.
- `page_options`: Optional. Options for result formatting.
  - `onlyMainContent`: Optional. Only return the main content of the page, excluding headers, navs, footers, etc.
  - `includeHtml`: Optional. Include the raw HTML content of the page. Adds an `html` key to the response.
  - `fetchPageContent`: Optional. Fetch the full content of the page.
- `search_options`: Optional. Options for controlling the crawling behavior.
  - `limit`: Optional. Maximum number of pages to crawl.
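
The `api_key` default can be pictured as a simple fallback to the environment. This is a sketch of that lookup order only, not the tool's code; `resolve_api_key` is a hypothetical name, and raising `ValueError` when no key is found is an assumption.

```python
import os
from typing import Optional


def resolve_api_key(api_key: Optional[str] = None) -> str:
    """Prefer an explicit key, otherwise fall back to FIRECRAWL_API_KEY."""
    key = api_key or os.environ.get("FIRECRAWL_API_KEY")
    if not key:
        raise ValueError("Set FIRECRAWL_API_KEY or pass api_key explicitly.")
    return key


if __name__ == "__main__":
    os.environ.setdefault("FIRECRAWL_API_KEY", "fc-example-key")  # placeholder value
    print(resolve_api_key())            # taken from the environment
    print(resolve_api_key("explicit"))  # explicit argument wins
```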

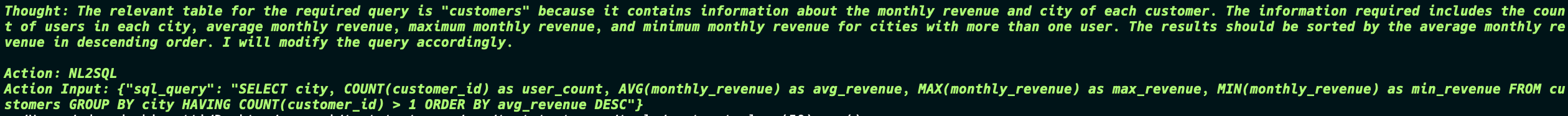

@@ -47,8 +47,8 @@ The primary task goal was:


The Agent tried to get information from the DB. The first attempt was wrong, so the Agent tried again, got the correct information, and passed it to the next agent.

The second task goal was:

@@ -58,11 +58,11 @@ Include information on the average, maximum, and minimum monthly revenue for eac


Now things start to get interesting. The Agent generates the SQL query not only to create the table but also to insert the data into it. In the end, the Agent still returns the final report, which is exactly what was in the database.

This is a simple example of how the NL2SQLTool can be used to interact with the database and generate reports based on the data in the database.

36

mkdocs.yml


@@ -152,33 +152,37 @@ nav:

    - Agent Monitoring with AgentOps: 'how-to/AgentOps-Observability.md'
    - Agent Monitoring with LangTrace: 'how-to/Langtrace-Observability.md'
  - Tools Docs:
    - Google Serper Search: 'tools/SerperDevTool.md'
    - Browserbase Web Loader: 'tools/BrowserbaseLoadTool.md'
    - Composio Tools: 'tools/ComposioTool.md'
    - Code Docs RAG Search: 'tools/CodeDocsSearchTool.md'
    - Code Interpreter: 'tools/CodeInterpreterTool.md'
    - Scrape Website: 'tools/ScrapeWebsiteTool.md'
    - Directory Read: 'tools/DirectoryReadTool.md'
    - Exa Search Web Loader: 'tools/EXASearchTool.md'
    - File Read: 'tools/FileReadTool.md'
    - Selenium Scraper: 'tools/SeleniumScrapingTool.md'
    - Directory RAG Search: 'tools/DirectorySearchTool.md'
    - DALL-E Tool: 'tools/DALL-ETool.md'
    - PDF RAG Search: 'tools/PDFSearchTool.md'
    - TXT RAG Search: 'tools/TXTSearchTool.md'
    - Composio Tools: 'tools/ComposioTool.md'
    - CSV RAG Search: 'tools/CSVSearchTool.md'
    - XML RAG Search: 'tools/XMLSearchTool.md'
    - JSON RAG Search: 'tools/JSONSearchTool.md'
    - DALL-E Tool: 'tools/DALL-ETool.md'
    - Directory RAG Search: 'tools/DirectorySearchTool.md'
    - Directory Read: 'tools/DirectoryReadTool.md'
    - Docx Rag Search: 'tools/DOCXSearchTool.md'
    - EXA Search Web Loader: 'tools/EXASearchTool.md'
    - File Read: 'tools/FileReadTool.md'
    - File Write: 'tools/FileWriteTool.md'
    - Firecrawl Crawl Website Tool: 'tools/FirecrawlCrawlWebsiteTool.md'
    - Firecrawl Scrape Website Tool: 'tools/FirecrawlScrapeWebsiteTool.md'
    - Firecrawl Search Tool: 'tools/FirecrawlSearchTool.md'
    - Github RAG Search: 'tools/GitHubSearchTool.md'
    - Google Serper Search: 'tools/SerperDevTool.md'
    - JSON RAG Search: 'tools/JSONSearchTool.md'
    - MDX RAG Search: 'tools/MDXSearchTool.md'
    - MySQL Tool: 'tools/MySQLTool.md'
    - NL2SQL Tool: 'tools/NL2SQLTool.md'
    - PDF RAG Search: 'tools/PDFSearchTool.md'
    - PG RAG Search: 'tools/PGSearchTool.md'
    - Scrape Website: 'tools/ScrapeWebsiteTool.md'
    - Selenium Scraper: 'tools/SeleniumScrapingTool.md'
    - TXT RAG Search: 'tools/TXTSearchTool.md'
    - Vision Tool: 'tools/VisionTool.md'
    - Website RAG Search: 'tools/WebsiteSearchTool.md'
    - Github RAG Search: 'tools/GitHubSearchTool.md'
    - Code Docs RAG Search: 'tools/CodeDocsSearchTool.md'
    - Youtube Video RAG Search: 'tools/YoutubeVideoSearchTool.md'
    - XML RAG Search: 'tools/XMLSearchTool.md'
    - Youtube Channel RAG Search: 'tools/YoutubeChannelSearchTool.md'
    - Youtube Video RAG Search: 'tools/YoutubeVideoSearchTool.md'
  - Examples:
    - Trip Planner Crew: https://github.com/joaomdmoura/crewAI-examples/tree/main/trip_planner"
    - Create Instagram Post: https://github.com/joaomdmoura/crewAI-examples/tree/main/instagram_post"
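
When nav entries are reordered and added by hand, a target that does not match any file under the docs directory is easy to miss. A minimal sketch of a check, assuming the nav targets have already been collected into a plain list; the helper name `missing_nav_targets` and the sample paths are illustrative, and external URLs such as the example links are skipped.

```python
import os
import tempfile
from typing import Iterable, List


def missing_nav_targets(docs_dir: str, targets: Iterable[str]) -> List[str]:
    """Return the local nav targets that do not exist as files under docs_dir."""
    missing = []
    for target in targets:
        if "://" in target:
            continue  # external link, nothing to check on disk
        if not os.path.isfile(os.path.join(docs_dir, target)):
            missing.append(target)
    return missing


if __name__ == "__main__":
    # Build a throwaway docs tree with a single page in it.
    with tempfile.TemporaryDirectory() as docs:
        os.makedirs(os.path.join(docs, "tools"))
        open(os.path.join(docs, "tools", "FileWriteTool.md"), "w").close()
        print(missing_nav_targets(docs, ["tools/FileWriteTool.md", "tools/Missing.md"]))
```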

@@ -1,6 +1,6 @@

 [tool.poetry]
 name = "crewai"
-version = "0.51.0"
+version = "0.51.1"
 description = "Cutting-edge framework for orchestrating role-playing, autonomous AI agents. By fostering collaborative intelligence, CrewAI empowers agents to work together seamlessly, tackling complex tasks."
 authors = ["Joao Moura <joao@crewai.com>"]
 readme = "README.md"

@@ -98,65 +98,70 @@ class Telemetry:

                 self._add_attribute(span, "crew_memory", crew.memory)
                 self._add_attribute(span, "crew_number_of_tasks", len(crew.tasks))
                 self._add_attribute(span, "crew_number_of_agents", len(crew.agents))
-                self._add_attribute(
-                    span,
-                    "crew_agents",
-                    json.dumps(
-                        [
-                            {
-                                "key": agent.key,
-                                "id": str(agent.id),
-                                "role": agent.role,
-                                "goal": agent.goal,
-                                "backstory": agent.backstory,
-                                "verbose?": agent.verbose,
-                                "max_iter": agent.max_iter,
-                                "max_rpm": agent.max_rpm,
-                                "i18n": agent.i18n.prompt_file,
-                                "llm": json.dumps(self._safe_llm_attributes(agent.llm)),
-                                "delegation_enabled?": agent.allow_delegation,
-                                "tools_names": [
-                                    tool.name.casefold() for tool in agent.tools or []
-                                ],
-                            }
-                            for agent in crew.agents
-                        ]
-                    ),
-                )
-                self._add_attribute(
-                    span,
-                    "crew_tasks",
-                    json.dumps(
-                        [
-                            {
-                                "key": task.key,
-                                "id": str(task.id),
-                                "description": task.description,
-                                "expected_output": task.expected_output,
-                                "async_execution?": task.async_execution,
-                                "human_input?": task.human_input,
-                                "agent_role": task.agent.role if task.agent else "None",
-                                "agent_key": task.agent.key if task.agent else None,
-                                "context": (
-                                    [task.description for task in task.context]
-                                    if task.context
-                                    else None
-                                ),
-                                "tools_names": [
-                                    tool.name.casefold() for tool in task.tools or []
-                                ],
-                            }
-                            for task in crew.tasks
-                        ]
-                    ),
-                )
-                self._add_attribute(span, "platform", platform.platform())
-                self._add_attribute(span, "platform_release", platform.release())
-                self._add_attribute(span, "platform_system", platform.system())
-                self._add_attribute(span, "platform_version", platform.version())
-                self._add_attribute(span, "cpus", os.cpu_count())
-
                 if crew.share_crew:
+                    self._add_attribute(
+                        span,
+                        "crew_agents",
+                        json.dumps(
+                            [
+                                {
+                                    "key": agent.key,
+                                    "id": str(agent.id),
+                                    "role": agent.role,
+                                    "goal": agent.goal,
+                                    "backstory": agent.backstory,
+                                    "verbose?": agent.verbose,
+                                    "max_iter": agent.max_iter,
+                                    "max_rpm": agent.max_rpm,
+                                    "i18n": agent.i18n.prompt_file,
+                                    "llm": json.dumps(
+                                        self._safe_llm_attributes(agent.llm)
+                                    ),
+                                    "delegation_enabled?": agent.allow_delegation,
+                                    "tools_names": [
+                                        tool.name.casefold()
+                                        for tool in agent.tools or []
+                                    ],
+                                }
+                                for agent in crew.agents
+                            ]
+                        ),
+                    )
+                    self._add_attribute(
+                        span,
+                        "crew_tasks",
+                        json.dumps(
+                            [
+                                {
+                                    "key": task.key,
+                                    "id": str(task.id),
+                                    "description": task.description,
+                                    "expected_output": task.expected_output,
+                                    "async_execution?": task.async_execution,
+                                    "human_input?": task.human_input,
+                                    "agent_role": task.agent.role
+                                    if task.agent
+                                    else "None",
+                                    "agent_key": task.agent.key if task.agent else None,
+                                    "context": (
+                                        [task.description for task in task.context]
+                                        if task.context
+                                        else None
+                                    ),
+                                    "tools_names": [
+                                        tool.name.casefold()
+                                        for tool in task.tools or []
+                                    ],
+                                }
+                                for task in crew.tasks
+                            ]
+                        ),
+                    )
+                    self._add_attribute(span, "platform", platform.platform())
+                    self._add_attribute(span, "platform_release", platform.release())
+                    self._add_attribute(span, "platform_system", platform.system())
+                    self._add_attribute(span, "platform_version", platform.version())
+                    self._add_attribute(span, "cpus", os.cpu_count())
                     self._add_attribute(
                         span, "crew_inputs", json.dumps(inputs) if inputs else None
                     )
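
Read as a whole, this hunk appears to move the detailed agent, task, and platform attributes under the `if crew.share_crew:` check, so that only the counts are recorded unconditionally. A simplified sketch of that gating, with a plain dictionary standing in for the span; `FakeCrew` and `collect_attributes` are hypothetical stand-ins, not crewAI classes, and only a subset of the attributes is reproduced.

```python
import json
import os
import platform
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class FakeCrew:
    """Hypothetical stand-in carrying only the fields this sketch reads."""

    share_crew: bool
    memory: bool = False
    agents: List[Dict[str, Any]] = field(default_factory=list)
    tasks: List[Dict[str, Any]] = field(default_factory=list)


def collect_attributes(
    crew: FakeCrew, inputs: Optional[Dict[str, Any]] = None
) -> Dict[str, Any]:
    """Always record counts; record details only when the crew opts in."""
    attrs: Dict[str, Any] = {
        "crew_memory": crew.memory,
        "crew_number_of_tasks": len(crew.tasks),
        "crew_number_of_agents": len(crew.agents),
    }
    if crew.share_crew:
        # Detailed, potentially sensitive data is gated behind the opt-in flag.
        attrs["crew_agents"] = json.dumps(crew.agents)
        attrs["crew_tasks"] = json.dumps(crew.tasks)
        attrs["platform_system"] = platform.system()
        attrs["cpus"] = os.cpu_count()
        attrs["crew_inputs"] = json.dumps(inputs) if inputs else None
    return attrs


if __name__ == "__main__":
    crew = FakeCrew(share_crew=False, agents=[{"role": "researcher"}])
    print(sorted(collect_attributes(crew)))
```

With `share_crew=False`, the result holds only the three count and memory attributes.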
