mirror of

https://github.com/crewAIInc/crewAI.git

synced 2026-05-22 17:38:10 +00:00

Compare commits

31 Commits

docs/fix-t

...

joao-pipel

| Author | SHA1 | Date |
|---|---|---|
| | e6e2944454 | |
| | f777c1c2e0 | |
| | 782ce22d99 | |
| | f5246039e5 | |
| | 4736604b4d | |
| | 09cba0135e | |
| | 8119edb495 | |
| | 17bffb0803 | |
| | cbe139fced | |
| | 946d8567fe | |
| | 7b5d5bdeef | |
| | a1551bcf2b | |
| | 5495825b1d | |
| | 6e36f84cc6 | |
| | cddf2d8f7c | |
| | 5f17e35c5a | |
| | 231a833ad0 | |
| | a870295d42 | |
| | ddda8f6bda | |
| | bf7372fefa | |
| | 3451b6fc7a | |
| | dbf2570353 | |
| | d0707fac91 | |
| | 35ebdd6022 | |
| | 92a77e5cac | |
| | a2922c9ad5 | |
| | 9f9b52dd26 | |
| | 2482c7ab68 | |
| | 7fdabda97e | |
| | 7306414de7 | |
| | 97d7bfb52a | |

35

.github/ISSUE_TEMPLATE/bug_report.md

vendored


@@ -1,35 +0,0 @@

|

||||

---

|

||||

name: Bug report

|

||||

about: Create a report to help us improve CrewAI

|

||||

title: "[BUG]"

|

||||

labels: bug

|

||||

assignees: ''

|

||||

|

||||

---

|

||||

|

||||

**Description**

|

||||

Provide a clear and concise description of what the bug is.

|

||||

|

||||

**Steps to Reproduce**

|

||||

Provide a step-by-step process to reproduce the behavior:

|

||||

|

||||

**Expected behavior**

|

||||

A clear and concise description of what you expected to happen.

|

||||

|

||||

**Screenshots/Code snippets**

|

||||

If applicable, add screenshots or code snippets to help explain your problem.

|

||||

|

||||

**Environment Details:**

|

||||

- **Operating System**: [e.g., Ubuntu 20.04, macOS Catalina, Windows 10]

|

||||

- **Python Version**: [e.g., 3.8, 3.9, 3.10]

|

||||

- **crewAI Version**: [e.g., 0.30.11]

|

||||

- **crewAI Tools Version**: [e.g., 0.2.6]

|

||||

|

||||

**Logs**

|

||||

Include relevant logs or error messages if applicable.

|

||||

|

||||

**Possible Solution**

|

||||

Have a solution in mind? Please suggest it here, or write "None".

|

||||

|

||||

**Additional context**

|

||||

Add any other context about the problem here.

|

||||

116

.github/ISSUE_TEMPLATE/bug_report.yml

vendored

Normal file


@@ -0,0 +1,116 @@

|

||||

name: Bug report

|

||||

description: Create a report to help us improve CrewAI

|

||||

title: "[BUG]"

|

||||

labels: ["bug"]

|

||||

assignees: []

|

||||

body:

|

||||

- type: textarea

|

||||

id: description

|

||||

attributes:

|

||||

label: Description

|

||||

description: Provide a clear and concise description of what the bug is.

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: steps-to-reproduce

|

||||

attributes:

|

||||

label: Steps to Reproduce

|

||||

description: Provide a step-by-step process to reproduce the behavior.

|

||||

placeholder: |

|

||||

1. Go to '...'

|

||||

2. Click on '....'

|

||||

3. Scroll down to '....'

|

||||

4. See error

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: expected-behavior

|

||||

attributes:

|

||||

label: Expected behavior

|

||||

description: A clear and concise description of what you expected to happen.

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: screenshots-code

|

||||

attributes:

|

||||

label: Screenshots/Code snippets

|

||||

description: If applicable, add screenshots or code snippets to help explain your problem.

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

id: os

|

||||

attributes:

|

||||

label: Operating System

|

||||

description: Select the operating system you're using

|

||||

options:

|

||||

- Ubuntu 20.04

|

||||

- Ubuntu 22.04

|

||||

- Ubuntu 24.04

|

||||

- macOS Catalina

|

||||

- macOS Big Sur

|

||||

- macOS Monterey

|

||||

- macOS Ventura

|

||||

- macOS Sonoma

|

||||

- Windows 10

|

||||

- Windows 11

|

||||

- Other (specify in additional context)

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

id: python-version

|

||||

attributes:

|

||||

label: Python Version

|

||||

description: Version of Python your Crew is running on

|

||||

options:

|

||||

- '3.10'

|

||||

- '3.11'

|

||||

- '3.12'

|

||||

- '3.13'

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: crewai-version

|

||||

attributes:

|

||||

label: crewAI Version

|

||||

description: What version of CrewAI are you using

|

||||

validations:

|

||||

required: true

|

||||

- type: input

|

||||

id: crewai-tools-version

|

||||

attributes:

|

||||

label: crewAI Tools Version

|

||||

description: What version of CrewAI Tools are you using

|

||||

validations:

|

||||

required: true

|

||||

- type: dropdown

|

||||

id: virtual-environment

|

||||

attributes:

|

||||

label: Virtual Environment

|

||||

description: What Virtual Environment are you running your crew in.

|

||||

options:

|

||||

- Venv

|

||||

- Conda

|

||||

- Poetry

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: evidence

|

||||

attributes:

|

||||

label: Evidence

|

||||

description: Include relevant information, logs or error messages. These can be screenshots.

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: possible-solution

|

||||

attributes:

|

||||

label: Possible Solution

|

||||

description: Have a solution in mind? Please suggest it here, or write "None".

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: additional-context

|

||||

attributes:

|

||||

label: Additional context

|

||||

description: Add any other context about the problem here.

|

||||

validations:

|

||||

required: true

|

||||

1

.github/ISSUE_TEMPLATE/config.yml

vendored

Normal file


@@ -0,0 +1 @@

|

||||

blank_issues_enabled: false

|

||||

24

.github/ISSUE_TEMPLATE/custom.md

vendored


@@ -1,24 +0,0 @@

|

||||

---

|

||||

name: Custom issue template

|

||||

about: Describe this issue template's purpose here.

|

||||

title: "[DOCS]"

|

||||

labels: documentation

|

||||

assignees: ''

|

||||

|

||||

---

|

||||

|

||||

## Documentation Page

|

||||

<!-- Provide a link to the documentation page that needs improvement -->

|

||||

|

||||

## Description

|

||||

<!-- Describe what needs to be changed or improved in the documentation -->

|

||||

|

||||

## Suggested Changes

|

||||

<!-- If possible, provide specific suggestions for how to improve the documentation -->

|

||||

|

||||

## Additional Context

|

||||

<!-- Add any other context about the documentation issue here -->

|

||||

|

||||

## Checklist

|

||||

- [ ] I have searched the existing issues to make sure this is not a duplicate

|

||||

- [ ] I have checked the latest version of the documentation to ensure this hasn't been addressed

|

||||

65

.github/ISSUE_TEMPLATE/feature_request.yml

vendored

Normal file


@@ -0,0 +1,65 @@

|

||||

name: Feature request

|

||||

description: Suggest a new feature for CrewAI

|

||||

title: "[FEATURE]"

|

||||

labels: ["feature-request"]

|

||||

assignees: []

|

||||

body:

|

||||

- type: markdown

|

||||

attributes:

|

||||

value: |

|

||||

Thanks for taking the time to fill out this feature request!

|

||||

- type: dropdown

|

||||

id: feature-area

|

||||

attributes:

|

||||

label: Feature Area

|

||||

description: Which area of CrewAI does this feature primarily relate to?

|

||||

options:

|

||||

- Core functionality

|

||||

- Agent capabilities

|

||||

- Task management

|

||||

- Integration with external tools

|

||||

- Performance optimization

|

||||

- Documentation

|

||||

- Other (please specify in additional context)

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: problem

|

||||

attributes:

|

||||

label: Is your feature request related to a an existing bug? Please link it here.

|

||||

description: A link to the bug or NA if not related to an existing bug.

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: solution

|

||||

attributes:

|

||||

label: Describe the solution you'd like

|

||||

description: A clear and concise description of what you want to happen.

|

||||

validations:

|

||||

required: true

|

||||

- type: textarea

|

||||

id: alternatives

|

||||

attributes:

|

||||

label: Describe alternatives you've considered

|

||||

description: A clear and concise description of any alternative solutions or features you've considered.

|

||||

validations:

|

||||

required: false

|

||||

- type: textarea

|

||||

id: context

|

||||

attributes:

|

||||

label: Additional context

|

||||

description: Add any other context, screenshots, or examples about the feature request here.

|

||||

validations:

|

||||

required: false

|

||||

- type: dropdown

|

||||

id: willingness-to-contribute

|

||||

attributes:

|

||||

label: Willingness to Contribute

|

||||

description: Would you be willing to contribute to the implementation of this feature?

|

||||

options:

|

||||

- Yes, I'd be happy to submit a pull request

|

||||

- I could provide more detailed specifications

|

||||

- I can test the feature once it's implemented

|

||||

- No, I'm just suggesting the idea

|

||||

validations:

|

||||

required: true

|

||||

6

.github/workflows/mkdocs.yml

vendored


@@ -1,10 +1,8 @@

|

||||

name: Deploy MkDocs

|

||||

|

||||

on:

|

||||

workflow_dispatch:

|

||||

push:

|

||||

branches:

|

||||

- main

|

||||

release:

|

||||

types: [published]

|

||||

|

||||

permissions:

|

||||

contents: write

|

||||

|

||||

23

.github/workflows/security-checker.yml

vendored

Normal file


@@ -0,0 +1,23 @@

|

||||

name: Security Checker

|

||||

|

||||

on: [pull_request]

|

||||

|

||||

jobs:

|

||||

security-check:

|

||||

runs-on: ubuntu-latest

|

||||

|

||||

steps:

|

||||

- name: Checkout code

|

||||

uses: actions/checkout@v4

|

||||

|

||||

- name: Set up Python

|

||||

uses: actions/setup-python@v4

|

||||

with:

|

||||

python-version: "3.11.9"

|

||||

|

||||

- name: Install dependencies

|

||||

run: pip install bandit

|

||||

|

||||

- name: Run Bandit

|

||||

run: bandit -c pyproject.toml -r src/ -lll

|

||||

|

||||

1

.github/workflows/stale.yml

vendored


@@ -24,3 +24,4 @@ jobs:

|

||||

stale-pr-message: 'This PR is stale because it has been open for 45 days with no activity.'

|

||||

days-before-pr-stale: 45

|

||||

days-before-pr-close: -1

|

||||

operations-per-run: 1200

|

||||

|

||||

1

.github/workflows/tests.yml

vendored


@@ -11,6 +11,7 @@ env:

|

||||

jobs:

|

||||

deploy:

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 15

|

||||

|

||||

steps:

|

||||

- name: Checkout code

|

||||

|

||||

142

docs/core-concepts/Cli.md

Normal file


@@ -0,0 +1,142 @@

|

||||

# CrewAI CLI Documentation

|

||||

|

||||

The CrewAI CLI provides a set of commands to interact with CrewAI, allowing you to create, train, run, and manage crews and pipelines.

|

||||

|

||||

## Installation

|

||||

|

||||

To use the CrewAI CLI, make sure you have CrewAI & Poetry installed:

|

||||

|

||||

```

|

||||

pip install crewai poetry

|

||||

```

|

||||

|

||||

## Basic Usage

|

||||

|

||||

The basic structure of a CrewAI CLI command is:

|

||||

|

||||

```

|

||||

crewai [COMMAND] [OPTIONS] [ARGUMENTS]

|

||||

```

|

||||

|

||||

## Available Commands

|

||||

|

||||

### 1. create

|

||||

|

||||

Create a new crew or pipeline.

|

||||

|

||||

```

|

||||

crewai create [OPTIONS] TYPE NAME

|

||||

```

|

||||

|

||||

- `TYPE`: Choose between "crew" or "pipeline"

|

||||

- `NAME`: Name of the crew or pipeline

|

||||

- `--router`: (Optional) Create a pipeline with router functionality

|

||||

|

||||

Example:

|

||||

```

|

||||

crewai create crew my_new_crew

|

||||

crewai create pipeline my_new_pipeline --router

|

||||

```

|

||||

|

||||

### 2. version

|

||||

|

||||

Show the installed version of CrewAI.

|

||||

|

||||

```

|

||||

crewai version [OPTIONS]

|

||||

```

|

||||

|

||||

- `--tools`: (Optional) Show the installed version of CrewAI tools

|

||||

|

||||

Example:

|

||||

```

|

||||

crewai version

|

||||

crewai version --tools

|

||||

```

|

||||

|

||||

### 3. train

|

||||

|

||||

Train the crew for a specified number of iterations.

|

||||

|

||||

```

|

||||

crewai train [OPTIONS]

|

||||

```

|

||||

|

||||

- `-n, --n_iterations INTEGER`: Number of iterations to train the crew (default: 5)

|

||||

- `-f, --filename TEXT`: Path to a custom file for training (default: "trained_agents_data.pkl")

|

||||

|

||||

Example:

|

||||

```

|

||||

crewai train -n 10 -f my_training_data.pkl

|

||||

```

|

||||

|

||||

### 4. replay

|

||||

|

||||

Replay the crew execution from a specific task.

|

||||

|

||||

```

|

||||

crewai replay [OPTIONS]

|

||||

```

|

||||

|

||||

- `-t, --task_id TEXT`: Replay the crew from this task ID, including all subsequent tasks

|

||||

|

||||

Example:

|

||||

```

|

||||

crewai replay -t task_123456

|

||||

```

|

||||

|

||||

### 5. log_tasks_outputs

|

||||

|

||||

Retrieve your latest crew.kickoff() task outputs.

|

||||

|

||||

```

|

||||

crewai log_tasks_outputs

|

||||

```

|

||||

|

||||

### 6. reset_memories

|

||||

|

||||

Reset the crew memories (long, short, entity, latest_crew_kickoff_outputs).

|

||||

|

||||

```

|

||||

crewai reset_memories [OPTIONS]

|

||||

```

|

||||

|

||||

- `-l, --long`: Reset LONG TERM memory

|

||||

- `-s, --short`: Reset SHORT TERM memory

|

||||

- `-e, --entities`: Reset ENTITIES memory

|

||||

- `-k, --kickoff-outputs`: Reset LATEST KICKOFF TASK OUTPUTS

|

||||

- `-a, --all`: Reset ALL memories

|

||||

|

||||

Example:

|

||||

```

|

||||

crewai reset_memories --long --short

|

||||

crewai reset_memories --all

|

||||

```

|

||||

|

||||

### 7. test

|

||||

|

||||

Test the crew and evaluate the results.

|

||||

|

||||

```

|

||||

crewai test [OPTIONS]

|

||||

```

|

||||

|

||||

- `-n, --n_iterations INTEGER`: Number of iterations to test the crew (default: 3)

|

||||

- `-m, --model TEXT`: LLM Model to run the tests on the Crew (default: "gpt-4o-mini")

|

||||

|

||||

Example:

|

||||

```

|

||||

crewai test -n 5 -m gpt-3.5-turbo

|

||||

```

|

||||

|

||||

### 8. run

|

||||

|

||||

Run the crew.

|

||||

|

||||

```

|

||||

crewai run

|

||||

```

|

||||

|

||||

## Note

|

||||

|

||||

Make sure to run these commands from the directory where your CrewAI project is set up. Some commands may require additional configuration or setup within your project structure.
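
The commands documented above can also be driven from a script. The following is a minimal sketch, assuming only the flags listed in this file (`-n`, `-f`, `-m`); the helper names `build_train_command`, `build_test_command`, and `run_command` are hypothetical and not part of CrewAI. By default nothing is executed: the helper only renders the command line, so it can be checked before being run from the project directory.

```python
import shlex
import subprocess
from typing import List, Optional


def build_train_command(n_iterations: int = 5,
                        filename: str = "trained_agents_data.pkl") -> List[str]:
    """Build the argv list for `crewai train` from the documented flags."""
    if n_iterations < 1:
        raise ValueError("n_iterations must be a positive integer")
    return ["crewai", "train", "-n", str(n_iterations), "-f", filename]


def build_test_command(n_iterations: int = 3,
                       model: str = "gpt-4o-mini") -> List[str]:
    """Build the argv list for `crewai test` from the documented flags."""
    if n_iterations < 1:
        raise ValueError("n_iterations must be a positive integer")
    return ["crewai", "test", "-n", str(n_iterations), "-m", model]


def run_command(argv: List[str], cwd: Optional[str] = None,
                dry_run: bool = True) -> str:
    """Return the command as a shell string; execute it only when dry_run is False."""
    rendered = shlex.join(argv)
    if not dry_run:
        # check=True raises CalledProcessError if the CLI exits with a non-zero status.
        subprocess.run(argv, cwd=cwd, check=True)
    return rendered


if __name__ == "__main__":
    # Dry run: print the commands instead of invoking the CLI.
    print(run_command(build_train_command(10, "my_training_data.pkl")))
    print(run_command(build_test_command(5, "gpt-3.5-turbo")))
```

Passing the arguments as a list rather than a single string avoids shell quoting problems when a filename contains spaces.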

|

||||

@@ -32,8 +32,8 @@ Each input creates its own run, flowing through all stages of the pipeline. Mult

|

||||

|

||||

## Pipeline Attributes

|

||||

|

||||

-| Attribute | Parameters | Description |
-| :--------- | :--------- | :------------------------------------------------------------------------------------ |
+| Attribute | Parameters | Description |
+| :--------- | :--------- | :---------------------------------------------------------------------------------------------- |

|

||||

| **Stages** | `stages` | A list of crews, lists of crews, or routers representing the stages to be executed in sequence. |

|

||||

|

||||

## Creating a Pipeline

|

||||

@@ -239,7 +239,7 @@ email_router = Router(

|

||||

pipeline=normal_pipeline

|

||||

)

|

||||

},

|

||||

-default=Pipeline(stages=[normal_pipeline]) # Default to just classification if no urgency score
+default=Pipeline(stages=[normal_pipeline]) # Default to just normal if no urgency score

|

||||

)

|

||||

|

||||

# Use the router in a main pipeline

|

||||

|

||||

@@ -0,0 +1,129 @@

|

||||

# Creating a CrewAI Pipeline Project

|

||||

|

||||

Welcome to the comprehensive guide for creating a new CrewAI pipeline project. This document will walk you through the steps to create, customize, and run your CrewAI pipeline project, ensuring you have everything you need to get started.

|

||||

|

||||

To learn more about CrewAI pipelines, visit the [CrewAI documentation](https://docs.crewai.com/core-concepts/Pipeline/).

|

||||

|

||||

## Prerequisites

|

||||

|

||||

Before getting started with CrewAI pipelines, make sure that you have installed CrewAI via pip:

|

||||

|

||||

```shell

|

||||

$ pip install crewai crewai-tools

|

||||

```

|

||||

|

||||

The same prerequisites for virtual environments and Code IDEs apply as in regular CrewAI projects.

|

||||

|

||||

## Creating a New Pipeline Project

|

||||

|

||||

To create a new CrewAI pipeline project, you have two options:

|

||||

|

||||

1. For a basic pipeline template:

|

||||

|

||||

```shell

|

||||

$ crewai create pipeline <project_name>

|

||||

```

|

||||

|

||||

2. For a pipeline example that includes a router:

|

||||

|

||||

```shell

|

||||

$ crewai create pipeline --router <project_name>

|

||||

```

|

||||

|

||||

These commands will create a new project folder with the following structure:

|

||||

|

||||

```

|

||||

<project_name>/

|

||||

├── README.md

|

||||

├── poetry.lock

|

||||

├── pyproject.toml

|

||||

├── src/

|

||||

│ └── <project_name>/

|

||||

│ ├── __init__.py

|

||||

│ ├── main.py

|

||||

│ ├── crews/

|

||||

│ │ ├── crew1/

|

||||

│ │ │ ├── crew1.py

|

||||

│ │ │ └── config/

|

||||

│ │ │ ├── agents.yaml

|

||||

│ │ │ └── tasks.yaml

|

||||

│ │ ├── crew2/

|

||||

│ │ │ ├── crew2.py

|

||||

│ │ │ └── config/

|

||||

│ │ │ ├── agents.yaml

|

||||

│ │ │ └── tasks.yaml

|

||||

│ ├── pipelines/

|

||||

│ │ ├── __init__.py

|

||||

│ │ ├── pipeline1.py

|

||||

│ │ └── pipeline2.py

|

||||

│ └── tools/

|

||||

│ ├── __init__.py

|

||||

│ └── custom_tool.py

|

||||

└── tests/

|

||||

```

|

||||

|

||||

## Customizing Your Pipeline Project

|

||||

|

||||

To customize your pipeline project, you can:

|

||||

|

||||

1. Modify the crew files in `src/<project_name>/crews/` to define your agents and tasks for each crew.

|

||||

2. Modify the pipeline files in `src/<project_name>/pipelines/` to define your pipeline structure.

|

||||

3. Modify `src/<project_name>/main.py` to set up and run your pipelines.

|

||||

4. Add your environment variables into the `.env` file.

|

||||

|

||||

### Example: Defining a Pipeline

|

||||

|

||||

Here's an example of how to define a pipeline in `src/<project_name>/pipelines/normal_pipeline.py`:

|

||||

|

||||

```python

|

||||

from crewai import Pipeline

|

||||

from crewai.project import PipelineBase

|

||||

from ..crews.normal_crew import NormalCrew

|

||||

|

||||

@PipelineBase

|

||||

class NormalPipeline:

|

||||

def __init__(self):

|

||||

# Initialize crews

|

||||

self.normal_crew = NormalCrew().crew()

|

||||

|

||||

def create_pipeline(self):

|

||||

return Pipeline(

|

||||

stages=[

|

||||

self.normal_crew

|

||||

]

|

||||

)

|

||||

|

||||

async def kickoff(self, inputs):

|

||||

pipeline = self.create_pipeline()

|

||||

results = await pipeline.kickoff(inputs)

|

||||

return results

|

||||

```
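
Because `kickoff` in the example above is a coroutine, a synchronous entry point such as `main.py` has to start an event loop to call it. The sketch below shows one way to do that with `asyncio.run`. `StubPipeline` is a hypothetical stand-in that only echoes its inputs, so the example runs without CrewAI installed; the shape of the inputs and results is an assumption, not the library's documented format.

```python
import asyncio
from typing import Any, Dict, List


class StubPipeline:
    """Hypothetical stand-in for a class decorated with @PipelineBase."""

    async def kickoff(self, inputs: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
        # A real pipeline would run its crews here; the stub just echoes each input.
        await asyncio.sleep(0)
        return [{"input": item, "status": "done"} for item in inputs]


async def run_pipeline(pipeline: Any,
                       inputs: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Await the pipeline's kickoff coroutine and return its results."""
    return await pipeline.kickoff(inputs)


def main() -> List[Dict[str, Any]]:
    # Each input dictionary is treated as one run of the pipeline.
    inputs = [{"topic": "first run"}, {"topic": "second run"}]
    return asyncio.run(run_pipeline(StubPipeline(), inputs))


if __name__ == "__main__":
    for result in main():
        print(result)
```

In a generated project, the stub would be replaced by the project's own pipeline class, for example the `NormalPipeline` defined above.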

|

||||

|

||||

### Annotations

|

||||

|

||||

The main annotation you'll use for pipelines is `@PipelineBase`. This annotation is used to decorate your pipeline classes, similar to how `@CrewBase` is used for crews.

|

||||

|

||||

## Installing Dependencies

|

||||

|

||||

To install the dependencies for your project, use Poetry:

|

||||

|

||||

```shell

|

||||

$ cd <project_name>

|

||||

$ crewai install

|

||||

```

|

||||

|

||||

## Running Your Pipeline Project

|

||||

|

||||

To run your pipeline project, use the following command:

|

||||

|

||||

```shell

|

||||

$ crewai run

|

||||

```

|

||||

|

||||

This will initialize your pipeline and begin task execution as defined in your `main.py` file.

|

||||

|

||||

## Deploying Your Pipeline Project

|

||||

|

||||

Pipelines can be deployed in the same way as regular CrewAI projects. The easiest way is through [CrewAI+](https://www.crewai.com/crewaiplus), where you can deploy your pipeline in a few clicks.

|

||||

|

||||

Remember, when working with pipelines, you're orchestrating multiple crews to work together in a sequence or parallel fashion. This allows for more complex workflows and information processing tasks.

|

||||

@@ -154,15 +154,15 @@ email_summarizer_task:

|

||||

Use the annotations to properly reference the agent and task in the crew.py file.

|

||||

|

||||

### Annotations include:

|

||||

-* @agent
-* @task
-* @crew
-* @llm
-* @tool
-* @callback
-* @output_json
-* @output_pydantic
-* @cache_handler
+* [@agent](https://github.com/crewAIInc/crewAI/blob/97d7bfb52ad49a9f04db360e1b6612d98c91971e/src/crewai/project/annotations.py#L17)
+* [@task](https://github.com/crewAIInc/crewAI/blob/97d7bfb52ad49a9f04db360e1b6612d98c91971e/src/crewai/project/annotations.py#L4)
+* [@crew](https://github.com/crewAIInc/crewAI/blob/97d7bfb52ad49a9f04db360e1b6612d98c91971e/src/crewai/project/annotations.py#L69)
+* [@llm](https://github.com/crewAIInc/crewAI/blob/97d7bfb52ad49a9f04db360e1b6612d98c91971e/src/crewai/project/annotations.py#L23)
+* [@tool](https://github.com/crewAIInc/crewAI/blob/97d7bfb52ad49a9f04db360e1b6612d98c91971e/src/crewai/project/annotations.py#L39)
+* [@callback](https://github.com/crewAIInc/crewAI/blob/97d7bfb52ad49a9f04db360e1b6612d98c91971e/src/crewai/project/annotations.py#L44)
+* [@output_json](https://github.com/crewAIInc/crewAI/blob/97d7bfb52ad49a9f04db360e1b6612d98c91971e/src/crewai/project/annotations.py#L29)
+* [@output_pydantic](https://github.com/crewAIInc/crewAI/blob/97d7bfb52ad49a9f04db360e1b6612d98c91971e/src/crewai/project/annotations.py#L34)
+* [@cache_handler](https://github.com/crewAIInc/crewAI/blob/97d7bfb52ad49a9f04db360e1b6612d98c91971e/src/crewai/project/annotations.py#L49)

|

||||

|

||||

crew.py

|

||||

```py

|

||||

@@ -191,8 +191,7 @@ To install the dependencies for your project, you can use Poetry. First, navigat

|

||||

|

||||

```shell

|

||||

$ cd my_project

|

||||

-$ poetry lock
-$ poetry install
+$ crewai install

|

||||

```

|

||||

|

||||

This will install the dependencies specified in the `pyproject.toml` file.

|

||||

@@ -233,11 +232,6 @@ To run your project, use the following command:

|

||||

```shell

|

||||

$ crewai run

|

||||

```

|

||||

-or
-```shell
-$ poetry run my_project
-```

|

||||

|

||||

This will initialize your crew of AI agents and begin task execution as defined in your configuration in the `main.py` file.

|

||||

|

||||

### Replay Tasks from Latest Crew Kickoff

|

||||

|

||||

@@ -88,7 +88,7 @@ There are a couple of different ways you can use HuggingFace to host your LLM.

|

||||

|

||||

### Your own HuggingFace endpoint

|

||||

```python

|

||||

-from langchain_huggingface import HuggingFaceEndpoint,
+from langchain_huggingface import HuggingFaceEndpoint

|

||||

|

||||

llm = HuggingFaceEndpoint(

|

||||

repo_id="microsoft/Phi-3-mini-4k-instruct",

|

||||

@@ -112,30 +112,30 @@ Switch between APIs and models seamlessly using environment variables, supportin

|

||||

### Configuration Examples

|

||||

#### FastChat

|

||||

```sh

|

||||

-os.environ[OPENAI_API_BASE]="http://localhost:8001/v1"
-os.environ[OPENAI_MODEL_NAME]='oh-2.5m7b-q51'
-os.environ[OPENAI_API_KEY]=NA
+os.environ["OPENAI_API_BASE"]='http://localhost:8001/v1'
+os.environ["OPENAI_MODEL_NAME"]='oh-2.5m7b-q51'
+os.environ[OPENAI_API_KEY]='NA'

|

||||

```

|

||||

|

||||

#### LM Studio

|

||||

Launch [LM Studio](https://lmstudio.ai) and go to the Server tab. Then select a model from the dropdown menu and wait for it to load. Once it's loaded, click the green Start Server button and use the URL, port, and API key that's shown (you can modify them). Below is an example of the default settings as of LM Studio 0.2.19:

|

||||

```sh

|

||||

-os.environ[OPENAI_API_BASE]="http://localhost:1234/v1"
-os.environ[OPENAI_API_KEY]="lm-studio"
+os.environ["OPENAI_API_BASE"]='http://localhost:1234/v1'
+os.environ["OPENAI_API_KEY"]='lm-studio'

|

||||

```

|

||||

|

||||

#### Groq API

|

||||

```sh

|

||||

-os.environ[OPENAI_API_KEY]=your-groq-api-key
-os.environ[OPENAI_MODEL_NAME]='llama3-8b-8192'
-os.environ[OPENAI_API_BASE]=https://api.groq.com/openai/v1
+os.environ["OPENAI_API_KEY"]='your-groq-api-key'
+os.environ["OPENAI_MODEL_NAME"]='llama3-8b-8192'
+os.environ["OPENAI_API_BASE"]='https://api.groq.com/openai/v1'

|

||||

```

|

||||

|

||||

#### Mistral API

|

||||

```sh

|

||||

-os.environ[OPENAI_API_KEY]=your-mistral-api-key
-os.environ[OPENAI_API_BASE]=https://api.mistral.ai/v1
-os.environ[OPENAI_MODEL_NAME]="mistral-small"
+os.environ["OPENAI_API_KEY"]='your-mistral-api-key'
+os.environ["OPENAI_API_BASE"]='https://api.mistral.ai/v1'
+os.environ["OPENAI_MODEL_NAME"]='mistral-small'

|

||||

```
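
The assignments in these configuration examples are Python statements (`os.environ[...] = ...`) even though the fences are tagged `sh`, and each key has to be a quoted string for the statement to run. As a rough sketch of the same idea, the helper below applies a named preset to an environment mapping. The preset values are copied from the examples above and the API keys are placeholders; `PROVIDER_PRESETS` and `configure_provider` are hypothetical names, not part of CrewAI.

```python
import os
from typing import Dict, MutableMapping, Optional

# Values copied from the configuration examples; API keys are placeholders.
PROVIDER_PRESETS: Dict[str, Dict[str, str]] = {
    "lm-studio": {
        "OPENAI_API_BASE": "http://localhost:1234/v1",
        "OPENAI_API_KEY": "lm-studio",
    },
    "groq": {
        "OPENAI_API_KEY": "your-groq-api-key",
        "OPENAI_MODEL_NAME": "llama3-8b-8192",
        "OPENAI_API_BASE": "https://api.groq.com/openai/v1",
    },
    "mistral": {
        "OPENAI_API_KEY": "your-mistral-api-key",
        "OPENAI_API_BASE": "https://api.mistral.ai/v1",
        "OPENAI_MODEL_NAME": "mistral-small",
    },
}


def configure_provider(name: str,
                       env: Optional[MutableMapping[str, str]] = None) -> Dict[str, str]:
    """Copy the preset for `name` into `env` (os.environ when env is None)."""
    target = os.environ if env is None else env
    if name not in PROVIDER_PRESETS:
        raise ValueError(f"unknown provider preset: {name!r}")
    preset = PROVIDER_PRESETS[name]
    target.update(preset)
    return dict(preset)


if __name__ == "__main__":
    # Use a plain dict here so the demo does not modify the real environment.
    scratch_env: Dict[str, str] = {}
    configure_provider("groq", scratch_env)
    for key in sorted(scratch_env):
        print(key)
```

Keeping the presets in one dictionary makes it harder to mix a key from one provider with a base URL from another.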

|

||||

|

||||

### Solar

|

||||

@@ -143,8 +143,8 @@ os.environ[OPENAI_MODEL_NAME]="mistral-small"

|

||||

from langchain_community.chat_models.solar import SolarChat

|

||||

```

|

||||

```sh

|

||||

-os.environ[SOLAR_API_BASE]="https://api.upstage.ai/v1/solar"
-os.environ[SOLAR_API_KEY]="your-solar-api-key"
+os.environ["SOLAR_API_BASE"]='https://api.upstage.ai/v1/solar'
+os.environ["SOLAR_API_KEY"]='your-solar-api-key'

|

||||

```

|

||||

|

||||

# Free developer API key available here: https://console.upstage.ai/services/solar

|

||||

@@ -155,7 +155,7 @@ os.environ[SOLAR_API_KEY]="your-solar-api-key"

|

||||

```python

|

||||

from langchain_cohere import ChatCohere

|

||||

# Initialize language model

|

||||

-os.environ["COHERE_API_KEY"] = "your-cohere-api-key"
+os.environ["COHERE_API_KEY"]='your-cohere-api-key'

|

||||

llm = ChatCohere()

|

||||

|

||||

# Free developer API key available here: https://cohere.com/

|

||||

@@ -166,10 +166,10 @@ llm = ChatCohere()

|

||||

For Azure OpenAI API integration, set the following environment variables:

|

||||

```sh

|

||||

|

||||

-os.environ[AZURE_OPENAI_DEPLOYMENT] = "Your deployment"
-os.environ["OPENAI_API_VERSION"] = "2023-12-01-preview"
-os.environ["AZURE_OPENAI_ENDPOINT"] = "Your Endpoint"
-os.environ["AZURE_OPENAI_API_KEY"] = "<Your API Key>"
+os.environ["AZURE_OPENAI_DEPLOYMENT"]='Your deployment'
+os.environ["OPENAI_API_VERSION"]='2023-12-01-preview'
+os.environ["AZURE_OPENAI_ENDPOINT"]='Your Endpoint'
+os.environ["AZURE_OPENAI_API_KEY"]='Your API Key'

|

||||

```

|

||||

|

||||

### Example Agent with Azure LLM

|

||||

@@ -194,4 +194,4 @@ azure_agent = Agent(

|

||||

```

|

||||

|

||||

## Conclusion

|

||||

-Integrating CrewAI with different LLMs expands the framework's versatility, allowing for customized, efficient AI solutions across various domains and platforms.
+Integrating CrewAI with different LLMs expands the framework's versatility, allowing for customized, efficient AI solutions across various domains and platforms.

|

||||

|

||||

@@ -8,13 +8,20 @@ Cutting-edge framework for orchestrating role-playing, autonomous AI agents. By

|

||||

<div style="width:25%">

|

||||

<h2>Getting Started</h2>

|

||||

<ul>

|

||||

<li><a href='./getting-started/Installing-CrewAI'>

|

||||

<li>

|

||||

<a href='./getting-started/Installing-CrewAI'>

|

||||

Installing CrewAI

|

||||

</a>

|

||||

</a>

|

||||

</li>

|

||||

<li><a href='./getting-started/Start-a-New-CrewAI-Project-Template-Method'>

|

||||

<li>

|

||||

<a href='./getting-started/Start-a-New-CrewAI-Project-Template-Method'>

|

||||

Start a New CrewAI Project: Template Method

|

||||

</a>

|

||||

</a>

|

||||

</li>

|

||||

<li>

|

||||

<a href='./getting-started/Create-a-New-CrewAI-Pipeline-Template-Method'>

|

||||

Create a New CrewAI Pipeline: Template Method

|

||||

</a>

|

||||

</li>

|

||||

</ul>

|

||||

</div>

|

||||

|

||||

@@ -27,10 +27,10 @@ If needed you can also tweak the parameters of the DALL-E model by passing them

|

||||

```python

|

||||

from crewai_tools import DallETool

|

||||

|

||||

-dalle_tool = DallETool(model: str = "dall-e-3",
-size: str = "1024x1024",
-quality: str = "standard",
-n: int = 1)
+dalle_tool = DallETool(model="dall-e-3",
+size="1024x1024",
+quality="standard",
+n=1)

|

||||

|

||||

Agent(

|

||||

...

|

||||

@@ -38,4 +38,4 @@ Agent(

|

||||

)

|

||||

```

|

||||

|

||||

-The parameter are based on the `client.images.generate` method from the OpenAI API. For more information on the parameters, please refer to the [OpenAI API documentation](https://platform.openai.com/docs/guides/images/introduction?lang=python).
+The parameters are based on the `client.images.generate` method from the OpenAI API. For more information on the parameters, please refer to the [OpenAI API documentation](https://platform.openai.com/docs/guides/images/introduction?lang=python).

|

||||

|

||||

33

docs/tools/FileWriteTool.md

Normal file


@@ -0,0 +1,33 @@

|

||||

# FileWriterTool Documentation

|

||||

|

||||

## Description

|

||||

The `FileWriterTool` is a component of the crewai_tools package, designed to simplify the process of writing content to files. It is particularly useful in scenarios such as generating reports, saving logs, creating configuration files, and more. This tool supports creating new directories if they don't exist, making it easier to organize your output.

|

||||

|

||||

## Installation

|

||||

Install the crewai_tools package to use the `FileWriterTool` in your projects:

|

||||

|

||||

```shell

|

||||

pip install 'crewai[tools]'

|

||||

```

|

||||

|

||||

## Example

|

||||

To get started with the `FileWriterTool`:

|

||||

|

||||

```python

|

||||

from crewai_tools import FileWriterTool

|

||||

|

||||

# Initialize the tool

|

||||

file_writer_tool = FileWriterTool()

|

||||

|

||||

# Write content to a file in a specified directory

|

||||

result = file_writer_tool._run('example.txt', 'This is a test content.', 'test_directory')

|

||||

print(result)

|

||||

```

|

||||

|

||||

## Arguments

|

||||

- `filename`: The name of the file you want to create or overwrite.

|

||||

- `content`: The content to write into the file.

|

||||

- `directory` (optional): The path to the directory where the file will be created. Defaults to the current directory (`.`). If the directory does not exist, it will be created.
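The behavior described by these arguments can be approximated with the standard library alone. The sketch below is not the `FileWriterTool` implementation. `write_file` is a hypothetical helper, and it assumes that a missing directory is created and that an existing file is overwritten, as the argument descriptions suggest.

```python
import os
import tempfile


def write_file(filename: str, content: str, directory: str = ".") -> str:
    """Hypothetical stand-in that mirrors the documented arguments."""
    # Create the target directory (and any parents) if it does not exist yet.
    os.makedirs(directory, exist_ok=True)
    path = os.path.join(directory, filename)
    # Mode "w" creates the file or overwrites an existing one.
    with open(path, "w", encoding="utf-8") as handle:
        handle.write(content)
    return path


if __name__ == "__main__":
    # Write into a throwaway location so the example leaves nothing behind.
    with tempfile.TemporaryDirectory() as base:
        target = os.path.join(base, "test_directory")
        print(write_file("example.txt", "This is a test content.", target))
```

Returning the final path is a choice made for this sketch only; the real tool may report its result differently.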

|

||||

|

||||

## Conclusion

|

||||

By integrating the `FileWriterTool` into your crews, agents can write content to files and create directories as needed. This is useful for tasks that save output data or build structured file layouts. Following the setup and usage guidelines above makes it straightforward to incorporate the tool into projects.

|

||||

@@ -47,8 +47,8 @@ The primary task goal was:

|

||||

|

||||

The Agent tried to get information from the DB. The first attempt was wrong, so the Agent tried again, got the correct information, and passed it to the next agent.


The second task goal was:

|

||||

@@ -58,11 +58,11 @@ Include information on the average, maximum, and minimum monthly revenue for eac

|

||||

|

||||

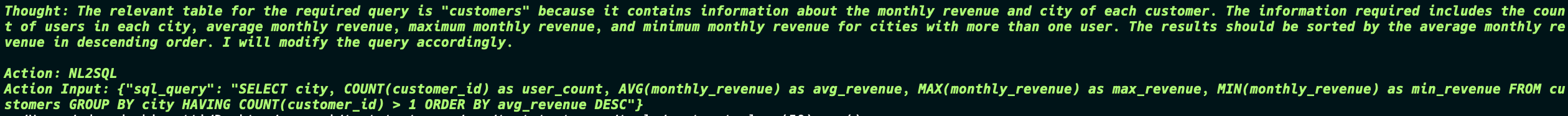

Now things start to get interesting: the Agent generates the SQL query not only to create the table but also to insert the data into it. In the end, the Agent still returns the final report, which matches exactly what was in the database.


This is a simple example of how the NL2SQLTool can be used to interact with the database and generate reports based on the data in the database.

|

||||

|

||||

81

docs/tools/SpiderTool.md

Normal file

@@ -0,0 +1,81 @@

|

||||

# SpiderTool

|

||||

|

||||

## Description

|

||||

|

||||

[Spider](https://spider.cloud/?ref=crewai) is the [fastest](https://github.com/spider-rs/spider/blob/main/benches/BENCHMARKS.md#benchmark-results) open source scraper and crawler that returns LLM-ready data. It converts any website into pure HTML, markdown, metadata or text while enabling you to crawl with custom actions using AI.

|

||||

|

||||

## Installation

|

||||

|

||||

To use the Spider API, install the [Spider SDK](https://pypi.org/project/spider-client/) along with the `crewai[tools]` package:

|

||||

|

||||

```shell

|

||||

pip install spider-client 'crewai[tools]'

|

||||

```

|

||||

|

||||

## Example

|

||||

|

||||

This example shows how to use the Spider tool to let your agent scrape and crawl websites. The data returned from the Spider API is already LLM-ready, so no additional cleaning is needed.

|

||||

|

||||

```python

|

||||

from crewai import Agent, Crew, Task
from crewai_tools import SpiderTool

|

||||

|

||||

def main():

|

||||

spider_tool = SpiderTool()

|

||||

|

||||

searcher = Agent(

|

||||

role="Web Research Expert",

|

||||

goal="Find related information from specific URLs",

|

||||

backstory="An expert web researcher that uses the web extremely well",

|

||||

tools=[spider_tool],

|

||||

verbose=True,

|

||||

)

|

||||

|

||||

return_metadata = Task(

|

||||

description="Scrape https://spider.cloud with a limit of 1 and enable metadata",

|

||||

expected_output="Metadata and 10 word summary of spider.cloud",

|

||||

agent=searcher

|

||||

)

|

||||

|

||||

crew = Crew(

|

||||

agents=[searcher],

|

||||

tasks=[

|

||||

return_metadata,

|

||||

],

|

||||

verbose=2

|

||||

)

|

||||

|

||||

crew.kickoff()

|

||||

|

||||

if __name__ == "__main__":

|

||||

main()

|

||||

```

|

||||

|

||||

## Arguments

|

||||

|

||||

- `api_key` (string, optional): Specifies the Spider API key. If not specified, the tool looks for `SPIDER_API_KEY` in the environment variables.

|

||||

- `params` (object, optional): Optional parameters for the request. Defaults to `{"return_format": "markdown"}` to return the website's content in a format that fits LLMs better.

|

||||

- `request` (string): The request type to perform. Possible values are `http`, `chrome`, and `smart`. Use `smart` to perform an HTTP request by default until JavaScript rendering is needed for the HTML.

|

||||

- `limit` (int): The maximum number of pages allowed to crawl per website. Remove the value or set it to `0` to crawl all pages.

|

||||

- `depth` (int): The crawl limit for maximum depth. If `0`, no limit will be applied.

|

||||

- `cache` (bool): Use HTTP caching for the crawl to speed up repeated runs. Default is `true`.

|

||||

- `budget` (object): An object that maps paths to a counter limiting the number of pages crawled, for example `{"*":1}` to crawl only the root page.

|

||||

- `locale` (string): The locale to use for the request, for example `en-US`.

|

||||

- `cookies` (string): Add HTTP cookies to use for the request.

|

||||

- `stealth` (bool): Use stealth mode for headless Chrome requests to help prevent being blocked. The default is `true` on Chrome.

|

||||

- `headers` (object): Forward HTTP headers to use for all requests. The object is expected to be a map of key-value pairs.

|

||||

- `metadata` (bool): Whether to store metadata about the pages and content found. This could help improve AI interoperability. Defaults to `false` unless you have the website already stored with the configuration enabled.

|

||||

- `viewport` (object): Configure the viewport for chrome. Defaults to `800x600`.

|

||||

- `encoding` (string): The type of encoding to use, such as `UTF-8` or `SHIFT_JIS`.

|

||||

- `subdomains` (bool): Allow subdomains to be included. Default is `false`.

|

||||

- `user_agent` (string): Add a custom HTTP user agent to the request. By default this is set to a random agent.

|

||||

- `store_data` (bool): Whether storage should be used. If set, this takes precedence over `storageless`. Defaults to `false`.

|

||||

- `gpt_config` (object): Use AI to generate actions to perform during the crawl. You can pass an array for the `"prompt"` to chain steps.

|

||||

- `fingerprint` (bool): Use advanced fingerprint for chrome.

|

||||

- `storageless` (bool): Boolean to prevent storing any type of data for the request including storage and AI vectors embedding. Defaults to `false` unless you have the website already stored.

|

||||

- `readability` (bool): Use [readability](https://github.com/mozilla/readability) to pre-process the content for reading. This may drastically improve the content for LLM usage.

|

||||

- `return_format` (string): The format to return the data in. Possible values are `markdown`, `raw`, `text`, and `html2text`. Use `raw` to return the default format of the page, such as HTML.

|

||||

- `proxy_enabled` (bool): Enable high performance premium proxies for the request to prevent being blocked at the network level.

|

||||

- `query_selector` (string): The CSS query selector to use when extracting content from the markup.

|

||||

- `full_resources` (bool): Crawl and download all the resources for a website.

|

||||

- `request_timeout` (int): The timeout to use for the request, in seconds. Valid values range from `5` to `60`. The default is `30`.

|

||||

- `run_in_background` (bool): Run the request in the background. Useful if storing data and wanting to trigger crawls to the dashboard. This has no effect if storageless is set.
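Several of these options are typically combined into a single `params` dictionary. The sketch below only builds and checks such a dictionary. `build_spider_params` is a hypothetical helper and is not part of `crewai_tools` or the Spider SDK. Its validation rules are assumptions drawn from the descriptions above: the default `return_format` of `markdown`, the listed `request` types, and a `request_timeout` between 5 and 60 seconds.

```python
from typing import Any, Dict

# Allowed values as listed in the argument descriptions above.
ALLOWED_FORMATS = {"markdown", "raw", "text", "html2text"}
ALLOWED_REQUESTS = {"http", "chrome", "smart"}


def build_spider_params(**overrides: Any) -> Dict[str, Any]:
    """Hypothetical helper: merge overrides onto the documented default."""
    params: Dict[str, Any] = {"return_format": "markdown"}
    params.update(overrides)

    if params["return_format"] not in ALLOWED_FORMATS:
        raise ValueError(f"unsupported return_format: {params['return_format']!r}")
    if "request" in params and params["request"] not in ALLOWED_REQUESTS:
        raise ValueError(f"unsupported request type: {params['request']!r}")

    timeout = params.get("request_timeout")
    if timeout is not None and not 5 <= timeout <= 60:
        raise ValueError("request_timeout must be between 5 and 60 seconds")
    return params


if __name__ == "__main__":
    print(build_spider_params(limit=1, metadata=True, request="smart"))
```

The resulting dictionary could then be supplied wherever the tool accepts the `params` argument. Which keys are honored depends on the installed Spider SDK version, so treat the checks here as illustrative.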

|

||||

41

mkdocs.yml

@@ -129,6 +129,7 @@ nav:

|

||||

- Processes: 'core-concepts/Processes.md'

|

||||

- Crews: 'core-concepts/Crews.md'

|

||||

- Collaboration: 'core-concepts/Collaboration.md'

|

||||

- Pipeline: 'core-concepts/Pipeline.md'

|

||||

- Training: 'core-concepts/Training-Crew.md'

|

||||

- Memory: 'core-concepts/Memory.md'

|

||||

- Planning: 'core-concepts/Planning.md'

|

||||

@@ -152,36 +153,38 @@ nav:

|

||||

- Agent Monitoring with AgentOps: 'how-to/AgentOps-Observability.md'

|

||||

- Agent Monitoring with LangTrace: 'how-to/Langtrace-Observability.md'

|

||||

- Tools Docs:

|

||||

- Firecrawl Scrape Website Tool: 'tools/FirecrawlScrapeWebsiteTool.md'

|

||||

- Firecrawl Crawl Website Tool: 'tools/FirecrawlCrawlWebsiteTool.md'

|

||||

- Firecrawl Search Tool: 'tools/FirecrawlSearchTool.md'

|

||||

- Google Serper Search: 'tools/SerperDevTool.md'

|

||||

- Browserbase Web Loader: 'tools/BrowserbaseLoadTool.md'

|

||||

- Composio Tools: 'tools/ComposioTool.md'

|

||||

- Code Docs RAG Search: 'tools/CodeDocsSearchTool.md'

|

||||

- Code Interpreter: 'tools/CodeInterpreterTool.md'

|

||||

- Scrape Website: 'tools/ScrapeWebsiteTool.md'

|

||||

- Directory Read: 'tools/DirectoryReadTool.md'

|

||||

- Exa Serch Web Loader: 'tools/EXASearchTool.md'

|

||||

- File Read: 'tools/FileReadTool.md'

|

||||

- Selenium Scraper: 'tools/SeleniumScrapingTool.md'

|

||||

- Directory RAG Search: 'tools/DirectorySearchTool.md'

|

||||

- DALL-E Tool: 'tools/DALL-ETool.md'

|

||||

- PDF RAG Search: 'tools/PDFSearchTool.md'

|

||||

- TXT RAG Search: 'tools/TXTSearchTool.md'

|

||||

- Composio Tools: 'tools/ComposioTool.md'

|

||||

- CSV RAG Search: 'tools/CSVSearchTool.md'

|

||||

- XML RAG Search: 'tools/XMLSearchTool.md'

|

||||

- JSON RAG Search: 'tools/JSONSearchTool.md'

|

||||

- DALL-E Tool: 'tools/DALL-ETool.md'

|

||||

- Directory RAG Search: 'tools/DirectorySearchTool.md'

|

||||

- Directory Read: 'tools/DirectoryReadTool.md'

|

||||

- Docx Rag Search: 'tools/DOCXSearchTool.md'

|

||||

- EXA Search Web Loader: 'tools/EXASearchTool.md'

|

||||

- File Read: 'tools/FileReadTool.md'

|

||||

- File Write: 'tools/FileWriteTool.md'

|

||||

- Firecrawl Crawl Website Tool: 'tools/FirecrawlCrawlWebsiteTool.md'

|

||||

- Firecrawl Scrape Website Tool: 'tools/FirecrawlScrapeWebsiteTool.md'

|

||||

- Firecrawl Search Tool: 'tools/FirecrawlSearchTool.md'

|

||||

- Github RAG Search: 'tools/GitHubSearchTool.md'

|

||||

- Google Serper Search: 'tools/SerperDevTool.md'

|

||||

- JSON RAG Search: 'tools/JSONSearchTool.md'

|

||||

- MDX RAG Search: 'tools/MDXSearchTool.md'

|

||||

- MySQL Tool: 'tools/MySQLTool.md'

|

||||

- NL2SQL Tool: 'tools/NL2SQLTool.md'

|

||||

- PDF RAG Search: 'tools/PDFSearchTool.md'

|

||||

- PG RAG Search: 'tools/PGSearchTool.md'

|

||||

- Scrape Website: 'tools/ScrapeWebsiteTool.md'

|

||||

- Selenium Scraper: 'tools/SeleniumScrapingTool.md'

|

||||

- Spider Scraper: 'tools/SpiderTool.md'

|

||||

- TXT RAG Search: 'tools/TXTSearchTool.md'

|

||||

- Vision Tool: 'tools/VisionTool.md'

|

||||

- Website RAG Search: 'tools/WebsiteSearchTool.md'

|

||||

- Github RAG Search: 'tools/GitHubSearchTool.md'

|

||||

- Code Docs RAG Search: 'tools/CodeDocsSearchTool.md'

|

||||

- Youtube Video RAG Search: 'tools/YoutubeVideoSearchTool.md'

|

||||

- XML RAG Search: 'tools/XMLSearchTool.md'

|

||||

- Youtube Channel RAG Search: 'tools/YoutubeChannelSearchTool.md'

|

||||

- Youtube Video RAG Search: 'tools/YoutubeVideoSearchTool.md'

|

||||

- Examples:

|

||||

- Trip Planner Crew: https://github.com/joaomdmoura/crewAI-examples/tree/main/trip_planner

|

||||

- Create Instagram Post: https://github.com/joaomdmoura/crewAI-examples/tree/main/instagram_post

|

||||

|

||||

174

poetry.lock

generated

@@ -1,4 +1,4 @@

|

||||

# This file is automatically @generated by Poetry 1.7.1 and should not be changed by hand.

|

||||

# This file is automatically @generated by Poetry 1.8.3 and should not be changed by hand.

|

||||

|

||||

[[package]]

|

||||

name = "agentops"

|

||||

@@ -253,6 +253,24 @@ docs = ["cogapp", "furo", "myst-parser", "sphinx", "sphinx-notfound-page", "sphi

|

||||

tests = ["cloudpickle", "hypothesis", "mypy (>=1.11.1)", "pympler", "pytest (>=4.3.0)", "pytest-mypy-plugins", "pytest-xdist[psutil]"]

|

||||

tests-mypy = ["mypy (>=1.11.1)", "pytest-mypy-plugins"]

|

||||

|

||||

[[package]]

|

||||

name = "auth0-python"

|

||||

version = "4.7.1"

|

||||

description = ""

|

||||

optional = false

|

||||

python-versions = ">=3.8"

|

||||

files = [

|

||||

{file = "auth0_python-4.7.1-py3-none-any.whl", hash = "sha256:5bdbefd582171f398c2b686a19fb5e241a2fa267929519a0c02e33e5932fa7b8"},

|

||||

{file = "auth0_python-4.7.1.tar.gz", hash = "sha256:5cf8be11aa807d54e19271a990eb92bea1863824e4863c7fc8493c6f15a597f1"},

|

||||

]

|

||||

|

||||

[package.dependencies]

|

||||

aiohttp = ">=3.8.5,<4.0.0"

|

||||

cryptography = ">=42.0.4,<43.0.0"

|

||||

pyjwt = ">=2.8.0,<3.0.0"

|

||||

requests = ">=2.31.0,<3.0.0"

|

||||

urllib3 = ">=2.0.7,<3.0.0"

|

||||

|

||||

[[package]]

|

||||

name = "autoflake"

|

||||

version = "2.3.1"

|

||||

@@ -829,29 +847,81 @@ name = "crewai-tools"

|

||||

version = "0.8.3"

|

||||

description = "Set of tools for the crewAI framework"

|

||||

optional = false

|

||||

python-versions = ">=3.10,<=3.13"

|

||||

files = []

|

||||

develop = false

|

||||

python-versions = "<=3.13,>=3.10"

|

||||

files = [

|

||||

{file = "crewai_tools-0.8.3-py3-none-any.whl", hash = "sha256:a54a10c36b8403250e13d6594bd37db7e7deb3f9fabc77e8720c081864ae6189"},

|

||||

{file = "crewai_tools-0.8.3.tar.gz", hash = "sha256:f0317ea1d926221b22fcf4b816d71916fe870aa66ed7ee2a0067dba42b5634eb"},

|

||||

]

|

||||

|

||||

[package.dependencies]

|

||||

beautifulsoup4 = "^4.12.3"

|

||||

chromadb = "^0.4.22"

|

||||

docker = "^7.1.0"

|

||||

docx2txt = "^0.8"

|

||||

embedchain = "^0.1.114"

|

||||

lancedb = "^0.5.4"

|

||||

beautifulsoup4 = ">=4.12.3,<5.0.0"

|

||||

chromadb = ">=0.4.22,<0.5.0"

|

||||

docker = ">=7.1.0,<8.0.0"

|

||||

docx2txt = ">=0.8,<0.9"

|

||||

embedchain = ">=0.1.114,<0.2.0"

|

||||

lancedb = ">=0.5.4,<0.6.0"

|

||||

langchain = ">0.2,<=0.3"

|

||||

openai = "^1.12.0"

|

||||

pydantic = "^2.6.1"

|

||||

pyright = "^1.1.350"

|

||||

pytest = "^8.0.0"

|

||||

pytube = "^15.0.0"

|

||||

requests = "^2.31.0"

|

||||

selenium = "^4.18.1"

|

||||

openai = ">=1.12.0,<2.0.0"

|

||||

pydantic = ">=2.6.1,<3.0.0"

|

||||

pyright = ">=1.1.350,<2.0.0"

|

||||

pytest = ">=8.0.0,<9.0.0"

|

||||

pytube = ">=15.0.0,<16.0.0"

|

||||

requests = ">=2.31.0,<3.0.0"

|

||||

selenium = ">=4.18.1,<5.0.0"

|

||||

|

||||

[package.source]

|

||||

type = "directory"

|

||||

url = "../crewai-tools"

|

||||

[[package]]

|

||||

name = "cryptography"

|

||||

version = "42.0.8"

|

||||

description = "cryptography is a package which provides cryptographic recipes and primitives to Python developers."

|

||||

optional = false

|

||||

python-versions = ">=3.7"

|

||||

files = [

|

||||

{file = "cryptography-42.0.8-cp37-abi3-macosx_10_12_universal2.whl", hash = "sha256:81d8a521705787afe7a18d5bfb47ea9d9cc068206270aad0b96a725022e18d2e"},

|

||||

{file = "cryptography-42.0.8-cp37-abi3-macosx_10_12_x86_64.whl", hash = "sha256:961e61cefdcb06e0c6d7e3a1b22ebe8b996eb2bf50614e89384be54c48c6b63d"},

|

||||

{file = "cryptography-42.0.8-cp37-abi3-manylinux_2_17_aarch64.manylinux2014_aarch64.whl", hash = "sha256:e3ec3672626e1b9e55afd0df6d774ff0e953452886e06e0f1eb7eb0c832e8902"},

|

||||

{file = "cryptography-42.0.8-cp37-abi3-manylinux_2_17_x86_64.manylinux2014_x86_64.whl", hash = "sha256:e599b53fd95357d92304510fb7bda8523ed1f79ca98dce2f43c115950aa78801"},

|

||||

{file = "cryptography-42.0.8-cp37-abi3-manylinux_2_28_aarch64.whl", hash = "sha256:5226d5d21ab681f432a9c1cf8b658c0cb02533eece706b155e5fbd8a0cdd3949"},

|

||||

{file = "cryptography-42.0.8-cp37-abi3-manylinux_2_28_x86_64.whl", hash = "sha256:6b7c4f03ce01afd3b76cf69a5455caa9cfa3de8c8f493e0d3ab7d20611c8dae9"},

|

||||

{file = "cryptography-42.0.8-cp37-abi3-musllinux_1_1_aarch64.whl", hash = "sha256:2346b911eb349ab547076f47f2e035fc8ff2c02380a7cbbf8d87114fa0f1c583"},

|

||||

{file = "cryptography-42.0.8-cp37-abi3-musllinux_1_1_x86_64.whl", hash = "sha256:ad803773e9df0b92e0a817d22fd8a3675493f690b96130a5e24f1b8fabbea9c7"},

|

||||

{file = "cryptography-42.0.8-cp37-abi3-musllinux_1_2_aarch64.whl", hash = "sha256:2f66d9cd9147ee495a8374a45ca445819f8929a3efcd2e3df6428e46c3cbb10b"},

|

||||

{file = "cryptography-42.0.8-cp37-abi3-musllinux_1_2_x86_64.whl", hash = "sha256:d45b940883a03e19e944456a558b67a41160e367a719833c53de6911cabba2b7"},

|

||||

{file = "cryptography-42.0.8-cp37-abi3-win32.whl", hash = "sha256:a0c5b2b0585b6af82d7e385f55a8bc568abff8923af147ee3c07bd8b42cda8b2"},

|

||||

{file = "cryptography-42.0.8-cp37-abi3-win_amd64.whl", hash = "sha256:57080dee41209e556a9a4ce60d229244f7a66ef52750f813bfbe18959770cfba"},

|

||||

{file = "cryptography-42.0.8-cp39-abi3-macosx_10_12_universal2.whl", hash = "sha256:dea567d1b0e8bc5764b9443858b673b734100c2871dc93163f58c46a97a83d28"},

|

||||

{file = "cryptography-42.0.8-cp39-abi3-manylinux_2_17_aarch64.manylinux2014_aarch64.whl", hash = "sha256:c4783183f7cb757b73b2ae9aed6599b96338eb957233c58ca8f49a49cc32fd5e"},

|

||||

{file = "cryptography-42.0.8-cp39-abi3-manylinux_2_17_x86_64.manylinux2014_x86_64.whl", hash = "sha256:a0608251135d0e03111152e41f0cc2392d1e74e35703960d4190b2e0f4ca9c70"},

|

||||

{file = "cryptography-42.0.8-cp39-abi3-manylinux_2_28_aarch64.whl", hash = "sha256:dc0fdf6787f37b1c6b08e6dfc892d9d068b5bdb671198c72072828b80bd5fe4c"},

|

||||

{file = "cryptography-42.0.8-cp39-abi3-manylinux_2_28_x86_64.whl", hash = "sha256:9c0c1716c8447ee7dbf08d6db2e5c41c688544c61074b54fc4564196f55c25a7"},

|

||||

{file = "cryptography-42.0.8-cp39-abi3-musllinux_1_1_aarch64.whl", hash = "sha256:fff12c88a672ab9c9c1cf7b0c80e3ad9e2ebd9d828d955c126be4fd3e5578c9e"},

|

||||

{file = "cryptography-42.0.8-cp39-abi3-musllinux_1_1_x86_64.whl", hash = "sha256:cafb92b2bc622cd1aa6a1dce4b93307792633f4c5fe1f46c6b97cf67073ec961"},

|

||||

{file = "cryptography-42.0.8-cp39-abi3-musllinux_1_2_aarch64.whl", hash = "sha256:31f721658a29331f895a5a54e7e82075554ccfb8b163a18719d342f5ffe5ecb1"},

|

||||

{file = "cryptography-42.0.8-cp39-abi3-musllinux_1_2_x86_64.whl", hash = "sha256:b297f90c5723d04bcc8265fc2a0f86d4ea2e0f7ab4b6994459548d3a6b992a14"},

|

||||

{file = "cryptography-42.0.8-cp39-abi3-win32.whl", hash = "sha256:2f88d197e66c65be5e42cd72e5c18afbfae3f741742070e3019ac8f4ac57262c"},

|

||||

{file = "cryptography-42.0.8-cp39-abi3-win_amd64.whl", hash = "sha256:fa76fbb7596cc5839320000cdd5d0955313696d9511debab7ee7278fc8b5c84a"},

|

||||

{file = "cryptography-42.0.8-pp310-pypy310_pp73-macosx_10_12_x86_64.whl", hash = "sha256:ba4f0a211697362e89ad822e667d8d340b4d8d55fae72cdd619389fb5912eefe"},

|

||||

{file = "cryptography-42.0.8-pp310-pypy310_pp73-manylinux_2_28_aarch64.whl", hash = "sha256:81884c4d096c272f00aeb1f11cf62ccd39763581645b0812e99a91505fa48e0c"},

|

||||

{file = "cryptography-42.0.8-pp310-pypy310_pp73-manylinux_2_28_x86_64.whl", hash = "sha256:c9bb2ae11bfbab395bdd072985abde58ea9860ed84e59dbc0463a5d0159f5b71"},

|

||||

{file = "cryptography-42.0.8-pp310-pypy310_pp73-win_amd64.whl", hash = "sha256:7016f837e15b0a1c119d27ecd89b3515f01f90a8615ed5e9427e30d9cdbfed3d"},

|

||||

{file = "cryptography-42.0.8-pp39-pypy39_pp73-macosx_10_12_x86_64.whl", hash = "sha256:5a94eccb2a81a309806027e1670a358b99b8fe8bfe9f8d329f27d72c094dde8c"},

|

||||

{file = "cryptography-42.0.8-pp39-pypy39_pp73-manylinux_2_28_aarch64.whl", hash = "sha256:dec9b018df185f08483f294cae6ccac29e7a6e0678996587363dc352dc65c842"},

|

||||

{file = "cryptography-42.0.8-pp39-pypy39_pp73-manylinux_2_28_x86_64.whl", hash = "sha256:343728aac38decfdeecf55ecab3264b015be68fc2816ca800db649607aeee648"},

|

||||

{file = "cryptography-42.0.8-pp39-pypy39_pp73-win_amd64.whl", hash = "sha256:013629ae70b40af70c9a7a5db40abe5d9054e6f4380e50ce769947b73bf3caad"},

|

||||

{file = "cryptography-42.0.8.tar.gz", hash = "sha256:8d09d05439ce7baa8e9e95b07ec5b6c886f548deb7e0f69ef25f64b3bce842f2"},

|

||||

]

|

||||

|

||||

[package.dependencies]

|

||||

cffi = {version = ">=1.12", markers = "platform_python_implementation != \"PyPy\""}

|

||||

|

||||

[package.extras]

|

||||

docs = ["sphinx (>=5.3.0)", "sphinx-rtd-theme (>=1.1.1)"]

|

||||

docstest = ["pyenchant (>=1.6.11)", "readme-renderer", "sphinxcontrib-spelling (>=4.0.1)"]

|

||||

nox = ["nox"]

|

||||

pep8test = ["check-sdist", "click", "mypy", "ruff"]

|

||||

sdist = ["build"]

|

||||

ssh = ["bcrypt (>=3.1.5)"]

|

||||

test = ["certifi", "pretend", "pytest (>=6.2.0)", "pytest-benchmark", "pytest-cov", "pytest-xdist"]

|

||||

test-randomorder = ["pytest-randomly"]

|

||||

|

||||

[[package]]

|

||||

name = "cssselect2"

|

||||

@@ -1321,12 +1391,12 @@ files = [

|

||||

google-auth = ">=2.14.1,<3.0.dev0"

|

||||

googleapis-common-protos = ">=1.56.2,<2.0.dev0"

|

||||

grpcio = [

|

||||

{version = ">=1.49.1,<2.0dev", optional = true, markers = "python_version >= \"3.11\" and extra == \"grpc\""},

|

||||

{version = ">=1.33.2,<2.0dev", optional = true, markers = "python_version < \"3.11\" and extra == \"grpc\""},

|

||||

{version = ">=1.49.1,<2.0dev", optional = true, markers = "python_version >= \"3.11\" and extra == \"grpc\""},

|

||||

]

|

||||

grpcio-status = [

|

||||

{version = ">=1.49.1,<2.0.dev0", optional = true, markers = "python_version >= \"3.11\" and extra == \"grpc\""},

|

||||

{version = ">=1.33.2,<2.0.dev0", optional = true, markers = "python_version < \"3.11\" and extra == \"grpc\""},

|

||||

{version = ">=1.49.1,<2.0.dev0", optional = true, markers = "python_version >= \"3.11\" and extra == \"grpc\""},

|

||||

]

|

||||

proto-plus = ">=1.22.3,<2.0.0dev"

|

||||

protobuf = ">=3.19.5,<3.20.0 || >3.20.0,<3.20.1 || >3.20.1,<4.21.0 || >4.21.0,<4.21.1 || >4.21.1,<4.21.2 || >4.21.2,<4.21.3 || >4.21.3,<4.21.4 || >4.21.4,<4.21.5 || >4.21.5,<6.0.0.dev0"

|

||||

@@ -3628,8 +3698,8 @@ files = [

|

||||

|

||||

[package.dependencies]

|

||||

numpy = [

|

||||

{version = ">=1.23.2", markers = "python_version == \"3.11\""},

|

||||

{version = ">=1.22.4", markers = "python_version < \"3.11\""},

|

||||

{version = ">=1.23.2", markers = "python_version == \"3.11\""},

|

||||

{version = ">=1.26.0", markers = "python_version >= \"3.12\""},

|

||||

]

|

||||

python-dateutil = ">=2.8.2"

|

||||

@@ -4027,6 +4097,19 @@ files = [

|

||||

{file = "pyarrow-17.0.0-cp312-cp312-win_amd64.whl", hash = "sha256:392bc9feabc647338e6c89267635e111d71edad5fcffba204425a7c8d13610d7"},

|

||||

{file = "pyarrow-17.0.0-cp38-cp38-macosx_10_15_x86_64.whl", hash = "sha256:af5ff82a04b2171415f1410cff7ebb79861afc5dae50be73ce06d6e870615204"},

|

||||

{file = "pyarrow-17.0.0-cp38-cp38-macosx_11_0_arm64.whl", hash = "sha256:edca18eaca89cd6382dfbcff3dd2d87633433043650c07375d095cd3517561d8"},

|

||||

{file = "pyarrow-17.0.0-cp38-cp38-manylinux_2_17_aarch64.manylinux2014_aarch64.whl", hash = "sha256:7c7916bff914ac5d4a8fe25b7a25e432ff921e72f6f2b7547d1e325c1ad9d155"},

|

||||

{file = "pyarrow-17.0.0-cp38-cp38-manylinux_2_17_x86_64.manylinux2014_x86_64.whl", hash = "sha256:f553ca691b9e94b202ff741bdd40f6ccb70cdd5fbf65c187af132f1317de6145"},

|

||||

{file = "pyarrow-17.0.0-cp38-cp38-manylinux_2_28_aarch64.whl", hash = "sha256:0cdb0e627c86c373205a2f94a510ac4376fdc523f8bb36beab2e7f204416163c"},

|

||||

{file = "pyarrow-17.0.0-cp38-cp38-manylinux_2_28_x86_64.whl", hash = "sha256:d7d192305d9d8bc9082d10f361fc70a73590a4c65cf31c3e6926cd72b76bc35c"},

|

||||

{file = "pyarrow-17.0.0-cp38-cp38-win_amd64.whl", hash = "sha256:02dae06ce212d8b3244dd3e7d12d9c4d3046945a5933d28026598e9dbbda1fca"},

|

||||

{file = "pyarrow-17.0.0-cp39-cp39-macosx_10_15_x86_64.whl", hash = "sha256:13d7a460b412f31e4c0efa1148e1d29bdf18ad1411eb6757d38f8fbdcc8645fb"},

|

||||

{file = "pyarrow-17.0.0-cp39-cp39-macosx_11_0_arm64.whl", hash = "sha256:9b564a51fbccfab5a04a80453e5ac6c9954a9c5ef2890d1bcf63741909c3f8df"},

|

||||

{file = "pyarrow-17.0.0-cp39-cp39-manylinux_2_17_aarch64.manylinux2014_aarch64.whl", hash = "sha256:32503827abbc5aadedfa235f5ece8c4f8f8b0a3cf01066bc8d29de7539532687"},

|

||||

{file = "pyarrow-17.0.0-cp39-cp39-manylinux_2_17_x86_64.manylinux2014_x86_64.whl", hash = "sha256:a155acc7f154b9ffcc85497509bcd0d43efb80d6f733b0dc3bb14e281f131c8b"},

|

||||

{file = "pyarrow-17.0.0-cp39-cp39-manylinux_2_28_aarch64.whl", hash = "sha256:dec8d129254d0188a49f8a1fc99e0560dc1b85f60af729f47de4046015f9b0a5"},

|

||||

{file = "pyarrow-17.0.0-cp39-cp39-manylinux_2_28_x86_64.whl", hash = "sha256:a48ddf5c3c6a6c505904545c25a4ae13646ae1f8ba703c4df4a1bfe4f4006bda"},

|

||||

{file = "pyarrow-17.0.0-cp39-cp39-win_amd64.whl", hash = "sha256:42bf93249a083aca230ba7e2786c5f673507fa97bbd9725a1e2754715151a204"},

|

||||

{file = "pyarrow-17.0.0.tar.gz", hash = "sha256:4beca9521ed2c0921c1023e68d097d0299b62c362639ea315572a58f3f50fd28"},

|

||||

]

|

||||

|

||||

[package.dependencies]

|

||||

@@ -4219,6 +4302,23 @@ files = [

|

||||

[package.extras]

|

||||

windows-terminal = ["colorama (>=0.4.6)"]

|

||||

|

||||

[[package]]

|

||||

name = "pyjwt"

|

||||

version = "2.9.0"

|

||||

description = "JSON Web Token implementation in Python"

|

||||

optional = false

|

||||

python-versions = ">=3.8"

|

||||

files = [

|

||||

{file = "PyJWT-2.9.0-py3-none-any.whl", hash = "sha256:3b02fb0f44517787776cf48f2ae25d8e14f300e6d7545a4315cee571a415e850"},

|

||||

{file = "pyjwt-2.9.0.tar.gz", hash = "sha256:7e1e5b56cc735432a7369cbfa0efe50fa113ebecdc04ae6922deba8b84582d0c"},

|

||||

]

|

||||

|

||||

[package.extras]

|

||||

crypto = ["cryptography (>=3.4.0)"]

|

||||

dev = ["coverage[toml] (==5.0.4)", "cryptography (>=3.4.0)", "pre-commit", "pytest (>=6.0.0,<7.0.0)", "sphinx", "sphinx-rtd-theme", "zope.interface"]

|

||||

docs = ["sphinx", "sphinx-rtd-theme", "zope.interface"]

|

||||

tests = ["coverage[toml] (==5.0.4)", "pytest (>=6.0.0,<7.0.0)"]

|

||||

|

||||

[[package]]

|

||||

name = "pylance"

|

||||

version = "0.9.18"

|

||||

@@ -5467,22 +5567,23 @@ files = [

|

||||

|

||||

[[package]]

|

||||

name = "urllib3"

|

||||

version = "1.26.19"

|

||||

version = "2.2.2"

|

||||

description = "HTTP library with thread-safe connection pooling, file post, and more."

|

||||

optional = false

|

||||

python-versions = "!=3.0.*,!=3.1.*,!=3.2.*,!=3.3.*,!=3.4.*,!=3.5.*,>=2.7"

|

||||

python-versions = ">=3.8"

|

||||

files = [

|

||||

{file = "urllib3-1.26.19-py2.py3-none-any.whl", hash = "sha256:37a0344459b199fce0e80b0d3569837ec6b6937435c5244e7fd73fa6006830f3"},

|

||||

{file = "urllib3-1.26.19.tar.gz", hash = "sha256:3e3d753a8618b86d7de333b4223005f68720bcd6a7d2bcb9fbd2229ec7c1e429"},

|

||||

{file = "urllib3-2.2.2-py3-none-any.whl", hash = "sha256:a448b2f64d686155468037e1ace9f2d2199776e17f0a46610480d311f73e3472"},

|

||||

{file = "urllib3-2.2.2.tar.gz", hash = "sha256:dd505485549a7a552833da5e6063639d0d177c04f23bc3864e41e5dc5f612168"},

|

||||

]

|

||||

|

||||

[package.dependencies]

|

||||

PySocks = {version = ">=1.5.6,<1.5.7 || >1.5.7,<2.0", optional = true, markers = "extra == \"socks\""}

|

||||

pysocks = {version = ">=1.5.6,<1.5.7 || >1.5.7,<2.0", optional = true, markers = "extra == \"socks\""}

|

||||

|

||||

[package.extras]

|

||||