mirror of

https://github.com/crewAIInc/crewAI.git

synced 2026-01-08 23:58:34 +00:00

Compare commits

13 Commits

devin/1739

...

devin/1739

| Author | SHA1 | Date |
|---|---|---|
| | 3c2f85d9d4 | |
| | ae82745ddd | |
| | b98e720531 | |
| | 5467a70d97 | |
| | 99a6390158 | |
| | d3b398ed52 | |
| | d52fd09602 | |
| | d6800d8957 | |
| | 2fd7506ed9 | |
| | 161084aff2 | |
| | b145cb3247 | |
| | 1adbcf697d | |
| | e51355200a | |

98

docs/how-to/langfuse-observability.mdx

Normal file


@@ -0,0 +1,98 @@

---
title: Agent Monitoring with Langfuse
description: Learn how to integrate Langfuse with CrewAI via OpenTelemetry using OpenLit
icon: magnifying-glass-chart
---

# Integrate Langfuse with CrewAI

This notebook demonstrates how to integrate **Langfuse** with **CrewAI** using OpenTelemetry via the **OpenLit** SDK. By the end of this notebook, you will be able to trace your CrewAI applications with Langfuse for improved observability and debugging.

> **What is Langfuse?** [Langfuse](https://langfuse.com) is an open-source LLM engineering platform. It provides tracing and monitoring capabilities for LLM applications, helping developers debug, analyze, and optimize their AI systems. Langfuse integrates with various tools and frameworks via native integrations, OpenTelemetry, and APIs/SDKs.

## Get Started

We'll walk through a simple example of using CrewAI and integrating it with Langfuse via OpenTelemetry using OpenLit.

### Step 1: Install Dependencies

```python
%pip install langfuse openlit crewai crewai_tools
```

### Step 2: Set Up Environment Variables

Set your Langfuse API keys and configure OpenTelemetry export settings to send traces to Langfuse. Please refer to the [Langfuse OpenTelemetry Docs](https://langfuse.com/docs/opentelemetry/get-started) for more information on the Langfuse OpenTelemetry endpoint `/api/public/otel` and authentication.

```python
import os
import base64

LANGFUSE_PUBLIC_KEY="pk-lf-..."
LANGFUSE_SECRET_KEY="sk-lf-..."
LANGFUSE_AUTH=base64.b64encode(f"{LANGFUSE_PUBLIC_KEY}:{LANGFUSE_SECRET_KEY}".encode()).decode()

os.environ["OTEL_EXPORTER_OTLP_ENDPOINT"] = "https://cloud.langfuse.com/api/public/otel" # EU data region
# os.environ["OTEL_EXPORTER_OTLP_ENDPOINT"] = "https://us.cloud.langfuse.com/api/public/otel" # US data region
os.environ["OTEL_EXPORTER_OTLP_HEADERS"] = f"Authorization=Basic {LANGFUSE_AUTH}"

# your openai key
os.environ["OPENAI_API_KEY"] = "sk-..."
```
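
The `Authorization` value in Step 2 is ordinary HTTP Basic authentication: the public and secret keys are joined with a colon and Base64-encoded. As a minimal sketch, the same two settings can be computed in a small function and checked by decoding the header again before any traces are exported. The helper name `build_langfuse_otlp_env` and the example keys are hypothetical; they are not part of Langfuse, OpenLit, or CrewAI.

```python
import base64


def build_langfuse_otlp_env(public_key: str, secret_key: str,
                            host: str = "https://cloud.langfuse.com") -> dict[str, str]:
    """Return the two OTLP environment variables used in Step 2.

    Hypothetical helper for illustration; it only mirrors the snippet above.
    """
    token = base64.b64encode(f"{public_key}:{secret_key}".encode()).decode()
    return {
        "OTEL_EXPORTER_OTLP_ENDPOINT": f"{host}/api/public/otel",
        "OTEL_EXPORTER_OTLP_HEADERS": f"Authorization=Basic {token}",
    }


# Placeholder keys, not real credentials.
env = build_langfuse_otlp_env("pk-lf-example", "sk-lf-example")

# Round-trip check: decoding the token should give back "public:secret".
encoded = env["OTEL_EXPORTER_OTLP_HEADERS"].removeprefix("Authorization=Basic ")
decoded = base64.b64decode(encoded).decode()
print(decoded)
```

To apply the values, call `os.environ.update(env)` before the instrumentation is initialized.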

|

||||

|

||||

### Step 3: Initialize OpenLit

|

||||

|

||||

Initialize the OpenLit OpenTelemetry instrumentation SDK to start capturing OpenTelemetry traces.

|

||||

|

||||

|

||||

```python

|

||||

import openlit

|

||||

|

||||

openlit.init()

|

||||

```

|

||||

|

||||

### Step 4: Create a Simple CrewAI Application

|

||||

|

||||

We'll create a simple CrewAI application where multiple agents collaborate to answer a user's question.

|

||||

|

||||

|

||||

```python

|

||||

from crewai import Agent, Task, Crew

|

||||

|

||||

from crewai_tools import (

|

||||

WebsiteSearchTool

|

||||

)

|

||||

|

||||

web_rag_tool = WebsiteSearchTool()

|

||||

|

||||

writer = Agent(

|

||||

role="Writer",

|

||||

goal="You make math engaging and understandable for young children through poetry",

|

||||

backstory="You're an expert in writing haikus but you know nothing of math.",

|

||||

tools=[web_rag_tool],

|

||||

)

|

||||

|

||||

task = Task(description=("What is {multiplication}?"),

|

||||

expected_output=("Compose a haiku that includes the answer."),

|

||||

agent=writer)

|

||||

|

||||

crew = Crew(

|

||||

agents=[writer],

|

||||

tasks=[task],

|

||||

share_crew=False

|

||||

)

|

||||

```

|

||||

|

||||

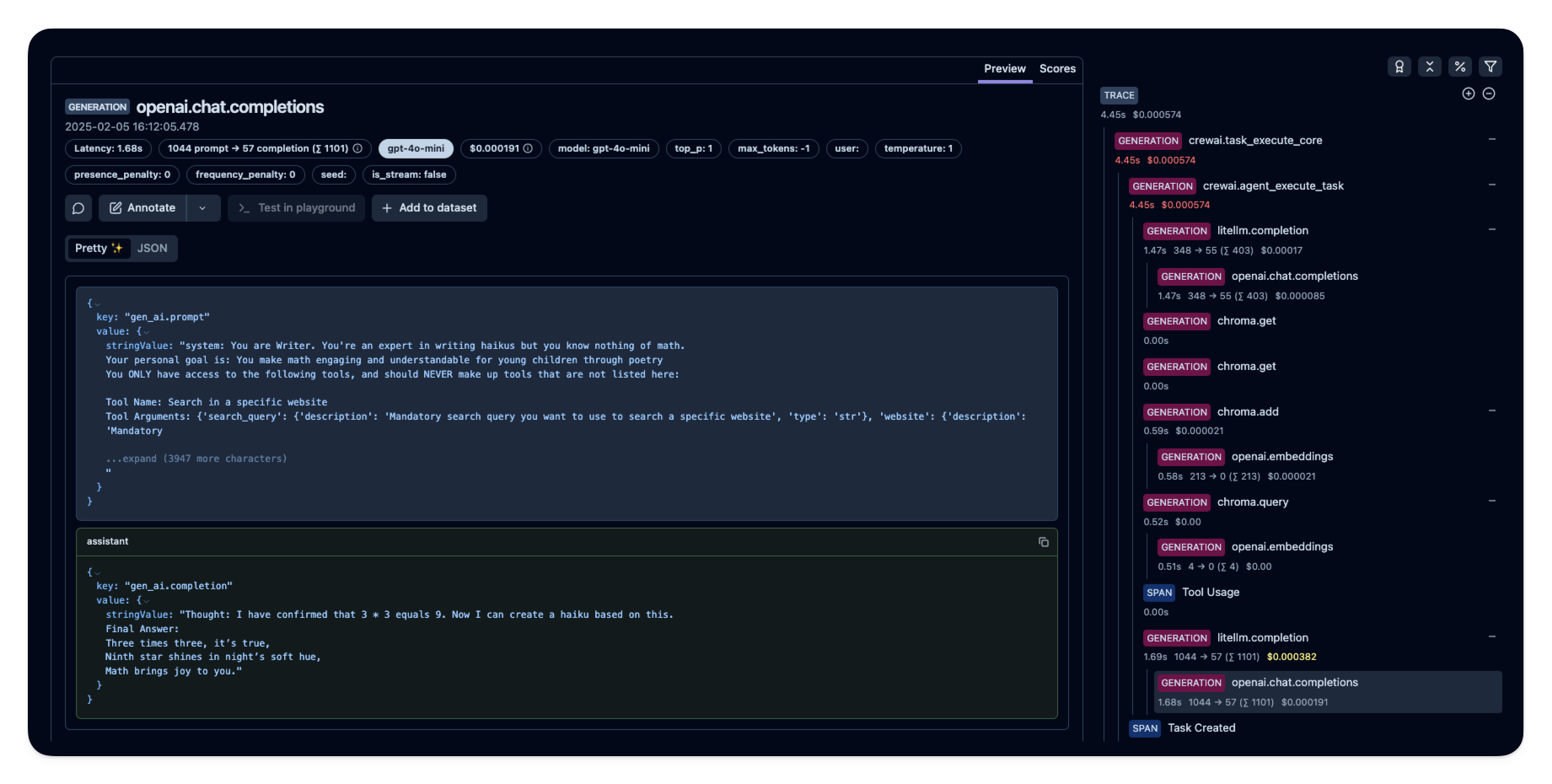

### Step 5: See Traces in Langfuse

|

||||

|

||||

After running the agent, you can view the traces generated by your CrewAI application in [Langfuse](https://cloud.langfuse.com). You should see detailed steps of the LLM interactions, which can help you debug and optimize your AI agent.

|

||||

|

||||

|

||||

|

||||

_[Public example trace in Langfuse](https://cloud.langfuse.com/project/cloramnkj0002jz088vzn1ja4/traces/e2cf380ffc8d47d28da98f136140642b?timestamp=2025-02-05T15%3A12%3A02.717Z&observation=3b32338ee6a5d9af)_

|

||||

|

||||

## References

|

||||

|

||||

- [Langfuse OpenTelemetry Docs](https://langfuse.com/docs/opentelemetry/get-started)

|

||||

@@ -1,211 +0,0 @@

|

||||

# Portkey Integration with CrewAI

|

||||

<img src="https://raw.githubusercontent.com/siddharthsambharia-portkey/Portkey-Product-Images/main/Portkey-CrewAI.png" alt="Portkey CrewAI Header Image" width="70%" />

|

||||

|

||||

|

||||

[Portkey](https://portkey.ai/?utm_source=crewai&utm_medium=crewai&utm_campaign=crewai) is a 2-line upgrade to make your CrewAI agents reliable, cost-efficient, and fast.

|

||||

|

||||

Portkey adds 4 core production capabilities to any CrewAI agent:

|

||||

1. Routing to **200+ LLMs**

|

||||

2. Making each LLM call more robust

|

||||

3. Full-stack tracing & cost, performance analytics

|

||||

4. Real-time guardrails to enforce behavior

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

## Getting Started

|

||||

|

||||

1. **Install Required Packages:**

|

||||

|

||||

```bash

|

||||

pip install -qU crewai portkey-ai

|

||||

```

|

||||

|

||||

2. **Configure the LLM Client:**

|

||||

|

||||

To build CrewAI Agents with Portkey, you'll need two keys:

|

||||

- **Portkey API Key**: Sign up on the [Portkey app](https://app.portkey.ai/?utm_source=crewai&utm_medium=crewai&utm_campaign=crewai) and copy your API key

|

||||

- **Virtual Key**: Virtual Keys securely manage your LLM API keys in one place. Store your LLM provider API keys securely in Portkey's vault

|

||||

|

||||

```python

|

||||

from crewai import LLM

|

||||

from portkey_ai import createHeaders, PORTKEY_GATEWAY_URL

|

||||

|

||||

gpt_llm = LLM(

|

||||

model="gpt-4",

|

||||

base_url=PORTKEY_GATEWAY_URL,

|

||||

api_key="dummy", # We are using Virtual key

|

||||

extra_headers=createHeaders(

|

||||

api_key="YOUR_PORTKEY_API_KEY",

|

||||

virtual_key="YOUR_VIRTUAL_KEY", # Enter your Virtual key from Portkey

|

||||

)

|

||||

)

|

||||

```

|

||||

|

||||

3. **Create and Run Your First Agent:**

|

||||

|

||||

```python

|

||||

from crewai import Agent, Task, Crew

|

||||

|

||||

# Define your agents with roles and goals

|

||||

coder = Agent(

|

||||

role='Software developer',

|

||||

goal='Write clear, concise code on demand',

|

||||

backstory='An expert coder with a keen eye for software trends.',

|

||||

llm=gpt_llm

|

||||

)

|

||||

|

||||

# Create tasks for your agents

|

||||

task1 = Task(

|

||||

description="Define the HTML for making a simple website with heading- Hello World! Portkey is working!",

|

||||

expected_output="A clear and concise HTML code",

|

||||

agent=coder

|

||||

)

|

||||

|

||||

# Instantiate your crew

|

||||

crew = Crew(

|

||||

agents=[coder],

|

||||

tasks=[task1],

|

||||

)

|

||||

|

||||

result = crew.kickoff()

|

||||

print(result)

|

||||

```

|

||||

|

||||

|

||||

## Key Features

|

||||

|

||||

| Feature | Description |
|---------|-------------|
| 🌐 Multi-LLM Support | Access OpenAI, Anthropic, Gemini, Azure, and 250+ providers through a unified interface |
| 🛡️ Production Reliability | Implement retries, timeouts, load balancing, and fallbacks |
| 📊 Advanced Observability | Track 40+ metrics including costs, tokens, latency, and custom metadata |
| 🔍 Comprehensive Logging | Debug with detailed execution traces and function call logs |
| 🚧 Security Controls | Set budget limits and implement role-based access control |
| 🔄 Performance Analytics | Capture and analyze feedback for continuous improvement |
| 💾 Intelligent Caching | Reduce costs and latency with semantic or simple caching |

|

||||

|

||||

|

||||

## Production Features with Portkey Configs

|

||||

|

||||

All of the features below are configured through Portkey's Config system, which lets you define routing strategies as simple JSON objects in your LLM API calls. You can create and manage Configs directly in your code or through the Portkey Dashboard. Each Config has a unique ID for easy reference.

|

||||

|

||||

<Frame>

|

||||

<img src="https://raw.githubusercontent.com/Portkey-AI/docs-core/refs/heads/main/images/libraries/libraries-3.avif"/>

|

||||

</Frame>

|

||||

|

||||

|

||||

### 1. Use 250+ LLMs

|

||||

Access various LLMs like Anthropic, Gemini, Mistral, Azure OpenAI, and more with minimal code changes. Switch between providers or use them together seamlessly. [Learn more about Universal API](https://portkey.ai/docs/product/ai-gateway/universal-api)

|

||||

|

||||

|

||||

Easily switch between different LLM providers:

|

||||

|

||||

```python

|

||||

# Anthropic Configuration

|

||||

anthropic_llm = LLM(

|

||||

model="claude-3-5-sonnet-latest",

|

||||

base_url=PORTKEY_GATEWAY_URL,

|

||||

api_key="dummy",

|

||||

extra_headers=createHeaders(

|

||||

api_key="YOUR_PORTKEY_API_KEY",

|

||||

virtual_key="YOUR_ANTHROPIC_VIRTUAL_KEY", #You don't need provider when using Virtual keys

|

||||

trace_id="anthropic_agent"

|

||||

)

|

||||

)

|

||||

|

||||

# Azure OpenAI Configuration

|

||||

azure_llm = LLM(

|

||||

model="gpt-4",

|

||||

base_url=PORTKEY_GATEWAY_URL,

|

||||

api_key="dummy",

|

||||

extra_headers=createHeaders(

|

||||

api_key="YOUR_PORTKEY_API_KEY",

|

||||

virtual_key="YOUR_AZURE_VIRTUAL_KEY", #You don't need provider when using Virtual keys

|

||||

trace_id="azure_agent"

|

||||

)

|

||||

)

|

||||

```

|

||||

|

||||

|

||||

### 2. Caching

|

||||

Improve response times and reduce costs with two powerful caching modes:

|

||||

- **Simple Cache**: Perfect for exact matches

|

||||

- **Semantic Cache**: Matches responses for requests that are semantically similar

|

||||

[Learn more about Caching](https://portkey.ai/docs/product/ai-gateway/cache-simple-and-semantic)

|

||||

|

||||

```py

|

||||

config = {

|

||||

"cache": {

|

||||

"mode": "semantic", # or "simple" for exact matching

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

### 3. Production Reliability

|

||||

Portkey provides comprehensive reliability features:

|

||||

- **Automatic Retries**: Handle temporary failures gracefully

|

||||

- **Request Timeouts**: Prevent hanging operations

|

||||

- **Conditional Routing**: Route requests based on specific conditions

|

||||

- **Fallbacks**: Set up automatic provider failovers

|

||||

- **Load Balancing**: Distribute requests efficiently

|

||||

|

||||

[Learn more about Reliability Features](https://portkey.ai/docs/product/ai-gateway/)

|

||||

|

||||

|

||||

|

||||

### 4. Metrics

|

||||

|

||||

Agent runs are complex. Portkey automatically logs **40+ comprehensive metrics** for your AI agents, including cost, tokens used, latency, etc. Whether you need a broad overview or granular insights into your agent runs, Portkey's customizable filters provide the metrics you need.

|

||||

|

||||

|

||||

- Cost per agent interaction

|

||||

- Response times and latency

|

||||

- Token usage and efficiency

|

||||

- Success/failure rates

|

||||

- Cache hit rates

|

||||

|

||||

<img src="https://github.com/siddharthsambharia-portkey/Portkey-Product-Images/blob/main/Portkey-Dashboard.png?raw=true" width="70%" alt="Portkey Dashboard" />

|

||||

|

||||

### 5. Detailed Logging

|

||||

Logs are essential for understanding agent behavior, diagnosing issues, and improving performance. They provide a detailed record of agent activities and tool use, which is crucial for debugging and optimizing processes.

|

||||

|

||||

|

||||

Access a dedicated section to view records of agent executions, including parameters, outcomes, function calls, and errors. Filter logs based on multiple parameters such as trace ID, model, tokens used, and metadata.

|

||||

|

||||

<details>

|

||||

<summary><b>Traces</b></summary>

|

||||

<img src="https://raw.githubusercontent.com/siddharthsambharia-portkey/Portkey-Product-Images/main/Portkey-Traces.png" alt="Portkey Traces" width="70%" />

|

||||

</details>

|

||||

|

||||

<details>

|

||||

<summary><b>Logs</b></summary>

|

||||

<img src="https://raw.githubusercontent.com/siddharthsambharia-portkey/Portkey-Product-Images/main/Portkey-Logs.png" alt="Portkey Logs" width="70%" />

|

||||

</details>

|

||||

|

||||

### 6. Enterprise Security Features

|

||||

- Set budget limits and rate limits per Virtual Key (disposable API keys)

|

||||

- Implement role-based access control

|

||||

- Track system changes with audit logs

|

||||

- Configure data retention policies

|

||||

|

||||

|

||||

|

||||

For detailed information on creating and managing Configs, visit the [Portkey documentation](https://docs.portkey.ai/product/ai-gateway/configs).

|

||||

|

||||

## Resources

|

||||

|

||||

- [📘 Portkey Documentation](https://docs.portkey.ai)

|

||||

- [📊 Portkey Dashboard](https://app.portkey.ai/?utm_source=crewai&utm_medium=crewai&utm_campaign=crewai)

|

||||

- [🐦 Twitter](https://twitter.com/portkeyai)

|

||||

- [💬 Discord Community](https://discord.gg/DD7vgKK299)

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

@@ -1,5 +1,5 @@
---
title: Portkey Observability and Guardrails
title: Agent Monitoring with Portkey
description: How to use Portkey with CrewAI
icon: key
---

@@ -103,7 +103,8 @@
    "how-to/langtrace-observability",
    "how-to/mlflow-observability",
    "how-to/openlit-observability",
    "how-to/portkey-observability"
    "how-to/portkey-observability",
    "how-to/langfuse-observability"
  ]
},
{

@@ -1,6 +1,6 @@
[project]
name = "crewai"
version = "0.100.1"
version = "0.102.0"
description = "Cutting-edge framework for orchestrating role-playing, autonomous AI agents. By fostering collaborative intelligence, CrewAI empowers agents to work together seamlessly, tackling complex tasks."
readme = "README.md"
requires-python = ">=3.10,<3.13"
@@ -45,7 +45,7 @@ Documentation = "https://docs.crewai.com"
Repository = "https://github.com/crewAIInc/crewAI"

[project.optional-dependencies]
tools = ["crewai-tools>=0.32.1"]
tools = ["crewai-tools>=0.36.0"]
embeddings = [
    "tiktoken~=0.7.0"
]

@@ -14,7 +14,7 @@ warnings.filterwarnings(
    category=UserWarning,
    module="pydantic.main",
)
__version__ = "0.100.1"
__version__ = "0.102.0"
__all__ = [
    "Agent",
    "Crew",

@@ -5,7 +5,7 @@ description = "{{name}} using crewAI"
authors = [{ name = "Your Name", email = "you@example.com" }]
requires-python = ">=3.10,<3.13"
dependencies = [
    "crewai[tools]>=0.100.1,<1.0.0"
    "crewai[tools]>=0.102.0,<1.0.0"
]

[project.scripts]

@@ -5,7 +5,7 @@ description = "{{name}} using crewAI"
authors = [{ name = "Your Name", email = "you@example.com" }]
requires-python = ">=3.10,<3.13"
dependencies = [
    "crewai[tools]>=0.100.1,<1.0.0",
    "crewai[tools]>=0.102.0,<1.0.0",
]

[project.scripts]

@@ -5,7 +5,7 @@ description = "Power up your crews with {{folder_name}}"
readme = "README.md"
requires-python = ">=3.10,<3.13"
dependencies = [
    "crewai[tools]>=0.100.1"
    "crewai[tools]>=0.102.0"
]

[tool.crewai]


@@ -1,6 +1,11 @@
import asyncio
import dataclasses
import functools
import inspect
import logging
import threading
import time
from contextlib import contextmanager
from typing import (
    Any,
    Callable,
@@ -14,6 +19,22 @@ from typing import (
    Union,
    cast,
)

from typing_extensions import Protocol

logger = logging.getLogger(__name__)


class SerializationError(Exception):
    """Error during state serialization."""
    pass


class LockProtocol(Protocol):
    """Protocol for thread-safe primitives."""
    def acquire(self) -> bool: ...
    def release(self) -> None: ...
    def _is_owned(self) -> bool: ...
from uuid import uuid4

from blinker import Signal
@@ -394,7 +415,6 @@ class FlowMeta(type):
                or hasattr(attr_value, "__trigger_methods__")
                or hasattr(attr_value, "__is_router__")
            ):

                # Register start methods
                if hasattr(attr_value, "__is_start_method__"):
                    start_methods.append(attr_name)
@@ -437,6 +457,23 @@ class Flow(Generic[T], metaclass=FlowMeta):
    initial_state: Union[Type[T], T, None] = None
    event_emitter = Signal("event_emitter")

    @contextmanager
    def _performance_monitor(self, operation: str):
        """Monitor performance of an operation.

        Args:
            operation: Name of the operation being monitored

        Yields:
            None
        """
        start = time.perf_counter()
        try:
            yield
        finally:
            duration = time.perf_counter() - start
            logger.debug(f"{operation} took {duration:.4f} seconds")

    def __class_getitem__(cls: Type["Flow"], item: Type[T]) -> Type["Flow"]:
        class _FlowGeneric(cls):  # type: ignore
            _initial_state_T = item  # type: ignore
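
The `_performance_monitor` method in this hunk is a timing context manager: it records a start time, yields to the wrapped block, and logs the elapsed time in a `finally` clause so the measurement is taken even when the block raises. A standalone sketch of that pattern follows. The module-level function and the `durations` dictionary are assumptions added here so the result can be inspected; the method in the diff only logs.

```python
import logging
import time
from contextlib import contextmanager

logger = logging.getLogger("perf_sketch")


@contextmanager
def performance_monitor(operation: str, durations: dict[str, float]):
    """Time the enclosed block and record it, even if the block raises."""
    start = time.perf_counter()
    try:
        yield
    finally:
        duration = time.perf_counter() - start
        durations[operation] = duration  # extra bookkeeping, not in the diff
        logger.debug("%s took %.4f seconds", operation, duration)


durations: dict[str, float] = {}

with performance_monitor("sum_squares", durations):
    total = sum(i * i for i in range(10_000))

try:
    with performance_monitor("failing_step", durations):
        raise ValueError("boom")
except ValueError:
    pass  # the timing was still recorded by the finally clause

print(sorted(durations))
```

Because the log call sits at `DEBUG` level, the timings stay silent unless the logger is configured to show debug output.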

@@ -569,6 +606,172 @@ class Flow(Generic[T], metaclass=FlowMeta):
                f"Initial state must be dict or BaseModel, got {type(self.initial_state)}"
            )

    # Cache thread-safe primitive types
    THREAD_SAFE_TYPES = {
        type(threading.RLock()): threading.RLock,
        type(threading.Lock()): threading.Lock,
        type(threading.Semaphore()): threading.Semaphore,
        type(threading.Event()): threading.Event,
        type(threading.Condition()): threading.Condition,
        type(asyncio.Lock()): asyncio.Lock,
        type(asyncio.Event()): asyncio.Event,
        type(asyncio.Condition()): asyncio.Condition,
        type(asyncio.Semaphore()): asyncio.Semaphore,
    }

    def _get_thread_safe_primitive_type(self, value: Any) -> Optional[Type[LockProtocol]]:
        """Get the type of a thread-safe primitive for recreation.

        Args:
            value: Any Python value to check

        Returns:
            The type of the thread-safe primitive, or None if not a primitive
        """
        return (self.THREAD_SAFE_TYPES.get(type(value))
                if hasattr(value, '_is_owned') and hasattr(value, 'acquire')
                else None)

    @functools.lru_cache(maxsize=128)
    def _get_dataclass_fields(self, cls):
        """Get cached dataclass fields.

        Args:
            cls: Dataclass type

        Returns:
            Dict mapping field names to Field objects
        """
        return {field.name: field for field in dataclasses.fields(cls)}

    def _serialize_dataclass(self, value: Any) -> Union[Dict[str, Any], Any]:
        """Serialize a dataclass instance.

        Args:
            value: A dataclass instance

        Returns:
            A new instance of the dataclass with thread-safe primitives recreated
        """
        try:
            if not hasattr(value, '__class__'):
                return value

            if hasattr(value, '__pydantic_validate__'):
                return value.__pydantic_validate__()

            # Get field values, handling thread-safe primitives
            field_values = {}
            for field_name, field in self._get_dataclass_fields(value.__class__).items():
                field_value = getattr(value, field_name)
                primitive_type = self._get_thread_safe_primitive_type(field_value)
                if primitive_type is not None:
                    field_values[field_name] = primitive_type()
                else:
                    field_values[field_name] = self._serialize_value(field_value)

            # Create new instance
            return value.__class__(**field_values)
        except Exception as e:
            logger.error(f"Dataclass serialization error for {type(value)}: {str(e)}")
            raise SerializationError(f"Failed to serialize dataclass {type(value)}") from e

    def _serialize_value(self, value: Any) -> Any:
        """Recursively serialize a value, handling thread locks.

        Args:
            value: Any Python value to serialize

        Returns:
            Serialized version of the value with thread-safe primitives handled

        Raises:
            SerializationError: If serialization fails
        """
        with self._performance_monitor(f"serialize_{type(value).__name__}"):
            try:
                # Handle None
                if value is None:
                    return None

                # Handle thread-safe primitives
                primitive_type = self._get_thread_safe_primitive_type(value)
                if primitive_type is not None:
                    return primitive_type()

                # Handle Pydantic models
                if isinstance(value, BaseModel):
                    model_class = type(value)
                    model_data = value.model_dump(exclude_none=True)

                    # Create new instance
                    instance = model_class(**model_data)

                    # Copy excluded fields that are thread-safe primitives
                    for field_name, field in value.__class__.model_fields.items():
                        if field.exclude:
                            field_value = getattr(value, field_name, None)
                            if field_value is not None:
                                primitive_type = self._get_thread_safe_primitive_type(field_value)
                                if primitive_type is not None:
                                    setattr(instance, field_name, primitive_type())

                    return instance

                # Handle dataclasses
                if dataclasses.is_dataclass(value):
                    return self._serialize_dataclass(value)

                # Handle dictionaries
                if isinstance(value, dict):
                    return {
                        k: self._serialize_value(v)
                        for k, v in value.items()
                    }

                # Handle lists, tuples, and sets
                if isinstance(value, (list, tuple, set)):
                    serialized = [self._serialize_value(item) for item in value]
                    return (
                        serialized if isinstance(value, list)
                        else tuple(serialized) if isinstance(value, tuple)
                        else set(serialized)
                    )

                # Handle other types
                return value

            except Exception as e:
                logger.error(f"Serialization error for {type(value)}: {str(e)}")
                raise SerializationError(f"Failed to serialize {type(value)}") from e


        # Handle dataclasses
        if dataclasses.is_dataclass(value):
            return self._serialize_dataclass(value)

        # Handle dictionaries
        if isinstance(value, dict):
            return {
                k: self._serialize_value(v)
                for k, v in value.items()
            }

        # Handle lists, tuples, and sets
        if isinstance(value, (list, tuple, set)):
            serialized = [self._serialize_value(item) for item in value]
            return (
                serialized if isinstance(value, list)
                else tuple(serialized) if isinstance(value, tuple)
                else set(serialized)
            )

        # Handle other types
        return value

    def _copy_state(self) -> T:
        """Create a deep copy of the current state."""
        return self._serialize_value(self._state)

    @property
    def state(self) -> T:
        return self._state
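
The hunk above copies flow state by walking it recursively and replacing synchronization primitives with fresh instances, since objects such as `threading.Lock` cannot be deep-copied. The following is a reduced, standalone sketch of that idea under stated assumptions: `copy_state`, `LOCK_FACTORIES`, and `CounterState` are hypothetical names, only two lock types are handled, and types are looked up directly rather than through the attribute checks used in the diff.

```python
import copy
import threading
from dataclasses import dataclass, field, fields, is_dataclass
from typing import Any

# Map the runtime types of two primitives to factories that build fresh ones.
LOCK_FACTORIES = {
    type(threading.Lock()): threading.Lock,
    type(threading.RLock()): threading.RLock,
}


def copy_state(value: Any) -> Any:
    """Recursively copy `value`, replacing locks with new, unlocked ones."""
    factory = LOCK_FACTORIES.get(type(value))
    if factory is not None:
        return factory()
    if is_dataclass(value) and not isinstance(value, type):
        copied = {f.name: copy_state(getattr(value, f.name)) for f in fields(value)}
        return type(value)(**copied)
    if isinstance(value, dict):
        return {k: copy_state(v) for k, v in value.items()}
    if isinstance(value, list):
        return [copy_state(v) for v in value]
    if isinstance(value, tuple):
        return tuple(copy_state(v) for v in value)
    return value  # anything else is returned as-is (shared, not copied)


@dataclass
class CounterState:
    count: int = 0
    history: list = field(default_factory=list)
    lock: Any = field(default_factory=threading.Lock)


state = CounterState(count=3, history=[1, 2, 3])

# copy.deepcopy cannot handle the lock field and raises TypeError.
try:
    copy.deepcopy(state)
    deepcopy_failed = False
except TypeError:
    deepcopy_failed = True

snapshot = copy_state(state)
state.history.append(4)  # mutating the live state leaves the snapshot untouched
print(deepcopy_failed, snapshot.history, snapshot.lock is state.lock)
```

As in the hunk, values that fall through every branch are returned unchanged, so mutable objects of unhandled types remain shared between the live state and the copy.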

@@ -740,6 +943,7 @@ class Flow(Generic[T], metaclass=FlowMeta):
            event=FlowStartedEvent(
                type="flow_started",
                flow_name=self.__class__.__name__,
                inputs=inputs,
            ),
        )
        self._log_flow_event(
@@ -803,6 +1007,18 @@ class Flow(Generic[T], metaclass=FlowMeta):
    async def _execute_method(
        self, method_name: str, method: Callable, *args: Any, **kwargs: Any
    ) -> Any:
        dumped_params = {f"_{i}": arg for i, arg in enumerate(args)} | (kwargs or {})
        self.event_emitter.send(
            self,
            event=MethodExecutionStartedEvent(
                type="method_execution_started",
                method_name=method_name,
                flow_name=self.__class__.__name__,
                params=dumped_params,
                state=self._copy_state(),
            ),
        )

        result = (
            await method(*args, **kwargs)
            if asyncio.iscoroutinefunction(method)
@@ -812,6 +1028,18 @@ class Flow(Generic[T], metaclass=FlowMeta):
        self._method_execution_counts[method_name] = (
            self._method_execution_counts.get(method_name, 0) + 1
        )

        self.event_emitter.send(
            self,
            event=MethodExecutionFinishedEvent(
                type="method_execution_finished",
                method_name=method_name,
                flow_name=self.__class__.__name__,
                state=self._copy_state(),
                result=result,
            ),
        )

        return result

    async def _execute_listeners(self, trigger_method: str, result: Any) -> None:
@@ -950,16 +1178,6 @@ class Flow(Generic[T], metaclass=FlowMeta):
        """
        try:
            method = self._methods[listener_name]

            self.event_emitter.send(
                self,
                event=MethodExecutionStartedEvent(
                    type="method_execution_started",
                    method_name=listener_name,
                    flow_name=self.__class__.__name__,
                ),
            )

            sig = inspect.signature(method)
            params = list(sig.parameters.values())
            method_params = [p for p in params if p.name != "self"]
@@ -971,15 +1189,6 @@ class Flow(Generic[T], metaclass=FlowMeta):
            else:
                listener_result = await self._execute_method(listener_name, method)

            self.event_emitter.send(
                self,
                event=MethodExecutionFinishedEvent(
                    type="method_execution_finished",
                    method_name=listener_name,
                    flow_name=self.__class__.__name__,
                ),
            )

            # Execute listeners (and possibly routers) of this listener
            await self._execute_listeners(listener_name, listener_result)


@@ -1,6 +1,8 @@
from dataclasses import dataclass, field
from datetime import datetime
from typing import Any, Optional
from typing import Any, Dict, Optional, Union

from pydantic import BaseModel


@dataclass
@@ -15,17 +17,21 @@ class Event:

@dataclass
class FlowStartedEvent(Event):
    pass
    inputs: Optional[Dict[str, Any]] = None


@dataclass
class MethodExecutionStartedEvent(Event):
    method_name: str
    state: Union[Dict[str, Any], BaseModel]
    params: Optional[Dict[str, Any]] = None


@dataclass
class MethodExecutionFinishedEvent(Event):
    method_name: str
    state: Union[Dict[str, Any], BaseModel]
    result: Any = None


@dataclass


@@ -15,27 +15,18 @@ class AgentTools:

    def tools(self) -> list[BaseTool]:
        """Get all available agent tools"""
        coworkers = []
        for agent in self.agents:
            coworker_desc = f"{agent.role} (Goal: {agent.goal})"
            if agent.backstory:
                # Truncate backstory to first sentence for brevity
                first_sentence = agent.backstory.split('.')[0]
                coworker_desc += f" - {first_sentence}"
            coworkers.append(coworker_desc)

        coworkers_str = "\n- ".join(coworkers)
        coworkers = ", ".join([f"{agent.role}" for agent in self.agents])

        delegate_tool = DelegateWorkTool(
            agents=self.agents,
            i18n=self.i18n,
            description=self.i18n.tools("delegate_work").format(coworkers=coworkers_str),  # type: ignore
            description=self.i18n.tools("delegate_work").format(coworkers=coworkers),  # type: ignore
        )

        ask_tool = AskQuestionTool(
            agents=self.agents,
            i18n=self.i18n,
            description=self.i18n.tools("ask_question").format(coworkers=coworkers_str),  # type: ignore
            description=self.i18n.tools("ask_question").format(coworkers=coworkers),  # type: ignore
        )

        return [delegate_tool, ask_tool]
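
This hunk contains two ways of building the `{coworkers}` text that is substituted into the tool descriptions: a multi-line variant with role, goal, and the first backstory sentence, and a comma-separated list of roles. Judging by the hunk header (27 lines before, 18 after), the longer variant appears to be the one being removed. The sketch below reproduces both string formats side by side; `AgentStub` and the two function names are hypothetical stand-ins and not part of CrewAI.

```python
from dataclasses import dataclass


@dataclass
class AgentStub:
    """Hypothetical stand-in for a CrewAI agent; only the fields used here."""
    role: str
    goal: str
    backstory: str = ""


def detailed_coworkers(agents: list[AgentStub]) -> str:
    """Role, goal, and first backstory sentence per agent (the longer variant)."""
    lines = []
    for agent in agents:
        desc = f"{agent.role} (Goal: {agent.goal})"
        if agent.backstory:
            desc += f" - {agent.backstory.split('.')[0]}"
        lines.append(desc)
    return "\n- ".join(lines)


def role_only_coworkers(agents: list[AgentStub]) -> str:
    """Comma-separated roles only (the shorter variant)."""
    return ", ".join(agent.role for agent in agents)


agents = [
    AgentStub("Quick Researcher", "Find information quickly",
              "Specialist in rapid information gathering. Works fast."),
    AgentStub("Deep Researcher", "Find detailed information"),
]

short_text = role_only_coworkers(agents)
long_text = detailed_coworkers(agents)
print(short_text)
print(long_text)
```

The shorter format keeps the tool description compact, while the longer one gives a manager agent more context for telling apart agents with similar roles.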

|

||||

|

||||

@@ -40,8 +40,8 @@

|

||||

"validation_error": "### Previous attempt failed validation: {guardrail_result_error}\n\n\n### Previous result:\n{task_output}\n\n\nTry again, making sure to address the validation error."

|

||||

},

|

||||

"tools": {

|

||||

"delegate_work": "Delegate a specific task to one of the following coworkers:\n{coworkers}\nThe input to this tool should be the coworker, the task you want them to do, and ALL necessary context to execute the task, they know nothing about the task, so share absolute everything you know, don't reference things but instead explain them.",

|

||||

"ask_question": "Ask a specific question to one of the following coworkers:\n{coworkers}\nThe input to this tool should be the coworker, the question you have for them, and ALL necessary context to ask the question properly, they know nothing about the question, so share absolute everything you know, don't reference things but instead explain them.",

|

||||

"delegate_work": "Delegate a specific task to one of the following coworkers: {coworkers}\nThe input to this tool should be the coworker, the task you want them to do, and ALL necessary context to execute the task, they know nothing about the task, so share absolute everything you know, don't reference things but instead explain them.",

|

||||

"ask_question": "Ask a specific question to one of the following coworkers: {coworkers}\nThe input to this tool should be the coworker, the question you have for them, and ALL necessary context to ask the question properly, they know nothing about the question, so share absolute everything you know, don't reference things but instead explain them.",

|

||||

"add_image": {

|

||||

"name": "Add image to content",

|

||||

"description": "See image to understand its content, you can optionally ask a question about the image",

|

||||

|

||||

@@ -351,38 +351,6 @@ def test_manager_llm_requirement_for_hierarchical_process():

|

||||

)

|

||||

|

||||

|

||||

@pytest.mark.vcr(filter_headers=["authorization"])
def test_hierarchical_crew_with_similar_roles():
    """Test that manager can effectively delegate to agents with similar roles using enhanced context."""
    quick_researcher = Agent(
        role="Quick Researcher",
        goal="Find information quickly, prioritizing speed over depth",
        backstory="Specialist in rapid information gathering and quick insights"
    )
    deep_researcher = Agent(
        role="Deep Researcher",
        goal="Find detailed and thorough information through comprehensive analysis",
        backstory="Expert in comprehensive research and in-depth investigation"
    )

    task = Task(
        description="Research the impact of AI on healthcare. Quick researcher should focus on recent developments, while deep researcher should analyze long-term implications.",
        expected_output="A comprehensive analysis combining quick insights and deep research."
    )

    crew = Crew(
        agents=[quick_researcher, deep_researcher],
        tasks=[task],
        process=Process.hierarchical,
        manager_llm="gpt-4"
    )

    result = crew.kickoff()
    assert result  # Verify crew execution completes
    assert isinstance(result, CrewOutput)  # Verify output type
    assert len(result.tasks_output) == 1  # Verify task was completed

|

||||

|

||||

@pytest.mark.vcr(filter_headers=["authorization"])

|

||||

def test_manager_agent_delegating_to_assigned_task_agent():

|

||||

"""

|

||||

@@ -424,12 +392,12 @@ def test_manager_agent_delegating_to_assigned_task_agent():

|

||||

# Verify the delegation tools were passed correctly

|

||||

assert len(tools) == 2

|

||||

assert any(

|

||||

"Researcher (Goal: Make the best research and analysis on content about AI and AI agents)"

|

||||

"Delegate a specific task to one of the following coworkers: Researcher"

|

||||

in tool.description

|

||||

for tool in tools

|

||||

)

|

||||

assert any(

|

||||

"Researcher (Goal: Make the best research and analysis on content about AI and AI agents)"

|

||||

"Ask a specific question to one of the following coworkers: Researcher"

|

||||

in tool.description

|

||||

for tool in tools

|

||||

)

|

||||

@@ -458,15 +426,13 @@ def test_manager_agent_delegating_to_all_agents():

|

||||

assert crew.manager_agent.tools is not None

|

||||

|

||||

assert len(crew.manager_agent.tools) == 2

|

||||

assert any(

|

||||

"Researcher (Goal: Make the best research and analysis on content about AI and AI agents)"

|

||||

in tool.description

|

||||

for tool in crew.manager_agent.tools

|

||||

assert (

|

||||

"Delegate a specific task to one of the following coworkers: Researcher, Senior Writer\n"

|

||||

in crew.manager_agent.tools[0].description

|

||||

)

|

||||

assert any(

|

||||

"Senior Writer (Goal: Write the best content about AI and AI agents.)"

|

||||

in tool.description

|

||||

for tool in crew.manager_agent.tools

|

||||

assert (

|

||||

"Ask a specific question to one of the following coworkers: Researcher, Senior Writer\n"

|

||||

in crew.manager_agent.tools[1].description

|

||||

)

|

||||

|

||||

|

||||

@@ -1765,11 +1731,13 @@ def test_hierarchical_crew_creation_tasks_with_agents():

|

||||

# Verify the delegation tools were passed correctly

|

||||

assert len(tools) == 2

|

||||

assert any(

|

||||

"Senior Writer (Goal: Write the best content about AI and AI agents.)" in tool.description

|

||||

"Delegate a specific task to one of the following coworkers: Senior Writer"

|

||||

in tool.description

|

||||

for tool in tools

|

||||

)

|

||||

assert any(

|

||||

"Senior Writer (Goal: Write the best content about AI and AI agents.)" in tool.description

|

||||

"Ask a specific question to one of the following coworkers: Senior Writer"

|

||||

in tool.description

|

||||

for tool in tools

|

||||

)

|

||||

|

||||

@@ -1820,15 +1788,13 @@ def test_hierarchical_crew_creation_tasks_with_async_execution():

|

||||

# Verify the delegation tools were passed correctly

|

||||

assert len(tools) == 2

|

||||

assert any(

|

||||

"Senior Writer (Goal: Write the best content about AI and AI agents.)" in tool.description

|

||||

"Delegate a specific task to one of the following coworkers: Senior Writer\n"

|

||||

in tool.description

|

||||

for tool in tools

|

||||

)

|

||||

assert any(

|

||||

"Researcher (Goal: Make the best research and analysis on content about AI and AI agents)" in tool.description

|

||||

for tool in tools

|

||||

)

|

||||

assert any(

|

||||

"CEO (Goal: Make sure the writers in your company produce amazing content.)" in tool.description

|

||||

"Ask a specific question to one of the following coworkers: Senior Writer\n"

|

||||

in tool.description

|

||||

for tool in tools

|

||||

)

|

||||

|

||||

@@ -1861,17 +1827,9 @@ def test_hierarchical_crew_creation_tasks_with_sync_last():

|

||||

crew.kickoff()

|

||||

assert crew.manager_agent is not None

|

||||

assert crew.manager_agent.tools is not None

|

||||

assert any(

|

||||

"Senior Writer (Goal: Write the best content about AI and AI agents.)" in tool.description

|

||||

for tool in crew.manager_agent.tools

|

||||

)

|

||||

assert any(

|

||||

"Researcher (Goal: Make the best research and analysis on content about AI and AI agents)" in tool.description

|

||||

for tool in crew.manager_agent.tools

|

||||

)

|

||||

assert any(

|

||||

"CEO (Goal: Make sure the writers in your company produce amazing content.)" in tool.description

|

||||

for tool in crew.manager_agent.tools

|

||||

assert (

|

||||

"Delegate a specific task to one of the following coworkers: Senior Writer, Researcher, CEO\n"

|

||||

in crew.manager_agent.tools[0].description

|

||||

)

|

||||

|

||||

|

||||

|

||||

@@ -1,11 +1,18 @@

|

||||

"""Test Flow creation and execution basic functionality."""

|

||||

|

||||

import asyncio

|

||||

from datetime import datetime

|

||||

|

||||

import pytest

|

||||

from pydantic import BaseModel

|

||||

|

||||

from crewai.flow.flow import Flow, and_, listen, or_, router, start

|

||||

from crewai.flow.flow_events import (

|

||||

FlowFinishedEvent,

|

||||

FlowStartedEvent,

|

||||

MethodExecutionFinishedEvent,

|

||||

MethodExecutionStartedEvent,

|

||||

)

|

||||

|

||||

|

||||

def test_simple_sequential_flow():

|

||||

@@ -398,3 +405,218 @@ def test_router_with_multiple_conditions():

|

||||

|

||||

# final_step should run after router_and

|

||||

assert execution_order.index("log_final_step") > execution_order.index("router_and")

|

||||

|

||||

|

||||

def test_unstructured_flow_event_emission():
    """Test that the correct events are emitted during unstructured flow
    execution with all fields validated."""

    class PoemFlow(Flow):
        @start()
        def prepare_flower(self):
            self.state["flower"] = "roses"
            return "foo"

        @start()
        def prepare_color(self):
            self.state["color"] = "red"
            return "bar"

        @listen(prepare_color)
        def write_first_sentence(self):
            return f"{self.state['flower']} are {self.state['color']}"

        @listen(write_first_sentence)
        def finish_poem(self, first_sentence):
            separator = self.state.get("separator", "\n")
            return separator.join([first_sentence, "violets are blue"])

        @listen(finish_poem)
        def save_poem_to_database(self):
            # A method without args/kwargs to ensure events are sent correctly
            pass

    event_log = []

    def handle_event(_, event):
        event_log.append(event)

    flow = PoemFlow()
    flow.event_emitter.connect(handle_event)
    flow.kickoff(inputs={"separator": ", "})

    assert isinstance(event_log[0], FlowStartedEvent)
    assert event_log[0].flow_name == "PoemFlow"
    assert event_log[0].inputs == {"separator": ", "}
    assert isinstance(event_log[0].timestamp, datetime)

    # Asserting for concurrent start method executions in a for loop as you
    # can't guarantee ordering in asynchronous executions
    for i in range(1, 5):
        event = event_log[i]
        assert isinstance(event.state, dict)
        assert isinstance(event.state["id"], str)

        if event.method_name == "prepare_flower":
            if isinstance(event, MethodExecutionStartedEvent):
                assert event.params == {}
                assert event.state["separator"] == ", "
            elif isinstance(event, MethodExecutionFinishedEvent):
                assert event.result == "foo"
                assert event.state["flower"] == "roses"
                assert event.state["separator"] == ", "
            else:
                assert False, "Unexpected event type for prepare_flower"
        elif event.method_name == "prepare_color":
            if isinstance(event, MethodExecutionStartedEvent):
                assert event.params == {}
                assert event.state["separator"] == ", "
            elif isinstance(event, MethodExecutionFinishedEvent):
                assert event.result == "bar"
                assert event.state["color"] == "red"
                assert event.state["separator"] == ", "
            else:
                assert False, "Unexpected event type for prepare_color"
        else:
            assert False, f"Unexpected method {event.method_name} in prepare events"

    assert isinstance(event_log[5], MethodExecutionStartedEvent)
    assert event_log[5].method_name == "write_first_sentence"
    assert event_log[5].params == {}
    assert isinstance(event_log[5].state, dict)
    assert event_log[5].state["flower"] == "roses"
    assert event_log[5].state["color"] == "red"
    assert event_log[5].state["separator"] == ", "

    assert isinstance(event_log[6], MethodExecutionFinishedEvent)
    assert event_log[6].method_name == "write_first_sentence"
    assert event_log[6].result == "roses are red"

    assert isinstance(event_log[7], MethodExecutionStartedEvent)
    assert event_log[7].method_name == "finish_poem"
    assert event_log[7].params == {"_0": "roses are red"}
    assert isinstance(event_log[7].state, dict)
    assert event_log[7].state["flower"] == "roses"
    assert event_log[7].state["color"] == "red"

    assert isinstance(event_log[8], MethodExecutionFinishedEvent)
    assert event_log[8].method_name == "finish_poem"
    assert event_log[8].result == "roses are red, violets are blue"

    assert isinstance(event_log[9], MethodExecutionStartedEvent)
    assert event_log[9].method_name == "save_poem_to_database"
    assert event_log[9].params == {}
    assert isinstance(event_log[9].state, dict)
    assert event_log[9].state["flower"] == "roses"
    assert event_log[9].state["color"] == "red"

    assert isinstance(event_log[10], MethodExecutionFinishedEvent)
    assert event_log[10].method_name == "save_poem_to_database"
    assert event_log[10].result is None

    assert isinstance(event_log[11], FlowFinishedEvent)
    assert event_log[11].flow_name == "PoemFlow"
    assert event_log[11].result is None
    assert isinstance(event_log[11].timestamp, datetime)

|

||||

|

||||

def test_structured_flow_event_emission():
    """Test that the correct events are emitted during structured flow
    execution with all fields validated."""

    class OnboardingState(BaseModel):
        name: str = ""
        sent: bool = False

    class OnboardingFlow(Flow[OnboardingState]):
        @start()
        def user_signs_up(self):
            self.state.sent = False

        @listen(user_signs_up)
        def send_welcome_message(self):
            self.state.sent = True
            return f"Welcome, {self.state.name}!"

    event_log = []

    def handle_event(_, event):
        event_log.append(event)

    flow = OnboardingFlow()
    flow.event_emitter.connect(handle_event)
    flow.kickoff(inputs={"name": "Anakin"})

    assert isinstance(event_log[0], FlowStartedEvent)
    assert event_log[0].flow_name == "OnboardingFlow"
    assert event_log[0].inputs == {"name": "Anakin"}
    assert isinstance(event_log[0].timestamp, datetime)

    assert isinstance(event_log[1], MethodExecutionStartedEvent)
    assert event_log[1].method_name == "user_signs_up"

    assert isinstance(event_log[2], MethodExecutionFinishedEvent)
    assert event_log[2].method_name == "user_signs_up"

    assert isinstance(event_log[3], MethodExecutionStartedEvent)
    assert event_log[3].method_name == "send_welcome_message"
    assert event_log[3].params == {}
    assert getattr(event_log[3].state, "sent") is False

    assert isinstance(event_log[4], MethodExecutionFinishedEvent)
    assert event_log[4].method_name == "send_welcome_message"
    assert getattr(event_log[4].state, "sent") is True
    assert event_log[4].result == "Welcome, Anakin!"

    assert isinstance(event_log[5], FlowFinishedEvent)
    assert event_log[5].flow_name == "OnboardingFlow"
    assert event_log[5].result == "Welcome, Anakin!"
    assert isinstance(event_log[5].timestamp, datetime)

|

||||

|

||||

def test_stateless_flow_event_emission():
    """Test that the correct events are emitted stateless during flow execution
    with all fields validated."""

    class StatelessFlow(Flow):
        @start()
        def init(self):
            pass

        @listen(init)
        def process(self):
            return "Deeds will not be less valiant because they are unpraised."

    event_log = []

    def handle_event(_, event):
        event_log.append(event)

    flow = StatelessFlow()
    flow.event_emitter.connect(handle_event)
    flow.kickoff()

    assert isinstance(event_log[0], FlowStartedEvent)
    assert event_log[0].flow_name == "StatelessFlow"
    assert event_log[0].inputs is None
    assert isinstance(event_log[0].timestamp, datetime)

    assert isinstance(event_log[1], MethodExecutionStartedEvent)
    assert event_log[1].method_name == "init"

    assert isinstance(event_log[2], MethodExecutionFinishedEvent)
    assert event_log[2].method_name == "init"

    assert isinstance(event_log[3], MethodExecutionStartedEvent)
    assert event_log[3].method_name == "process"

    assert isinstance(event_log[4], MethodExecutionFinishedEvent)
    assert event_log[4].method_name == "process"

    assert isinstance(event_log[5], FlowFinishedEvent)
    assert event_log[5].flow_name == "StatelessFlow"
    assert (
        event_log[5].result
        == "Deeds will not be less valiant because they are unpraised."
    )
    assert isinstance(event_log[5].timestamp, datetime)

|

||||
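Review note: the unstructured-flow test above inspects the events of the two concurrent start methods in a loop because their relative order is not guaranteed. The sketch below illustrates the same idea with the standard library only, grouping events by method name before asserting on them. `Started`, `Finished`, and `check_pairs` are hypothetical stand-ins introduced for illustration; they are not CrewAI's event classes or helpers.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Started:  # hypothetical stand-in for a "method started" event
    method_name: str


@dataclass
class Finished:  # hypothetical stand-in for a "method finished" event
    method_name: str
    result: object = None


def check_pairs(events):
    """Return {method_name: result}, requiring one Started followed by one Finished per method."""
    by_method = defaultdict(list)
    for event in events:
        by_method[event.method_name].append(event)

    results = {}
    for name, group in by_method.items():
        assert len(group) == 2, f"expected 2 events for {name}, got {len(group)}"
        assert isinstance(group[0], Started), f"{name} finished before it started"
        assert isinstance(group[1], Finished), f"{name} started twice"
        results[name] = group[1].result
    return results


# Two possible interleavings of the same concurrent start methods.
order_a = [Started("prepare_flower"), Started("prepare_color"),
           Finished("prepare_color", "bar"), Finished("prepare_flower", "foo")]
order_b = [Started("prepare_color"), Finished("prepare_color", "bar"),
           Started("prepare_flower"), Finished("prepare_flower", "foo")]
summary_a = check_pairs(order_a)
summary_b = check_pairs(order_b)
```

Both interleavings produce the same summary, so an assertion written against the grouped form does not depend on scheduling order.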

182

tests/test_flow_thread_locks.py

Normal file

@@ -0,0 +1,182 @@

"""Tests for Flow with thread locks."""
import asyncio
import threading
from typing import Optional
from uuid import uuid4

import pytest
from pydantic import BaseModel, Field, field_validator

from crewai.flow.flow import Flow, listen, start


class ThreadSafeState(BaseModel):
    """Test state model with thread locks."""
    model_config = {
        "arbitrary_types_allowed": True,
        "exclude": {"lock"}
    }

    id: str = Field(default_factory=lambda: str(uuid4()))
    lock: Optional[threading.RLock] = Field(default=None, exclude=True)
    value: str = ""

    def __init__(self, **data):
        super().__init__(**data)
        if self.lock is None:
            self.lock = threading.RLock()


class LockFlow(Flow[ThreadSafeState]):
    """Test flow with thread locks."""
    initial_state = ThreadSafeState

    @start()
    async def step_1(self):
        with self.state.lock:
            self.state.value = "step 1"
            return "step 1"

    @listen(step_1)
    async def step_2(self, result):
        with self.state.lock:
            self.state.value += " -> step 2"
            return result + " -> step 2"


def test_flow_with_thread_locks():
    """Test Flow with thread locks in state."""
    flow = LockFlow()
    result = asyncio.run(flow.kickoff_async())
    assert result == "step 1 -> step 2"
    assert flow.state.value == "step 1 -> step 2"


def test_kickoff_async_with_lock_inputs():
    """Test kickoff_async with thread lock inputs."""
    flow = LockFlow()
    inputs = {
        "lock": threading.RLock(),
        "value": "test"
    }
    result = asyncio.run(flow.kickoff_async(inputs=inputs))
    assert result == "step 1 -> step 2"
    assert flow.state.value == "step 1 -> step 2"


class ComplexState(BaseModel):
    """Test state model with nested thread locks."""
    model_config = {
        "arbitrary_types_allowed": True,
        "exclude": {"outer_lock"}
    }

    id: str = Field(default_factory=lambda: str(uuid4()))
    outer_lock: Optional[threading.RLock] = Field(default=None, exclude=True)
    inner: Optional[ThreadSafeState] = Field(default_factory=ThreadSafeState)
    value: str = ""

    def __init__(self, **data):
        super().__init__(**data)
        if self.outer_lock is None:
            self.outer_lock = threading.RLock()


class NestedLockFlow(Flow[ComplexState]):
    """Test flow with nested thread locks."""
    initial_state = ComplexState

    @start()
    async def step_1(self):
        with self.state.outer_lock:
            with self.state.inner.lock:
                self.state.value = "outer"
                self.state.inner.value = "inner"
                return "step 1"

    @listen(step_1)
    async def step_2(self, result):
        with self.state.outer_lock:
            with self.state.inner.lock:
                self.state.value += " -> outer 2"
                self.state.inner.value += " -> inner 2"
                return result + " -> step 2"


def test_flow_with_nested_locks():
    """Test Flow with nested thread locks in state."""
    flow = NestedLockFlow()
    result = asyncio.run(flow.kickoff_async())
    assert result == "step 1 -> step 2"
    assert flow.state.value == "outer -> outer 2"
    assert flow.state.inner.value == "inner -> inner 2"


class AsyncLockState(BaseModel):
    """Test state model with async locks."""
    model_config = {
        "arbitrary_types_allowed": True,
        "exclude": {"lock", "event"}
    }

    id: str = Field(default_factory=lambda: str(uuid4()))
    lock: Optional[asyncio.Lock] = Field(default=None, exclude=True)
    event: Optional[asyncio.Event] = Field(default=None, exclude=True)
    value: str = ""

    def __init__(self, **data):
        super().__init__(**data)
        if self.lock is None:
            self.lock = asyncio.Lock()
        if self.event is None:
            self.event = asyncio.Event()


class AsyncLockFlow(Flow[AsyncLockState]):
    """Test flow with async locks."""
    initial_state = AsyncLockState

    @start()
    async def step_1(self):
        async with self.state.lock:
            self.state.value = "step 1"
            self.state.event.set()
            return "step 1"

    @listen(step_1)
    async def step_2(self, result):
        async with self.state.lock:
            await self.state.event.wait()
            self.state.value += " -> step 2"
            return result + " -> step 2"


def test_flow_with_async_locks():
    """Test Flow with async locks in state."""
    flow = AsyncLockFlow()
    result = asyncio.run(flow.kickoff_async())
    assert result == "step 1 -> step 2"
    assert flow.state.value == "step 1 -> step 2"


def test_flow_concurrent_access():
    """Test Flow with concurrent access."""
    flow = LockFlow()
    results = []
    errors = []

    async def run_flow():
        try:
            result = await flow.kickoff_async()
            results.append(result)
        except Exception as e:
            errors.append(e)

    async def test():
        tasks = [run_flow() for _ in range(10)]
        await asyncio.gather(*tasks)

    asyncio.run(test())
    assert len(results) == 10
    assert not errors
    assert all(result == "step 1 -> step 2" for result in results)
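Review note: the concurrent-access test above starts ten runs against one flow object and asserts only on the returned values. The sketch below reproduces that pattern with the standard library alone, to show what a lock held around each individual update does and does not guarantee. `SharedState`, `run_steps`, and `run_many` are hypothetical names introduced here, and nothing in the sketch is CrewAI's implementation.

```python
import asyncio
import threading


class SharedState:  # hypothetical stand-in for a flow state that carries a lock
    def __init__(self):
        self.lock = threading.RLock()
        self.value = ""


async def run_steps(state):
    """Two sequential steps that each update the shared state under its lock."""
    with state.lock:  # held only for the update, never across an await
        state.value = "step 1"
    result = "step 1"
    await asyncio.sleep(0)  # yield so other runs can interleave between the steps
    with state.lock:
        state.value += " -> step 2"
    return result + " -> step 2"


async def run_many(n):
    """Run n coroutines concurrently on one shared state; collect results and errors."""
    state = SharedState()
    outcomes = await asyncio.gather(
        *(run_steps(state) for _ in range(n)), return_exceptions=True
    )
    results = [o for o in outcomes if not isinstance(o, Exception)]
    errors = [o for o in outcomes if isinstance(o, Exception)]
    return results, errors, state.value


results, errors, final_value = asyncio.run(run_many(10))
```

In this sketch every run returns the expected string because the return value is built from local data. The shared `value`, however, can pick up a suffix from each interleaved run, since the lock protects single updates rather than a whole run. That may be why a test of this shape checks the results and not the shared state.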

@@ -124,36 +124,3 @@ def test_ask_question_to_wrong_agent():

|

||||

result

|

||||

== "\nError executing tool. coworker mentioned not found, it must be one of the following options:\n- researcher\n"

|

||||

)

|

||||

|

||||

|

||||

def test_delegate_work_with_similar_roles():
    """Test that delegation tools show rich context for similar roles."""
    researcher1 = Agent(
        role="Quick Researcher",
        goal="Find information quickly, prioritizing speed",
        backstory="Specialist in rapid information gathering"
    )
    researcher2 = Agent(
        role="Deep Researcher",
        goal="Find detailed and thorough information",
        backstory="Expert in comprehensive research"
    )
    tools = AgentTools(agents=[researcher1, researcher2]).tools()
    delegate_tool = tools[0]
    ask_tool = tools[1]

    # Verify tool descriptions include goals and backstories
    assert "Quick Researcher (Goal: Find information quickly" in delegate_tool.description
    assert "Deep Researcher (Goal: Find detailed" in delegate_tool.description
    assert "Specialist in rapid information gathering" in delegate_tool.description
    assert "Expert in comprehensive research" in delegate_tool.description

    # Verify ask tool also has the enhanced descriptions
    assert "Quick Researcher (Goal: Find information quickly" in ask_tool.description
    assert "Deep Researcher (Goal: Find detailed" in ask_tool.description
    assert "Specialist in rapid information gathering" in ask_tool.description
    assert "Expert in comprehensive research" in ask_tool.description

    # Verify multiline formatting
    assert "\n- " in delegate_tool.description
    assert "\n- " in ask_tool.description

|

||||
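Review note: the delegation-tool hunks in this comparison switch the `{coworkers}` placeholder between two shapes: a comma-separated list of roles on the same line as the prompt, and a multi-line bulleted list that includes each coworker's goal. The sketch below contrasts the two under stated assumptions. `Coworker`, `compact`, and `detailed` are hypothetical helpers, the templates are shortened from the strings shown in the diff, and the bullet layout is inferred from the test assertions (backstories are omitted because their exact format is not visible here).

```python
from dataclasses import dataclass


@dataclass
class Coworker:  # hypothetical stand-in for an agent; not CrewAI's Agent class
    role: str
    goal: str


# Shortened forms of the two template variants that appear in the diff.
INLINE_TEMPLATE = "Delegate a specific task to one of the following coworkers: {coworkers}\n"
MULTILINE_TEMPLATE = "Delegate a specific task to one of the following coworkers:\n{coworkers}\n"


def compact(coworkers):
    """Comma-separated role names, matching the 'Researcher, Senior Writer' assertions."""
    return ", ".join(c.role for c in coworkers)


def detailed(coworkers):
    """One bullet per coworker with its goal (layout inferred from the '\\n- ' assertion)."""
    return "\n".join(f"- {c.role} (Goal: {c.goal})" for c in coworkers)


team = [
    Coworker("Researcher", "Make the best research and analysis on content about AI and AI agents"),
    Coworker("Senior Writer", "Write the best content about AI and AI agents."),
]
compact_description = INLINE_TEMPLATE.format(coworkers=compact(team))
detailed_description = MULTILINE_TEMPLATE.format(coworkers=detailed(team))
```

The compact form keeps the tool description short. The detailed form gives a manager more to go on when roles are similar, at the cost of a longer prompt.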

419

uv.lock

generated

@@ -198,15 +198,6 @@ wheels = [

|

||||

{ url = "https://files.pythonhosted.org/packages/39/e3/893e8757be2612e6c266d9bb58ad2e3651524b5b40cf56761e985a28b13e/asgiref-3.8.1-py3-none-any.whl", hash = "sha256:3e1e3ecc849832fe52ccf2cb6686b7a55f82bb1d6aee72a58826471390335e47", size = 23828 },

|

||||

]

|

||||

|

||||

[[package]]

|

||||

name = "asn1crypto"

|

||||

version = "1.5.1"

|

||||

source = { registry = "https://pypi.org/simple" }

|

||||

sdist = { url = "https://files.pythonhosted.org/packages/de/cf/d547feed25b5244fcb9392e288ff9fdc3280b10260362fc45d37a798a6ee/asn1crypto-1.5.1.tar.gz", hash = "sha256:13ae38502be632115abf8a24cbe5f4da52e3b5231990aff31123c805306ccb9c", size = 121080 }

|

||||

wheels = [

|

||||

{ url = "https://files.pythonhosted.org/packages/c9/7f/09065fd9e27da0eda08b4d6897f1c13535066174cc023af248fc2a8d5e5a/asn1crypto-1.5.1-py2.py3-none-any.whl", hash = "sha256:db4e40728b728508912cbb3d44f19ce188f218e9eba635821bb4b68564f8fd67", size = 105045 },

|

||||

]

|

||||

|

||||

[[package]]

|

||||