Mirror of https://github.com/crewAIInc/crewAI.git (synced 2026-05-01 07:13:00 +00:00)

Compare commits: devin/1739 ... devin/1739 (30 commits)

| Author | SHA1 | Date | |
|---|---|---|---|
| | ed139b3cc7 | | |
| | d3c712a473 | | |
| | baea1af374 | | |
| | e587a8c433 | | |
| | c8b01295f5 | | |
| | 812b63af0f | | |
| | b47aaa10c6 | | |
| | 92101e77e4 | | |
| | 96f6210fa6 | | |
| | 5d7282971a | | |
| | 09a6fab35f | | |
| | 3bf93f1091 | | |
| | eeeb46ff85 | | |
| | ce44f3bc09 | | |
| | 3dd20e3503 | | |
| | 44502d73f5 | | |
| | b62c908626 | | |
| | d52fd09602 | | |
| | d6800d8957 | | |
| | 2fd7506ed9 | | |
| | 161084aff2 | | |
| | b145cb3247 | | |
| | 1adbcf697d | | |
| | e51355200a | | |
| | 47818f4f41 | | |
| | 9b10fd47b0 | | |
| | c408368267 | | |
| | 90b3145e92 | | |
| | fbd0e015d5 | | |
| | 17e25fb842 | | |

@@ -91,7 +91,7 @@ result = crew.kickoff(inputs={"question": "What city does John live in and how o

|

||||

```

|

||||

|

||||

|

||||

Here's another example with the `CrewDoclingSource`. The CrewDoclingSource is actually quite versatile and can handle multiple file formats including TXT, PDF, DOCX, HTML, and more.

|

||||

Here's another example with the `CrewDoclingSource`. The CrewDoclingSource is actually quite versatile and can handle multiple file formats including MD, PDF, DOCX, HTML, and more.

|

||||

|

||||

<Note>

|

||||

You need to install `docling` for the following example to work: `uv add docling`

|

||||

@@ -152,10 +152,10 @@ Here are examples of how to use different types of knowledge sources:

|

||||

|

||||

### Text File Knowledge Source

|

||||

```python

|

||||

from crewai.knowledge.source.crew_docling_source import CrewDoclingSource

|

||||

from crewai.knowledge.source.text_file_knowledge_source import TextFileKnowledgeSource

|

||||

|

||||

# Create a text file knowledge source

|

||||

text_source = CrewDoclingSource(

|

||||

text_source = TextFileKnowledgeSource(

|

||||

file_paths=["document.txt", "another.txt"]

|

||||

)

|

||||

|

||||

|

||||

@@ -282,6 +282,19 @@ my_crew = Crew(

|

||||

|

||||

### Using Google AI embeddings

|

||||

|

||||

#### Prerequisites

|

||||

Before using Google AI embeddings, ensure you have:

|

||||

- Access to the Gemini API

|

||||

- The necessary API keys and permissions

|

||||

|

||||

You will need to update your *pyproject.toml* dependencies:

|

||||

```toml

|

||||

dependencies = [

|

||||

"google-generativeai>=0.8.4", #main version in January/2025 - crewai v.0.100.0 and crewai-tools 0.33.0

|

||||

"crewai[tools]>=0.100.0,<1.0.0"

|

||||

]

|

||||

```

|

||||

|

||||

```python Code

|

||||

from crewai import Crew, Agent, Task, Process

|

||||

|

||||

@@ -434,6 +447,38 @@ my_crew = Crew(

|

||||

)

|

||||

```

|

||||

|

||||

### Using Amazon Bedrock embeddings

|

||||

|

||||

```python Code

|

||||

# Note: Ensure you have installed `boto3` for Bedrock embeddings to work.

|

||||

|

||||

import os

|

||||

import boto3

|

||||

from crewai import Crew, Agent, Task, Process

|

||||

|

||||

boto3_session = boto3.Session(

|

||||

region_name=os.environ.get("AWS_REGION_NAME"),

|

||||

aws_access_key_id=os.environ.get("AWS_ACCESS_KEY_ID"),

|

||||

aws_secret_access_key=os.environ.get("AWS_SECRET_ACCESS_KEY")

|

||||

)

|

||||

|

||||

my_crew = Crew(

|

||||

agents=[...],

|

||||

tasks=[...],

|

||||

process=Process.sequential,

|

||||

memory=True,

|

||||

embedder={

|

||||

"provider": "bedrock",

|

||||

"config":{

|

||||

"session": boto3_session,

|

||||

"model": "amazon.titan-embed-text-v2:0",

|

||||

"vector_dimension": 1024

|

||||

}

|

||||

},

|

||||

verbose=True

|

||||

)

|

||||

```

|

||||

|

||||

### Adding Custom Embedding Function

|

||||

|

||||

```python Code

|

||||

|

||||

@@ -268,7 +268,7 @@ analysis_task = Task(

|

||||

|

||||

Task guardrails provide a way to validate and transform task outputs before they

|

||||

are passed to the next task. This feature helps ensure data quality and provides

|

||||

efeedback to agents when their output doesn't meet specific criteria.

|

||||

feedback to agents when their output doesn't meet specific criteria.
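As a rough illustration of the validate-and-transform idea, the sketch below assumes a guardrail is a plain function that returns a `(success, value)` tuple: the cleaned output on success, or a feedback message on failure. The name `validate_word_limit` and the `max_words` parameter are hypothetical, and the sketch works on a raw string rather than on CrewAI's task output object.

```python
from typing import Any, Tuple


def validate_word_limit(output: str, max_words: int = 50) -> Tuple[bool, Any]:
    """Hypothetical guardrail: reject long outputs, otherwise return a trimmed copy."""
    words = output.split()
    if len(words) > max_words:
        # Failure: the second element is feedback the agent could act on.
        return (False, f"Output has {len(words)} words; the limit is {max_words}.")
    # Success: the second element is the (possibly transformed) output.
    return (True, output.strip())


ok_result = validate_word_limit("  short answer ")
bad_result = validate_word_limit("one two three", max_words=2)
```

Returning the feedback message alongside the failure flag is what lets a retry loop tell the agent what to fix, rather than only that something was wrong.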

|

||||

|

||||

### Using Task Guardrails

|

||||

|

||||

|

||||

98

docs/how-to/langfuse-observability.mdx

Normal file


@@ -0,0 +1,98 @@

|

||||

---

|

||||

title: Agent Monitoring with Langfuse

|

||||

description: Learn how to integrate Langfuse with CrewAI via OpenTelemetry using OpenLit

|

||||

icon: magnifying-glass-chart

|

||||

---

|

||||

|

||||

# Integrate Langfuse with CrewAI

|

||||

|

||||

This notebook demonstrates how to integrate **Langfuse** with **CrewAI** using OpenTelemetry via the **OpenLit** SDK. By the end of this notebook, you will be able to trace your CrewAI applications with Langfuse for improved observability and debugging.

|

||||

|

||||

> **What is Langfuse?** [Langfuse](https://langfuse.com) is an open-source LLM engineering platform. It provides tracing and monitoring capabilities for LLM applications, helping developers debug, analyze, and optimize their AI systems. Langfuse integrates with various tools and frameworks via native integrations, OpenTelemetry, and APIs/SDKs.

|

||||

|

||||

## Get Started

|

||||

|

||||

We'll walk through a simple example of using CrewAI and integrating it with Langfuse via OpenTelemetry using OpenLit.

|

||||

|

||||

### Step 1: Install Dependencies

|

||||

|

||||

|

||||

```python

|

||||

%pip install langfuse openlit crewai crewai_tools

|

||||

```

|

||||

|

||||

### Step 2: Set Up Environment Variables

|

||||

|

||||

Set your Langfuse API keys and configure OpenTelemetry export settings to send traces to Langfuse. Please refer to the [Langfuse OpenTelemetry Docs](https://langfuse.com/docs/opentelemetry/get-started) for more information on the Langfuse OpenTelemetry endpoint `/api/public/otel` and authentication.

|

||||

|

||||

|

||||

```python

|

||||

import os

|

||||

import base64

|

||||

|

||||

LANGFUSE_PUBLIC_KEY="pk-lf-..."

|

||||

LANGFUSE_SECRET_KEY="sk-lf-..."

|

||||

LANGFUSE_AUTH=base64.b64encode(f"{LANGFUSE_PUBLIC_KEY}:{LANGFUSE_SECRET_KEY}".encode()).decode()

|

||||

|

||||

os.environ["OTEL_EXPORTER_OTLP_ENDPOINT"] = "https://cloud.langfuse.com/api/public/otel" # EU data region

|

||||

# os.environ["OTEL_EXPORTER_OTLP_ENDPOINT"] = "https://us.cloud.langfuse.com/api/public/otel" # US data region

|

||||

os.environ["OTEL_EXPORTER_OTLP_HEADERS"] = f"Authorization=Basic {LANGFUSE_AUTH}"

|

||||

|

||||

# your openai key

|

||||

os.environ["OPENAI_API_KEY"] = "sk-..."

|

||||

```
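The header value set above is ordinary HTTP Basic authentication: the public and secret keys joined by a colon and base64-encoded. A minimal sketch of that step in isolation follows; the helper name `build_otlp_auth_header` and the example keys are placeholders, not part of Langfuse or OpenTelemetry.

```python
import base64


def build_otlp_auth_header(public_key: str, secret_key: str) -> str:
    """Return a value suitable for OTEL_EXPORTER_OTLP_HEADERS using Basic auth."""
    token = base64.b64encode(f"{public_key}:{secret_key}".encode()).decode()
    return f"Authorization=Basic {token}"


# Placeholder keys, not real credentials.
header = build_otlp_auth_header("pk-lf-example", "sk-lf-example")

# Decoding the token recovers the "public:secret" pair, which is a quick
# way to check that the header was assembled correctly.
decoded = base64.b64decode(header.split("Basic ", 1)[1]).decode()
```

Because base64 is an encoding and not encryption, the header should be treated as a secret in the same way as the keys themselves.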

|

||||

|

||||

### Step 3: Initialize OpenLit

|

||||

|

||||

Initialize the OpenLit OpenTelemetry instrumentation SDK to start capturing OpenTelemetry traces.

|

||||

|

||||

|

||||

```python

|

||||

import openlit

|

||||

|

||||

openlit.init()

|

||||

```

|

||||

|

||||

### Step 4: Create a Simple CrewAI Application

|

||||

|

||||

We'll create a simple CrewAI application where multiple agents collaborate to answer a user's question.

|

||||

|

||||

|

||||

```python

|

||||

from crewai import Agent, Task, Crew

|

||||

|

||||

from crewai_tools import (

|

||||

WebsiteSearchTool

|

||||

)

|

||||

|

||||

web_rag_tool = WebsiteSearchTool()

|

||||

|

||||

writer = Agent(

|

||||

role="Writer",

|

||||

goal="You make math engaging and understandable for young children through poetry",

|

||||

backstory="You're an expert in writing haikus but you know nothing of math.",

|

||||

tools=[web_rag_tool],

|

||||

)

|

||||

|

||||

task = Task(description=("What is {multiplication}?"),

|

||||

expected_output=("Compose a haiku that includes the answer."),

|

||||

agent=writer)

|

||||

|

||||

crew = Crew(

|

||||

agents=[writer],

|

||||

tasks=[task],

|

||||

share_crew=False

|

||||

)

|

||||

```

|

||||

|

||||

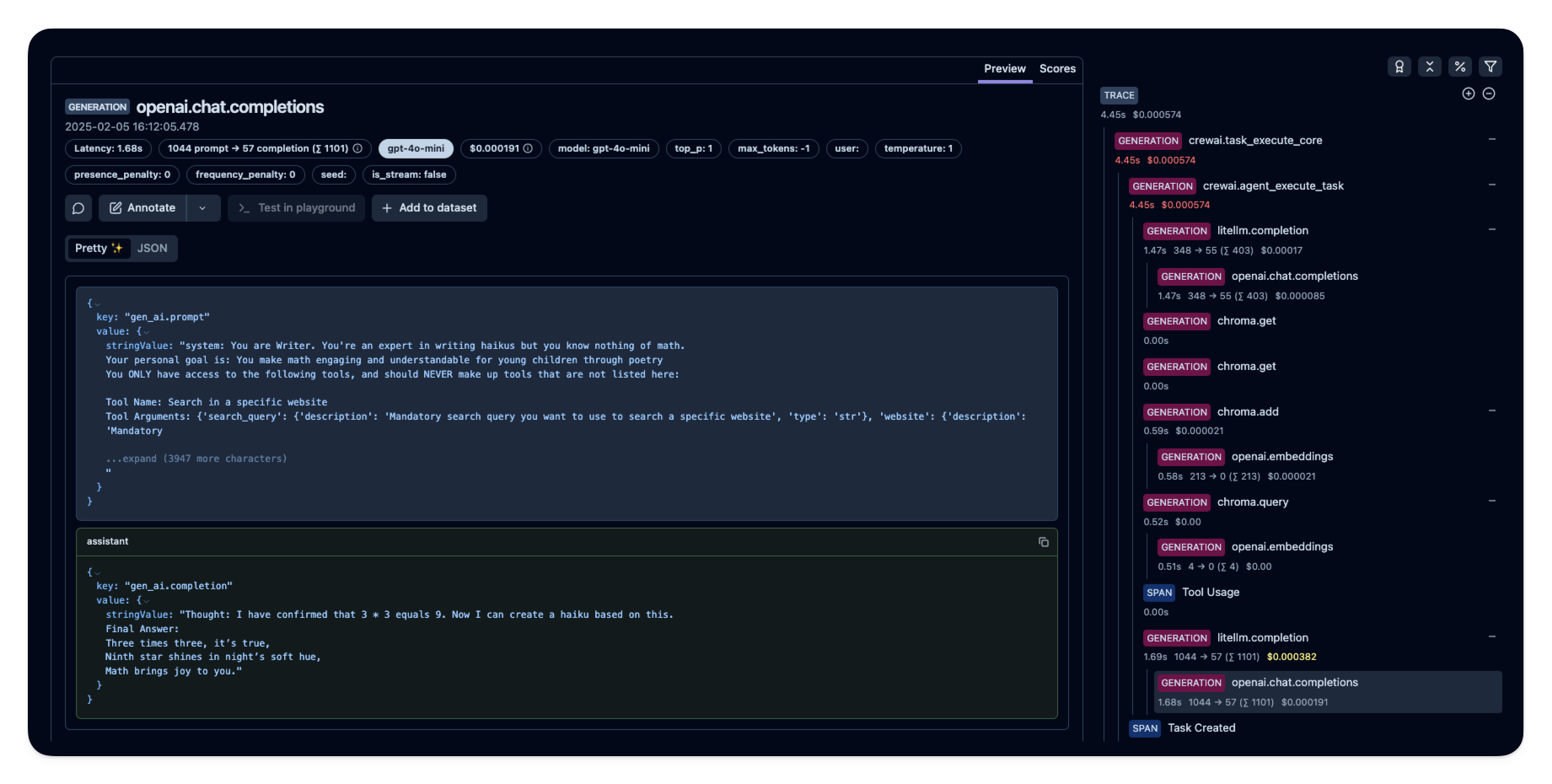

### Step 5: See Traces in Langfuse

|

||||

|

||||

After running the agent, you can view the traces generated by your CrewAI application in [Langfuse](https://cloud.langfuse.com). You should see detailed steps of the LLM interactions, which can help you debug and optimize your AI agent.

|

||||

|

||||

|

||||

|

||||

_[Public example trace in Langfuse](https://cloud.langfuse.com/project/cloramnkj0002jz088vzn1ja4/traces/e2cf380ffc8d47d28da98f136140642b?timestamp=2025-02-05T15%3A12%3A02.717Z&observation=3b32338ee6a5d9af)_

|

||||

|

||||

## References

|

||||

|

||||

- [Langfuse OpenTelemetry Docs](https://langfuse.com/docs/opentelemetry/get-started)

|

||||

@@ -1,211 +0,0 @@

|

||||

# Portkey Integration with CrewAI

|

||||

<img src="https://raw.githubusercontent.com/siddharthsambharia-portkey/Portkey-Product-Images/main/Portkey-CrewAI.png" alt="Portkey CrewAI Header Image" width="70%" />

|

||||

|

||||

|

||||

[Portkey](https://portkey.ai/?utm_source=crewai&utm_medium=crewai&utm_campaign=crewai) is a 2-line upgrade to make your CrewAI agents reliable, cost-efficient, and fast.

|

||||

|

||||

Portkey adds 4 core production capabilities to any CrewAI agent:

|

||||

1. Routing to **200+ LLMs**
2. Making each LLM call more robust
3. Full-stack tracing & cost, performance analytics
4. Real-time guardrails to enforce behavior

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

## Getting Started

|

||||

|

||||

1. **Install Required Packages:**

|

||||

|

||||

```bash

|

||||

pip install -qU crewai portkey-ai

|

||||

```

|

||||

|

||||

2. **Configure the LLM Client:**

|

||||

|

||||

To build CrewAI Agents with Portkey, you'll need two keys:

|

||||

- **Portkey API Key**: Sign up on the [Portkey app](https://app.portkey.ai/?utm_source=crewai&utm_medium=crewai&utm_campaign=crewai) and copy your API key

|

||||

- **Virtual Key**: Virtual Keys securely manage your LLM API keys in one place. Store your LLM provider API keys securely in Portkey's vault

|

||||

|

||||

```python

|

||||

from crewai import LLM

|

||||

from portkey_ai import createHeaders, PORTKEY_GATEWAY_URL

|

||||

|

||||

gpt_llm = LLM(

|

||||

model="gpt-4",

|

||||

base_url=PORTKEY_GATEWAY_URL,

|

||||

api_key="dummy", # We are using Virtual key

|

||||

extra_headers=createHeaders(

|

||||

api_key="YOUR_PORTKEY_API_KEY",

|

||||

virtual_key="YOUR_VIRTUAL_KEY", # Enter your Virtual key from Portkey

|

||||

)

|

||||

)

|

||||

```

|

||||

|

||||

3. **Create and Run Your First Agent:**

|

||||

|

||||

```python

|

||||

from crewai import Agent, Task, Crew

|

||||

|

||||

# Define your agents with roles and goals

|

||||

coder = Agent(

|

||||

role='Software developer',

|

||||

goal='Write clear, concise code on demand',

|

||||

backstory='An expert coder with a keen eye for software trends.',

|

||||

llm=gpt_llm

|

||||

)

|

||||

|

||||

# Create tasks for your agents

|

||||

task1 = Task(

|

||||

description="Define the HTML for making a simple website with heading- Hello World! Portkey is working!",

|

||||

expected_output="A clear and concise HTML code",

|

||||

agent=coder

|

||||

)

|

||||

|

||||

# Instantiate your crew

|

||||

crew = Crew(

|

||||

agents=[coder],

|

||||

tasks=[task1],

|

||||

)

|

||||

|

||||

result = crew.kickoff()

|

||||

print(result)

|

||||

```

|

||||

|

||||

|

||||

## Key Features

|

||||

|

||||

| Feature | Description |
|---------|-------------|
| 🌐 Multi-LLM Support | Access OpenAI, Anthropic, Gemini, Azure, and 250+ providers through a unified interface |
| 🛡️ Production Reliability | Implement retries, timeouts, load balancing, and fallbacks |
| 📊 Advanced Observability | Track 40+ metrics including costs, tokens, latency, and custom metadata |
| 🔍 Comprehensive Logging | Debug with detailed execution traces and function call logs |
| 🚧 Security Controls | Set budget limits and implement role-based access control |
| 🔄 Performance Analytics | Capture and analyze feedback for continuous improvement |
| 💾 Intelligent Caching | Reduce costs and latency with semantic or simple caching |

|

||||

|

||||

|

||||

## Production Features with Portkey Configs

|

||||

|

||||

All features mentioned below are through Portkey's Config system. Portkey's Config system allows you to define routing strategies using simple JSON objects in your LLM API calls. You can create and manage Configs directly in your code or through the Portkey Dashboard. Each Config has a unique ID for easy reference.

|

||||

|

||||

<Frame>

|

||||

<img src="https://raw.githubusercontent.com/Portkey-AI/docs-core/refs/heads/main/images/libraries/libraries-3.avif"/>

|

||||

</Frame>

|

||||

|

||||

|

||||

### 1. Use 250+ LLMs

|

||||

Access various LLMs like Anthropic, Gemini, Mistral, Azure OpenAI, and more with minimal code changes. Switch between providers or use them together seamlessly. [Learn more about Universal API](https://portkey.ai/docs/product/ai-gateway/universal-api)

|

||||

|

||||

|

||||

Easily switch between different LLM providers:

|

||||

|

||||

```python

|

||||

# Anthropic Configuration

|

||||

anthropic_llm = LLM(

|

||||

model="claude-3-5-sonnet-latest",

|

||||

base_url=PORTKEY_GATEWAY_URL,

|

||||

api_key="dummy",

|

||||

extra_headers=createHeaders(

|

||||

api_key="YOUR_PORTKEY_API_KEY",

|

||||

virtual_key="YOUR_ANTHROPIC_VIRTUAL_KEY", #You don't need provider when using Virtual keys

|

||||

trace_id="anthropic_agent"

|

||||

)

|

||||

)

|

||||

|

||||

# Azure OpenAI Configuration

|

||||

azure_llm = LLM(

|

||||

model="gpt-4",

|

||||

base_url=PORTKEY_GATEWAY_URL,

|

||||

api_key="dummy",

|

||||

extra_headers=createHeaders(

|

||||

api_key="YOUR_PORTKEY_API_KEY",

|

||||

virtual_key="YOUR_AZURE_VIRTUAL_KEY", #You don't need provider when using Virtual keys

|

||||

trace_id="azure_agent"

|

||||

)

|

||||

)

|

||||

```

|

||||

|

||||

|

||||

### 2. Caching

|

||||

Improve response times and reduce costs with two powerful caching modes:

|

||||

- **Simple Cache**: Perfect for exact matches
- **Semantic Cache**: Matches responses for requests that are semantically similar

|

||||

[Learn more about Caching](https://portkey.ai/docs/product/ai-gateway/cache-simple-and-semantic)

|

||||

|

||||

```py

|

||||

config = {

|

||||

"cache": {

|

||||

"mode": "semantic", # or "simple" for exact matching

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

### 3. Production Reliability

|

||||

Portkey provides comprehensive reliability features:

|

||||

- **Automatic Retries**: Handle temporary failures gracefully
- **Request Timeouts**: Prevent hanging operations
- **Conditional Routing**: Route requests based on specific conditions
- **Fallbacks**: Set up automatic provider failovers
- **Load Balancing**: Distribute requests efficiently

|

||||

|

||||

[Learn more about Reliability Features](https://portkey.ai/docs/product/ai-gateway/)

|

||||

|

||||

|

||||

|

||||

### 4. Metrics

|

||||

|

||||

Agent runs are complex. Portkey automatically logs **40+ comprehensive metrics** for your AI agents, including cost, tokens used, latency, etc. Whether you need a broad overview or granular insights into your agent runs, Portkey's customizable filters provide the metrics you need.

|

||||

|

||||

|

||||

- Cost per agent interaction
- Response times and latency
- Token usage and efficiency
- Success/failure rates
- Cache hit rates

|

||||

|

||||

<img src="https://github.com/siddharthsambharia-portkey/Portkey-Product-Images/blob/main/Portkey-Dashboard.png?raw=true" width="70%" alt="Portkey Dashboard" />

|

||||

|

||||

### 5. Detailed Logging

|

||||

Logs are essential for understanding agent behavior, diagnosing issues, and improving performance. They provide a detailed record of agent activities and tool use, which is crucial for debugging and optimizing processes.

|

||||

|

||||

|

||||

Access a dedicated section to view records of agent executions, including parameters, outcomes, function calls, and errors. Filter logs based on multiple parameters such as trace ID, model, tokens used, and metadata.

|

||||

|

||||

<details>

|

||||

<summary><b>Traces</b></summary>

|

||||

<img src="https://raw.githubusercontent.com/siddharthsambharia-portkey/Portkey-Product-Images/main/Portkey-Traces.png" alt="Portkey Traces" width="70%" />

|

||||

</details>

|

||||

|

||||

<details>

|

||||

<summary><b>Logs</b></summary>

|

||||

<img src="https://raw.githubusercontent.com/siddharthsambharia-portkey/Portkey-Product-Images/main/Portkey-Logs.png" alt="Portkey Logs" width="70%" />

|

||||

</details>

|

||||

|

||||

### 6. Enterprise Security Features

|

||||

- Set budget limits and rate limits per Virtual Key (disposable API keys)
- Implement role-based access control
- Track system changes with audit logs
- Configure data retention policies

|

||||

|

||||

|

||||

|

||||

For detailed information on creating and managing Configs, visit the [Portkey documentation](https://docs.portkey.ai/product/ai-gateway/configs).

|

||||

|

||||

## Resources

|

||||

|

||||

- [📘 Portkey Documentation](https://docs.portkey.ai)
- [📊 Portkey Dashboard](https://app.portkey.ai/?utm_source=crewai&utm_medium=crewai&utm_campaign=crewai)
- [🐦 Twitter](https://twitter.com/portkeyai)
- [💬 Discord Community](https://discord.gg/DD7vgKK299)

@@ -1,5 +1,5 @@

|

||||

---

|

||||

title: Portkey Observability and Guardrails

|

||||

title: Agent Monitoring with Portkey

|

||||

description: How to use Portkey with CrewAI

|

||||

icon: key

|

||||

---

|

||||

|

||||

@@ -103,7 +103,8 @@

|

||||

"how-to/langtrace-observability",

|

||||

"how-to/mlflow-observability",

|

||||

"how-to/openlit-observability",

|

||||

"how-to/portkey-observability"

|

||||

"how-to/portkey-observability",

|

||||

"how-to/langfuse-observability"

|

||||

]

|

||||

},

|

||||

{

|

||||

|

||||

@@ -1,4 +1,5 @@

|

||||

import json

|

||||

import os

|

||||

import time

|

||||

from collections import defaultdict

|

||||

from pathlib import Path

|

||||

@@ -153,6 +154,56 @@ def read_cache_file(cache_file):

|

||||

return None

|

||||

|

||||

|

||||

def validate_response(response):

|

||||

"""

|

||||

Validates the response content type.

|

||||

|

||||

Args:

|

||||

- response: The HTTP response object.

|

||||

|

||||

Returns:

|

||||

- bool: True if the content type is valid, False otherwise.

|

||||

"""

|

||||

content_type = response.headers.get('content-type', '').lower()

|

||||

valid_types = ['application/json', 'application/json; charset=utf-8']

|

||||

if not any(content_type.startswith(t) for t in valid_types):

|

||||

click.secho(f"Error: Expected JSON response but got {content_type}", fg="red")

|

||||

return False

|

||||

return True
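To see how the content-type check behaves, here is a small standalone sketch. It assumes a stand-in response object built with `SimpleNamespace` and a plain `dict` for headers (the real `requests` headers mapping is case-insensitive, which this stand-in is not), and the name `is_json_response` is hypothetical.

```python
from types import SimpleNamespace


def is_json_response(response) -> bool:
    """Return True when the Content-Type header starts with application/json."""
    content_type = response.headers.get("content-type", "").lower()
    # A prefix match already covers "application/json; charset=utf-8".
    return content_type.startswith("application/json")


json_resp = SimpleNamespace(headers={"content-type": "Application/JSON; charset=utf-8"})
html_resp = SimpleNamespace(headers={"content-type": "text/html"})
no_header = SimpleNamespace(headers={})

checks = [is_json_response(r) for r in (json_resp, html_resp, no_header)]
```

A prefix match also accepts types such as `application/json-seq`; a stricter variant would split on `;` and compare the media type exactly.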

|

||||

|

||||

def handle_provider_error(error, error_type="fetch"):

|

||||

"""

|

||||

Handles provider data errors with consistent messaging.

|

||||

|

||||

Args:

|

||||

- error: The error object.

|

||||

- error_type: Type of error for message selection.

|

||||

|

||||

Returns:

|

||||

- None: Always returns None to indicate error.

|

||||

"""

|

||||

error_messages = {

|

||||

"fetch": "Error fetching provider data",

|

||||

"parse": "Error parsing provider data",

|

||||

"unexpected": "Unexpected error"

|

||||

}

|

||||

base_message = error_messages.get(error_type, "Error")

|

||||

click.secho(f"{base_message}: {str(error)}", fg="red")

|

||||

return None

|

||||

|

||||

def invalidate_cache(cache_file):

|

||||

"""

|

||||

Invalidates the cache file in error scenarios.

|

||||

|

||||

Args:

|

||||

- cache_file: Path to the cache file.

|

||||

"""

|

||||

try:

|

||||

if os.path.exists(cache_file):

|

||||

os.remove(cache_file)

|

||||

except OSError as e:

|

||||

click.secho(f"Warning: Could not clear cache file: {e}", fg="yellow")
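The cache invalidation step can be exercised on its own with a temporary directory. The sketch below mirrors the existence check and `OSError` handling shown above, but, as an illustrative change, returns a boolean so the effect is observable; it uses `print` instead of `click.secho` to stay dependency-free.

```python
import os
import tempfile


def invalidate_cache(cache_file) -> bool:
    """Delete cache_file if it exists; return True when a file was removed."""
    try:
        if os.path.exists(cache_file):
            os.remove(cache_file)
            return True
    except OSError as exc:
        # Best effort: a failed cleanup is reported but never raised.
        print(f"Warning: could not clear cache file: {exc}")
    return False


with tempfile.TemporaryDirectory() as tmp:
    cache_path = os.path.join(tmp, "provider_cache.json")
    with open(cache_path, "w") as f:
        f.write("{}")

    first_call = invalidate_cache(cache_path)   # file exists, gets removed
    second_call = invalidate_cache(cache_path)  # nothing left to remove
    still_exists = os.path.exists(cache_path)
```

Calling it twice shows that the operation is idempotent, which matters because the fetch path above invokes it from several error branches.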

|

||||

|

||||

def fetch_provider_data(cache_file):

|

||||

"""

|

||||

Fetches provider data from a specified URL and caches it to a file.

|

||||

@@ -166,15 +217,24 @@ def fetch_provider_data(cache_file):

|

||||

try:

|

||||

response = requests.get(JSON_URL, stream=True, timeout=60)

|

||||

response.raise_for_status()

|

||||

|

||||

if not validate_response(response):

|

||||

invalidate_cache(cache_file)

|

||||

return None

|

||||

|

||||

data = download_data(response)

|

||||

with open(cache_file, "w") as f:

|

||||

json.dump(data, f)

|

||||

return data

|

||||

except requests.RequestException as e:

|

||||

click.secho(f"Error fetching provider data: {e}", fg="red")

|

||||

except json.JSONDecodeError:

|

||||

click.secho("Error parsing provider data. Invalid JSON format.", fg="red")

|

||||

return None

|

||||

invalidate_cache(cache_file)

|

||||

return handle_provider_error(e, "fetch")

|

||||

except json.JSONDecodeError as e:

|

||||

invalidate_cache(cache_file)

|

||||

return handle_provider_error(e, "parse")

|

||||

except Exception as e:

|

||||

invalidate_cache(cache_file)

|

||||

return handle_provider_error(e, "unexpected")

|

||||

|

||||

|

||||

def download_data(response):

|

||||

@@ -206,7 +266,7 @@ def get_provider_data():

|

||||

Retrieves provider data from a cache file, filters out models based on provider criteria, and returns a dictionary of providers mapped to their models.

|

||||

|

||||

Returns:

|

||||

- dict or None: A dictionary of providers mapped to their models or None if the operation fails.

|

||||

- dict: A dictionary of providers mapped to their models, using default providers if fetch fails.

|

||||

"""

|

||||

cache_dir = Path.home() / ".crewai"

|

||||

cache_dir.mkdir(exist_ok=True)

|

||||

@@ -215,7 +275,9 @@ def get_provider_data():

|

||||

|

||||

data = load_provider_data(cache_file, cache_expiry)

|

||||

if not data:

|

||||

return None

|

||||

# Return default providers if fetch fails

|

||||

return {provider.lower(): MODELS.get(provider.lower(), [])

|

||||

for provider in PROVIDERS}
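The fallback expression above builds a provider-to-models mapping from two constants. A minimal sketch with made-up values shows the resulting shape; the contents of `PROVIDERS` and `MODELS` here are illustrative assumptions, not the real constants from the CLI.

```python
# Hypothetical stand-ins for the CLI's PROVIDERS and MODELS constants.
PROVIDERS = ["OpenAI", "Anthropic", "Ollama"]
MODELS = {
    "openai": ["gpt-4"],
    "anthropic": ["claude-3-5-sonnet-latest"],
}


def default_provider_models(providers, models):
    """Map each lowercased provider name to its known models, or an empty list."""
    return {p.lower(): models.get(p.lower(), []) for p in providers}


defaults = default_provider_models(PROVIDERS, MODELS)
```

Providers without an entry in `MODELS` still appear in the result with an empty list, so callers always receive a dictionary and never `None`.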

|

||||

|

||||

provider_models = defaultdict(list)

|

||||

for model_name, properties in data.items():

|

||||

|

||||

@@ -1147,32 +1147,20 @@ class Crew(BaseModel):

|

||||

|

||||

def test(

|

||||

self,

|

||||

n_iterations: int = 1,

|

||||

n_iterations: int,

|

||||

openai_model_name: Optional[str] = None,

|

||||

llm: Optional[Union[str, LLM]] = None,

|

||||

inputs: Optional[Dict[str, Any]] = None,

|

||||

) -> None:

|

||||

"""Test and evaluate the Crew with the given inputs for n iterations.

|

||||

|

||||

Args:

|

||||

n_iterations: Number of iterations to run the test

|

||||

openai_model_name: OpenAI model name to use for evaluation (deprecated)

|

||||

llm: LLM instance or model name to use for evaluation

|

||||

inputs: Optional dictionary of inputs to pass to the crew

|

||||

"""

|

||||

if not llm and not openai_model_name:

|

||||

raise ValueError("Must provide either 'llm' or 'openai_model_name' parameter")

|

||||

|

||||

model_to_use = self._get_llm_instance(llm, openai_model_name)

|

||||

"""Test and evaluate the Crew with the given inputs for n iterations concurrently using concurrent.futures."""

|

||||

test_crew = self.copy()

|

||||

|

||||

self._test_execution_span = test_crew._telemetry.test_execution_span(

|

||||

test_crew,

|

||||

n_iterations,

|

||||

inputs,

|

||||

str(model_to_use.model),

|

||||

)

|

||||

evaluator = CrewEvaluator(test_crew, model_to_use)

|

||||

openai_model_name, # type: ignore[arg-type]

|

||||

) # type: ignore[arg-type]

|

||||

evaluator = CrewEvaluator(test_crew, openai_model_name) # type: ignore[arg-type]

|

||||

|

||||

for i in range(1, n_iterations + 1):

|

||||

evaluator.set_iteration(i)

|

||||

@@ -1180,28 +1168,6 @@ class Crew(BaseModel):

|

||||

|

||||

evaluator.print_crew_evaluation_result()

|

||||

|

||||

def _get_llm_instance(self, llm: Optional[Union[str, LLM]], openai_model_name: Optional[str]) -> LLM:

|

||||

"""Get an LLM instance from either llm or openai_model_name parameter.

|

||||

|

||||

Args:

|

||||

llm: LLM instance or model name

|

||||

openai_model_name: OpenAI model name (deprecated)

|

||||

|

||||

Returns:

|

||||

LLM instance

|

||||

|

||||

Raises:

|

||||

ValueError: If neither llm nor openai_model_name is provided

|

||||

"""

|

||||

model = llm if llm is not None else openai_model_name

|

||||

if model is None:

|

||||

raise ValueError("Must provide either 'llm' or 'openai_model_name' parameter")

|

||||

if isinstance(model, str):

|

||||

return LLM(model=model)

|

||||

if not isinstance(model, LLM):

|

||||

raise ValueError("Model must be either a string or an LLM instance")

|

||||

return model
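The resolution order in `_get_llm_instance` can be tried in isolation. This sketch replaces CrewAI's `LLM` with a small stand-in dataclass (an assumption made so the example runs without the library) and uses the hypothetical name `resolve_llm`.

```python
from dataclasses import dataclass
from typing import Optional, Union


@dataclass
class LLM:
    """Stand-in for crewai.LLM; only the model name is modeled here."""
    model: str


def resolve_llm(llm: Optional[Union[str, LLM]], openai_model_name: Optional[str]) -> LLM:
    # `llm` takes precedence; the deprecated name is only a fallback.
    model = llm if llm is not None else openai_model_name
    if model is None:
        raise ValueError("Must provide either 'llm' or 'openai_model_name' parameter")
    if isinstance(model, str):
        return LLM(model=model)
    if not isinstance(model, LLM):
        raise ValueError("Model must be either a string or an LLM instance")
    return model


from_string = resolve_llm("gpt-4o-mini", None)
from_legacy = resolve_llm(None, "gpt-4")
preferred = resolve_llm(LLM(model="custom"), "gpt-4")
```

Normalizing to a single `LLM` instance up front means the rest of the method only has to handle one type.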

|

||||

|

||||

def __repr__(self):

|

||||

return f"Crew(id={self.id}, process={self.process}, number_of_agents={len(self.agents)}, number_of_tasks={len(self.tasks)})"

|

||||

|

||||

|

||||

@@ -1,4 +1,5 @@

|

||||

import asyncio

|

||||

import copy

|

||||

import inspect

|

||||

import logging

|

||||

from typing import (

|

||||

@@ -394,7 +395,6 @@ class FlowMeta(type):

|

||||

or hasattr(attr_value, "__trigger_methods__")

|

||||

or hasattr(attr_value, "__is_router__")

|

||||

):

|

||||

|

||||

# Register start methods

|

||||

if hasattr(attr_value, "__is_start_method__"):

|

||||

start_methods.append(attr_name)

|

||||

@@ -569,6 +569,9 @@ class Flow(Generic[T], metaclass=FlowMeta):

|

||||

f"Initial state must be dict or BaseModel, got {type(self.initial_state)}"

|

||||

)

|

||||

|

||||

def _copy_state(self) -> T:

|

||||

return copy.deepcopy(self._state)
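Why a deep copy rather than a reference or a shallow copy: events that carry the state should reflect the moment they were emitted. A short sketch with a plain dictionary standing in for the flow state illustrates the difference.

```python
import copy

state = {"counter": 0, "items": []}

aliased = state                  # same object: later mutations show through
shallow = copy.copy(state)       # new dict, but the nested list is shared
snapshot = copy.deepcopy(state)  # fully independent copy

state["counter"] += 1
state["items"].append("step-1")
```

After the mutation, only `snapshot` still shows the original values; `shallow` keeps the old counter but sees the appended item because it shares the inner list.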

|

||||

|

||||

@property

|

||||

def state(self) -> T:

|

||||

return self._state

|

||||

@@ -740,6 +743,7 @@ class Flow(Generic[T], metaclass=FlowMeta):

|

||||

event=FlowStartedEvent(

|

||||

type="flow_started",

|

||||

flow_name=self.__class__.__name__,

|

||||

inputs=inputs,

|

||||

),

|

||||

)

|

||||

self._log_flow_event(

|

||||

@@ -803,6 +807,18 @@ class Flow(Generic[T], metaclass=FlowMeta):

|

||||

async def _execute_method(

|

||||

self, method_name: str, method: Callable, *args: Any, **kwargs: Any

|

||||

) -> Any:

|

||||

dumped_params = {f"_{i}": arg for i, arg in enumerate(args)} | (kwargs or {})

|

||||

self.event_emitter.send(

|

||||

self,

|

||||

event=MethodExecutionStartedEvent(

|

||||

type="method_execution_started",

|

||||

method_name=method_name,

|

||||

flow_name=self.__class__.__name__,

|

||||

params=dumped_params,

|

||||

state=self._copy_state(),

|

||||

),

|

||||

)

|

||||

|

||||

result = (

|

||||

await method(*args, **kwargs)

|

||||

if asyncio.iscoroutinefunction(method)

|

||||

@@ -812,6 +828,18 @@ class Flow(Generic[T], metaclass=FlowMeta):

|

||||

self._method_execution_counts[method_name] = (

|

||||

self._method_execution_counts.get(method_name, 0) + 1

|

||||

)

|

||||

|

||||

self.event_emitter.send(

|

||||

self,

|

||||

event=MethodExecutionFinishedEvent(

|

||||

type="method_execution_finished",

|

||||

method_name=method_name,

|

||||

flow_name=self.__class__.__name__,

|

||||

state=self._copy_state(),

|

||||

result=result,

|

||||

),

|

||||

)

|

||||

|

||||

return result
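The pattern in `_execute_method` (emit a started event, run the method, emit a finished event) can be sketched without the flow machinery. The version below is an assumption-laden simplification: events are plain dictionaries appended to a list instead of being sent through an event emitter, and `execute_with_events`, `flow_state`, and `add` are hypothetical names.

```python
import asyncio
import copy
from typing import Any, Callable, Dict, List

# Hypothetical in-memory event log standing in for the flow's event emitter.
events: List[Dict[str, Any]] = []


async def execute_with_events(
    name: str, method: Callable, state: Dict[str, Any], *args: Any, **kwargs: Any
) -> Any:
    # Positional args get synthetic keys (_0, _1, ...); kwargs keep their names.
    # The dict union operator requires Python 3.9+.
    params = {f"_{i}": arg for i, arg in enumerate(args)} | (kwargs or {})
    events.append({
        "type": "method_execution_started", "method_name": name,
        "params": params, "state": copy.deepcopy(state),
    })

    # Await coroutine functions; call plain functions directly.
    if asyncio.iscoroutinefunction(method):
        result = await method(*args, **kwargs)
    else:
        result = method(*args, **kwargs)

    events.append({
        "type": "method_execution_finished", "method_name": name,
        "state": copy.deepcopy(state), "result": result,
    })
    return result


flow_state = {"total": 0}


def add(amount: int, *, label: str = "") -> int:
    flow_state["total"] += amount
    return flow_state["total"]


result = asyncio.run(execute_with_events("add", add, flow_state, 5, label="first"))
```

Emitting both events from the one place where methods are executed avoids the duplicated emit calls that the listener code path carried before this change.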

|

||||

|

||||

async def _execute_listeners(self, trigger_method: str, result: Any) -> None:

|

||||

@@ -950,16 +978,6 @@ class Flow(Generic[T], metaclass=FlowMeta):

|

||||

"""

|

||||

try:

|

||||

method = self._methods[listener_name]

|

||||

|

||||

self.event_emitter.send(

|

||||

self,

|

||||

event=MethodExecutionStartedEvent(

|

||||

type="method_execution_started",

|

||||

method_name=listener_name,

|

||||

flow_name=self.__class__.__name__,

|

||||

),

|

||||

)

|

||||

|

||||

sig = inspect.signature(method)

|

||||

params = list(sig.parameters.values())

|

||||

method_params = [p for p in params if p.name != "self"]

|

||||

@@ -971,15 +989,6 @@ class Flow(Generic[T], metaclass=FlowMeta):

|

||||

else:

|

||||

listener_result = await self._execute_method(listener_name, method)

|

||||

|

||||

self.event_emitter.send(

|

||||

self,

|

||||

event=MethodExecutionFinishedEvent(

|

||||

type="method_execution_finished",

|

||||

method_name=listener_name,

|

||||

flow_name=self.__class__.__name__,

|

||||

),

|

||||

)

|

||||

|

||||

# Execute listeners (and possibly routers) of this listener

|

||||

await self._execute_listeners(listener_name, listener_result)

|

||||

|

||||

|

||||

@@ -1,6 +1,8 @@

|

||||

from dataclasses import dataclass, field

|

||||

from datetime import datetime

|

||||

from typing import Any, Optional

|

||||

from typing import Any, Dict, Optional, Union

|

||||

|

||||

from pydantic import BaseModel

|

||||

|

||||

|

||||

@dataclass

|

||||

@@ -15,17 +17,21 @@ class Event:

|

||||

|

||||

@dataclass

|

||||

class FlowStartedEvent(Event):

|

||||

pass

|

||||

inputs: Optional[Dict[str, Any]] = None

|

||||

|

||||

|

||||

@dataclass

|

||||

class MethodExecutionStartedEvent(Event):

|

||||

method_name: str

|

||||

state: Union[Dict[str, Any], BaseModel]

|

||||

params: Optional[Dict[str, Any]] = None

|

||||

|

||||

|

||||

@dataclass

|

||||

class MethodExecutionFinishedEvent(Event):

|

||||

method_name: str

|

||||

state: Union[Dict[str, Any], BaseModel]

|

||||

result: Any = None

|

||||

|

||||

|

||||

@dataclass

|

||||

|

||||

@@ -1,28 +1,138 @@

|

||||

from pathlib import Path

|

||||

from typing import Dict, List

|

||||

from typing import Dict, Iterator, List, Optional, Union

|

||||

from urllib.parse import urlparse

|

||||

|

||||

from crewai.knowledge.source.base_file_knowledge_source import BaseFileKnowledgeSource

|

||||

from pydantic import Field, field_validator

|

||||

|

||||

from crewai.knowledge.source.base_knowledge_source import BaseKnowledgeSource

|

||||

from crewai.utilities.constants import KNOWLEDGE_DIRECTORY

|

||||

from crewai.utilities.logger import Logger

|

||||

|

||||

|

||||

class ExcelKnowledgeSource(BaseFileKnowledgeSource):

|

||||

class ExcelKnowledgeSource(BaseKnowledgeSource):

|

||||

"""A knowledge source that stores and queries Excel file content using embeddings."""

|

||||

|

||||

def load_content(self) -> Dict[Path, str]:

|

||||

"""Load and preprocess Excel file content."""

|

||||

pd = self._import_dependencies()

|

||||

# override content to be a dict of file paths to sheet names to csv content

|

||||

|

||||

_logger: Logger = Logger(verbose=True)

|

||||

|

||||

file_path: Optional[Union[Path, List[Path], str, List[str]]] = Field(

|

||||

default=None,

|

||||

description="[Deprecated] The path to the file. Use file_paths instead.",

|

||||

)

|

||||

file_paths: Optional[Union[Path, List[Path], str, List[str]]] = Field(

|

||||

default_factory=list, description="The path to the file"

|

||||

)

|

||||

chunks: List[str] = Field(default_factory=list)

|

||||

content: Dict[Path, Dict[str, str]] = Field(default_factory=dict)

|

||||

safe_file_paths: List[Path] = Field(default_factory=list)

|

||||

|

||||

@field_validator("file_path", "file_paths", mode="before")

|

||||

def validate_file_path(cls, v, info):

|

||||

"""Validate that at least one of file_path or file_paths is provided."""

|

||||

# Single check if both are None, O(1) instead of nested conditions

|

||||

if (

|

||||

v is None

|

||||

and info.data.get(

|

||||

"file_path" if info.field_name == "file_paths" else "file_paths"

|

||||

)

|

||||

is None

|

||||

):

|

||||

raise ValueError("Either file_path or file_paths must be provided")

|

||||

return v

|

||||

|

||||

def _process_file_paths(self) -> List[Path]:

|

||||

"""Convert file_path to a list of Path objects."""

|

||||

|

||||

if hasattr(self, "file_path") and self.file_path is not None:

|

||||

self._logger.log(

|

||||

"warning",

|

||||

"The 'file_path' attribute is deprecated and will be removed in a future version. Please use 'file_paths' instead.",

|

||||

color="yellow",

|

||||

)

|

||||

self.file_paths = self.file_path

|

||||

|

||||

if self.file_paths is None:

|

||||

raise ValueError("Your source must be provided with a file_paths: []")

|

||||

|

||||

# Convert single path to list

|

||||

path_list: List[Union[Path, str]] = (

|

||||

[self.file_paths]

|

||||

if isinstance(self.file_paths, (str, Path))

|

||||

else list(self.file_paths)

|

||||

if isinstance(self.file_paths, list)

|

||||

else []

|

||||

)

|

||||

|

||||

if not path_list:

|

||||

raise ValueError(

|

||||

"file_path/file_paths must be a Path, str, or a list of these types"

|

||||

)

|

||||

|

||||

return [self.convert_to_path(path) for path in path_list]
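The path handling in `_process_file_paths` accepts a single path or a list and always returns a list of `Path` objects, with plain strings resolved under the knowledge directory. A minimal standalone sketch of that normalization follows. The helper name `normalize_paths` and the default `base_dir="knowledge"` are assumptions made for illustration; the diff only shows that a `KNOWLEDGE_DIRECTORY` constant is used.

```python
from pathlib import Path
from typing import List, Union

PathLike = Union[str, Path]


# Hypothetical helper for illustration; not part of crewAI.
def normalize_paths(
    value: Union[PathLike, List[PathLike], None],
    base_dir: str = "knowledge",  # assumed stand-in for KNOWLEDGE_DIRECTORY
) -> List[Path]:
    """Turn a single path or a list of paths into a list of Path objects."""
    if isinstance(value, (str, Path)):
        items = [value]
    elif isinstance(value, list):
        items = list(value)
    else:
        items = []

    if not items:
        raise ValueError("expected a Path, str, or a non-empty list of these")

    # Strings are treated as relative to base_dir; Path objects pass through.
    return [Path(base_dir) / item if isinstance(item, str) else item for item in items]


single = normalize_paths("sales.xlsx")
mixed = normalize_paths([Path("/data/a.xlsx"), "b.xlsx"])
```

Passing `Path` objects through unchanged lets callers opt out of the knowledge-directory prefix by constructing the `Path` themselves.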

|

||||

|

||||

def validate_content(self):

|

||||

"""Validate the paths."""

|

||||

for path in self.safe_file_paths:

|

||||

if not path.exists():

|

||||

self._logger.log(

|

||||

"error",

|

||||

f"File not found: {path}. Try adding sources to the knowledge directory. If it's inside the knowledge directory, use the relative path.",

|

||||

color="red",

|

||||

)

|

||||

raise FileNotFoundError(f"File not found: {path}")

|

||||

if not path.is_file():

|

||||

self._logger.log(

|

||||

"error",

|

||||

f"Path is not a file: {path}",

|

||||

color="red",

|

||||

)

|

||||

|

||||

def model_post_init(self, _) -> None:

|

||||

if self.file_path:

|

||||

self._logger.log(

|

||||

"warning",

|

||||

"The 'file_path' attribute is deprecated and will be removed in a future version. Please use 'file_paths' instead.",

|

||||

color="yellow",

|

||||

)

|

||||

self.file_paths = self.file_path

|

||||

self.safe_file_paths = self._process_file_paths()

|

||||

self.validate_content()

|

||||

self.content = self._load_content()

|

||||

|

||||

def _load_content(self) -> Dict[Path, Dict[str, str]]:

|

||||

"""Load and preprocess Excel file content from multiple sheets.

|

||||

|

||||

Each sheet's content is converted to CSV format and stored.

|

||||

|

||||

Returns:

|

||||

Dict[Path, Dict[str, str]]: A mapping of file paths to their respective sheet contents.

|

||||

|

||||

Raises:

|

||||

ImportError: If required dependencies are missing.

|

||||

FileNotFoundError: If the specified Excel file cannot be opened.

|

||||

"""

|

||||

pd = self._import_dependencies()

|

||||

content_dict = {}

|

||||

for file_path in self.safe_file_paths:

|

||||

file_path = self.convert_to_path(file_path)

|

||||

df = pd.read_excel(file_path)

|

||||

content = df.to_csv(index=False)

|

||||

content_dict[file_path] = content

|

||||

with pd.ExcelFile(file_path) as xl:

|

||||

sheet_dict = {

|

||||

str(sheet_name): str(

|

||||

pd.read_excel(xl, sheet_name).to_csv(index=False)

|

||||

)

|

||||

for sheet_name in xl.sheet_names

|

||||

}

|

||||

content_dict[file_path] = sheet_dict

|

||||

return content_dict

|

||||

|

||||

def convert_to_path(self, path: Union[Path, str]) -> Path:

|

||||

"""Convert a path to a Path object."""

|

||||

return Path(KNOWLEDGE_DIRECTORY + "/" + path) if isinstance(path, str) else path

|

||||

|

||||

def _import_dependencies(self):

|

||||

"""Dynamically import dependencies."""

|

||||

try:

|

||||

import openpyxl # noqa

|

||||

import pandas as pd

|

||||

|

||||

return pd

|

||||

@@ -38,10 +148,14 @@ class ExcelKnowledgeSource(BaseFileKnowledgeSource):

|

||||

and save the embeddings.

|

||||

"""

|

||||

# Convert dictionary values to a single string if content is a dictionary

|

||||

if isinstance(self.content, dict):

|

||||

content_str = "\n".join(str(value) for value in self.content.values())

|

||||

else:

|

||||

content_str = str(self.content)

|

||||

# Updated to account for .xlsx workbooks with multiple tabs/sheets

|

||||

content_str = ""

|

||||

for value in self.content.values():

|

||||

if isinstance(value, dict):

|

||||

for sheet_value in value.values():

|

||||

content_str += str(sheet_value) + "\n"

|

||||

else:

|

||||

content_str += str(value) + "\n"

|

||||

|

||||

new_chunks = self._chunk_text(content_str)

|

||||

self.chunks.extend(new_chunks)
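The hunk above changes how `self.content` is flattened before chunking: for multi-sheet workbooks each file maps to a dict of sheet name to CSV text rather than to a single string. A small sketch of that flattening on plain dictionaries is shown here. The function name `flatten_content` is hypothetical and the sample data is made up for the example.

```python
from pathlib import Path
from typing import Dict, List, Union


# Hypothetical helper for illustration; not part of crewAI.
def flatten_content(content: Dict[Path, Union[str, Dict[str, str]]]) -> str:
    """Join all file contents into one string, descending into per-sheet dicts."""
    parts: List[str] = []
    for value in content.values():
        if isinstance(value, dict):
            # Multi-sheet workbook: one CSV string per sheet.
            parts.extend(str(sheet_csv) for sheet_csv in value.values())
        else:
            # Older shape: a single CSV string per file.
            parts.append(str(value))
    # Every piece is newline-terminated, mirroring the loop in the diff.
    return "".join(part + "\n" for part in parts)


example = {
    Path("report.xlsx"): {"Sheet1": "a,b\n1,2", "Sheet2": "c\n3"},
    Path("legacy.xlsx"): "x,y\n9,8",
}
flat = flatten_content(example)
```

Sheet names are dropped in this flattening, as in the diff, so chunks carry no marker of which sheet they came from.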

|

||||

|

||||

@@ -1,5 +1,4 @@

|

||||

from collections import defaultdict

|

||||

from typing import Union

|

||||

|

||||

from pydantic import BaseModel, Field

|

||||

from rich.box import HEAVY_EDGE

|

||||

@@ -7,7 +6,6 @@ from rich.console import Console

|

||||

from rich.table import Table

|

||||

|

||||

from crewai.agent import Agent

|

||||

from crewai.llm import LLM

|

||||

from crewai.task import Task

|

||||

from crewai.tasks.task_output import TaskOutput

|

||||

from crewai.telemetry import Telemetry

|

||||

@@ -34,9 +32,9 @@ class CrewEvaluator:

|

||||

run_execution_times: defaultdict = defaultdict(list)

|

||||

iteration: int = 0

|

||||

|

||||

def __init__(self, crew, llm: Union[str, LLM]):

|

||||

def __init__(self, crew, openai_model_name: str):

|

||||

self.crew = crew

|

||||

self.llm = LLM(model=llm) if isinstance(llm, str) else llm

|

||||

self.openai_model_name = openai_model_name

|

||||

self._telemetry = Telemetry()

|

||||

self._setup_for_evaluating()

|

||||

|

||||

@@ -53,7 +51,7 @@ class CrewEvaluator:

|

||||

),

|

||||

backstory="Evaluator agent for crew evaluation with precise capabilities to evaluate the performance of the agents in the crew based on the tasks they have performed",

|

||||

verbose=False,

|

||||

llm=self.llm,

|

||||

llm=self.openai_model_name,

|

||||

)

|

||||

|

||||

def _evaluation_task(

|

||||

@@ -183,7 +181,7 @@ class CrewEvaluator:

|

||||

self.crew,

|

||||

evaluation_result.pydantic.quality,

|

||||

current_task.execution_duration,

|

||||

self.llm.model,

|

||||

self.openai_model_name,

|

||||

)

|

||||

self.tasks_scores[self.iteration].append(evaluation_result.pydantic.quality)

|

||||

self.run_execution_times[self.iteration].append(

|

||||

|

||||

@@ -78,6 +78,18 @@ def test_agent_default_values():

|

||||

assert agent.llm.model == "gpt-4o-mini"

|

||||

assert agent.allow_delegation is False

|

||||

|

||||

@pytest.mark.vcr(filter_headers=["authorization"])

|

||||

def test_agent_creation_without_model_prices():

|

||||

with patch('crewai.cli.provider.get_provider_data') as mock_get:

|

||||

mock_get.return_value = None

|

||||

agent = Agent(

|

||||

role="test role",

|

||||

goal="test goal",

|

||||

backstory="test backstory"

|

||||

)

|

||||

assert agent is not None

|

||||

assert agent.role == "test role"

|

||||

|

||||

|

||||

def test_custom_llm():

|

||||

agent = Agent(

|

||||

|

||||

47

tests/cli/provider_test.py

Normal file


@@ -0,0 +1,47 @@
from unittest.mock import Mock, patch

import json
import os
import pytest
import requests
import time

from crewai.cli.constants import JSON_URL, MODELS, PROVIDERS
from crewai.cli.provider import fetch_provider_data, get_provider_data

def test_fetch_provider_data_timeout():
    with patch('requests.get') as mock_get:
        mock_get.side_effect = requests.exceptions.Timeout
        result = fetch_provider_data('/tmp/cache.json')
        assert result is None

def test_fetch_provider_data_wrong_content_type():
    with patch('requests.get') as mock_get:
        mock_response = Mock()
        mock_response.headers = {'content-type': 'text/plain'}
        mock_get.return_value = mock_response
        result = fetch_provider_data('/tmp/cache.json')
        assert result is None

def test_fetch_provider_data_success():
    mock_data = {"model1": {"provider": "test"}}
    with patch('requests.get') as mock_get:
        mock_response = Mock()
        mock_response.headers = {'content-type': 'application/json'}
        mock_response.json.return_value = mock_data
        mock_response.iter_content.return_value = [json.dumps(mock_data).encode()]
        mock_get.return_value = mock_response
        result = fetch_provider_data('/tmp/cache.json')
        assert result == mock_data

def test_cache_expiry():
    with patch('os.path.getmtime') as mock_time:
        mock_time.return_value = time.time() - (25 * 60 * 60)  # 25 hours old
        with patch('crewai.cli.provider.load_provider_data') as mock_load:
            mock_load.return_value = None
            result = get_provider_data()
            assert result is not None
            assert all(provider.lower() in result for provider in PROVIDERS)
            # Verify that each provider has its models from MODELS
            for provider in PROVIDERS:
                assert result[provider.lower()] == MODELS.get(provider.lower(), [])
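`test_cache_expiry` simulates a stale cache by patching `os.path.getmtime` to report a file that is 25 hours old. The kind of freshness check such a test exercises can be sketched as below. The helper name `is_cache_fresh` is hypothetical, and the 24-hour limit is an assumption suggested by the "25 hours old" comment rather than a value shown in the diff.

```python
import os
import tempfile
import time

# Assumed expiry window; the real value used by crewAI is not shown in the diff.
CACHE_EXPIRY_SECONDS = 24 * 60 * 60


# Hypothetical helper for illustration; not part of crewAI.
def is_cache_fresh(cache_path, max_age=CACHE_EXPIRY_SECONDS, now=None):
    """Return True if cache_path exists and was modified within max_age seconds."""
    if not os.path.exists(cache_path):
        return False
    current = time.time() if now is None else now
    return (current - os.path.getmtime(cache_path)) < max_age


# Create a throwaway cache file so the example is self-contained.
with tempfile.NamedTemporaryFile(delete=False) as handle:
    cache_file = handle.name

fresh_now = is_cache_fresh(cache_file)
# Shift "now" forward by 25 hours instead of patching getmtime.
fresh_later = is_cache_fresh(cache_file, now=time.time() + 25 * 60 * 60)
os.remove(cache_file)
missing = is_cache_fresh(cache_file)
```

Taking `now` as a parameter is a design choice for the sketch: it makes the age check testable without mocks, whereas the test in the diff achieves the same effect by patching `os.path.getmtime`.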

|

||||

@@ -51,6 +51,7 @@ writer = Agent(

|

||||

|

||||

def test_crew_with_only_conditional_tasks_raises_error():

|

||||

"""Test that creating a crew with only conditional tasks raises an error."""

|

||||

|

||||

def condition_func(task_output: TaskOutput) -> bool:

|

||||

return True

|

||||

|

||||

@@ -82,6 +83,7 @@ def test_crew_with_only_conditional_tasks_raises_error():

|

||||

tasks=[conditional1, conditional2, conditional3],

|

||||

)

|

||||

|

||||

|

||||

def test_crew_config_conditional_requirement():

|

||||

with pytest.raises(ValueError):

|

||||

Crew(process=Process.sequential)

|

||||

@@ -589,12 +591,12 @@ def test_crew_with_delegating_agents_should_not_override_task_tools():

|

||||

_, kwargs = mock_execute_sync.call_args

|

||||

tools = kwargs["tools"]

|

||||

|

||||

assert any(isinstance(tool, TestTool) for tool in tools), (

|

||||

"TestTool should be present"

|

||||

)

|

||||

assert any("delegate" in tool.name.lower() for tool in tools), (

|

||||

"Delegation tool should be present"

|

||||

)

|

||||

assert any(

|

||||

isinstance(tool, TestTool) for tool in tools

|

||||

), "TestTool should be present"

|

||||

assert any(

|

||||

"delegate" in tool.name.lower() for tool in tools

|

||||

), "Delegation tool should be present"

|

||||

|

||||

|

||||

@pytest.mark.vcr(filter_headers=["authorization"])

|

||||

@@ -653,12 +655,12 @@ def test_crew_with_delegating_agents_should_not_override_agent_tools():

|

||||

_, kwargs = mock_execute_sync.call_args

|

||||

tools = kwargs["tools"]

|

||||

|

||||

assert any(isinstance(tool, TestTool) for tool in new_ceo.tools), (

|

||||

"TestTool should be present"

|

||||

)

|

||||

assert any("delegate" in tool.name.lower() for tool in tools), (

|

||||

"Delegation tool should be present"

|

||||

)

|

||||

assert any(

|

||||

isinstance(tool, TestTool) for tool in new_ceo.tools

|

||||

), "TestTool should be present"

|

||||

assert any(

|

||||

"delegate" in tool.name.lower() for tool in tools

|

||||

), "Delegation tool should be present"

|

||||

|

||||

|

||||

@pytest.mark.vcr(filter_headers=["authorization"])

|

||||

@@ -782,17 +784,17 @@ def test_task_tools_override_agent_tools_with_allow_delegation():

|

||||

used_tools = kwargs["tools"]

|

||||

|

||||

# Confirm AnotherTestTool is present but TestTool is not

|

||||

assert any(isinstance(tool, AnotherTestTool) for tool in used_tools), (

|

||||

"AnotherTestTool should be present"

|

||||

)

|

||||

assert not any(isinstance(tool, TestTool) for tool in used_tools), (

|

||||

"TestTool should not be present among used tools"

|

||||

)

|

||||

assert any(

|

||||

isinstance(tool, AnotherTestTool) for tool in used_tools

|

||||

), "AnotherTestTool should be present"

|

||||

assert not any(

|

||||

isinstance(tool, TestTool) for tool in used_tools

|

||||

), "TestTool should not be present among used tools"

|

||||

|

||||

# Confirm delegation tool(s) are present

|

||||

assert any("delegate" in tool.name.lower() for tool in used_tools), (

|

||||

"Delegation tool should be present"

|

||||

)

|

||||

assert any(

|

||||

"delegate" in tool.name.lower() for tool in used_tools

|

||||

), "Delegation tool should be present"

|

||||

|

||||

# Finally, make sure the agent's original tools remain unchanged

|

||||

assert len(researcher_with_delegation.tools) == 1

|

||||

@@ -1593,9 +1595,9 @@ def test_code_execution_flag_adds_code_tool_upon_kickoff():

|

||||

|

||||

# Verify that exactly one tool was used and it was a CodeInterpreterTool

|

||||

assert len(used_tools) == 1, "Should have exactly one tool"

|

||||

assert isinstance(used_tools[0], CodeInterpreterTool), (

|

||||

"Tool should be CodeInterpreterTool"

|

||||

)

|

||||

assert isinstance(

|

||||

used_tools[0], CodeInterpreterTool

|

||||

), "Tool should be CodeInterpreterTool"

|

||||

|

||||

|

||||

@pytest.mark.vcr(filter_headers=["authorization"])

|

||||

@@ -1952,6 +1954,7 @@ def test_task_callback_on_crew():

|

||||

|

||||

def test_task_callback_both_on_task_and_crew():

|

||||

from unittest.mock import MagicMock, patch

|

||||

|

||||

mock_callback_on_task = MagicMock()

|

||||

mock_callback_on_crew = MagicMock()

|

||||

|

||||

@@ -2101,21 +2104,22 @@ def test_conditional_task_uses_last_output():

|

||||

expected_output="First output",

|

||||

agent=researcher,

|

||||

)

|

||||

|

||||

def condition_fails(task_output: TaskOutput) -> bool:

|

||||

# This condition will never be met

|

||||

return "never matches" in task_output.raw.lower()

|

||||

|

||||

|

||||

def condition_succeeds(task_output: TaskOutput) -> bool:

|

||||

# This condition will match first task's output

|

||||

return "first success" in task_output.raw.lower()

|

||||

|

||||

|

||||

conditional_task1 = ConditionalTask(

|

||||

description="Second task - conditional that fails condition",

|

||||

expected_output="Second output",

|

||||

agent=researcher,

|

||||

condition=condition_fails,

|

||||

)

|

||||

|

||||

|

||||

conditional_task2 = ConditionalTask(

|

||||

description="Third task - conditional that succeeds using first task output",

|

||||

expected_output="Third output",

|

||||

@@ -2134,35 +2138,37 @@ def test_conditional_task_uses_last_output():

|

||||

raw="First success output", # Will be used by third task's condition

|

||||

agent=researcher.role,

|

||||

)

|

||||

mock_skipped = TaskOutput(

|

||||

description="Second task output",

|

||||

raw="", # Empty output since condition fails

|

||||

agent=researcher.role,

|

||||

)

|

||||

mock_third = TaskOutput(

|

||||

description="Third task output",

|

||||

raw="Third task executed", # Output when condition succeeds using first task output

|

||||

agent=writer.role,

|

||||

)

|

||||

|

||||

|

||||

# Set up mocks for task execution and conditional logic

|

||||

with patch.object(ConditionalTask, "should_execute") as mock_should_execute:

|

||||

# First conditional fails, second succeeds

|

||||

mock_should_execute.side_effect = [False, True]

|

||||

|

||||

with patch.object(Task, "execute_sync") as mock_execute:

|

||||

mock_execute.side_effect = [mock_first, mock_third]

|

||||

result = crew.kickoff()

|

||||

|

||||

|

||||

# Verify execution behavior

|

||||

assert mock_execute.call_count == 2 # Only first and third tasks execute

|

||||

assert mock_should_execute.call_count == 2 # Both conditionals checked

|

||||

|

||||

# Verify outputs collection

|

||||

|

||||

# Verify outputs collection:

|

||||

# First executed task output, followed by an automatically generated (skipped) output, then the conditional execution

|

||||

assert len(result.tasks_output) == 3

|

||||

assert result.tasks_output[0].raw == "First success output" # First task succeeded

|

||||

assert result.tasks_output[1].raw == "" # Second task skipped (condition failed)

|

||||

assert result.tasks_output[2].raw == "Third task executed" # Third task used first task's output

|

||||

assert (

|

||||

result.tasks_output[0].raw == "First success output"

|

||||

) # First task succeeded

|

||||

assert (

|

||||

result.tasks_output[1].raw == ""

|

||||

) # Second task skipped (condition failed)

|

||||

assert (

|

||||

result.tasks_output[2].raw == "Third task executed"

|

||||

) # Third task used first task's output
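The assertions above expect three outputs even though only two tasks run: the skipped conditional task contributes an empty output, and the next condition is checked against the first task's result. A toy model of that collection behavior is sketched here. The `Step`, `Output`, and `run_steps` names are hypothetical, and the rule that a condition sees the most recent executed output is an assumption inferred from the test comments, not crewAI's actual implementation.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Output:
    raw: str


@dataclass
class Step:
    run: Callable[[], str]
    # None means the step always runs; otherwise it gates on a prior output.
    condition: Optional[Callable[[Output], bool]] = None


# Hypothetical runner for illustration; not part of crewAI.
def run_steps(steps: List[Step]) -> List[Output]:
    outputs: List[Output] = []
    last_executed: Optional[Output] = None
    for step in steps:
        if step.condition is not None and (
            last_executed is None or not step.condition(last_executed)
        ):
            # Skipped steps still occupy a slot, with an empty raw output.
            outputs.append(Output(raw=""))
            continue
        last_executed = Output(raw=step.run())
        outputs.append(last_executed)
    return outputs


steps = [
    Step(run=lambda: "First success output"),
    Step(run=lambda: "Second output",
         condition=lambda out: "never matches" in out.raw.lower()),
    Step(run=lambda: "Third task executed",
         condition=lambda out: "first success" in out.raw.lower()),
]
results = run_steps(steps)
```

Keeping a placeholder for skipped steps means output positions always line up with task positions, which is what lets the tests index `tasks_output[1]` and `tasks_output[2]` directly.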

|

||||

|

||||

|

||||

@pytest.mark.vcr(filter_headers=["authorization"])

|

||||

def test_conditional_tasks_result_collection():

|

||||

@@ -2172,20 +2178,20 @@ def test_conditional_tasks_result_collection():

|

||||

expected_output="First output",

|

||||

agent=researcher,

|

||||

)

|

||||

|

||||

|

||||

def condition_never_met(task_output: TaskOutput) -> bool:

|

||||

return "never matches" in task_output.raw.lower()

|

||||

|

||||

|

||||

def condition_always_met(task_output: TaskOutput) -> bool:

|

||||

return "success" in task_output.raw.lower()

|

||||

|

||||

|

||||

task2 = ConditionalTask(

|

||||

description="Conditional task that never executes",

|

||||

expected_output="Second output",

|

||||

agent=researcher,

|

||||

condition=condition_never_met,

|

||||

)

|

||||

|

||||

|

||||

task3 = ConditionalTask(

|

||||

description="Conditional task that always executes",

|

||||

expected_output="Third output",

|

||||

@@ -2204,35 +2210,46 @@ def test_conditional_tasks_result_collection():

|

||||

raw="Success output", # Triggers third task's condition

|

||||

agent=researcher.role,

|

||||

)

|

||||

mock_skipped = TaskOutput(

|

||||

description="Skipped output",

|

||||

raw="", # Empty output for skipped task

|

||||

agent=researcher.role,

|

||||

)

|

||||

mock_conditional = TaskOutput(

|

||||

description="Conditional output",

|

||||

raw="Conditional task executed",

|

||||

agent=writer.role,

|

||||

)

|

||||

|

||||

|

||||

# Set up mocks for task execution and conditional logic

|

||||

with patch.object(ConditionalTask, "should_execute") as mock_should_execute:

|

||||

# First conditional fails, second succeeds

|

||||

mock_should_execute.side_effect = [False, True]

|

||||

|

||||

with patch.object(Task, "execute_sync") as mock_execute:

|

||||

mock_execute.side_effect = [mock_success, mock_conditional]

|

||||

result = crew.kickoff()

|

||||

|

||||

|

||||

# Verify execution behavior

|

||||

assert mock_execute.call_count == 2 # Only first and third tasks execute

|

||||

assert mock_should_execute.call_count == 2 # Both conditionals checked

|

||||

|

||||

|

||||

# Verify task output collection:

|

||||

# There should be three outputs: normal task, skipped conditional task (empty output),

|

||||

# and the conditional task that executed.

|

||||

assert len(result.tasks_output) == 3

|

||||

assert (

|

||||

result.tasks_output[0].raw == "Success output"

|

||||

) # Normal task executed

|

||||

assert result.tasks_output[1].raw == "" # Second task skipped

|

||||

assert (

|

||||

result.tasks_output[2].raw == "Conditional task executed"

|

||||

) # Third task executed

|

||||

|

||||

# Verify task output collection

|

||||

assert len(result.tasks_output) == 3

|

||||

assert result.tasks_output[0].raw == "Success output" # Normal task executed

|

||||

assert result.tasks_output[1].raw == "" # Second task skipped

|

||||

assert result.tasks_output[2].raw == "Conditional task executed" # Third task executed

|

||||

assert (

|

||||

result.tasks_output[0].raw == "Success output"

|

||||

) # Normal task executed

|

||||

assert result.tasks_output[1].raw == "" # Second task skipped

|

||||

assert (

|

||||

result.tasks_output[2].raw == "Conditional task executed"

|

||||

) # Third task executed

|

||||

|

||||

|

||||

@pytest.mark.vcr(filter_headers=["authorization"])

|

||||

def test_multiple_conditional_tasks():

|

||||

@@ -2242,20 +2259,20 @@ def test_multiple_conditional_tasks():

|

||||

expected_output="Research output",

|

||||

agent=researcher,

|

||||

)

|

||||

|

||||

|

||||

def condition1(task_output: TaskOutput) -> bool:

|

||||

return "success" in task_output.raw.lower()

|

||||

|

||||

|

||||

def condition2(task_output: TaskOutput) -> bool:

|

||||

return "proceed" in task_output.raw.lower()

|

||||

|

||||

|

||||

task2 = ConditionalTask(

|

||||

description="First conditional task",

|

||||

expected_output="Conditional output 1",

|

||||

agent=writer,

|

||||

condition=condition1,

|

||||

)

|

||||

|

||||

|

||||

task3 = ConditionalTask(

|

||||

description="Second conditional task",

|

||||

expected_output="Conditional output 2",

|

||||

@@ -2274,7 +2291,7 @@ def test_multiple_conditional_tasks():

|

||||

raw="Success and proceed output",

|

||||

agent=researcher.role,

|

||||

)

|

||||

|

||||

|

||||

# Set up mocks for task execution

|

||||

with patch.object(Task, "execute_sync", return_value=mock_success) as mock_execute:

|

||||

result = crew.kickoff()

|

||||

@@ -2282,6 +2299,7 @@ def test_multiple_conditional_tasks():

|

||||

assert mock_execute.call_count == 3

|

||||

assert len(result.tasks_output) == 3

|

||||

|

||||

|

||||

@pytest.mark.vcr(filter_headers=["authorization"])

|

||||

def test_using_contextual_memory():

|

||||

from unittest.mock import patch

|

||||

@@ -3306,7 +3324,8 @@ def test_conditional_should_execute():

|

||||

|

||||

@mock.patch("crewai.crew.CrewEvaluator")

|

||||

@mock.patch("crewai.crew.Crew.copy")

|

||||

def test_crew_testing_function(copy_mock, crew_evaluator_mock):

|

||||

@mock.patch("crewai.crew.Crew.kickoff")

|

||||

def test_crew_testing_function(kickoff_mock, copy_mock, crew_evaluator):

|

||||

task = Task(

|

||||

description="Come up with a list of 5 interesting ideas to explore for an article, then write one amazing paragraph highlight for each idea that showcases how good an article about this topic could be. Return the list of ideas with their paragraph and your notes.",

|

||||

expected_output="5 bullet points with a paragraph for each idea.",

|

||||

@@ -3318,28 +3337,25 @@ def test_crew_testing_function(copy_mock, crew_evaluator_mock):

|

||||

tasks=[task],

|

||||

)

|

||||

|

||||

# Create a mock for the copied crew with a mock kickoff method

|

||||

copied_crew = MagicMock()

|

||||

copy_mock.return_value = copied_crew

|

||||

|

||||

# Create a mock for the CrewEvaluator instance

|

||||

evaluator_instance = MagicMock()

|

||||

crew_evaluator_mock.return_value = evaluator_instance

|

||||

# Create a mock for the copied crew

|

||||

copy_mock.return_value = crew

|

||||

|

||||

n_iterations = 2

|

||||

crew.test(n_iterations, openai_model_name="gpt-4o-mini", inputs={"topic": "AI"})

|

||||

|

||||

# Ensure kickoff is called on the copied crew

|

||||

copied_crew.kickoff.assert_has_calls(

|

||||

kickoff_mock.assert_has_calls(

|

||||

[mock.call(inputs={"topic": "AI"}), mock.call(inputs={"topic": "AI"})]

|

||||

)

|

||||

|

||||

# Verify CrewEvaluator interactions

|

||||

# We don't check the exact LLM object since it's created internally

|

||||

assert len(crew_evaluator_mock.mock_calls) == 4

|

||||

assert crew_evaluator_mock.mock_calls[1] == mock.call().set_iteration(1)

|

||||

assert crew_evaluator_mock.mock_calls[2] == mock.call().set_iteration(2)

|

||||

assert crew_evaluator_mock.mock_calls[3] == mock.call().print_crew_evaluation_result()

|

||||

crew_evaluator.assert_has_calls(

|

||||

[

|

||||

mock.call(crew, "gpt-4o-mini"),

|

||||

mock.call().set_iteration(1),

|

||||

mock.call().set_iteration(2),

|

||||

mock.call().print_crew_evaluation_result(),

|

||||

]

|

||||

)

|

||||

|

||||

|

||||

@pytest.mark.vcr(filter_headers=["authorization"])

|

||||

@@ -3402,9 +3418,9 @@ def test_fetch_inputs():

|

||||

expected_placeholders = {"role_detail", "topic", "field"}

|

||||

actual_placeholders = crew.fetch_inputs()

|

||||

|

||||

assert actual_placeholders == expected_placeholders, (

|

||||