mirror of

https://github.com/crewAIInc/crewAI.git

synced 2026-05-03 16:22:49 +00:00

Add pt-BR docs translation (#3039)

* docs: add pt-br translations

  Powered by a CrewAI Flow https://github.com/danielfsbarreto/docs_translator

* Update mcp/overview.mdx brazilian docs

  Its en-US counterpart was updated after I did a pass, so now it includes the new section about @CrewBase


69

docs/en/tools/database-data/mysqltool.mdx

Normal file


@@ -0,0 +1,69 @@

---
title: MySQL RAG Search
description: The `MySQLSearchTool` is designed to search MySQL databases and return the most relevant results.
icon: database
---

## Overview

The `MySQLSearchTool` runs semantic searches over the contents of MySQL database tables. It uses Retrieval-Augmented Generation (RAG) to match a query against table content, which simplifies finding relevant data in large datasets stored in a MySQL database.

## Installation

To install the `crewai_tools` package and use the `MySQLSearchTool`, run the following command in your terminal:

```shell
pip install 'crewai[tools]'
```

## Example

The example below shows how to set up the `MySQLSearchTool` for semantic search on a table in a MySQL database:

```python Code
from crewai_tools import MySQLSearchTool

# Initialize the tool with the database URI and the target table name
tool = MySQLSearchTool(
    db_uri='mysql://user:password@localhost:3306/mydatabase',
    table_name='employees'
)
```

## Arguments

The `MySQLSearchTool` requires the following arguments:

- `db_uri`: A string with the URI of the MySQL database to query. Mandatory; it must include the authentication details and the location of the database.
- `table_name`: A string with the name of the table on which the semantic search is performed. Mandatory.
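
Because `db_uri` has to carry both the credentials and the location of the database, it can help to check its parts before handing it to the tool. The sketch below uses only the standard library. `check_mysql_uri` is a hypothetical helper, not part of `crewai_tools`, and the rule that user, host, and database name must all be present is an assumption drawn from the description above.

```python
from urllib.parse import urlsplit


def check_mysql_uri(db_uri: str) -> dict:
    """Split a MySQL URI into its parts and fail early if one is missing.

    Hypothetical helper for illustration; not part of crewai_tools.
    """
    parts = urlsplit(db_uri)
    if not parts.scheme.startswith("mysql"):
        raise ValueError(f"expected a mysql:// URI, got scheme {parts.scheme!r}")
    database = parts.path.lstrip("/")
    if not (parts.username and parts.hostname and database):
        raise ValueError("db_uri must include a user, a host, and a database name")
    return {
        "user": parts.username,
        "host": parts.hostname,
        "port": parts.port or 3306,  # 3306 is the default MySQL port
        "database": database,
    }


info = check_mysql_uri("mysql://user:password@localhost:3306/mydatabase")
print(info)
```

A check like this surfaces a malformed URI before the tool tries to connect.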

## Custom model and embeddings

By default, the tool uses OpenAI for both embeddings and summarization. To customize the model, pass a config dictionary as follows:

```python Code
tool = MySQLSearchTool(
    # db_uri and table_name are still required, as in the example above
    db_uri='mysql://user:password@localhost:3306/mydatabase',
    table_name='employees',
    config=dict(
        llm=dict(
            provider="ollama", # or google, openai, anthropic, llama2, ...
            config=dict(
                model="llama2",
                # temperature=0.5,
                # top_p=1,
                # stream=True,
            ),
        ),
        embedder=dict(
            provider="google",
            config=dict(
                model="models/embedding-001",
                task_type="retrieval_document",
                # title="Embeddings",
            ),
        ),
    )
)
```

83

docs/en/tools/database-data/nl2sqltool.mdx

Normal file


@@ -0,0 +1,83 @@

---
title: NL2SQL Tool
description: The `NL2SQLTool` is designed to convert natural language to SQL queries.
icon: language
---

## Overview

This tool converts natural language to SQL queries. When passed to an agent, it generates queries and then uses them to interact with the database.

This enables workflows in which an Agent accesses the database, fetches information based on its goal, and then uses that information to generate a response, a report, or any other output.
The Agent can also update the database based on its goal.

**Attention**: Make sure that the Agent only has access to a read replica, or that it is okay for the Agent to run insert/update queries on the database.

## Requirements

- SQLAlchemy
- Any DB-compatible library (e.g. psycopg2, mysql-connector-python)

## Installation

Install the `crewai_tools` package:

```shell
pip install 'crewai[tools]'
```

## Usage

To use the `NL2SQLTool`, pass the database URI to the tool. The URI should be in the format `dialect+driver://username:password@host:port/database`.

```python Code
from crewai import Agent
from crewai.project import agent
from crewai_tools import NL2SQLTool

# psycopg2 was installed to run this example with PostgreSQL
nl2sql = NL2SQLTool(db_uri="postgresql://example@localhost:5432/test_db")

# Defined as a method of your crew class
@agent
def researcher(self) -> Agent:
    return Agent(
        config=self.agents_config["researcher"],
        allow_delegation=False,
        tools=[nl2sql]
    )
```
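
A URI in the `dialect+driver://username:password@host:port/database` format breaks if the password contains characters such as `@` or `/`. The following is a minimal sketch of assembling the URI with percent-encoding, using only the standard library. `build_db_uri` is a hypothetical helper and the credentials are placeholders.

```python
from urllib.parse import quote_plus


def build_db_uri(dialect, username, password, host, port, database, driver=None):
    """Assemble dialect+driver://username:password@host:port/database.

    Hypothetical helper; special characters in the credentials are
    percent-encoded so they cannot be mistaken for URI delimiters.
    """
    scheme = f"{dialect}+{driver}" if driver else dialect
    return (
        f"{scheme}://{quote_plus(username)}:{quote_plus(password)}"
        f"@{host}:{port}/{database}"
    )


uri = build_db_uri(
    "postgresql", "example", "p@ss/word", "localhost", 5432, "test_db", driver="psycopg2"
)
print(uri)
```

The resulting string can then be passed as `db_uri`.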

|

||||

|

||||

## Example

|

||||

|

||||

The primary task goal was:

|

||||

|

||||

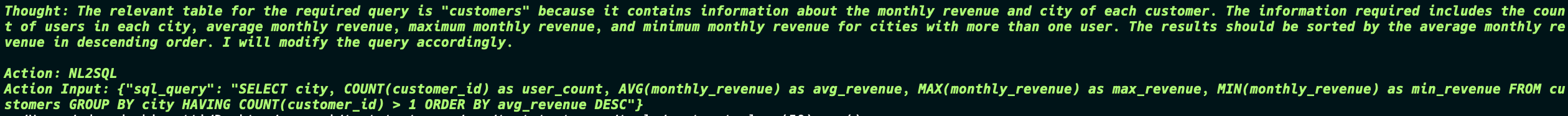

"Retrieve the average, maximum, and minimum monthly revenue for each city, but only include cities that have more than one user. Also, count the number of user in each city and

|

||||

sort the results by the average monthly revenue in descending order"

|

||||

|

||||

So the Agent tried to get information from the DB, the first one is wrong so the Agent tries again and gets the correct information and passes to the next agent.
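
For reference, the kind of SQL that the first task calls for can be sketched as follows. The `users` table and its `city` and `monthly_revenue` columns are assumptions made for illustration (the example's actual schema is not shown), and an in-memory SQLite database stands in for the real one.

```python
import sqlite3

# Hypothetical schema and data; the example's real table is not shown.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, city TEXT, monthly_revenue REAL)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?, ?)",
    [
        ("Ana", "Lisbon", 100.0),
        ("Bruno", "Lisbon", 300.0),
        ("Carla", "Porto", 500.0),  # only one user in Porto, so it is excluded
        ("Davi", "Recife", 400.0),
        ("Elisa", "Recife", 600.0),
    ],
)

query = """
SELECT city,
       COUNT(*)             AS user_count,
       AVG(monthly_revenue) AS avg_revenue,
       MAX(monthly_revenue) AS max_revenue,
       MIN(monthly_revenue) AS min_revenue
FROM users
GROUP BY city
HAVING COUNT(*) > 1
ORDER BY avg_revenue DESC
"""
rows = conn.execute(query).fetchall()
for row in rows:
    print(row)
```

`HAVING COUNT(*) > 1` carries the "more than one user" condition, and `ORDER BY avg_revenue DESC` handles the sorting.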

The second task goal was:

"Review the data and create a detailed report, and then create the table on the database with the fields based on the data provided.
Include information on the average, maximum, and minimum monthly revenue for each city, but only include cities that have more than one user. Also, count the number of users in each city and sort the results by the average monthly revenue in descending order."

This is where it gets interesting: the Agent generates SQL not only to create the table but also to insert the data into it. At the end, the Agent still returns the final report, which matches exactly what was written to the database.

This is a simple example of how the `NL2SQLTool` can be used to interact with a database and generate reports based on its data.

Because the tool lets an Agent both read from and write to the database, data can flow through a chain of agents and back:

```md
DB -> Agent -> ... -> Agent -> DB
```

57

docs/en/tools/database-data/overview.mdx

Normal file


@@ -0,0 +1,57 @@

---
title: "Overview"
description: "Connect to databases, vector stores, and data warehouses for comprehensive data access"
icon: "face-smile"
---

These tools enable your agents to interact with various database systems, from traditional SQL databases to modern vector stores and data warehouses.

## **Available Tools**

<CardGroup cols={2}>
  <Card title="MySQL Tool" icon="database" href="/en/tools/database-data/mysqltool">
    Run semantic searches over MySQL database tables.
  </Card>

  <Card title="PostgreSQL Search" icon="elephant" href="/en/tools/database-data/pgsearchtool">
    Search and query PostgreSQL databases efficiently.
  </Card>

  <Card title="Snowflake Search" icon="snowflake" href="/en/tools/database-data/snowflakesearchtool">
    Access Snowflake data warehouse for analytics and reporting.
  </Card>

  <Card title="NL2SQL Tool" icon="language" href="/en/tools/database-data/nl2sqltool">
    Convert natural language queries to SQL statements automatically.
  </Card>

  <Card title="Qdrant Vector Search" icon="vector-square" href="/en/tools/database-data/qdrantvectorsearchtool">
    Search vector embeddings using Qdrant vector database.
  </Card>

  <Card title="Weaviate Vector Search" icon="network-wired" href="/en/tools/database-data/weaviatevectorsearchtool">
    Perform semantic search with Weaviate vector database.
  </Card>
</CardGroup>

## **Common Use Cases**

- **Data Analysis**: Query databases for business intelligence and reporting
- **Vector Search**: Find similar content using semantic embeddings
- **ETL Operations**: Extract, transform, and load data between systems
- **Real-time Analytics**: Access live data for decision making

```python
from crewai import Agent
from crewai_tools import MySQLSearchTool, QdrantVectorSearchTool, NL2SQLTool

# Create database tools (placeholder connection details)
mysql_db = MySQLSearchTool(
    db_uri="mysql://user:password@localhost:3306/mydatabase",
    table_name="employees",
)
vector_search = QdrantVectorSearchTool(
    qdrant_url="your_qdrant_url",
    qdrant_api_key="your_qdrant_api_key",
    collection_name="your_collection",
)
nl_to_sql = NL2SQLTool(db_uri="postgresql://example@localhost:5432/test_db")

# Add to your agent
agent = Agent(
    role="Data Analyst",
    goal="Extract insights from various data sources",
    backstory="An expert data analyst who can extract insights from enterprise data.",
    tools=[mysql_db, vector_search, nl_to_sql],
)
```

82

docs/en/tools/database-data/pgsearchtool.mdx

Normal file


@@ -0,0 +1,82 @@

---
title: PG RAG Search
description: The `PGSearchTool` is designed to search PostgreSQL databases and return the most relevant results.
icon: elephant
---

## Overview

<Note>
    The PGSearchTool is currently under development. This document outlines the intended functionality and interface.
    As development progresses, please be aware that some features may not be available or could change.
</Note>

## Description

The PGSearchTool is intended to run semantic searches over PostgreSQL database tables. Using Retrieval-Augmented Generation (RAG),
it aims to provide an efficient way to query table content in PostgreSQL databases.
The tool's goal is to simplify finding relevant data through semantic search queries on large datasets in a PostgreSQL environment.

## Installation

The `crewai_tools` package, which will include the PGSearchTool upon its release, can be installed using the following command:

```shell
pip install 'crewai[tools]'
```

<Note>
    The PGSearchTool is not yet available in the current version of the `crewai_tools` package. This installation command will be updated once the tool is released.
</Note>

## Example Usage

Below is a proposed example showing how to use the PGSearchTool for a semantic search on a table in a PostgreSQL database:

```python Code
from crewai_tools import PGSearchTool

# Initialize the tool with the database URI and the target table name
tool = PGSearchTool(
    db_uri='postgresql://user:password@localhost:5432/mydatabase',
    table_name='employees'
)
```

## Arguments

The PGSearchTool is designed to require the following arguments:

| Argument       | Type     | Description                                                                                                                          |
|:---------------|:---------|:-------------------------------------------------------------------------------------------------------------------------------------|
| **db_uri**     | `string` | **Mandatory**. The URI of the PostgreSQL database to query. It must include the authentication details and the location of the database. |
| **table_name** | `string` | **Mandatory**. The name of the table within the database on which the semantic search will be performed.                             |

## Custom Model and Embeddings

The tool is intended to use OpenAI for both embeddings and summarization by default. Users will be able to customize the model using a config dictionary as follows:

```python Code
tool = PGSearchTool(
    # db_uri and table_name are still required, as in the example above
    db_uri='postgresql://user:password@localhost:5432/mydatabase',
    table_name='employees',
    config=dict(
        llm=dict(
            provider="ollama", # or google, openai, anthropic, llama2, ...
            config=dict(
                model="llama2",
                # temperature=0.5,
                # top_p=1,
                # stream=True,
            ),
        ),
        embedder=dict(
            provider="google", # or openai, ollama, ...
            config=dict(
                model="models/embedding-001",
                task_type="retrieval_document",
                # title="Embeddings",
            ),
        ),
    )
)
```

271

docs/en/tools/database-data/qdrantvectorsearchtool.mdx

Normal file


@@ -0,0 +1,271 @@

---
title: 'Qdrant Vector Search Tool'
description: 'Semantic search capabilities for CrewAI agents using Qdrant vector database'
icon: vector-square
---

## Overview

The Qdrant Vector Search Tool adds semantic search to your CrewAI agents by using [Qdrant](https://qdrant.tech/), a vector similarity search engine. It lets your agents search through documents stored in a Qdrant collection by semantic similarity.

## Installation

Install the required packages:

```bash
uv add qdrant-client
```

## Basic Usage

Here's a minimal example of how to use the tool:

```python
from crewai import Agent
from crewai_tools import QdrantVectorSearchTool

# Initialize the tool
qdrant_tool = QdrantVectorSearchTool(
    qdrant_url="your_qdrant_url",
    qdrant_api_key="your_qdrant_api_key",
    collection_name="your_collection"
)

# Create an agent that uses the tool
agent = Agent(
    role="Research Assistant",
    goal="Find relevant information in documents",
    backstory="A research assistant who searches a Qdrant collection for relevant documents.",
    tools=[qdrant_tool]
)

# The tool will automatically use OpenAI embeddings
# and return the 3 most relevant results with scores > 0.35
```

## Complete Working Example

Here's a complete example showing how to:
1. Extract text from a PDF
2. Generate embeddings using OpenAI
3. Store in Qdrant
4. Create a CrewAI agentic RAG workflow for semantic search

```python
import os
import uuid
import pdfplumber
from openai import OpenAI
from dotenv import load_dotenv
from crewai import Agent, Task, Crew, Process
from crewai_tools import QdrantVectorSearchTool
from qdrant_client import QdrantClient
from qdrant_client.models import PointStruct, Distance, VectorParams

# Load environment variables
load_dotenv()

# Initialize OpenAI client
client = OpenAI(api_key=os.getenv("OPENAI_API_KEY"))

# Extract text from PDF (one chunk per page)
def extract_text_from_pdf(pdf_path):
    text = []
    with pdfplumber.open(pdf_path) as pdf:
        for page in pdf.pages:
            page_text = page.extract_text()
            if page_text:
                text.append(page_text.strip())
    return text

# Generate OpenAI embeddings
def get_openai_embedding(text):
    response = client.embeddings.create(
        input=text,
        model="text-embedding-3-small"
    )
    return response.data[0].embedding

# Store text and embeddings in Qdrant
def load_pdf_to_qdrant(pdf_path, qdrant, collection_name):
    # Extract text from PDF
    text_chunks = extract_text_from_pdf(pdf_path)

    # Create Qdrant collection (text-embedding-3-small produces 1536-dimensional vectors)
    if qdrant.collection_exists(collection_name):
        qdrant.delete_collection(collection_name)
    qdrant.create_collection(
        collection_name=collection_name,
        vectors_config=VectorParams(size=1536, distance=Distance.COSINE)
    )

    # Store embeddings
    points = []
    for chunk in text_chunks:
        embedding = get_openai_embedding(chunk)
        points.append(PointStruct(
            id=str(uuid.uuid4()),
            vector=embedding,
            payload={"text": chunk}
        ))
    qdrant.upsert(collection_name=collection_name, points=points)

# Initialize Qdrant client and load data
qdrant = QdrantClient(
    url=os.getenv("QDRANT_URL"),
    api_key=os.getenv("QDRANT_API_KEY")
)
collection_name = "example_collection"
pdf_path = "path/to/your/document.pdf"
load_pdf_to_qdrant(pdf_path, qdrant, collection_name)

# Initialize Qdrant search tool
qdrant_tool = QdrantVectorSearchTool(
    qdrant_url=os.getenv("QDRANT_URL"),
    qdrant_api_key=os.getenv("QDRANT_API_KEY"),
    collection_name=collection_name,
    limit=3,
    score_threshold=0.35
)

# Create CrewAI agents
search_agent = Agent(
    role="Senior Semantic Search Agent",
    goal="Find and analyze documents based on semantic search",
    backstory="""You are an expert research assistant who can find relevant
    information using semantic search in a Qdrant database.""",
    tools=[qdrant_tool],
    verbose=True
)

answer_agent = Agent(
    role="Senior Answer Assistant",
    goal="Generate answers to questions based on the context provided",
    backstory="""You are an expert answer assistant who can generate
    answers to questions based on the context provided.""",
    tools=[qdrant_tool],
    verbose=True
)

# Define tasks
search_task = Task(
    description="""Search for relevant documents about the {query}.
    Your final answer should include:
    - The relevant information found
    - The similarity scores of the results
    - The metadata of the relevant documents""",
    expected_output="The relevant passages with their similarity scores and metadata.",
    agent=search_agent
)

answer_task = Task(
    description="""Given the context and metadata of relevant documents,
    generate a final answer based on the context.""",
    expected_output="A final answer grounded in the retrieved context.",
    agent=answer_agent
)

# Run CrewAI workflow
crew = Crew(
    agents=[search_agent, answer_agent],
    tasks=[search_task, answer_task],
    process=Process.sequential,
    verbose=True
)

result = crew.kickoff(
    inputs={"query": "What is the role of X in the document?"}
)
print(result)
```

## Tool Parameters

### Required Parameters
- `qdrant_url` (str): The URL of your Qdrant server
- `qdrant_api_key` (str): API key for authentication with Qdrant
- `collection_name` (str): Name of the Qdrant collection to search

### Optional Parameters
- `limit` (int): Maximum number of results to return (default: 3)
- `score_threshold` (float): Minimum similarity score threshold (default: 0.35)
- `custom_embedding_fn` (Callable[[str], list[float]]): Custom function for text vectorization

## Search Parameters

The tool accepts these parameters in its schema:
- `query` (str): The search query to find similar documents
- `filter_by` (str, optional): Metadata field to filter on
- `filter_value` (str, optional): Value to filter by
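
How `filter_by`, `filter_value`, `score_threshold`, and `limit` narrow a result set can be sketched in plain Python. This is not the tool's implementation (the search itself runs in Qdrant). `select_hits`, the sample hits, and the exact-match reading of the filter are assumptions for illustration.

```python
def select_hits(hits, limit=3, score_threshold=0.35, filter_by=None, filter_value=None):
    """Keep hits that match the metadata filter and clear the threshold,
    then return the best `limit` of them, highest score first.

    Illustrative only; assumes the filter is an exact match on one field.
    """
    kept = []
    for hit in hits:
        if filter_by is not None and hit["metadata"].get(filter_by) != filter_value:
            continue
        if hit["score"] < score_threshold:
            continue
        kept.append(hit)
    kept.sort(key=lambda h: h["score"], reverse=True)
    return kept[:limit]


# Hypothetical search hits.
hits = [
    {"text": "a", "score": 0.91, "metadata": {"source": "manual.pdf"}},
    {"text": "b", "score": 0.20, "metadata": {"source": "manual.pdf"}},
    {"text": "c", "score": 0.60, "metadata": {"source": "faq.pdf"}},
    {"text": "d", "score": 0.40, "metadata": {"source": "manual.pdf"}},
]

print([h["text"] for h in select_hits(hits)])
print([h["text"] for h in select_hits(hits, filter_by="source", filter_value="manual.pdf")])
```

Raising `score_threshold` trades recall for precision, while `limit` caps how much context the agent receives.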

## Return Format

The tool returns results in JSON format. Each item holds any `metadata` stored with the document, the document's text content in `context`, and its similarity score in `distance`:

```json
[
  {
    "metadata": {},
    "context": "The actual text content of the document",
    "distance": 0.95
  }
]
```
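
Since the result is a JSON string, downstream code can parse it with the standard library. A minimal sketch follows. `raw_result` is a hypothetical payload shaped like the format above, not real tool output.

```python
import json

# Hypothetical tool output in the shape documented above.
raw_result = """
[
  {"metadata": {"page": 3}, "context": "Qdrant stores vectors.", "distance": 0.95},
  {"metadata": {"page": 7}, "context": "Collections group points.", "distance": 0.52}
]
"""

results = json.loads(raw_result)
best = max(results, key=lambda r: r["distance"])  # highest similarity score
contexts = [r["context"] for r in results]

print(best["context"], best["distance"])
print(contexts)
```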

## Default Embedding

By default, the tool uses OpenAI's `text-embedding-3-small` model for vectorization. This requires:
- OpenAI API key set in environment: `OPENAI_API_KEY`

## Custom Embeddings

Instead of using the default embedding model, you might want to use your own embedding function in cases where you:

1. Want to use a different embedding model (e.g., Cohere, HuggingFace, Ollama models)
2. Need to reduce costs by using open-source embedding models
3. Have specific requirements for vector dimensions or embedding quality
4. Want to use domain-specific embeddings (e.g., for medical or legal text)

Here's an example using a HuggingFace model:

```python
from transformers import AutoTokenizer, AutoModel
import torch

# Load model and tokenizer
tokenizer = AutoTokenizer.from_pretrained('sentence-transformers/all-MiniLM-L6-v2')
model = AutoModel.from_pretrained('sentence-transformers/all-MiniLM-L6-v2')

def custom_embeddings(text: str) -> list[float]:
    # Tokenize and get model outputs (no gradients needed for inference)
    inputs = tokenizer(text, return_tensors="pt", padding=True, truncation=True)
    with torch.no_grad():
        outputs = model(**inputs)

    # Use mean pooling to get text embedding
    embeddings = outputs.last_hidden_state.mean(dim=1)

    # Convert to list of floats and return
    # (this model yields 384-dimensional vectors; the collection must match)
    return embeddings[0].tolist()

# Use custom embeddings with the tool
tool = QdrantVectorSearchTool(
    qdrant_url="your_url",
    qdrant_api_key="your_key",
    collection_name="your_collection",
    custom_embedding_fn=custom_embeddings  # Pass your custom function
)
```

## Error Handling

The tool handles these specific errors:
- Raises `ImportError` if `qdrant-client` is not installed (with an option to auto-install)
- Raises `ValueError` if `QDRANT_URL` is not set
- Prompts to install `qdrant-client`, if missing, using `uv add qdrant-client`
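
The `ValueError` for a missing URL, together with a fallback from constructor arguments to environment variables, can be sketched like this. `resolve_qdrant_settings` is a hypothetical helper written for illustration; the tool's own code may differ.

```python
import os


def resolve_qdrant_settings(qdrant_url=None, qdrant_api_key=None, env=None):
    """Prefer explicit arguments, fall back to environment variables,
    and raise ValueError when no URL is available.

    Hypothetical helper; mirrors the behaviour described in this section.
    """
    env = os.environ if env is None else env
    url = qdrant_url or env.get("QDRANT_URL")
    api_key = qdrant_api_key or env.get("QDRANT_API_KEY")
    if not url:
        raise ValueError("QDRANT_URL is not set and no qdrant_url was passed")
    return {"url": url, "api_key": api_key}


settings = resolve_qdrant_settings(env={"QDRANT_URL": "http://localhost:6333"})
print(settings)
```

Passing `env` explicitly keeps the sketch testable without touching the real process environment.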

## Environment Variables

Required environment variables:
```bash
export QDRANT_URL="your_qdrant_url"  # If not provided in constructor
export QDRANT_API_KEY="your_api_key"  # If not provided in constructor
export OPENAI_API_KEY="your_openai_key"  # If using default embeddings
```

202

docs/en/tools/database-data/snowflakesearchtool.mdx

Normal file


@@ -0,0 +1,202 @@

---
title: Snowflake Search Tool
description: The `SnowflakeSearchTool` enables CrewAI agents to execute SQL queries and perform semantic search on Snowflake data warehouses.
icon: snowflake
---

# `SnowflakeSearchTool`

## Description

The `SnowflakeSearchTool` is designed to connect to Snowflake data warehouses and execute SQL queries with advanced features like connection pooling, retry logic, and asynchronous execution. This tool allows CrewAI agents to interact with Snowflake databases, making it ideal for data analysis, reporting, and business intelligence tasks that require access to enterprise data stored in Snowflake.

## Installation

To use this tool, you need to install the required dependencies:

```shell
uv add cryptography snowflake-connector-python snowflake-sqlalchemy
```

Or alternatively:

```shell
uv sync --extra snowflake
```

## Steps to Get Started

To effectively use the `SnowflakeSearchTool`, follow these steps:

1. **Install Dependencies**: Install the required packages using one of the commands above.
2. **Configure Snowflake Connection**: Create a `SnowflakeConfig` object with your Snowflake credentials.
3. **Initialize the Tool**: Create an instance of the tool with the necessary configuration.
4. **Execute Queries**: Use the tool to run SQL queries against your Snowflake database.

## Example

The following example demonstrates how to use the `SnowflakeSearchTool` to query data from a Snowflake database:

```python Code
from crewai import Agent, Task, Crew
from crewai_tools import SnowflakeSearchTool, SnowflakeConfig

# Create Snowflake configuration
config = SnowflakeConfig(
    account="your_account",
    user="your_username",
    password="your_password",
    warehouse="COMPUTE_WH",
    database="your_database",
    snowflake_schema="your_schema"
)

# Initialize the tool
snowflake_tool = SnowflakeSearchTool(config=config)

# Define an agent that uses the tool
data_analyst_agent = Agent(
    role="Data Analyst",
    goal="Analyze data from Snowflake database",
    backstory="An expert data analyst who can extract insights from enterprise data.",
    tools=[snowflake_tool],
    verbose=True,
)

# Example task to query sales data
query_task = Task(
    description="Query the sales data for the last quarter and summarize the top 5 products by revenue.",
    expected_output="A summary of the top 5 products by revenue for the last quarter.",
    agent=data_analyst_agent,
)

# Create and run the crew
crew = Crew(agents=[data_analyst_agent],
            tasks=[query_task])
result = crew.kickoff()
```

You can also customize the tool with additional parameters:

```python Code
# Initialize the tool with custom parameters
snowflake_tool = SnowflakeSearchTool(
    config=config,
    pool_size=10,
    max_retries=5,
    retry_delay=2.0,
    enable_caching=True
)
```

## Parameters

### SnowflakeConfig Parameters

The `SnowflakeConfig` class accepts the following parameters:

- **account**: Required. Snowflake account identifier.
- **user**: Required. Snowflake username.
- **password**: Optional*. Snowflake password.
- **private_key_path**: Optional*. Path to private key file (alternative to password).
- **warehouse**: Required. Snowflake warehouse name.
- **database**: Required. Default database.
- **snowflake_schema**: Required. Default schema.
- **role**: Optional. Snowflake role.
- **session_parameters**: Optional. Custom session parameters as a dictionary.

*Either `password` or `private_key_path` must be provided.
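
The "either `password` or `private_key_path`" rule can be sketched as a validation step. The `ConnectionSettings` dataclass below is a stand-in written for illustration, not the real `SnowflakeConfig`, and it models only the fields needed to show the check.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ConnectionSettings:
    """Stand-in for illustration; not the real SnowflakeConfig class."""

    account: str
    user: str
    password: Optional[str] = None
    private_key_path: Optional[str] = None

    def __post_init__(self):
        # Mirror the rule above: at least one credential must be supplied.
        if not (self.password or self.private_key_path):
            raise ValueError("Either password or private_key_path must be provided")


ok = ConnectionSettings(account="your_account", user="your_username", password="your_password")
print(ok.password is not None)

try:
    ConnectionSettings(account="your_account", user="your_username")
except ValueError as exc:
    print(exc)
```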

### SnowflakeSearchTool Parameters

The `SnowflakeSearchTool` accepts the following parameters during initialization:

- **config**: Required. A `SnowflakeConfig` object containing connection details.
- **pool_size**: Optional. Number of connections in the pool. Default is 5.
- **max_retries**: Optional. Maximum retry attempts for failed queries. Default is 3.
- **retry_delay**: Optional. Delay between retries in seconds. Default is 1.0.
- **enable_caching**: Optional. Whether to enable query result caching. Default is True.

## Usage

When the `SnowflakeSearchTool` is called, it takes the following parameters:

- **query**: Required. The SQL query to execute.
- **database**: Optional. Override the default database specified in the config.
- **snowflake_schema**: Optional. Override the default schema specified in the config.
- **timeout**: Optional. Query timeout in seconds. Default is 300.

The tool returns the query results as a list of dictionaries, where each dictionary represents a row with column names as keys.
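
The row format described here can be sketched in plain Python. `rows_to_dicts` and the sample data are hypothetical; they only illustrate the list-of-dictionaries shape, not how the tool builds it.

```python
def rows_to_dicts(column_names, rows):
    """Pair each row's values with the column names, one dict per row."""
    return [dict(zip(column_names, row)) for row in rows]


# Hypothetical cursor output: column names plus row tuples.
columns = ["product_name", "total_revenue"]
rows = [("Widget", 1200.0), ("Gadget", 950.5)]

for record in rows_to_dicts(columns, rows):
    print(record["product_name"], record["total_revenue"])
```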

```python Code
# Example of using the tool with an agent
data_analyst = Agent(
    role="Data Analyst",
    goal="Analyze sales data from Snowflake",
    backstory="An expert data analyst with experience in SQL and data visualization.",
    tools=[snowflake_tool],
    verbose=True
)

# The agent will use the tool with parameters like:
# query="SELECT product_name, SUM(revenue) as total_revenue FROM sales GROUP BY product_name ORDER BY total_revenue DESC LIMIT 5"
# timeout=600

# Create a task for the agent
analysis_task = Task(
    description="Query the sales database and identify the top 5 products by revenue for the last quarter.",
    expected_output="A detailed analysis of the top 5 products by revenue.",
    agent=data_analyst
)

# Run the task
crew = Crew(
    agents=[data_analyst],
    tasks=[analysis_task]
)
result = crew.kickoff()
```

## Advanced Features

### Connection Pooling

The `SnowflakeSearchTool` implements connection pooling to improve performance by reusing database connections. You can control the pool size with the `pool_size` parameter.

### Automatic Retries

The tool automatically retries failed queries with exponential backoff. You can configure the retry behavior with the `max_retries` and `retry_delay` parameters.
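
Retrying with exponential backoff can be sketched as follows. This is an illustration of the general technique, not the tool's code. `run_with_retries` is a hypothetical helper, and both the doubling schedule and the reading of `max_retries` as "retries after the first attempt" are assumptions.

```python
import time


def run_with_retries(operation, max_retries=3, retry_delay=1.0, sleep=time.sleep):
    """Call `operation`, retrying on failure with exponentially growing delays.

    Illustrative sketch: waits retry_delay, then 2x, 4x, ... between
    attempts and re-raises the last error once retries are exhausted.
    """
    for attempt in range(max_retries + 1):
        try:
            return operation()
        except Exception:
            if attempt == max_retries:
                raise
            sleep(retry_delay * (2 ** attempt))


# Hypothetical flaky query that succeeds on the third call.
calls = {"count": 0}


def flaky_query():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("temporary failure")
    return [{"ok": True}]


delays = []  # record the waits instead of actually sleeping
result = run_with_retries(flaky_query, max_retries=5, retry_delay=2.0, sleep=delays.append)
print(result, delays)
```

Injecting `sleep` makes the schedule observable without real waiting.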

### Query Result Caching

To improve performance for repeated queries, the tool can cache query results. This feature is enabled by default but can be disabled by setting `enable_caching=False`.
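
The idea behind result caching can be sketched with a small in-memory cache. `QueryCache` and `fake_run` are hypothetical names, and keying on the query text plus database and schema is an assumption. The tool's real cache may work differently.

```python
class QueryCache:
    """Tiny in-memory cache keyed by the query text and its target.

    Illustrative only; the tool's real cache may use different keys
    or an eviction policy.
    """

    def __init__(self, enabled=True):
        self.enabled = enabled
        self._store = {}

    def fetch(self, query, database, schema, run_query):
        key = (query, database, schema)
        if self.enabled and key in self._store:
            return self._store[key]
        result = run_query(query)
        if self.enabled:
            self._store[key] = result
        return result


executed = []


def fake_run(query):
    # Stand-in for a real database call; records each execution.
    executed.append(query)
    return [{"n": 1}]


cache = QueryCache(enabled=True)
cache.fetch("SELECT 1 AS n", "your_database", "your_schema", fake_run)
cache.fetch("SELECT 1 AS n", "your_database", "your_schema", fake_run)
print(len(executed))
```

A cache like this returns stale rows if the underlying data changes, which is one reason to disable caching for frequently updated tables.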

### Key-Pair Authentication

In addition to password authentication, the tool supports key-pair authentication for enhanced security:

```python Code
from crewai_tools import SnowflakeConfig

# Key-pair authentication: supply a private key path instead of a password
config = SnowflakeConfig(
    account="your_account",
    user="your_username",
    private_key_path="/path/to/your/private/key.p8",
    warehouse="COMPUTE_WH",
    database="your_database",
    snowflake_schema="your_schema"
)
```

## Error Handling

The `SnowflakeSearchTool` includes comprehensive error handling for common Snowflake issues:

- Connection failures
- Query timeouts
- Authentication errors
- Database and schema errors

When an error occurs, the tool will attempt to retry the operation (if configured) and provide detailed error information.

## Conclusion

The `SnowflakeSearchTool` provides a powerful way to integrate Snowflake data warehouses with CrewAI agents. With features like connection pooling, automatic retries, and query caching, it enables efficient and reliable access to enterprise data. This tool is particularly useful for data analysis, reporting, and business intelligence tasks that require access to structured data stored in Snowflake.


163

docs/en/tools/database-data/weaviatevectorsearchtool.mdx

Normal file


@@ -0,0 +1,163 @@

---
title: Weaviate Vector Search
description: The `WeaviateVectorSearchTool` is designed to search a Weaviate vector database for semantically similar documents.
icon: network-wired
---

## Overview

The `WeaviateVectorSearchTool` runs semantic searches over documents stored in a Weaviate vector database. Given a query, it returns the most semantically similar documents, using vector embeddings to produce more contextually relevant results than exact keyword matching.

[Weaviate](https://weaviate.io/) is a vector database that stores and queries vector embeddings, enabling semantic search capabilities.

## Installation

To use this tool in your project, install the Weaviate client:

```shell
uv add weaviate-client
```

## Steps to Get Started

To effectively use the `WeaviateVectorSearchTool`, follow these steps:

1. **Package Installation**: Confirm that the `crewai[tools]` and `weaviate-client` packages are installed in your Python environment.
2. **Weaviate Setup**: Set up a Weaviate cluster. You can follow the [Weaviate documentation](https://weaviate.io/developers/wcs/manage-clusters/connect) for instructions.
3. **API Keys**: Obtain your Weaviate cluster URL and API key.
4. **OpenAI API Key**: Ensure you have an OpenAI API key set in your environment variables as `OPENAI_API_KEY`.
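
Before running anything, it can help to confirm that the required environment variable is actually set. The following is a small standalone sketch; `missing_env_vars` is a hypothetical helper written for this example and is not part of CrewAI or Weaviate.

```python
import os

REQUIRED_VARS = ["OPENAI_API_KEY"]


def missing_env_vars(names, environ=os.environ):
    """Return the names that are unset or empty in `environ`."""
    return [name for name in names if not environ.get(name)]


missing = missing_env_vars(REQUIRED_VARS)
if missing:
    print(f"Missing environment variables: {', '.join(missing)}")
```

Failing early with a clear message is usually easier to debug than an authentication error raised later, in the middle of a search.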

## Example

The following example demonstrates how to initialize the tool and execute a search:

```python Code
from crewai import Agent
from crewai.project import agent
from crewai_tools import WeaviateVectorSearchTool

# Initialize the tool
tool = WeaviateVectorSearchTool(
    collection_name='example_collections',
    limit=3,
    weaviate_cluster_url="https://your-weaviate-cluster-url.com",
    weaviate_api_key="your-weaviate-api-key",
)

# Define this as a method of your crew class (note the `self` parameter)
@agent
def search_agent(self) -> Agent:
    '''
    This agent uses the WeaviateVectorSearchTool to search for
    semantically similar documents in a Weaviate vector database.
    '''
    return Agent(
        config=self.agents_config["search_agent"],
        tools=[tool]
    )
```

## Parameters

The `WeaviateVectorSearchTool` accepts the following parameters:

- **collection_name**: Required. The name of the collection to search within.
- **weaviate_cluster_url**: Required. The URL of the Weaviate cluster.
- **weaviate_api_key**: Required. The API key for the Weaviate cluster.
- **limit**: Optional. The number of results to return. Default is `3`.
- **vectorizer**: Optional. The vectorizer to use. If not provided, it will use `text2vec_openai` with the `nomic-embed-text` model.
- **generative_model**: Optional. The generative model to use. If not provided, it will use OpenAI's `gpt-4o`.

## Advanced Configuration

You can customize the vectorizer and generative model used by the tool:

```python Code
from crewai_tools import WeaviateVectorSearchTool
from weaviate.classes.config import Configure

# Setup custom model for vectorizer and generative model
tool = WeaviateVectorSearchTool(
    collection_name='example_collections',
    limit=3,
    vectorizer=Configure.Vectorizer.text2vec_openai(model="nomic-embed-text"),
    generative_model=Configure.Generative.openai(model="gpt-4o-mini"),
    weaviate_cluster_url="https://your-weaviate-cluster-url.com",
    weaviate_api_key="your-weaviate-api-key",
)
```

## Preloading Documents

You can preload your Weaviate database with documents before using the tool:

```python Code
import os
from crewai_tools import WeaviateVectorSearchTool
import weaviate
from weaviate.classes.config import Configure
from weaviate.classes.init import Auth

# Connect to Weaviate
client = weaviate.connect_to_weaviate_cloud(
    cluster_url="https://your-weaviate-cluster-url.com",
    auth_credentials=Auth.api_key("your-weaviate-api-key"),
    headers={"X-OpenAI-Api-Key": "your-openai-api-key"}
)

# Get or create collection
# (`collections.get` returns a handle even if the collection is missing,
# so check for existence explicitly)
if client.collections.exists("example_collections"):
    test_docs = client.collections.get("example_collections")
else:
    test_docs = client.collections.create(
        name="example_collections",
        vectorizer_config=Configure.Vectorizer.text2vec_openai(model="nomic-embed-text"),
        generative_config=Configure.Generative.openai(model="gpt-4o"),
    )

# Load documents
docs_to_load = os.listdir("knowledge")
with test_docs.batch.dynamic() as batch:
    for d in docs_to_load:
        with open(os.path.join("knowledge", d), "r") as f:
            content = f.read()
        batch.add_object(
            {
                "content": content,
                "year": d.split("_")[0],
            }
        )

# Initialize the tool
tool = WeaviateVectorSearchTool(
    collection_name='example_collections',
    limit=3,
    weaviate_cluster_url="https://your-weaviate-cluster-url.com",
    weaviate_api_key="your-weaviate-api-key",
)
```
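
The preloading example takes the `year` property from the part of each filename before the first underscore, which implies that files in `knowledge` follow a naming pattern such as `2024_report.txt`. The standalone sketch below shows only that mapping step, without a Weaviate connection. `build_objects` and the sample filenames are hypothetical and chosen just for illustration.

```python
import os
import tempfile


def build_objects(folder):
    """Turn each file in `folder` into a dict of properties for upload."""
    objects = []
    for filename in sorted(os.listdir(folder)):
        with open(os.path.join(folder, filename), "r", encoding="utf-8") as f:
            content = f.read()
        # Text before the first underscore is treated as the year.
        objects.append({"content": content, "year": filename.split("_")[0]})
    return objects


# Usage: create two sample files in a temporary folder and map them.
with tempfile.TemporaryDirectory() as folder:
    samples = [("2023_report.txt", "Revenue grew."), ("2024_report.txt", "Costs fell.")]
    for name, text in samples:
        with open(os.path.join(folder, name), "w", encoding="utf-8") as f:
            f.write(text)
    objects = build_objects(folder)
```

If a filename contains no underscore, `split("_")[0]` returns the whole filename, so it is worth validating names before loading them.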

## Agent Integration Example

Here's how to integrate the `WeaviateVectorSearchTool` with a CrewAI agent:

```python Code
from crewai import Agent
from crewai_tools import WeaviateVectorSearchTool

# Initialize the tool
weaviate_tool = WeaviateVectorSearchTool(
    collection_name='example_collections',
    limit=3,
    weaviate_cluster_url="https://your-weaviate-cluster-url.com",
    weaviate_api_key="your-weaviate-api-key",
)

# Create an agent with the tool
# (`goal` and `backstory` hold illustrative values, added because agents
# are normally defined with a role, a goal, and a backstory)
rag_agent = Agent(
    name="rag_agent",
    role="You are a helpful assistant that can answer questions with the help of the WeaviateVectorSearchTool.",
    goal="Answer questions using documents retrieved from Weaviate.",
    backstory="An assistant that grounds its answers in retrieved documents.",
    llm="gpt-4o-mini",
    tools=[weaviate_tool],
)
```

## Conclusion

The `WeaviateVectorSearchTool` provides a powerful way to search for semantically similar documents in a Weaviate vector database. By leveraging vector embeddings, it enables more accurate and contextually relevant search results compared to traditional keyword-based searches. This tool is particularly useful for applications that require finding information based on meaning rather than exact matches.
