mirror of

https://github.com/crewAIInc/crewAI.git

synced 2026-01-25 16:18:13 +00:00

docs: Add transparency features for prompts and memory systems (#2902)

* docs: Fix major memory system documentation issues - Remove misleading deprecation warnings, fix confusing comments, clearly separate three memory approaches, provide accurate examples that match implementation * fix: Correct broken image paths in README - Update crewai_logo.png and asset.png paths to point to docs/images/ directory instead of docs/ directly * docs: Add system prompt transparency and customization guide - Add 'Understanding Default System Instructions' section to address black-box concerns - Document what CrewAI automatically injects into prompts - Provide code examples to inspect complete system prompts - Show 3 methods to override default instructions - Include observability integration examples with Langfuse - Add best practices for production prompt management * docs: Fix implementation accuracy issues in memory documentation - Fix Ollama embedding URL parameter and remove unsupported Cohere input_type parameter * docs: Reference observability docs instead of showing specific tool examples * docs: Reorganize knowledge documentation for better developer experience - Move quickstart examples right after overview for immediate hands-on experience - Create logical learning progression: basics → configuration → advanced → troubleshooting - Add comprehensive agent vs crew knowledge guide with working examples - Consolidate debugging and troubleshooting in dedicated section - Organize best practices by topic in accordion format - Improve content flow from simple concepts to advanced features - Ensure all examples are grounded in actual codebase implementation * docs: enhance custom LLM documentation with comprehensive examples and accurate imports * docs: reorganize observability tools into dedicated section with comprehensive overview and improved navigation * docs: rename how-to section to learn and add comprehensive overview page * docs: finalize documentation reorganization and update navigation labels * docs: enhance README with comprehensive badges, navigation links, and getting started video

This commit is contained in:

@@ -1,126 +0,0 @@

|

||||

---

|

||||

title: AgentOps Integration

|

||||

description: Understanding and logging your agent performance with AgentOps.

|

||||

icon: paperclip

|

||||

---

|

||||

|

||||

# Introduction

|

||||

|

||||

Observability is a key aspect of developing and deploying conversational AI agents. It allows developers to understand how their agents are performing,

|

||||

how their agents are interacting with users, and how their agents use external tools and APIs.

|

||||

AgentOps is a product independent of CrewAI that provides a comprehensive observability solution for agents.

|

||||

|

||||

## AgentOps

|

||||

|

||||

[AgentOps](https://agentops.ai/?=crew) provides session replays, metrics, and monitoring for agents.

|

||||

|

||||

At a high level, AgentOps gives you the ability to monitor cost, token usage, latency, agent failures, session-wide statistics, and more.

|

||||

For more info, check out the [AgentOps Repo](https://github.com/AgentOps-AI/agentops).

|

||||

|

||||

### Overview

|

||||

|

||||

AgentOps provides monitoring for agents in development and production.

|

||||

It provides a dashboard for tracking agent performance, session replays, and custom reporting.

|

||||

|

||||

Additionally, AgentOps provides session drilldowns for viewing Crew agent interactions, LLM calls, and tool usage in real-time.

|

||||

This feature is useful for debugging and understanding how agents interact with users as well as other agents.

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

### Features

|

||||

|

||||

- **LLM Cost Management and Tracking**: Track spend with foundation model providers.

|

||||

- **Replay Analytics**: Watch step-by-step agent execution graphs.

|

||||

- **Recursive Thought Detection**: Identify when agents fall into infinite loops.

|

||||

- **Custom Reporting**: Create custom analytics on agent performance.

|

||||

- **Analytics Dashboard**: Monitor high-level statistics about agents in development and production.

|

||||

- **Public Model Testing**: Test your agents against benchmarks and leaderboards.

|

||||

- **Custom Tests**: Run your agents against domain-specific tests.

|

||||

- **Time Travel Debugging**: Restart your sessions from checkpoints.

|

||||

- **Compliance and Security**: Create audit logs and detect potential threats such as profanity and PII leaks.

|

||||

- **Prompt Injection Detection**: Identify potential code injection and secret leaks.

|

||||

|

||||

### Using AgentOps

|

||||

|

||||

<Steps>

|

||||

<Step title="Create an API Key">

|

||||

Create a user API key here: [Create API Key](https://app.agentops.ai/account)

|

||||

</Step>

|

||||

<Step title="Configure Your Environment">

|

||||

Add your API key to your environment variables:

|

||||

```bash

|

||||

AGENTOPS_API_KEY=<YOUR_AGENTOPS_API_KEY>

|

||||

```

|

||||

</Step>

|

||||

<Step title="Install AgentOps">

|

||||

Install AgentOps with:

|

||||

```bash

|

||||

pip install 'crewai[agentops]'

|

||||

```

|

||||

or

|

||||

```bash

|

||||

pip install agentops

|

||||

```

|

||||

</Step>

|

||||

<Step title="Initialize AgentOps">

|

||||

Before using `Crew` in your script, include these lines:

|

||||

|

||||

```python

|

||||

import agentops

|

||||

agentops.init()

|

||||

```

|

||||

|

||||

This will initiate an AgentOps session as well as automatically track Crew agents. For further info on how to outfit more complex agentic systems,

|

||||

check out the [AgentOps documentation](https://docs.agentops.ai) or join the [Discord](https://discord.gg/j4f3KbeH).

|

||||

</Step>

|

||||

</Steps>

|

||||

|

||||

### Crew + AgentOps Examples

|

||||

|

||||

<CardGroup cols={3}>

|

||||

<Card

|

||||

title="Job Posting"

|

||||

color="#F3A78B"

|

||||

href="https://github.com/joaomdmoura/crewAI-examples/tree/main/job-posting"

|

||||

icon="briefcase"

|

||||

iconType="solid"

|

||||

>

|

||||

Example of a Crew agent that generates job posts.

|

||||

</Card>

|

||||

<Card

|

||||

title="Markdown Validator"

|

||||

color="#F3A78B"

|

||||

href="https://github.com/joaomdmoura/crewAI-examples/tree/main/markdown_validator"

|

||||

icon="markdown"

|

||||

iconType="solid"

|

||||

>

|

||||

Example of a Crew agent that validates Markdown files.

|

||||

</Card>

|

||||

<Card

|

||||

title="Instagram Post"

|

||||

color="#F3A78B"

|

||||

href="https://github.com/joaomdmoura/crewAI-examples/tree/main/instagram_post"

|

||||

icon="square-instagram"

|

||||

iconType="brands"

|

||||

>

|

||||

Example of a Crew agent that generates Instagram posts.

|

||||

</Card>

|

||||

</CardGroup>

|

||||

|

||||

### Further Information

|

||||

|

||||

To get started, create an [AgentOps account](https://agentops.ai/?=crew).

|

||||

|

||||

For feature requests or bug reports, please reach out to the AgentOps team on the [AgentOps Repo](https://github.com/AgentOps-AI/agentops).

|

||||

|

||||

#### Extra links

|

||||

|

||||

<a href="https://twitter.com/agentopsai/">🐦 Twitter</a>

|

||||

<span> • </span>

|

||||

<a href="https://discord.gg/JHPt4C7r">📢 Discord</a>

|

||||

<span> • </span>

|

||||

<a href="https://app.agentops.ai/?=crew">🖇️ AgentOps Dashboard</a>

|

||||

<span> • </span>

|

||||

<a href="https://docs.agentops.ai/introduction">📙 Documentation</a>

|

||||

@@ -1,151 +0,0 @@

|

||||

---

|

||||

title: Arize Phoenix

|

||||

description: Arize Phoenix integration for CrewAI with OpenTelemetry and OpenInference

|

||||

icon: magnifying-glass-chart

|

||||

---

|

||||

|

||||

# Arize Phoenix Integration

|

||||

|

||||

This guide demonstrates how to integrate **Arize Phoenix** with **CrewAI** using OpenTelemetry via the [OpenInference](https://github.com/openinference/openinference) SDK. By the end of this guide, you will be able to trace your CrewAI agents and easily debug your agents.

|

||||

|

||||

> **What is Arize Phoenix?** [Arize Phoenix](https://phoenix.arize.com) is an LLM observability platform that provides tracing and evaluation for AI applications.

|

||||

|

||||

[](https://www.youtube.com/watch?v=Yc5q3l6F7Ww)

|

||||

|

||||

## Get Started

|

||||

|

||||

We'll walk through a simple example of using CrewAI and integrating it with Arize Phoenix via OpenTelemetry using OpenInference.

|

||||

|

||||

You can also access this guide on [Google Colab](https://colab.research.google.com/github/Arize-ai/phoenix/blob/main/tutorials/tracing/crewai_tracing_tutorial.ipynb).

|

||||

|

||||

### Step 1: Install Dependencies

|

||||

|

||||

```bash

|

||||

pip install openinference-instrumentation-crewai crewai crewai-tools arize-phoenix-otel

|

||||

```

|

||||

|

||||

### Step 2: Set Up Environment Variables

|

||||

|

||||

Setup Phoenix Cloud API keys and configure OpenTelemetry to send traces to Phoenix. Phoenix Cloud is a hosted version of Arize Phoenix, but it is not required to use this integration.

|

||||

|

||||

You can get your free Serper API key [here](https://serper.dev/).

|

||||

|

||||

```python

|

||||

import os

|

||||

from getpass import getpass

|

||||

|

||||

# Get your Phoenix Cloud credentials

|

||||

PHOENIX_API_KEY = getpass("🔑 Enter your Phoenix Cloud API Key: ")

|

||||

|

||||

# Get API keys for services

|

||||

OPENAI_API_KEY = getpass("🔑 Enter your OpenAI API key: ")

|

||||

SERPER_API_KEY = getpass("🔑 Enter your Serper API key: ")

|

||||

|

||||

# Set environment variables

|

||||

os.environ["PHOENIX_CLIENT_HEADERS"] = f"api_key={PHOENIX_API_KEY}"

|

||||

os.environ["PHOENIX_COLLECTOR_ENDPOINT"] = "https://app.phoenix.arize.com" # Phoenix Cloud, change this to your own endpoint if you are using a self-hosted instance

|

||||

os.environ["OPENAI_API_KEY"] = OPENAI_API_KEY

|

||||

os.environ["SERPER_API_KEY"] = SERPER_API_KEY

|

||||

```

|

||||

|

||||

### Step 3: Initialize OpenTelemetry with Phoenix

|

||||

|

||||

Initialize the OpenInference OpenTelemetry instrumentation SDK to start capturing traces and send them to Phoenix.

|

||||

|

||||

```python

|

||||

from phoenix.otel import register

|

||||

|

||||

tracer_provider = register(

|

||||

project_name="crewai-tracing-demo",

|

||||

auto_instrument=True,

|

||||

)

|

||||

```

|

||||

|

||||

### Step 4: Create a CrewAI Application

|

||||

|

||||

We'll create a CrewAI application where two agents collaborate to research and write a blog post about AI advancements.

|

||||

|

||||

```python

|

||||

from crewai import Agent, Crew, Process, Task

|

||||

from crewai_tools import SerperDevTool

|

||||

from openinference.instrumentation.crewai import CrewAIInstrumentor

|

||||

from phoenix.otel import register

|

||||

|

||||

# setup monitoring for your crew

|

||||

tracer_provider = register(

|

||||

endpoint="http://localhost:6006/v1/traces")

|

||||

CrewAIInstrumentor().instrument(skip_dep_check=True, tracer_provider=tracer_provider)

|

||||

search_tool = SerperDevTool()

|

||||

|

||||

# Define your agents with roles and goals

|

||||

researcher = Agent(

|

||||

role="Senior Research Analyst",

|

||||

goal="Uncover cutting-edge developments in AI and data science",

|

||||

backstory="""You work at a leading tech think tank.

|

||||

Your expertise lies in identifying emerging trends.

|

||||

You have a knack for dissecting complex data and presenting actionable insights.""",

|

||||

verbose=True,

|

||||

allow_delegation=False,

|

||||

# You can pass an optional llm attribute specifying what model you wanna use.

|

||||

# llm=ChatOpenAI(model_name="gpt-3.5", temperature=0.7),

|

||||

tools=[search_tool],

|

||||

)

|

||||

writer = Agent(

|

||||

role="Tech Content Strategist",

|

||||

goal="Craft compelling content on tech advancements",

|

||||

backstory="""You are a renowned Content Strategist, known for your insightful and engaging articles.

|

||||

You transform complex concepts into compelling narratives.""",

|

||||

verbose=True,

|

||||

allow_delegation=True,

|

||||

)

|

||||

|

||||

# Create tasks for your agents

|

||||

task1 = Task(

|

||||

description="""Conduct a comprehensive analysis of the latest advancements in AI in 2024.

|

||||

Identify key trends, breakthrough technologies, and potential industry impacts.""",

|

||||

expected_output="Full analysis report in bullet points",

|

||||

agent=researcher,

|

||||

)

|

||||

|

||||

task2 = Task(

|

||||

description="""Using the insights provided, develop an engaging blog

|

||||

post that highlights the most significant AI advancements.

|

||||

Your post should be informative yet accessible, catering to a tech-savvy audience.

|

||||

Make it sound cool, avoid complex words so it doesn't sound like AI.""",

|

||||

expected_output="Full blog post of at least 4 paragraphs",

|

||||

agent=writer,

|

||||

)

|

||||

|

||||

# Instantiate your crew with a sequential process

|

||||

crew = Crew(

|

||||

agents=[researcher, writer], tasks=[task1, task2], verbose=1, process=Process.sequential

|

||||

)

|

||||

|

||||

# Get your crew to work!

|

||||

result = crew.kickoff()

|

||||

|

||||

print("######################")

|

||||

print(result)

|

||||

```

|

||||

|

||||

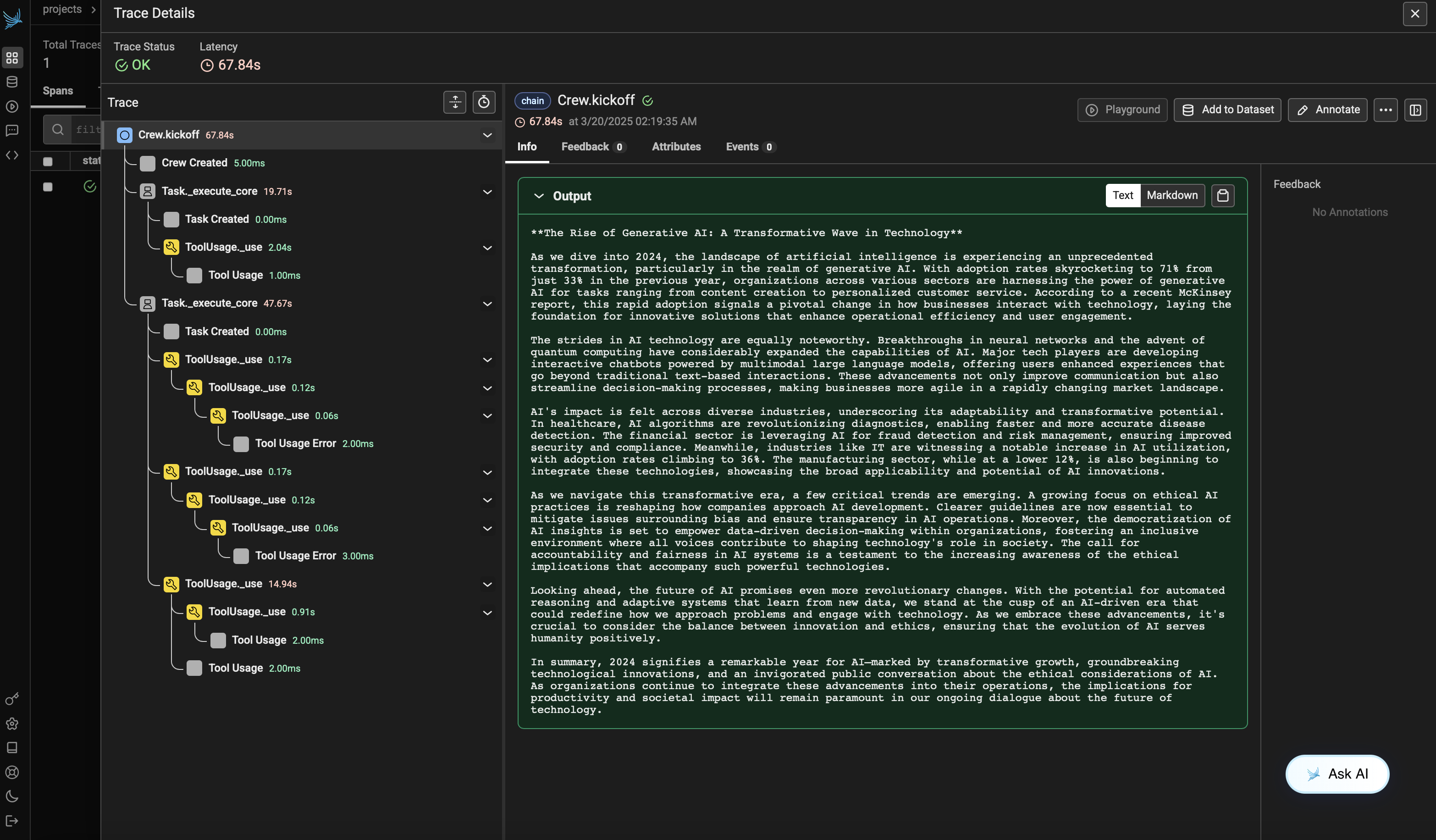

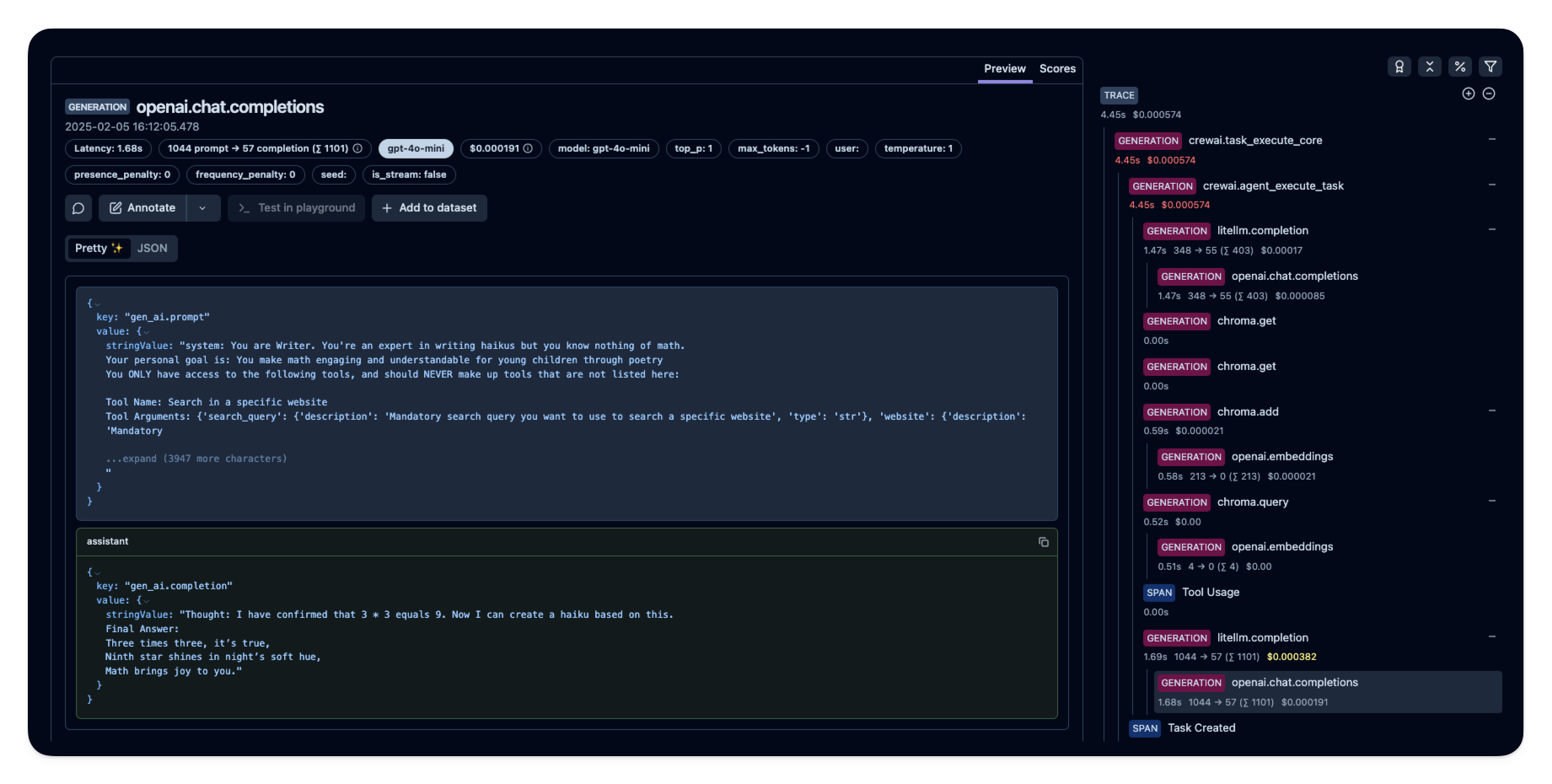

### Step 5: View Traces in Phoenix

|

||||

|

||||

After running the agent, you can view the traces generated by your CrewAI application in Phoenix. You should see detailed steps of the agent interactions and LLM calls, which can help you debug and optimize your AI agents.

|

||||

|

||||

Log into your Phoenix Cloud account and navigate to the project you specified in the `project_name` parameter. You'll see a timeline view of your trace with all the agent interactions, tool usages, and LLM calls.

|

||||

|

||||

|

||||

|

||||

|

||||

### Version Compatibility Information

|

||||

- Python 3.8+

|

||||

- CrewAI >= 0.86.0

|

||||

- Arize Phoenix >= 7.0.1

|

||||

- OpenTelemetry SDK >= 1.31.0

|

||||

|

||||

|

||||

### References

|

||||

- [Phoenix Documentation](https://docs.arize.com/phoenix/) - Overview of the Phoenix platform.

|

||||

- [CrewAI Documentation](https://docs.crewai.com/) - Overview of the CrewAI framework.

|

||||

- [OpenTelemetry Docs](https://opentelemetry.io/docs/) - OpenTelemetry guide

|

||||

- [OpenInference GitHub](https://github.com/openinference/openinference) - Source code for OpenInference SDK.

|

||||

@@ -1,61 +0,0 @@

|

||||

---

|

||||

title: Before and After Kickoff Hooks

|

||||

description: Learn how to use before and after kickoff hooks in CrewAI

|

||||

---

|

||||

|

||||

CrewAI provides hooks that allow you to execute code before and after a crew's kickoff. These hooks are useful for preprocessing inputs or post-processing results.

|

||||

|

||||

## Before Kickoff Hook

|

||||

|

||||

The before kickoff hook is executed before the crew starts its tasks. It receives the input dictionary and can modify it before passing it to the crew. You can use this hook to set up your environment, load necessary data, or preprocess your inputs. This is useful in scenarios where the input data might need enrichment or validation before being processed by the crew.

|

||||

|

||||

Here's an example of defining a before kickoff function in your `crew.py`:

|

||||

|

||||

```python

|

||||

from crewai import CrewBase

|

||||

from crewai.project import before_kickoff

|

||||

|

||||

@CrewBase

|

||||

class MyCrew:

|

||||

@before_kickoff

|

||||

def prepare_data(self, inputs):

|

||||

# Preprocess or modify inputs

|

||||

inputs['processed'] = True

|

||||

return inputs

|

||||

|

||||

#...

|

||||

```

|

||||

|

||||

In this example, the prepare_data function modifies the inputs by adding a new key-value pair indicating that the inputs have been processed.

|

||||

|

||||

## After Kickoff Hook

|

||||

|

||||

The after kickoff hook is executed after the crew has completed its tasks. It receives the result object, which contains the outputs of the crew's execution. This hook is ideal for post-processing results, such as logging, data transformation, or further analysis.

|

||||

|

||||

Here's how you can define an after kickoff function in your `crew.py`:

|

||||

|

||||

```python

|

||||

from crewai import CrewBase

|

||||

from crewai.project import after_kickoff

|

||||

|

||||

@CrewBase

|

||||

class MyCrew:

|

||||

@after_kickoff

|

||||

def log_results(self, result):

|

||||

# Log or modify the results

|

||||

print("Crew execution completed with result:", result)

|

||||

return result

|

||||

|

||||

# ...

|

||||

```

|

||||

|

||||

|

||||

In the `log_results` function, the results of the crew execution are simply printed out. You can extend this to perform more complex operations such as sending notifications or integrating with other services.

|

||||

|

||||

## Utilizing Both Hooks

|

||||

|

||||

Both hooks can be used together to provide a comprehensive setup and teardown process for your crew's execution. They are particularly useful in maintaining clean code architecture by separating concerns and enhancing the modularity of your CrewAI implementations.

|

||||

|

||||

## Conclusion

|

||||

|

||||

Before and after kickoff hooks in CrewAI offer powerful ways to interact with the lifecycle of a crew's execution. By understanding and utilizing these hooks, you can greatly enhance the robustness and flexibility of your AI agents.

|

||||

@@ -1,443 +0,0 @@

|

||||

---

|

||||

title: Bring your own agent

|

||||

description: Learn how to bring your own agents that work within a Crew.

|

||||

icon: robots

|

||||

---

|

||||

|

||||

Interoperability is a core concept in CrewAI. This guide will show you how to bring your own agents that work within a Crew.

|

||||

|

||||

|

||||

## Adapter Guide for Bringing your own agents (Langgraph Agents, OpenAI Agents, etc...)

|

||||

We require 3 adapters to turn any agent from different frameworks to work within crew.

|

||||

|

||||

1. BaseAgentAdapter

|

||||

2. BaseToolAdapter

|

||||

3. BaseConverter

|

||||

|

||||

|

||||

## BaseAgentAdapter

|

||||

This abstract class defines the common interface and functionality that all

|

||||

agent adapters must implement. It extends BaseAgent to maintain compatibility

|

||||

with the CrewAI framework while adding adapter-specific requirements.

|

||||

|

||||

Required Methods:

|

||||

|

||||

1. `def configure_tools`

|

||||

2. `def configure_structured_output`

|

||||

|

||||

## Creating your own Adapter

|

||||

To integrate an agent from a different framework (e.g., LangGraph, Autogen, OpenAI Assistants) into CrewAI, you need to create a custom adapter by inheriting from `BaseAgentAdapter`. This adapter acts as a compatibility layer, translating between the CrewAI interfaces and the specific requirements of your external agent.

|

||||

|

||||

Here's how you implement your custom adapter:

|

||||

|

||||

1. **Inherit from `BaseAgentAdapter`**:

|

||||

```python

|

||||

from crewai.agents.agent_adapters.base_agent_adapter import BaseAgentAdapter

|

||||

from crewai.tools import BaseTool

|

||||

from typing import List, Optional, Any, Dict

|

||||

|

||||

class MyCustomAgentAdapter(BaseAgentAdapter):

|

||||

# ... implementation details ...

|

||||

```

|

||||

|

||||

2. **Implement `__init__`**:

|

||||

The constructor should call the parent class constructor `super().__init__(**kwargs)` and perform any initialization specific to your external agent. You can use the optional `agent_config` dictionary passed during CrewAI's `Agent` initialization to configure your adapter and the underlying agent.

|

||||

|

||||

```python

|

||||

def __init__(self, agent_config: Optional[Dict[str, Any]] = None, **kwargs: Any):

|

||||

super().__init__(agent_config=agent_config, **kwargs)

|

||||

# Initialize your external agent here, possibly using agent_config

|

||||

# Example: self.external_agent = initialize_my_agent(agent_config)

|

||||

print(f"Initializing MyCustomAgentAdapter with config: {agent_config}")

|

||||

```

|

||||

|

||||

3. **Implement `configure_tools`**:

|

||||

This abstract method is crucial. It receives a list of CrewAI `BaseTool` instances. Your implementation must convert or adapt these tools into the format expected by your external agent framework. This might involve wrapping them, extracting specific attributes, or registering them with the external agent instance.

|

||||

|

||||

```python

|

||||

def configure_tools(self, tools: Optional[List[BaseTool]] = None) -> None:

|

||||

if tools:

|

||||

adapted_tools = []

|

||||

for tool in tools:

|

||||

# Adapt CrewAI BaseTool to the format your agent expects

|

||||

# Example: adapted_tool = adapt_to_my_framework(tool)

|

||||

# adapted_tools.append(adapted_tool)

|

||||

pass # Replace with your actual adaptation logic

|

||||

|

||||

# Configure the external agent with the adapted tools

|

||||

# Example: self.external_agent.set_tools(adapted_tools)

|

||||

print(f"Configuring tools for MyCustomAgentAdapter: {adapted_tools}") # Placeholder

|

||||

else:

|

||||

# Handle the case where no tools are provided

|

||||

# Example: self.external_agent.set_tools([])

|

||||

print("No tools provided for MyCustomAgentAdapter.")

|

||||

```

|

||||

|

||||

4. **Implement `configure_structured_output`**:

|

||||

This method is called when the CrewAI `Agent` is configured with structured output requirements (e.g., `output_json` or `output_pydantic`). Your adapter needs to ensure the external agent is set up to comply with these requirements. This might involve setting specific parameters on the external agent or ensuring its underlying model supports the requested format. If the external agent doesn't support structured output in a way compatible with CrewAI's expectations, you might need to handle the conversion or raise an appropriate error.

|

||||

|

||||

```python

|

||||

def configure_structured_output(self, structured_output: Any) -> None:

|

||||

# Configure your external agent to produce output in the specified format

|

||||

# Example: self.external_agent.set_output_format(structured_output)

|

||||

self.adapted_structured_output = True # Signal that structured output is handled

|

||||

print(f"Configuring structured output for MyCustomAgentAdapter: {structured_output}")

|

||||

```

|

||||

|

||||

By implementing these methods, your `MyCustomAgentAdapter` will allow your custom agent implementation to function correctly within a CrewAI crew, interacting with tasks and tools seamlessly. Remember to replace the example comments and print statements with your actual adaptation logic specific to the external agent framework you are integrating.

|

||||

|

||||

## BaseToolAdapter implementation

|

||||

The `BaseToolAdapter` class is responsible for converting CrewAI's native `BaseTool` objects into a format that your specific external agent framework can understand and utilize. Different agent frameworks (like LangGraph, OpenAI Assistants, etc.) have their own unique ways of defining and handling tools, and the `BaseToolAdapter` acts as the translator.

|

||||

|

||||

Here's how you implement your custom tool adapter:

|

||||

|

||||

1. **Inherit from `BaseToolAdapter`**:

|

||||

```python

|

||||

from crewai.agents.agent_adapters.base_tool_adapter import BaseToolAdapter

|

||||

from crewai.tools import BaseTool

|

||||

from typing import List, Any

|

||||

|

||||

class MyCustomToolAdapter(BaseToolAdapter):

|

||||

# ... implementation details ...

|

||||

```

|

||||

|

||||

2. **Implement `configure_tools`**:

|

||||

This is the core abstract method you must implement. It receives a list of CrewAI `BaseTool` instances provided to the agent. Your task is to iterate through this list, adapt each `BaseTool` into the format expected by your external framework, and store the converted tools in the `self.converted_tools` list (which is initialized in the base class constructor).

|

||||

|

||||

```python

|

||||

def configure_tools(self, tools: List[BaseTool]) -> None:

|

||||

"""Configure and convert CrewAI tools for the specific implementation."""

|

||||

self.converted_tools = [] # Reset in case it's called multiple times

|

||||

for tool in tools:

|

||||

# Sanitize the tool name if required by the target framework

|

||||

sanitized_name = self.sanitize_tool_name(tool.name)

|

||||

|

||||

# --- Your Conversion Logic Goes Here ---

|

||||

# Example: Convert BaseTool to a dictionary format for LangGraph

|

||||

# converted_tool = {

|

||||

# "name": sanitized_name,

|

||||

# "description": tool.description,

|

||||

# "parameters": tool.args_schema.schema() if tool.args_schema else {},

|

||||

# # Add any other framework-specific fields

|

||||

# }

|

||||

|

||||

# Example: Convert BaseTool to an OpenAI function definition

|

||||

# converted_tool = {

|

||||

# "type": "function",

|

||||

# "function": {

|

||||

# "name": sanitized_name,

|

||||

# "description": tool.description,

|

||||

# "parameters": tool.args_schema.schema() if tool.args_schema else {"type": "object", "properties": {}},

|

||||

# }

|

||||

# }

|

||||

|

||||

# --- Replace above examples with your actual adaptation ---

|

||||

converted_tool = self.adapt_tool_to_my_framework(tool, sanitized_name) # Placeholder

|

||||

|

||||

self.converted_tools.append(converted_tool)

|

||||

print(f"Adapted tool '{tool.name}' to '{sanitized_name}' for MyCustomToolAdapter") # Placeholder

|

||||

|

||||

print(f"MyCustomToolAdapter finished configuring tools: {len(self.converted_tools)} adapted.") # Placeholder

|

||||

|

||||

# --- Helper method for adaptation (Example) ---

|

||||

def adapt_tool_to_my_framework(self, tool: BaseTool, sanitized_name: str) -> Any:

|

||||

# Replace this with the actual logic to convert a CrewAI BaseTool

|

||||

# to the format needed by your specific external agent framework.

|

||||

# This will vary greatly depending on the target framework.

|

||||

adapted_representation = {

|

||||

"framework_specific_name": sanitized_name,

|

||||

"framework_specific_description": tool.description,

|

||||

"inputs": tool.args_schema.schema() if tool.args_schema else None,

|

||||

"implementation_reference": tool.run # Or however the framework needs to call it

|

||||

}

|

||||

# Also ensure the tool works both sync and async

|

||||

async def async_tool_wrapper(*args, **kwargs):

|

||||

output = tool.run(*args, **kwargs)

|

||||

if inspect.isawaitable(output):

|

||||

return await output

|

||||

else:

|

||||

return output

|

||||

|

||||

adapted_tool = MyFrameworkTool(

|

||||

name=sanitized_name,

|

||||

description=tool.description,

|

||||

inputs=tool.args_schema.schema() if tool.args_schema else None,

|

||||

implementation_reference=async_tool_wrapper

|

||||

)

|

||||

|

||||

return adapted_representation

|

||||

|

||||

```

|

||||

|

||||

3. **Using the Adapter**:

|

||||

Typically, you would instantiate your `MyCustomToolAdapter` within your `MyCustomAgentAdapter`'s `configure_tools` method and use it to process the tools before configuring your external agent.

|

||||

|

||||

```python

|

||||

# Inside MyCustomAgentAdapter.configure_tools

|

||||

def configure_tools(self, tools: Optional[List[BaseTool]] = None) -> None:

|

||||

if tools:

|

||||

tool_adapter = MyCustomToolAdapter() # Instantiate your tool adapter

|

||||

tool_adapter.configure_tools(tools) # Convert the tools

|

||||

adapted_tools = tool_adapter.tools() # Get the converted tools

|

||||

|

||||

# Now configure your external agent with the adapted_tools

|

||||

# Example: self.external_agent.set_tools(adapted_tools)

|

||||

print(f"Configuring external agent with adapted tools: {adapted_tools}") # Placeholder

|

||||

else:

|

||||

# Handle no tools case

|

||||

print("No tools provided for MyCustomAgentAdapter.")

|

||||

```

|

||||

|

||||

By creating a `BaseToolAdapter`, you decouple the tool conversion logic from the agent adaptation, making the integration cleaner and more modular. Remember to replace the placeholder examples with the actual conversion logic required by your specific external agent framework.

|

||||

|

||||

## BaseConverter

|

||||

The `BaseConverterAdapter` plays a crucial role when a CrewAI `Task` requires an agent to return its final output in a specific structured format, such as JSON or a Pydantic model. It bridges the gap between CrewAI's structured output requirements and the capabilities of your external agent.

|

||||

|

||||

Its primary responsibilities are:

|

||||

1. **Configuring the Agent for Structured Output:** Based on the `Task`'s requirements (`output_json` or `output_pydantic`), it instructs the associated `BaseAgentAdapter` (and indirectly, the external agent) on what format is expected.

|

||||

2. **Enhancing the System Prompt:** It modifies the agent's system prompt to include clear instructions on *how* to generate the output in the required structure.

|

||||

3. **Post-processing the Result:** It takes the raw output from the agent and attempts to parse, validate, and format it according to the required structure, ultimately returning a string representation (e.g., a JSON string).

|

||||

|

||||

Here's how you implement your custom converter adapter:

|

||||

|

||||

1. **Inherit from `BaseConverterAdapter`**:

|

||||

```python

|

||||

from crewai.agents.agent_adapters.base_converter_adapter import BaseConverterAdapter

|

||||

# Assuming you have your MyCustomAgentAdapter defined

|

||||

# from .my_custom_agent_adapter import MyCustomAgentAdapter

|

||||

from crewai.task import Task

|

||||

from typing import Any

|

||||

|

||||

class MyCustomConverterAdapter(BaseConverterAdapter):

|

||||

# Store the expected output type (e.g., 'json', 'pydantic', 'text')

|

||||

_output_type: str = 'text'

|

||||

_output_schema: Any = None # Store JSON schema or Pydantic model

|

||||

|

||||

# ... implementation details ...

|

||||

```

|

||||

|

||||

2. **Implement `__init__`**:

|

||||

The constructor must accept the corresponding `agent_adapter` instance it will work with.

|

||||

|

||||

```python

|

||||

def __init__(self, agent_adapter: Any): # Use your specific AgentAdapter type hint

|

||||

self.agent_adapter = agent_adapter

|

||||

print(f"Initializing MyCustomConverterAdapter for agent adapter: {type(agent_adapter).__name__}")

|

||||

```

|

||||

|

||||

3. **Implement `configure_structured_output`**:

|

||||

This method receives the CrewAI `Task` object. You need to check the task's `output_json` and `output_pydantic` attributes to determine the required output structure. Store this information (e.g., in `_output_type` and `_output_schema`) and potentially call configuration methods on your `self.agent_adapter` if the external agent needs specific setup for structured output (which might have been partially handled in the agent adapter's `configure_structured_output` already).

|

||||

|

||||

```python

|

||||

def configure_structured_output(self, task: Task) -> None:

|

||||

"""Configure the expected structured output based on the task."""

|

||||

if task.output_pydantic:

|

||||

self._output_type = 'pydantic'

|

||||

self._output_schema = task.output_pydantic

|

||||

print(f"Converter: Configured for Pydantic output: {self._output_schema.__name__}")

|

||||

elif task.output_json:

|

||||

self._output_type = 'json'

|

||||

self._output_schema = task.output_json

|

||||

print(f"Converter: Configured for JSON output with schema: {self._output_schema}")

|

||||

else:

|

||||

self._output_type = 'text'

|

||||

self._output_schema = None

|

||||

print("Converter: Configured for standard text output.")

|

||||

|

||||

# Optionally, inform the agent adapter if needed

|

||||

# self.agent_adapter.set_output_mode(self._output_type, self._output_schema)

|

||||

```

|

||||

|

||||

4. **Implement `enhance_system_prompt`**:

|

||||

This method takes the agent's base system prompt string and should append instructions tailored to the currently configured `_output_type` and `_output_schema`. The goal is to guide the LLM powering the agent to produce output in the correct format.

|

||||

|

||||

```python

|

||||

def enhance_system_prompt(self, base_prompt: str) -> str:

|

||||

"""Enhance the system prompt with structured output instructions."""

|

||||

if self._output_type == 'text':

|

||||

return base_prompt # No enhancement needed for plain text

|

||||

|

||||

instructions = "\n\nYour final answer MUST be formatted as "

|

||||

if self._output_type == 'json':

|

||||

schema_str = json.dumps(self._output_schema, indent=2)

|

||||

instructions += f"a JSON object conforming to the following schema:\n```json\n{schema_str}\n```"

|

||||

elif self._output_type == 'pydantic':

|

||||

schema_str = json.dumps(self._output_schema.model_json_schema(), indent=2)

|

||||

instructions += f"a JSON object conforming to the Pydantic model '{self._output_schema.__name__}' with the following schema:\n```json\n{schema_str}\n```"

|

||||

|

||||

instructions += "\nEnsure your entire response is ONLY the valid JSON object, without any introductory text, explanations, or concluding remarks."

|

||||

|

||||

print(f"Converter: Enhancing prompt for {self._output_type} output.")

|

||||

return base_prompt + instructions

|

||||

```

|

||||

*Note: The exact prompt engineering might need tuning based on the agent/LLM being used.*

|

||||

|

||||

5. **Implement `post_process_result`**:

|

||||

This method receives the raw string output from the agent. If structured output was requested (`json` or `pydantic`), you should attempt to parse the string into the expected format. Handle potential parsing errors (e.g., log them, attempt simple fixes, or raise an exception). Crucially, the method must **always return a string**, even if the intermediate format was a dictionary or Pydantic object (e.g., by serializing it back to a JSON string).

|

||||

|

||||

```python

|

||||

import json

|

||||

from pydantic import ValidationError

|

||||

|

||||

def post_process_result(self, result: str) -> str:

|

||||

"""Post-process the agent's result to ensure it matches the expected format."""

|

||||

print(f"Converter: Post-processing result for {self._output_type} output.")

|

||||

if self._output_type == 'json':

|

||||

try:

|

||||

# Attempt to parse and re-serialize to ensure validity and consistent format

|

||||

parsed_json = json.loads(result)

|

||||

# Optional: Validate against self._output_schema if it's a JSON schema dictionary

|

||||

# from jsonschema import validate

|

||||

# validate(instance=parsed_json, schema=self._output_schema)

|

||||

return json.dumps(parsed_json)

|

||||

except json.JSONDecodeError as e:

|

||||

print(f"Error: Failed to parse JSON output: {e}\nRaw output:\n{result}")

|

||||

# Handle error: return raw, raise exception, or try to fix

|

||||

return result # Example: return raw output on failure

|

||||

# except Exception as e: # Catch validation errors if using jsonschema

|

||||

# print(f"Error: JSON output failed schema validation: {e}\nRaw output:\n{result}")

|

||||

# return result

|

||||

elif self._output_type == 'pydantic':

|

||||

try:

|

||||

# Attempt to parse into the Pydantic model

|

||||

model_instance = self._output_schema.model_validate_json(result)

|

||||

# Return the model serialized back to JSON

|

||||

return model_instance.model_dump_json()

|

||||

except ValidationError as e:

|

||||

print(f"Error: Failed to validate Pydantic output: {e}\nRaw output:\n{result}")

|

||||

# Handle error

|

||||

return result # Example: return raw output on failure

|

||||

except json.JSONDecodeError as e:

|

||||

print(f"Error: Failed to parse JSON for Pydantic model: {e}\nRaw output:\n{result}")

|

||||

return result

|

||||

else: # 'text'

|

||||

return result # No processing needed for plain text

|

||||

```

|

||||

|

||||

By implementing these methods, your `MyCustomConverterAdapter` ensures that structured output requests from CrewAI tasks are correctly handled by your integrated external agent, improving the reliability and usability of your custom agent within the CrewAI framework.

|

||||

|

||||

## Out of the Box Adapters

|

||||

|

||||

We provide out of the box adapters for the following frameworks:

|

||||

1. LangGraph

|

||||

2. OpenAI Agents

|

||||

|

||||

## Kicking off a crew with adapted agents:

|

||||

|

||||

```python

|

||||

import json

|

||||

import os

|

||||

from typing import List

|

||||

|

||||

from crewai_tools import SerperDevTool

|

||||

from src.crewai import Agent, Crew, Task

|

||||

from langchain_openai import ChatOpenAI

|

||||

from pydantic import BaseModel

|

||||

|

||||

from crewai.agents.agent_adapters.langgraph.langgraph_adapter import (

|

||||

LangGraphAgentAdapter,

|

||||

)

|

||||

from crewai.agents.agent_adapters.openai_agents.openai_adapter import OpenAIAgentAdapter

|

||||

|

||||

# CrewAI Agent

|

||||

code_helper_agent = Agent(

|

||||

role="Code Helper",

|

||||

goal="Help users solve coding problems effectively and provide clear explanations.",

|

||||

backstory="You are an experienced programmer with deep knowledge across multiple programming languages and frameworks. You specialize in solving complex coding challenges and explaining solutions clearly.",

|

||||

allow_delegation=False,

|

||||

verbose=True,

|

||||

)

|

||||

# OpenAI Agent Adapter

|

||||

link_finder_agent = OpenAIAgentAdapter(

|

||||

role="Link Finder",

|

||||

goal="Find the most relevant and high-quality resources for coding tasks.",

|

||||

backstory="You are a research specialist with a talent for finding the most helpful resources. You're skilled at using search tools to discover documentation, tutorials, and examples that directly address the user's coding needs.",

|

||||

tools=[SerperDevTool()],

|

||||

allow_delegation=False,

|

||||

verbose=True,

|

||||

)

|

||||

|

||||

# LangGraph Agent Adapter

|

||||

reporter_agent = LangGraphAgentAdapter(

|

||||

role="Reporter",

|

||||

goal="Report the results of the tasks.",

|

||||

backstory="You are a reporter who reports the results of the other tasks",

|

||||

llm=ChatOpenAI(model="gpt-4o"),

|

||||

allow_delegation=True,

|

||||

verbose=True,

|

||||

)

|

||||

|

||||

|

||||

class Code(BaseModel):

|

||||

code: str

|

||||

|

||||

|

||||

task = Task(

|

||||

description="Give an answer to the coding question: {task}",

|

||||

expected_output="A thorough answer to the coding question: {task}",

|

||||

agent=code_helper_agent,

|

||||

output_json=Code,

|

||||

)

|

||||

task2 = Task(

|

||||

description="Find links to resources that can help with coding tasks. Use the serper tool to find resources that can help.",

|

||||

expected_output="A list of links to resources that can help with coding tasks",

|

||||

agent=link_finder_agent,

|

||||

)

|

||||

|

||||

|

||||

class Report(BaseModel):

|

||||

code: str

|

||||

links: List[str]

|

||||

|

||||

|

||||

task3 = Task(

|

||||

description="Report the results of the tasks.",

|

||||

expected_output="A report of the results of the tasks. this is the code produced and then the links to the resources that can help with the coding task.",

|

||||

agent=reporter_agent,

|

||||

output_json=Report,

|

||||

)

|

||||

# Use in CrewAI

|

||||

crew = Crew(

|

||||

agents=[code_helper_agent, link_finder_agent, reporter_agent],

|

||||

tasks=[task, task2, task3],

|

||||

verbose=True,

|

||||

)

|

||||

|

||||

result = crew.kickoff(

|

||||

inputs={"task": "How do you implement an abstract class in python?"}

|

||||

)

|

||||

|

||||

# Print raw result first

|

||||

print("Raw result:", result)

|

||||

|

||||

# Handle result based on its type

|

||||

if hasattr(result, "json_dict") and result.json_dict:

|

||||

json_result = result.json_dict

|

||||

print("\nStructured JSON result:")

|

||||

print(f"{json.dumps(json_result, indent=2)}")

|

||||

|

||||

# Access fields safely

|

||||

if isinstance(json_result, dict):

|

||||

if "code" in json_result:

|

||||

print("\nCode:")

|

||||

print(

|

||||

json_result["code"][:200] + "..."

|

||||

if len(json_result["code"]) > 200

|

||||

else json_result["code"]

|

||||

)

|

||||

|

||||

if "links" in json_result:

|

||||

print("\nLinks:")

|

||||

for link in json_result["links"][:5]: # Print first 5 links

|

||||

print(f"- {link}")

|

||||

if len(json_result["links"]) > 5:

|

||||

print(f"...and {len(json_result['links']) - 5} more links")

|

||||

elif hasattr(result, "pydantic") and result.pydantic:

|

||||

print("\nPydantic model result:")

|

||||

print(result.pydantic.model_dump_json(indent=2))

|

||||

else:

|

||||

# Fallback to raw output

|

||||

print("\nNo structured result available, using raw output:")

|

||||

print(result.raw[:500] + "..." if len(result.raw) > 500 else result.raw)

|

||||

|

||||

```

|

||||

@@ -1,95 +0,0 @@

|

||||

---

|

||||

title: Coding Agents

|

||||

description: Learn how to enable your CrewAI Agents to write and execute code, and explore advanced features for enhanced functionality.

|

||||

icon: rectangle-code

|

||||

---

|

||||

|

||||

## Introduction

|

||||

|

||||

CrewAI Agents now have the powerful ability to write and execute code, significantly enhancing their problem-solving capabilities. This feature is particularly useful for tasks that require computational or programmatic solutions.

|

||||

|

||||

## Enabling Code Execution

|

||||

|

||||

To enable code execution for an agent, set the `allow_code_execution` parameter to `True` when creating the agent.

|

||||

|

||||

Here's an example:

|

||||

|

||||

```python Code

|

||||

from crewai import Agent

|

||||

|

||||

coding_agent = Agent(

|

||||

role="Senior Python Developer",

|

||||

goal="Craft well-designed and thought-out code",

|

||||

backstory="You are a senior Python developer with extensive experience in software architecture and best practices.",

|

||||

allow_code_execution=True

|

||||

)

|

||||

```

|

||||

|

||||

<Note>

|

||||

Note that `allow_code_execution` parameter defaults to `False`.

|

||||

</Note>

|

||||

|

||||

## Important Considerations

|

||||

|

||||

1. **Model Selection**: It is strongly recommended to use more capable models like Claude 3.5 Sonnet and GPT-4 when enabling code execution.

|

||||

These models have a better understanding of programming concepts and are more likely to generate correct and efficient code.

|

||||

|

||||

2. **Error Handling**: The code execution feature includes error handling. If executed code raises an exception, the agent will receive the error message and can attempt to correct the code or

|

||||

provide alternative solutions. The `max_retry_limit` parameter, which defaults to 2, controls the maximum number of retries for a task.

|

||||

|

||||

3. **Dependencies**: To use the code execution feature, you need to install the `crewai_tools` package. If not installed, the agent will log an info message:

|

||||

"Coding tools not available. Install crewai_tools."

|

||||

|

||||

## Code Execution Process

|

||||

|

||||

When an agent with code execution enabled encounters a task requiring programming:

|

||||

|

||||

<Steps>

|

||||

<Step title="Task Analysis">

|

||||

The agent analyzes the task and determines that code execution is necessary.

|

||||

</Step>

|

||||

<Step title="Code Formulation">

|

||||

It formulates the Python code needed to solve the problem.

|

||||

</Step>

|

||||

<Step title="Code Execution">

|

||||

The code is sent to the internal code execution tool (`CodeInterpreterTool`).

|

||||

</Step>

|

||||

<Step title="Result Interpretation">

|

||||

The agent interprets the result and incorporates it into its response or uses it for further problem-solving.

|

||||

</Step>

|

||||

</Steps>

|

||||

|

||||

## Example Usage

|

||||

|

||||

Here's a detailed example of creating an agent with code execution capabilities and using it in a task:

|

||||

|

||||

```python Code

|

||||

from crewai import Agent, Task, Crew

|

||||

|

||||

# Create an agent with code execution enabled

|

||||

coding_agent = Agent(

|

||||

role="Python Data Analyst",

|

||||

goal="Analyze data and provide insights using Python",

|

||||

backstory="You are an experienced data analyst with strong Python skills.",

|

||||

allow_code_execution=True

|

||||

)

|

||||

|

||||

# Create a task that requires code execution

|

||||

data_analysis_task = Task(

|

||||

description="Analyze the given dataset and calculate the average age of participants.",

|

||||

agent=coding_agent

|

||||

)

|

||||

|

||||

# Create a crew and add the task

|

||||

analysis_crew = Crew(

|

||||

agents=[coding_agent],

|

||||

tasks=[data_analysis_task]

|

||||

)

|

||||

|

||||

# Execute the crew

|

||||

result = analysis_crew.kickoff()

|

||||

|

||||

print(result)

|

||||

```

|

||||

|

||||

In this example, the `coding_agent` can write and execute Python code to perform data analysis tasks.

|

||||

@@ -1,89 +0,0 @@

|

||||

---

|

||||

title: Conditional Tasks

|

||||

description: Learn how to use conditional tasks in a crewAI kickoff

|

||||

icon: diagram-subtask

|

||||

---

|

||||

|

||||

## Introduction

|

||||

|

||||

Conditional Tasks in crewAI allow for dynamic workflow adaptation based on the outcomes of previous tasks.

|

||||

This powerful feature enables crews to make decisions and execute tasks selectively, enhancing the flexibility and efficiency of your AI-driven processes.

|

||||

|

||||

## Example Usage

|

||||

|

||||

```python Code

|

||||

from typing import List

|

||||

from pydantic import BaseModel

|

||||

from crewai import Agent, Crew

|

||||

from crewai.tasks.conditional_task import ConditionalTask

|

||||

from crewai.tasks.task_output import TaskOutput

|

||||

from crewai.task import Task

|

||||

from crewai_tools import SerperDevTool

|

||||

|

||||

# Define a condition function for the conditional task

|

||||

# If false, the task will be skipped, if true, then execute the task.

|

||||

def is_data_missing(output: TaskOutput) -> bool:

|

||||

return len(output.pydantic.events) < 10 # this will skip this task

|

||||

|

||||

# Define the agents

|

||||

data_fetcher_agent = Agent(

|

||||

role="Data Fetcher",

|

||||

goal="Fetch data online using Serper tool",

|

||||

backstory="Backstory 1",

|

||||

verbose=True,

|

||||

tools=[SerperDevTool()]

|

||||

)

|

||||

|

||||

data_processor_agent = Agent(

|

||||

role="Data Processor",

|

||||

goal="Process fetched data",

|

||||

backstory="Backstory 2",

|

||||

verbose=True

|

||||

)

|

||||

|

||||

summary_generator_agent = Agent(

|

||||

role="Summary Generator",

|

||||

goal="Generate summary from fetched data",

|

||||

backstory="Backstory 3",

|

||||

verbose=True

|

||||

)

|

||||

|

||||

class EventOutput(BaseModel):

|

||||

events: List[str]

|

||||

|

||||

task1 = Task(

|

||||

description="Fetch data about events in San Francisco using Serper tool",

|

||||

expected_output="List of 10 things to do in SF this week",

|

||||

agent=data_fetcher_agent,

|

||||

output_pydantic=EventOutput,

|

||||

)

|

||||

|

||||

conditional_task = ConditionalTask(

|

||||

description="""

|

||||

Check if data is missing. If we have less than 10 events,

|

||||

fetch more events using Serper tool so that

|

||||

we have a total of 10 events in SF this week..

|

||||

""",

|

||||

expected_output="List of 10 Things to do in SF this week",

|

||||

condition=is_data_missing,

|

||||

agent=data_processor_agent,

|

||||

)

|

||||

|

||||

task3 = Task(

|

||||

description="Generate summary of events in San Francisco from fetched data",

|

||||

expected_output="A complete report on the customer and their customers and competitors, including their demographics, preferences, market positioning and audience engagement.",

|

||||

agent=summary_generator_agent,

|

||||

)

|

||||

|

||||

# Create a crew with the tasks

|

||||

crew = Crew(

|

||||

agents=[data_fetcher_agent, data_processor_agent, summary_generator_agent],

|

||||

tasks=[task1, conditional_task, task3],

|

||||

verbose=True,

|

||||

planning=True

|

||||

)

|

||||

|

||||

# Run the crew

|

||||

result = crew.kickoff()

|

||||

print("results", result)

|

||||

```

|

||||

@@ -1,69 +0,0 @@

|

||||

---

|

||||

title: Create Custom Tools

|

||||

description: Comprehensive guide on crafting, using, and managing custom tools within the CrewAI framework, including new functionalities and error handling.

|

||||

icon: hammer

|

||||

---

|

||||

|

||||

## Creating and Utilizing Tools in CrewAI

|

||||

|

||||

This guide provides detailed instructions on creating custom tools for the CrewAI framework and how to efficiently manage and utilize these tools,

|

||||

incorporating the latest functionalities such as tool delegation, error handling, and dynamic tool calling. It also highlights the importance of collaboration tools,

|

||||

enabling agents to perform a wide range of actions.

|

||||

|

||||

### Subclassing `BaseTool`

|

||||

|

||||

To create a personalized tool, inherit from `BaseTool` and define the necessary attributes, including the `args_schema` for input validation, and the `_run` method.

|

||||

|

||||

```python Code

|

||||

from typing import Type

|

||||

from crewai.tools import BaseTool

|

||||

from pydantic import BaseModel, Field

|

||||

|

||||

class MyToolInput(BaseModel):

|

||||

"""Input schema for MyCustomTool."""

|

||||

argument: str = Field(..., description="Description of the argument.")

|

||||

|

||||

class MyCustomTool(BaseTool):

|

||||

name: str = "Name of my tool"

|

||||

description: str = "What this tool does. It's vital for effective utilization."

|

||||

args_schema: Type[BaseModel] = MyToolInput

|

||||

|

||||

def _run(self, argument: str) -> str:

|

||||

# Your tool's logic here

|

||||

return "Tool's result"

|

||||

```

|

||||

|

||||

### Using the `tool` Decorator

|

||||

|

||||

Alternatively, you can use the tool decorator `@tool`. This approach allows you to define the tool's attributes and functionality directly within a function,

|

||||

offering a concise and efficient way to create specialized tools tailored to your needs.

|

||||

|

||||

```python Code

|

||||

from crewai.tools import tool

|

||||

|

||||

@tool("Tool Name")

|

||||

def my_simple_tool(question: str) -> str:

|

||||

"""Tool description for clarity."""

|

||||

# Tool logic here

|

||||

return "Tool output"

|

||||

```

|

||||

|

||||

### Defining a Cache Function for the Tool

|

||||

|

||||

To optimize tool performance with caching, define custom caching strategies using the `cache_function` attribute.

|

||||

|

||||

```python Code

|

||||

@tool("Tool with Caching")

|

||||

def cached_tool(argument: str) -> str:

|

||||

"""Tool functionality description."""

|

||||

return "Cacheable result"

|

||||

|

||||

def my_cache_strategy(arguments: dict, result: str) -> bool:

|

||||

# Define custom caching logic

|

||||

return True if some_condition else False

|

||||

|

||||

cached_tool.cache_function = my_cache_strategy

|

||||

```

|

||||

|

||||

By adhering to these guidelines and incorporating new functionalities and collaboration tools into your tool creation and management processes,

|

||||

you can leverage the full capabilities of the CrewAI framework, enhancing both the development experience and the efficiency of your AI agents.

|

||||

@@ -1,646 +0,0 @@

|

||||

---

|

||||

title: Custom LLM Implementation

|

||||

description: Learn how to create custom LLM implementations in CrewAI.

|

||||

icon: code

|

||||

---

|

||||

|

||||

## Custom LLM Implementations

|

||||

|

||||

CrewAI now supports custom LLM implementations through the `BaseLLM` abstract base class. This allows you to create your own LLM implementations that don't rely on litellm's authentication mechanism.

|

||||

|

||||

To create a custom LLM implementation, you need to:

|

||||

|

||||

1. Inherit from the `BaseLLM` abstract base class

|

||||

2. Implement the required methods:

|

||||

- `call()`: The main method to call the LLM with messages

|

||||

- `supports_function_calling()`: Whether the LLM supports function calling

|

||||

- `supports_stop_words()`: Whether the LLM supports stop words

|

||||

- `get_context_window_size()`: The context window size of the LLM

|

||||

|

||||

## Example: Basic Custom LLM

|

||||

|

||||

```python

|

||||

from crewai import BaseLLM

|

||||

from typing import Any, Dict, List, Optional, Union

|

||||

|

||||

class CustomLLM(BaseLLM):

|

||||

def __init__(self, api_key: str, endpoint: str):

|

||||

super().__init__() # Initialize the base class to set default attributes

|

||||

if not api_key or not isinstance(api_key, str):

|

||||

raise ValueError("Invalid API key: must be a non-empty string")

|

||||

if not endpoint or not isinstance(endpoint, str):

|

||||

raise ValueError("Invalid endpoint URL: must be a non-empty string")

|

||||

self.api_key = api_key

|

||||

self.endpoint = endpoint

|

||||

self.stop = [] # You can customize stop words if needed

|

||||

|

||||

def call(

|

||||

self,

|

||||

messages: Union[str, List[Dict[str, str]]],

|

||||

tools: Optional[List[dict]] = None,

|

||||

callbacks: Optional[List[Any]] = None,

|

||||

available_functions: Optional[Dict[str, Any]] = None,

|

||||

) -> Union[str, Any]:

|

||||

"""Call the LLM with the given messages.

|

||||

|

||||

Args:

|

||||

messages: Input messages for the LLM.

|

||||

tools: Optional list of tool schemas for function calling.

|

||||

callbacks: Optional list of callback functions.

|

||||

available_functions: Optional dict mapping function names to callables.

|

||||

|

||||

Returns:

|

||||

Either a text response from the LLM or the result of a tool function call.

|

||||

|

||||

Raises:

|

||||

TimeoutError: If the LLM request times out.

|

||||

RuntimeError: If the LLM request fails for other reasons.

|

||||

ValueError: If the response format is invalid.

|

||||

"""

|

||||

# Implement your own logic to call the LLM

|

||||

# For example, using requests:

|

||||

import requests

|

||||

|

||||

try:

|

||||

headers = {

|

||||

"Authorization": f"Bearer {self.api_key}",

|

||||

"Content-Type": "application/json"

|

||||

}

|

||||

|

||||

# Convert string message to proper format if needed

|

||||

if isinstance(messages, str):

|

||||

messages = [{"role": "user", "content": messages}]

|

||||

|

||||

data = {

|

||||

"messages": messages,

|

||||

"tools": tools

|

||||

}

|

||||

|

||||

response = requests.post(

|

||||

self.endpoint,

|

||||

headers=headers,

|

||||

json=data,

|

||||

timeout=30 # Set a reasonable timeout

|

||||

)

|

||||

response.raise_for_status() # Raise an exception for HTTP errors

|

||||

return response.json()["choices"][0]["message"]["content"]

|

||||

except requests.Timeout:

|

||||

raise TimeoutError("LLM request timed out")

|

||||

except requests.RequestException as e:

|

||||

raise RuntimeError(f"LLM request failed: {str(e)}")

|

||||

except (KeyError, IndexError, ValueError) as e:

|

||||

raise ValueError(f"Invalid response format: {str(e)}")

|

||||

|

||||

def supports_function_calling(self) -> bool:

|

||||

"""Check if the LLM supports function calling.

|

||||

|

||||

Returns:

|

||||

True if the LLM supports function calling, False otherwise.

|

||||

"""

|

||||

# Return True if your LLM supports function calling

|

||||

return True

|

||||

|

||||

def supports_stop_words(self) -> bool:

|

||||

"""Check if the LLM supports stop words.

|

||||

|

||||

Returns:

|

||||

True if the LLM supports stop words, False otherwise.

|

||||

"""

|

||||

# Return True if your LLM supports stop words

|

||||

return True

|

||||

|

||||

def get_context_window_size(self) -> int:

|

||||

"""Get the context window size of the LLM.

|

||||

|

||||

Returns:

|

||||

The context window size as an integer.

|

||||

"""

|

||||

# Return the context window size of your LLM

|

||||

return 8192

|

||||

```

|

||||

|

||||

## Error Handling Best Practices

|

||||

|

||||

When implementing custom LLMs, it's important to handle errors properly to ensure robustness and reliability. Here are some best practices:

|

||||

|

||||

### 1. Implement Try-Except Blocks for API Calls

|

||||

|

||||

Always wrap API calls in try-except blocks to handle different types of errors:

|

||||

|

||||

```python

|

||||

def call(

|

||||

self,

|

||||

messages: Union[str, List[Dict[str, str]]],

|

||||

tools: Optional[List[dict]] = None,

|

||||

callbacks: Optional[List[Any]] = None,

|

||||

available_functions: Optional[Dict[str, Any]] = None,

|

||||

) -> Union[str, Any]:

|

||||

try:

|

||||

# API call implementation

|

||||

response = requests.post(

|

||||

self.endpoint,

|

||||

headers=self.headers,

|

||||

json=self.prepare_payload(messages),

|

||||

timeout=30 # Set a reasonable timeout

|

||||

)

|

||||

response.raise_for_status() # Raise an exception for HTTP errors

|

||||

return response.json()["choices"][0]["message"]["content"]

|

||||

except requests.Timeout:

|

||||

raise TimeoutError("LLM request timed out")

|

||||

except requests.RequestException as e:

|

||||

raise RuntimeError(f"LLM request failed: {str(e)}")

|

||||

except (KeyError, IndexError, ValueError) as e:

|

||||

raise ValueError(f"Invalid response format: {str(e)}")

|

||||

```

|

||||

|

||||

### 2. Implement Retry Logic for Transient Failures

|

||||

|

||||

For transient failures like network issues or rate limiting, implement retry logic with exponential backoff:

|

||||

|

||||

```python

|

||||

def call(

|

||||

self,

|

||||

messages: Union[str, List[Dict[str, str]]],

|

||||

tools: Optional[List[dict]] = None,

|

||||

callbacks: Optional[List[Any]] = None,

|

||||

available_functions: Optional[Dict[str, Any]] = None,

|

||||

) -> Union[str, Any]:

|

||||

import time

|

||||

|

||||

max_retries = 3

|

||||

retry_delay = 1 # seconds

|

||||

|

||||

for attempt in range(max_retries):

|

||||

try:

|

||||

response = requests.post(

|

||||

self.endpoint,

|

||||

headers=self.headers,

|

||||

json=self.prepare_payload(messages),

|

||||

timeout=30

|

||||

)

|

||||

response.raise_for_status()

|

||||

return response.json()["choices"][0]["message"]["content"]

|

||||

except (requests.Timeout, requests.ConnectionError) as e:

|

||||

if attempt < max_retries - 1:

|

||||

time.sleep(retry_delay * (2 ** attempt)) # Exponential backoff

|

||||

continue

|

||||