diff --git a/docs/concepts/flows.mdx b/docs/concepts/flows.mdx

index 324118310..cf9d20f63 100644

--- a/docs/concepts/flows.mdx

+++ b/docs/concepts/flows.mdx

@@ -138,7 +138,7 @@ print("---- Final Output ----")

print(final_output)

````

-``` text Output

+```text Output

---- Final Output ----

Second method received: Output from first_method

````

diff --git a/docs/concepts/knowledge.mdx b/docs/concepts/knowledge.mdx

index 8a777833e..ba4f54450 100644

--- a/docs/concepts/knowledge.mdx

+++ b/docs/concepts/knowledge.mdx

@@ -4,8 +4,6 @@ description: What is knowledge in CrewAI and how to use it.

icon: book

---

-# Using Knowledge in CrewAI

-

## What is Knowledge?

Knowledge in CrewAI is a powerful system that allows AI agents to access and utilize external information sources during their tasks.

@@ -36,7 +34,20 @@ CrewAI supports various types of knowledge sources out of the box:

-## Quick Start

+## Supported Knowledge Parameters

+

+| Parameter | Type | Required | Description |

+| :--------------------------- | :---------------------------------- | :------- | :---------------------------------------------------------------------------------------------------------------------------------------------------- |

+| `sources` | **List[BaseKnowledgeSource]** | Yes | List of knowledge sources that provide content to be stored and queried. Can include PDF, CSV, Excel, JSON, text files, or string content. |

+| `collection_name` | **str** | No | Name of the collection where the knowledge will be stored. Used to identify different sets of knowledge. Defaults to "knowledge" if not provided. |

+| `storage` | **Optional[KnowledgeStorage]** | No | Custom storage configuration for managing how the knowledge is stored and retrieved. If not provided, a default storage will be created. |

+

+## Quickstart Example

+

+

+For file-based knowledge sources, place your files in a `knowledge` directory at the root of your project.

+When creating the source, use paths relative to that `knowledge` directory.

+

Here's an example using string-based knowledge:

@@ -80,7 +91,8 @@ result = crew.kickoff(inputs={"question": "What city does John live in and how o

```

-Here's another example with the `CrewDoclingSource`

+Here's another example with the `CrewDoclingSource`, which can handle multiple file formats including TXT, PDF, DOCX, HTML, and more.

+

```python Code

from crewai import LLM, Agent, Crew, Process, Task

from crewai.knowledge.source.crew_docling_source import CrewDoclingSource

@@ -128,39 +140,192 @@ result = crew.kickoff(

)

```

+## More Examples

+

+Here are examples of how to use different types of knowledge sources:

+

+### Text File Knowledge Source

+```python

+from crewai.knowledge.knowledge import Knowledge

+from crewai.knowledge.source.crew_docling_source import CrewDoclingSource

+

+# Create a text file knowledge source

+text_source = CrewDoclingSource(

+ file_paths=["document.txt", "another.txt"]

+)

+

+# Create knowledge with text file source

+knowledge = Knowledge(

+ collection_name="text_knowledge",

+ sources=[text_source]

+)

+```

+

+### PDF Knowledge Source

+```python

+from crewai.knowledge.knowledge import Knowledge

+from crewai.knowledge.source.pdf_knowledge_source import PDFKnowledgeSource

+

+# Create a PDF knowledge source

+pdf_source = PDFKnowledgeSource(

+ file_paths=["document.pdf", "another.pdf"]

+)

+

+# Create knowledge with PDF source

+knowledge = Knowledge(

+ collection_name="pdf_knowledge",

+ sources=[pdf_source]

+)

+```

+

+### CSV Knowledge Source

+```python

+from crewai.knowledge.knowledge import Knowledge

+from crewai.knowledge.source.csv_knowledge_source import CSVKnowledgeSource

+

+# Create a CSV knowledge source

+csv_source = CSVKnowledgeSource(

+ file_paths=["data.csv"]

+)

+

+# Create knowledge with CSV source

+knowledge = Knowledge(

+ collection_name="csv_knowledge",

+ sources=[csv_source]

+)

+```

+

+### Excel Knowledge Source

+```python

+from crewai.knowledge.knowledge import Knowledge

+from crewai.knowledge.source.excel_knowledge_source import ExcelKnowledgeSource

+

+# Create an Excel knowledge source

+excel_source = ExcelKnowledgeSource(

+ file_paths=["spreadsheet.xlsx"]

+)

+

+# Create knowledge with Excel source

+knowledge = Knowledge(

+ collection_name="excel_knowledge",

+ sources=[excel_source]

+)

+```

+

+### JSON Knowledge Source

+```python

+from crewai.knowledge.knowledge import Knowledge

+from crewai.knowledge.source.json_knowledge_source import JSONKnowledgeSource

+

+# Create a JSON knowledge source

+json_source = JSONKnowledgeSource(

+ file_paths=["data.json"]

+)

+

+# Create knowledge with JSON source

+knowledge = Knowledge(

+ collection_name="json_knowledge",

+ sources=[json_source]

+)

+```

+

## Knowledge Configuration

### Chunking Configuration

-Control how content is split for processing by setting the chunk size and overlap.

+Knowledge sources automatically chunk content for better processing.

+You can configure chunking behavior in your knowledge sources:

-```python Code

-knowledge_source = StringKnowledgeSource(

- content="Long content...",

- chunk_size=4000, # Characters per chunk (default)

- chunk_overlap=200 # Overlap between chunks (default)

+```python

+from crewai.knowledge.source.string_knowledge_source import StringKnowledgeSource

+

+source = StringKnowledgeSource(

+ content="Your content here",

+ chunk_size=4000, # Maximum size of each chunk (default: 4000)

+ chunk_overlap=200 # Overlap between chunks (default: 200)

)

```

-## Embedder Configuration

+The chunking configuration helps in:

+- Breaking down large documents into manageable pieces

+- Maintaining context through chunk overlap

+- Optimizing retrieval accuracy

-You can also configure the embedder for the knowledge store. This is useful if you want to use a different embedder for the knowledge store than the one used for the agents.

+### Embeddings Configuration

-```python Code

-...

+You can also configure the embedder for the knowledge store.

+This is useful if you want to use a different embedder for the knowledge store than the one used for the agents.

+The `embedder` parameter supports several embedding model providers, including:

+- `openai`: OpenAI's embedding models

+- `google`: Google's text embedding models

+- `azure`: Azure OpenAI embeddings

+- `ollama`: Local embeddings with Ollama

+- `vertexai`: Google Cloud VertexAI embeddings

+- `cohere`: Cohere's embedding models

+- `bedrock`: AWS Bedrock embeddings

+- `huggingface`: Hugging Face models

+- `watson`: IBM Watson embeddings

+

+Here's an example of how to configure the embedder for the knowledge store using Google's `text-embedding-004` model:

+

+```python Example

+from crewai import Agent, Task, Crew, Process, LLM

+from crewai.knowledge.source.string_knowledge_source import StringKnowledgeSource

+import os

+

+# Get the GEMINI API key

+GEMINI_API_KEY = os.environ.get("GEMINI_API_KEY")

+

+# Create a knowledge source

+content = "Users name is John. He is 30 years old and lives in San Francisco."

string_source = StringKnowledgeSource(

- content="Users name is John. He is 30 years old and lives in San Francisco.",

+ content=content,

)

+

+# Create an LLM with a temperature of 0 for more consistent, repeatable outputs

+gemini_llm = LLM(

+ model="gemini/gemini-1.5-pro-002",

+ api_key=GEMINI_API_KEY,

+ temperature=0,

+)

+

+# Create an agent with the knowledge store

+agent = Agent(

+ role="About User",

+ goal="You know everything about the user.",

+ backstory="""You are a master at understanding people and their preferences.""",

+ verbose=True,

+ allow_delegation=False,

+ llm=gemini_llm,

+)

+

+task = Task(

+ description="Answer the following questions about the user: {question}",

+ expected_output="An answer to the question.",

+ agent=agent,

+)

+

crew = Crew(

- ...

+ agents=[agent],

+ tasks=[task],

+ verbose=True,

+ process=Process.sequential,

knowledge_sources=[string_source],

embedder={

- "provider": "openai",

- "config": {"model": "text-embedding-3-small"},

- },

+ "provider": "google",

+ "config": {

+ "model": "models/text-embedding-004",

+ "api_key": GEMINI_API_KEY,

+ }

+ }

)

-```

+result = crew.kickoff(inputs={"question": "What city does John live in and how old is he?"})

+```

+```text Output

+# Agent: About User

+## Task: Answer the following questions about the user: What city does John live in and how old is he?

+

+# Agent: About User

+## Final Answer:

+John is 30 years old and lives in San Francisco.

+```

+

## Clearing Knowledge

If you need to clear the knowledge stored in CrewAI, you can use the `crewai reset-memories` command with the `--knowledge` option.
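As a rough illustration of what the `chunk_size` and `chunk_overlap` settings documented in `knowledge.mdx` above control, here is a minimal sketch of character-based chunking with overlap. The `chunk_text` helper is hypothetical and is not CrewAI's implementation; it assumes sizes are measured in characters and that each chunk starts `chunk_size - chunk_overlap` characters after the previous one.

```python
from typing import List


def chunk_text(text: str, chunk_size: int = 4000, chunk_overlap: int = 200) -> List[str]:
    """Split text into overlapping character chunks (illustrative helper only)."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be >= 0 and smaller than chunk_size")

    step = chunk_size - chunk_overlap  # distance between consecutive chunk starts
    chunks: List[str] = []
    start = 0
    while start < len(text):
        chunks.append(text[start:start + chunk_size])
        # Stop once this chunk already reaches the end of the text.
        if start + chunk_size >= len(text):
            break
        start += step
    return chunks
```

With `chunk_text("abcdefghij", chunk_size=4, chunk_overlap=1)` the result is `["abcd", "defg", "ghij"]`: each chunk repeats the last character of the previous one, which is how overlap preserves context across chunk boundaries.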

diff --git a/docs/how-to/Portkey-Observability-and-Guardrails.md b/docs/how-to/portkey-observability-and-guardrails.mdx

similarity index 100%

rename from docs/how-to/Portkey-Observability-and-Guardrails.md

rename to docs/how-to/portkey-observability-and-guardrails.mdx

diff --git a/docs/how-to/portkey-observability.mdx b/docs/how-to/portkey-observability.mdx

new file mode 100644

index 000000000..4002323a5

--- /dev/null

+++ b/docs/how-to/portkey-observability.mdx

@@ -0,0 +1,202 @@

+---

+title: Portkey Observability and Guardrails

+description: How to use Portkey with CrewAI

+icon: key

+---

+

+

+

+[Portkey](https://portkey.ai/?utm_source=crewai&utm_medium=crewai&utm_campaign=crewai) is a 2-line upgrade to make your CrewAI agents reliable, cost-efficient, and fast.

+

+Portkey adds 4 core production capabilities to any CrewAI agent:

+1. Routing to **200+ LLMs**

+2. Making each LLM call more robust

+3. Full-stack tracing with cost and performance analytics

+4. Real-time guardrails to enforce behavior

+

+## Getting Started

+

+

+

+ ```bash

+ pip install -qU crewai portkey-ai

+ ```

+

+

+ To build CrewAI Agents with Portkey, you'll need two keys:

+ - **Portkey API Key**: Sign up on the [Portkey app](https://app.portkey.ai/?utm_source=crewai&utm_medium=crewai&utm_campaign=crewai) and copy your API key

+ - **Virtual Key**: Virtual Keys securely manage your LLM API keys in one place. Store your LLM provider API keys securely in Portkey's vault

+

+ ```python

+ from crewai import LLM

+ from portkey_ai import createHeaders, PORTKEY_GATEWAY_URL

+

+ gpt_llm = LLM(

+ model="gpt-4",

+ base_url=PORTKEY_GATEWAY_URL,

+ api_key="dummy", # We are using Virtual key

+ extra_headers=createHeaders(

+ api_key="YOUR_PORTKEY_API_KEY",

+ virtual_key="YOUR_VIRTUAL_KEY", # Enter your Virtual key from Portkey

+ )

+ )

+ ```

+

+

+ ```python

+ from crewai import Agent, Task, Crew

+

+ # Define your agents with roles and goals

+ coder = Agent(

+ role='Software developer',

+ goal='Write clear, concise code on demand',

+ backstory='An expert coder with a keen eye for software trends.',

+ llm=gpt_llm

+ )

+

+ # Create tasks for your agents

+ task1 = Task(

+ description="Define the HTML for making a simple website with heading- Hello World! Portkey is working!",

+ expected_output="A clear and concise HTML code",

+ agent=coder

+ )

+

+ # Instantiate your crew

+ crew = Crew(

+ agents=[coder],

+ tasks=[task1],

+ )

+

+ result = crew.kickoff()

+ print(result)

+ ```

+

+

+

+## Key Features

+

+| Feature | Description |

+|:--------|:------------|

+| 🌐 Multi-LLM Support | Access OpenAI, Anthropic, Gemini, Azure, and 250+ providers through a unified interface |

+| 🛡️ Production Reliability | Implement retries, timeouts, load balancing, and fallbacks |

+| 📊 Advanced Observability | Track 40+ metrics including costs, tokens, latency, and custom metadata |

+| 🔍 Comprehensive Logging | Debug with detailed execution traces and function call logs |

+| 🚧 Security Controls | Set budget limits and implement role-based access control |

+| 🔄 Performance Analytics | Capture and analyze feedback for continuous improvement |

+| 💾 Intelligent Caching | Reduce costs and latency with semantic or simple caching |

+

+

+## Production Features with Portkey Configs

+

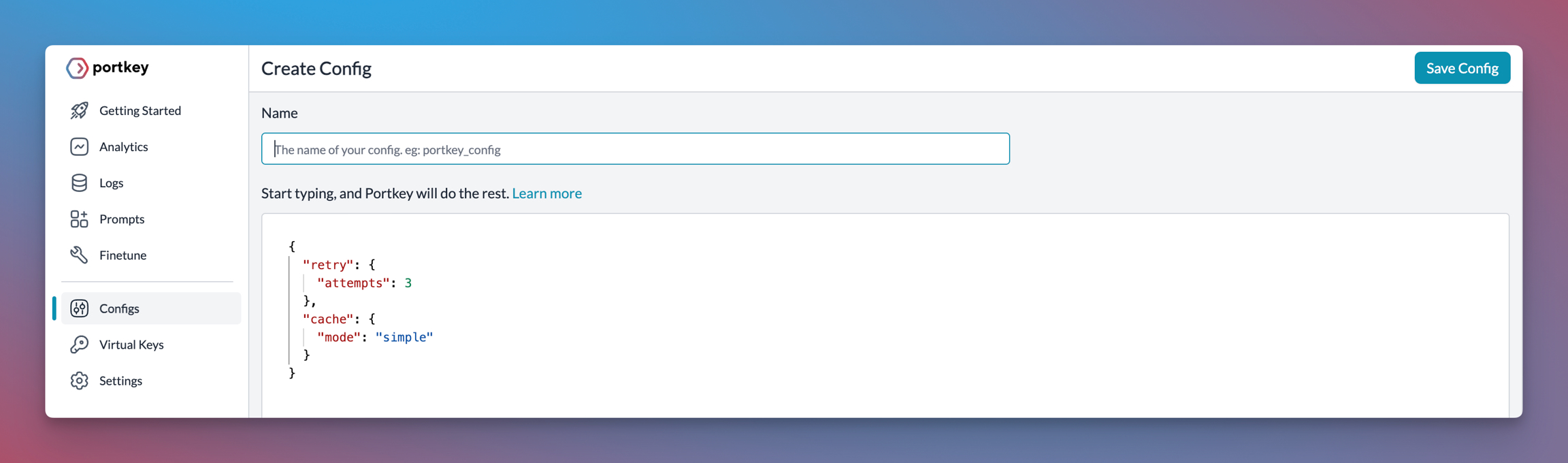

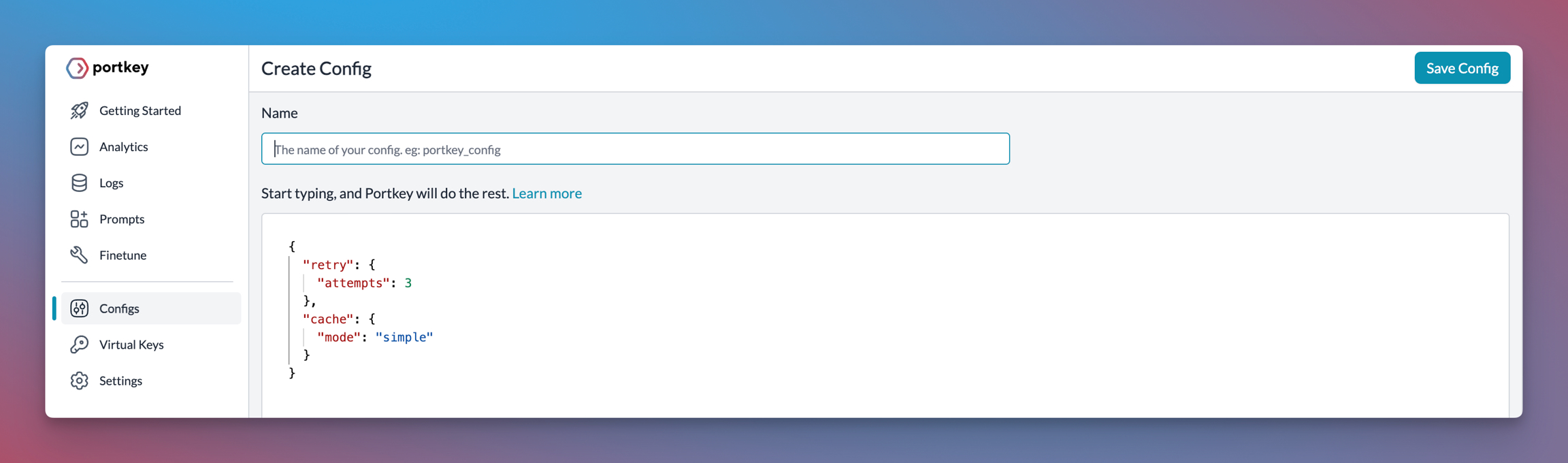

+All of the features below are configured through Portkey's Config system, which lets you define routing strategies as simple JSON objects in your LLM API calls. You can create and manage Configs directly in your code or through the Portkey Dashboard. Each Config has a unique ID for easy reference.

+

+

+

+

+


+

+

+

+

+

+### 1. Use 250+ LLMs

+Access various LLMs like Anthropic, Gemini, Mistral, Azure OpenAI, and more with minimal code changes. Switch between providers or use them together seamlessly. [Learn more about Universal API](https://portkey.ai/docs/product/ai-gateway/universal-api)

+

+

+Easily switch between different LLM providers:

+

+```python

+# Anthropic Configuration

+anthropic_llm = LLM(

+ model="claude-3-5-sonnet-latest",

+ base_url=PORTKEY_GATEWAY_URL,

+ api_key="dummy",

+ extra_headers=createHeaders(

+ api_key="YOUR_PORTKEY_API_KEY",

+ virtual_key="YOUR_ANTHROPIC_VIRTUAL_KEY", #You don't need provider when using Virtual keys

+ trace_id="anthropic_agent"

+ )

+)

+

+# Azure OpenAI Configuration

+azure_llm = LLM(

+ model="gpt-4",

+ base_url=PORTKEY_GATEWAY_URL,

+ api_key="dummy",

+ extra_headers=createHeaders(

+ api_key="YOUR_PORTKEY_API_KEY",

+ virtual_key="YOUR_AZURE_VIRTUAL_KEY", #You don't need provider when using Virtual keys

+ trace_id="azure_agent"

+ )

+)

+```

+

+

+### 2. Caching

+Improve response times and reduce costs with two powerful caching modes:

+- **Simple Cache**: Perfect for exact matches

+- **Semantic Cache**: Matches responses for requests that are semantically similar

+[Learn more about Caching](https://portkey.ai/docs/product/ai-gateway/cache-simple-and-semantic)

+

+```py

+config = {

+ "cache": {

+ "mode": "semantic", # or "simple" for exact matching

+ }

+}

+```

+

+### 3. Production Reliability

+Portkey provides comprehensive reliability features:

+- **Automatic Retries**: Handle temporary failures gracefully

+- **Request Timeouts**: Prevent hanging operations

+- **Conditional Routing**: Route requests based on specific conditions

+- **Fallbacks**: Set up automatic provider failovers

+- **Load Balancing**: Distribute requests efficiently

+

+[Learn more about Reliability Features](https://portkey.ai/docs/product/ai-gateway/)

+

+

+

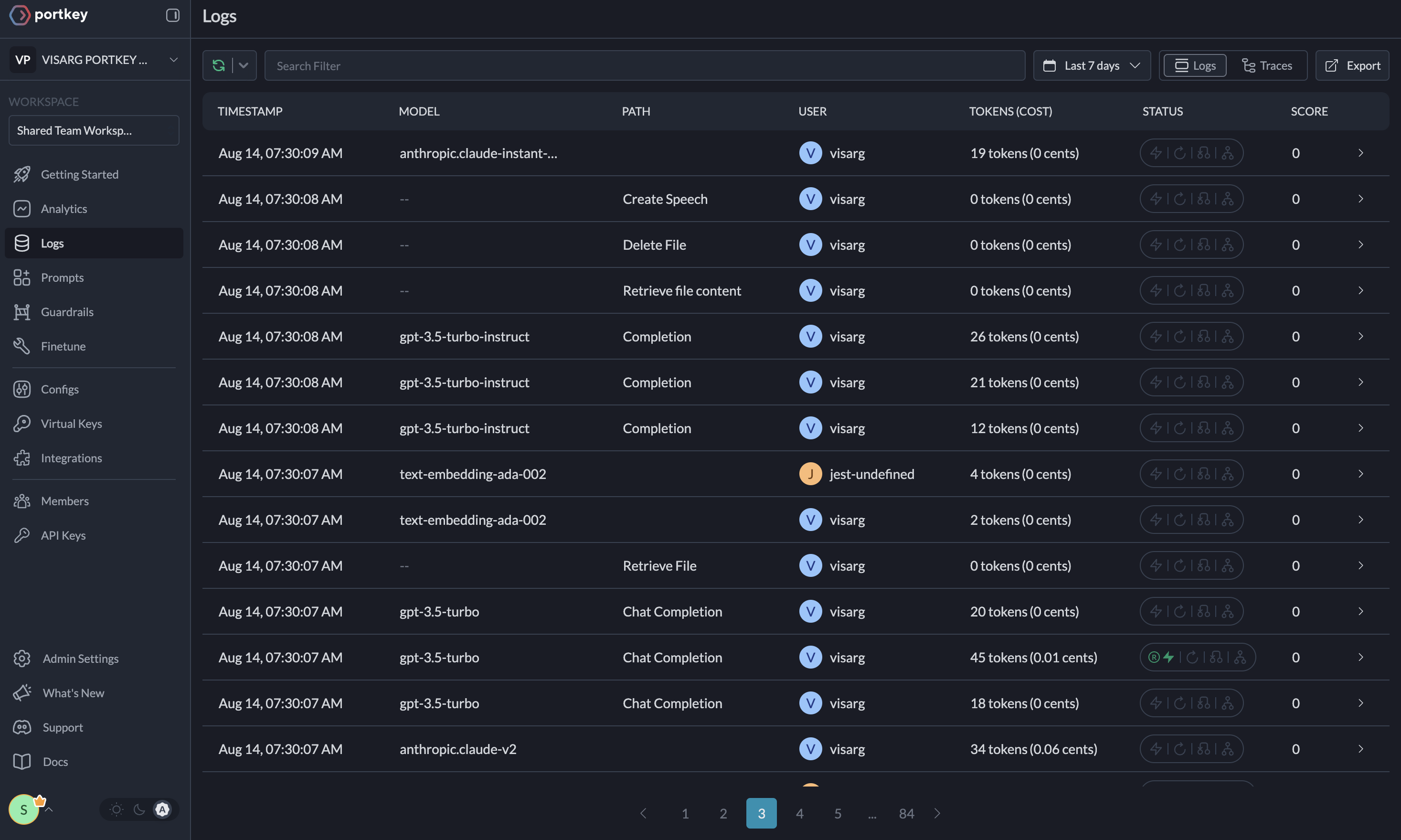

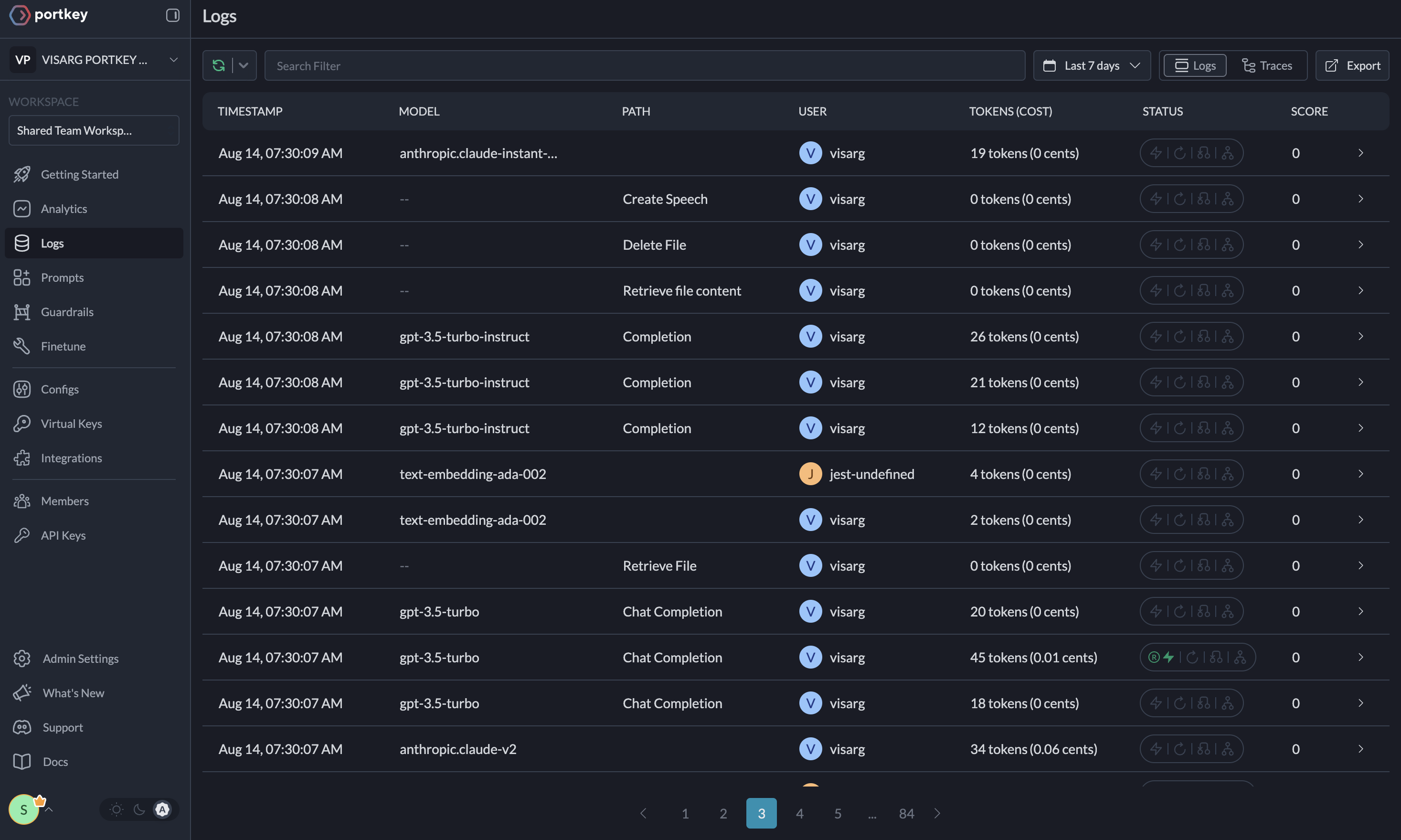

+### 4. Metrics

+

+Agent runs are complex. Portkey automatically logs **40+ comprehensive metrics** for your AI agents, including cost, tokens used, latency, etc. Whether you need a broad overview or granular insights into your agent runs, Portkey's customizable filters provide the metrics you need.

+

+

+- Cost per agent interaction

+- Response times and latency

+- Token usage and efficiency

+- Success/failure rates

+- Cache hit rates

+

+

+

+

+


+

+ +

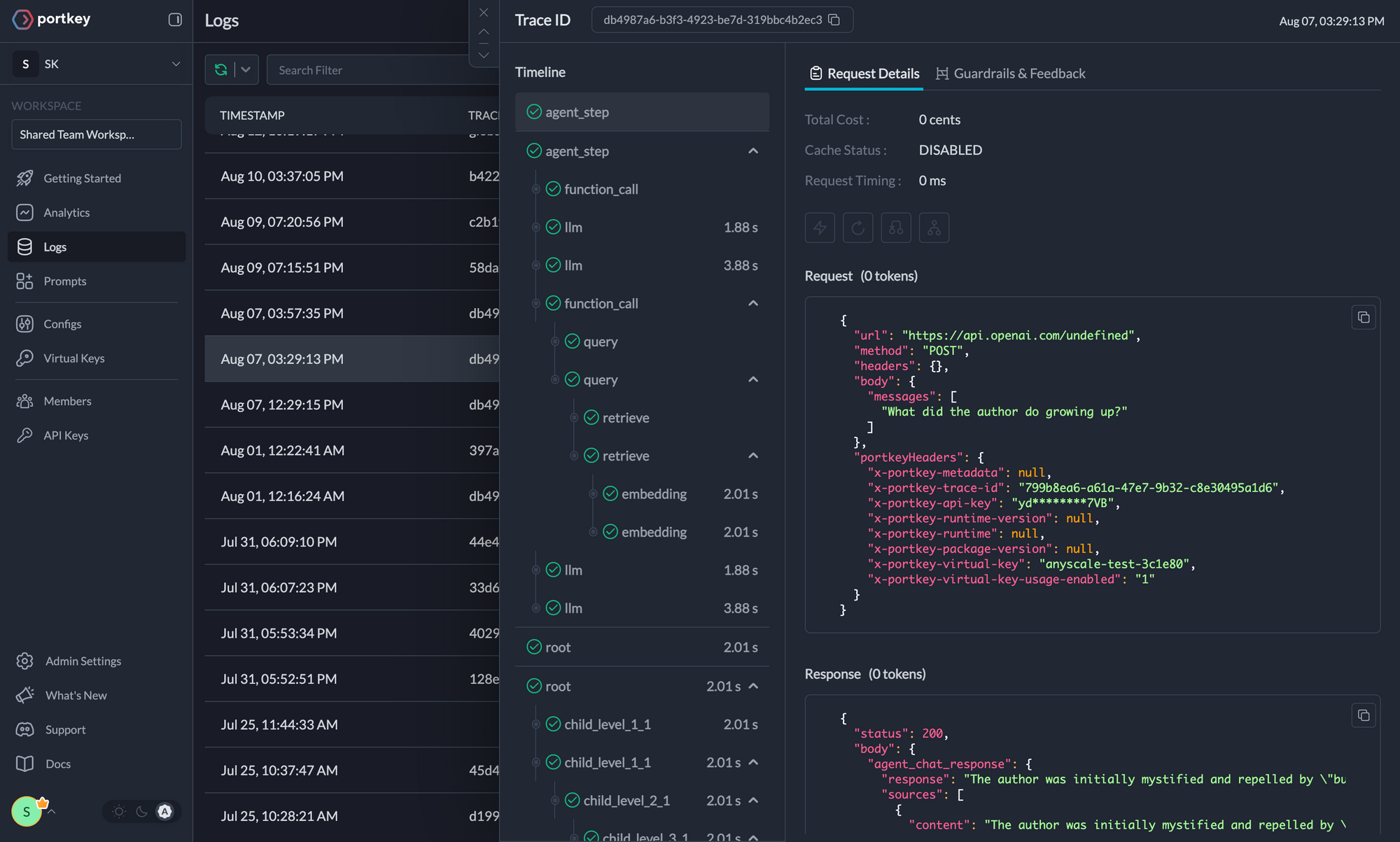

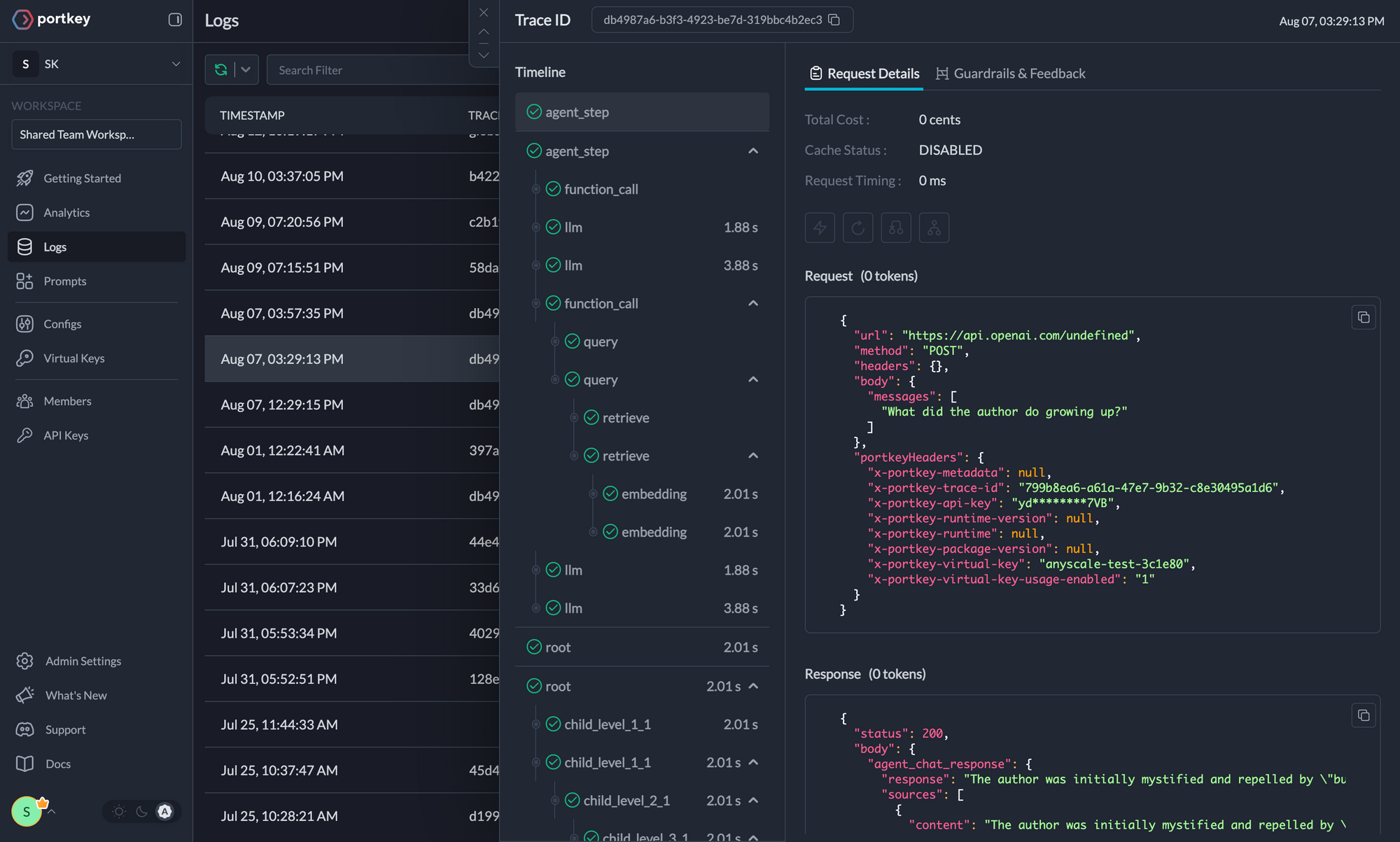

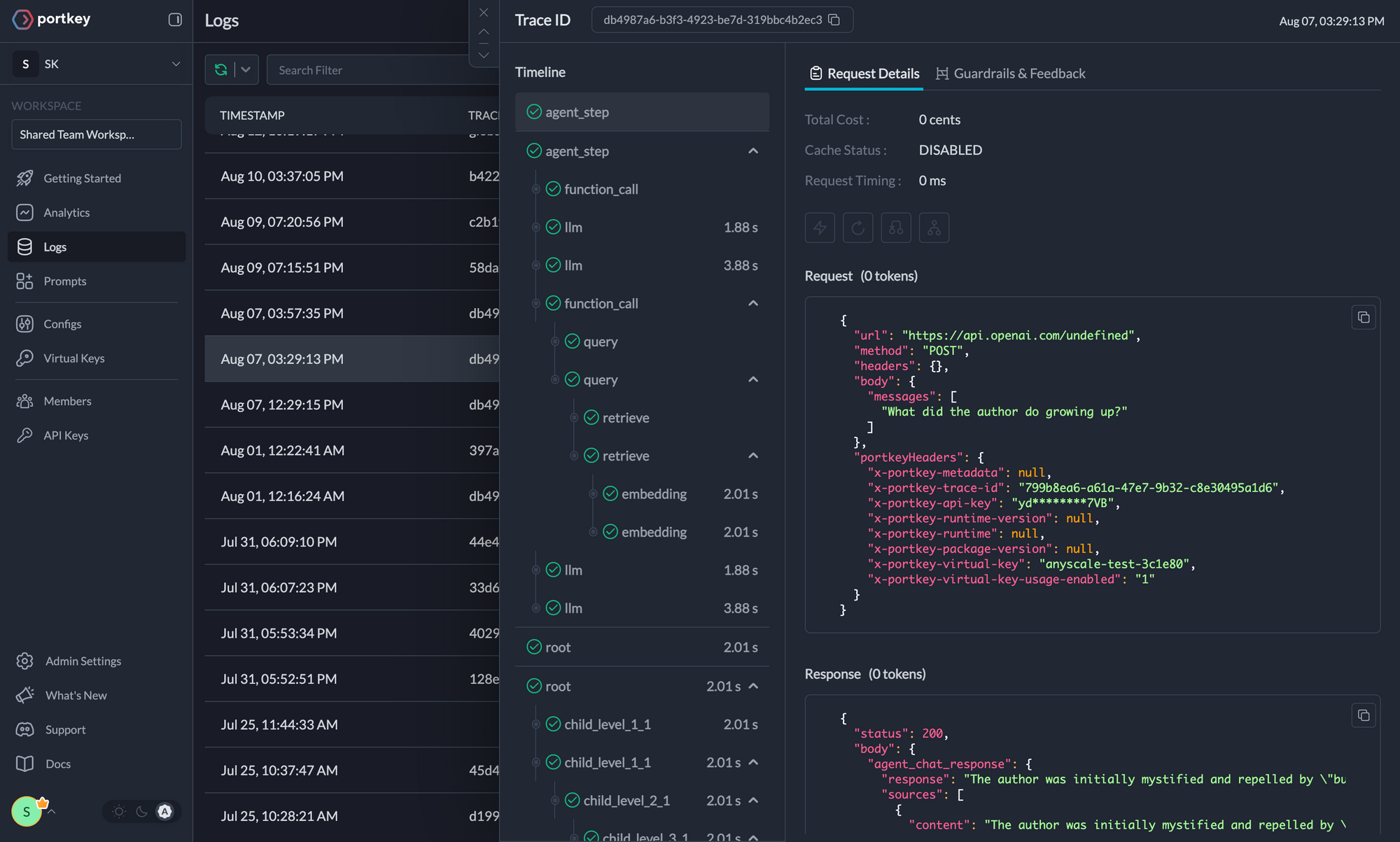

+### 5. Detailed Logging

+Logs are essential for understanding agent behavior, diagnosing issues, and improving performance. They provide a detailed record of agent activities and tool use, which is crucial for debugging and optimizing processes.

+

+

+Access a dedicated section to view records of agent executions, including parameters, outcomes, function calls, and errors. Filter logs based on multiple parameters such as trace ID, model, tokens used, and metadata.

+

+

+


+

+

+[Figure: Traces]

+

+[Figure: Logs]

+

+

+### 6. Enterprise Security Features

+- Set budget limits and rate limits per Virtual Key (disposable API keys)

+- Implement role-based access control

+- Track system changes with audit logs

+- Configure data retention policies

+

+

+

+For detailed information on creating and managing Configs, visit the [Portkey documentation](https://docs.portkey.ai/product/ai-gateway/configs).

+

+## Resources

+

+- [📘 Portkey Documentation](https://docs.portkey.ai)

+- [📊 Portkey Dashboard](https://app.portkey.ai/?utm_source=crewai&utm_medium=crewai&utm_campaign=crewai)

+- [🐦 Twitter](https://twitter.com/portkeyai)

+- [💬 Discord Community](https://discord.gg/DD7vgKK299)
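The Portkey page above shows a Config only for caching. As a hedged sketch of how the reliability features it lists (retries, fallbacks, timeouts) might be combined with caching in one Config object, the helper below builds a plain dictionary. The `build_reliability_config` function is hypothetical, and the field names `retry`, `strategy`, `targets`, and `request_timeout` are assumptions based on Portkey's Config documentation; only the `cache.mode` values come from the page itself, so check the schema before relying on it.

```python
import json
from typing import Any, Dict


def build_reliability_config(
    primary_virtual_key: str,
    fallback_virtual_key: str,
    retry_attempts: int = 3,
    cache_mode: str = "simple",
    request_timeout_ms: int = 10_000,
) -> Dict[str, Any]:
    """Build a Config-style dict (field names are assumptions, see lead-in)."""
    if cache_mode not in {"simple", "semantic"}:
        raise ValueError("cache_mode must be 'simple' or 'semantic'")
    return {
        # Assumed schema: try targets in order, moving on when one fails.
        "strategy": {"mode": "fallback"},
        "targets": [
            {"virtual_key": primary_virtual_key},
            {"virtual_key": fallback_virtual_key},
        ],
        # Assumed schema: retry transient failures a fixed number of times.
        "retry": {"attempts": retry_attempts},
        # Matches the caching example in the page: "simple" or "semantic".
        "cache": {"mode": cache_mode},
        # Assumed schema: timeout in milliseconds.
        "request_timeout": request_timeout_ms,
    }


config = build_reliability_config(
    "YOUR_OPENAI_VIRTUAL_KEY", "YOUR_ANTHROPIC_VIRTUAL_KEY", cache_mode="semantic"
)
print(json.dumps(config, indent=2))
```

A dictionary like this would presumably be passed alongside the API key when building the request headers; the exact parameter for doing so is not shown in the page and should be confirmed in Portkey's documentation.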

diff --git a/docs/mint.json b/docs/mint.json

index fad9689b8..9103434b4 100644

--- a/docs/mint.json

+++ b/docs/mint.json

@@ -100,7 +100,8 @@

"how-to/conditional-tasks",

"how-to/agentops-observability",

"how-to/langtrace-observability",

- "how-to/openlit-observability"

+ "how-to/openlit-observability",

+ "how-to/portkey-observability"

]

},

{

diff --git a/src/crewai/crew.py b/src/crewai/crew.py

index d488783ea..c01a89280 100644

--- a/src/crewai/crew.py

+++ b/src/crewai/crew.py

@@ -726,11 +726,7 @@ class Crew(BaseModel):

# Determine which tools to use - task tools take precedence over agent tools

tools_for_task = task.tools or agent_to_use.tools or []

- tools_for_task = self._prepare_tools(

- agent_to_use,

- task,

- tools_for_task

- )

+ tools_for_task = self._prepare_tools(agent_to_use, task, tools_for_task)

self._log_task_start(task, agent_to_use.role)

@@ -797,14 +793,18 @@ class Crew(BaseModel):

return skipped_task_output

return None

- def _prepare_tools(self, agent: BaseAgent, task: Task, tools: List[Tool]) -> List[Tool]:

+ def _prepare_tools(

+ self, agent: BaseAgent, task: Task, tools: List[Tool]

+ ) -> List[Tool]:

# Add delegation tools if agent allows delegation

if agent.allow_delegation:

if self.process == Process.hierarchical:

if self.manager_agent:

tools = self._update_manager_tools(task, tools)

else:

- raise ValueError("Manager agent is required for hierarchical process.")

+ raise ValueError(

+ "Manager agent is required for hierarchical process."

+ )

elif agent and agent.allow_delegation:

tools = self._add_delegation_tools(task, tools)

@@ -823,7 +823,9 @@ class Crew(BaseModel):

return self.manager_agent

return task.agent

- def _merge_tools(self, existing_tools: List[Tool], new_tools: List[Tool]) -> List[Tool]:

+ def _merge_tools(

+ self, existing_tools: List[Tool], new_tools: List[Tool]

+ ) -> List[Tool]:

"""Merge new tools into existing tools list, avoiding duplicates by tool name."""

if not new_tools:

return existing_tools

@@ -839,7 +841,9 @@ class Crew(BaseModel):

return tools

- def _inject_delegation_tools(self, tools: List[Tool], task_agent: BaseAgent, agents: List[BaseAgent]):

+ def _inject_delegation_tools(

+ self, tools: List[Tool], task_agent: BaseAgent, agents: List[BaseAgent]

+ ):

delegation_tools = task_agent.get_delegation_tools(agents)

return self._merge_tools(tools, delegation_tools)

@@ -856,7 +860,9 @@ class Crew(BaseModel):

if len(self.agents) > 1 and len(agents_for_delegation) > 0 and task.agent:

if not tools:

tools = []

- tools = self._inject_delegation_tools(tools, task.agent, agents_for_delegation)

+ tools = self._inject_delegation_tools(

+ tools, task.agent, agents_for_delegation

+ )

return tools

def _log_task_start(self, task: Task, role: str = "None"):

@@ -870,7 +876,9 @@ class Crew(BaseModel):

if task.agent:

tools = self._inject_delegation_tools(tools, task.agent, [task.agent])

else:

- tools = self._inject_delegation_tools(tools, self.manager_agent, self.agents)

+ tools = self._inject_delegation_tools(

+ tools, self.manager_agent, self.agents

+ )

return tools

def _get_context(self, task: Task, task_outputs: List[TaskOutput]):
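The `_merge_tools` docstring in the `crew.py` diff above says tools are merged "avoiding duplicates by tool name", but the method body falls outside the hunks shown. The sketch below illustrates one way such a merge could behave, assuming a new tool replaces any existing tool with the same name. `SimpleTool` and `merge_tools` are hypothetical stand-ins, not the actual CrewAI classes or method.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SimpleTool:
    """Hypothetical stand-in for a tool; only the name matters here."""
    name: str
    source: str = "agent"


def merge_tools(existing_tools: List[SimpleTool], new_tools: List[SimpleTool]) -> List[SimpleTool]:
    """Merge new tools into the existing list, de-duplicating by tool name."""
    if not new_tools:
        return existing_tools
    new_names = {tool.name for tool in new_tools}
    # Keep existing tools whose names do not clash, then append the new ones.
    merged = [tool for tool in existing_tools if tool.name not in new_names]
    merged.extend(new_tools)
    return merged


existing = [SimpleTool("search"), SimpleTool("delegate", source="agent")]
incoming = [SimpleTool("delegate", source="manager")]
print([(t.name, t.source) for t in merge_tools(existing, incoming)])
```

Under this assumption the incoming `delegate` tool wins over the existing one, so delegation tools injected for a task are never listed twice.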

diff --git a/src/crewai/llm.py b/src/crewai/llm.py

index 5d6a0ccf5..bdac7080a 100644

--- a/src/crewai/llm.py

+++ b/src/crewai/llm.py

@@ -6,8 +6,10 @@ import warnings

from contextlib import contextmanager

from typing import Any, Dict, List, Optional, Union

-import litellm

-from litellm import get_supported_openai_params

+with warnings.catch_warnings():

+ warnings.simplefilter("ignore", UserWarning)

+ import litellm

+ from litellm import get_supported_openai_params

from crewai.utilities.exceptions.context_window_exceeding_exception import (

LLMContextLengthExceededException,

@@ -138,7 +140,7 @@ class LLM:

self.kwargs = kwargs

litellm.drop_params = True

- litellm.set_verbose = False

+

self.set_callbacks(callbacks)

self.set_env_callbacks()

diff --git a/src/crewai/utilities/internal_instructor.py b/src/crewai/utilities/internal_instructor.py

index 13fe5a19f..a3206ba15 100644

--- a/src/crewai/utilities/internal_instructor.py

+++ b/src/crewai/utilities/internal_instructor.py

@@ -1,3 +1,4 @@

+import warnings

from typing import Any, Optional, Type

@@ -25,9 +26,10 @@ class InternalInstructor:

if self.agent and not self.llm:

self.llm = self.agent.function_calling_llm or self.agent.llm

- # Lazy import

- import instructor

- from litellm import completion

+ with warnings.catch_warnings():

+ warnings.simplefilter("ignore", UserWarning)

+ import instructor

+ from litellm import completion

self._client = instructor.from_litellm(

completion,

diff --git a/src/crewai/utilities/token_counter_callback.py b/src/crewai/utilities/token_counter_callback.py

index 06ad15022..46c7c68f9 100644

--- a/src/crewai/utilities/token_counter_callback.py

+++ b/src/crewai/utilities/token_counter_callback.py

@@ -1,3 +1,5 @@

+import warnings

+

from litellm.integrations.custom_logger import CustomLogger

from litellm.types.utils import Usage

@@ -12,11 +14,13 @@ class TokenCalcHandler(CustomLogger):

if self.token_cost_process is None:

return

- usage: Usage = response_obj["usage"]

- self.token_cost_process.sum_successful_requests(1)

- self.token_cost_process.sum_prompt_tokens(usage.prompt_tokens)

- self.token_cost_process.sum_completion_tokens(usage.completion_tokens)

- if usage.prompt_tokens_details:

- self.token_cost_process.sum_cached_prompt_tokens(

- usage.prompt_tokens_details.cached_tokens

- )

+ with warnings.catch_warnings():

+ warnings.simplefilter("ignore", UserWarning)

+ usage: Usage = response_obj["usage"]

+ self.token_cost_process.sum_successful_requests(1)

+ self.token_cost_process.sum_prompt_tokens(usage.prompt_tokens)

+ self.token_cost_process.sum_completion_tokens(usage.completion_tokens)

+ if usage.prompt_tokens_details:

+ self.token_cost_process.sum_cached_prompt_tokens(

+ usage.prompt_tokens_details.cached_tokens

+ )
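The `llm.py`, `internal_instructor.py`, and `token_counter_callback.py` changes above all wrap code in `warnings.catch_warnings()` with `simplefilter("ignore", UserWarning)`. The following standalone sketch shows the scoping behaviour that pattern relies on: the filter applies only inside the `with` block and the previous filters are restored on exit. The `noisy` and `call_quietly` functions are hypothetical examples, not part of CrewAI.

```python
import warnings


def noisy() -> int:
    """Hypothetical function that emits a UserWarning when called."""
    warnings.warn("this dependency is noisy", UserWarning)
    return 42


def call_quietly() -> int:
    """Call noisy() with UserWarning suppressed for the duration of the call."""
    with warnings.catch_warnings():
        # The filter change is local to this block; prior filters return on exit.
        warnings.simplefilter("ignore", UserWarning)
        return noisy()


if __name__ == "__main__":
    with warnings.catch_warnings(record=True) as caught:
        warnings.simplefilter("always")
        call_quietly()
        print(len(caught))  # no warning recorded inside the suppressed call
        noisy()
        print(len(caught))  # the unsuppressed call is recorded
```

One caveat worth keeping in mind when reviewing these changes: the Python documentation notes that `catch_warnings` modifies global state and is not thread-safe, so suppression inside a callback may briefly affect other threads.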

diff --git a/tests/agent_test.py b/tests/agent_test.py

index 6879a4519..c490a5e13 100644

--- a/tests/agent_test.py

+++ b/tests/agent_test.py

@@ -1445,44 +1445,43 @@ def test_llm_call_with_all_attributes():

@pytest.mark.vcr(filter_headers=["authorization"])

-def test_agent_with_ollama_gemma():

+def test_agent_with_ollama_llama3():

agent = Agent(

role="test role",

goal="test goal",

backstory="test backstory",

- llm=LLM(

- model="ollama/gemma2:latest",

- base_url="http://localhost:8080",

- ),

+ llm=LLM(model="ollama/llama3.2:3b", base_url="http://localhost:11434"),

)

assert isinstance(agent.llm, LLM)

- assert agent.llm.model == "ollama/gemma2:latest"

- assert agent.llm.base_url == "http://localhost:8080"

+ assert agent.llm.model == "ollama/llama3.2:3b"

+ assert agent.llm.base_url == "http://localhost:11434"

- task = "Respond in 20 words. Who are you?"

+ task = "Respond in 20 words. Which model are you?"

response = agent.llm.call([{"role": "user", "content": task}])

assert response

assert len(response.split()) <= 25 # Allow a little flexibility in word count

- assert "Gemma" in response or "AI" in response or "language model" in response

+ assert "Llama3" in response or "AI" in response or "language model" in response

@pytest.mark.vcr(filter_headers=["authorization"])

-def test_llm_call_with_ollama_gemma():

+def test_llm_call_with_ollama_llama3():

llm = LLM(

- model="ollama/gemma2:latest",

- base_url="http://localhost:8080",

+ model="ollama/llama3.2:3b",

+ base_url="http://localhost:11434",

temperature=0.7,

max_tokens=30,

)

- messages = [{"role": "user", "content": "Respond in 20 words. Who are you?"}]

+ messages = [

+ {"role": "user", "content": "Respond in 20 words. Which model are you?"}

+ ]

response = llm.call(messages)

assert response

assert len(response.split()) <= 25 # Allow a little flexibility in word count

- assert "Gemma" in response or "AI" in response or "language model" in response

+ assert "Llama3" in response or "AI" in response or "language model" in response

@pytest.mark.vcr(filter_headers=["authorization"])

@@ -1578,7 +1577,7 @@ def test_agent_execute_task_with_ollama():

role="test role",

goal="test goal",

backstory="test backstory",

- llm=LLM(model="ollama/gemma2:latest", base_url="http://localhost:8080"),

+ llm=LLM(model="ollama/llama3.2:3b", base_url="http://localhost:11434"),

)

task = Task(

diff --git a/tests/cassettes/test_agent_execute_task_with_ollama.yaml b/tests/cassettes/test_agent_execute_task_with_ollama.yaml

index 62f1fe37f..d8ecb4dde 100644

--- a/tests/cassettes/test_agent_execute_task_with_ollama.yaml

+++ b/tests/cassettes/test_agent_execute_task_with_ollama.yaml

@@ -1,42 +1,6 @@

interactions:

- request:

- body: !!binary |

- CrcCCiQKIgoMc2VydmljZS5uYW1lEhIKEGNyZXdBSS10ZWxlbWV0cnkSjgIKEgoQY3Jld2FpLnRl

- bGVtZXRyeRJoChA/Q8UW5bidCRtKvri5fOaNEgh5qLzvLvZJkioQVG9vbCBVc2FnZSBFcnJvcjAB

- OYjFVQr1TPgXQXCXhwr1TPgXShoKDmNyZXdhaV92ZXJzaW9uEggKBjAuNjEuMHoCGAGFAQABAAAS

- jQEKEChQTWQ07t26ELkZmP5RresSCHEivRGBpsP7KgpUb29sIFVzYWdlMAE5sKkbC/VM+BdB8MIc

- C/VM+BdKGgoOY3Jld2FpX3ZlcnNpb24SCAoGMC42MS4wShkKCXRvb2xfbmFtZRIMCgpkdW1teV90

- b29sSg4KCGF0dGVtcHRzEgIYAXoCGAGFAQABAAA=

- headers:

- Accept:

- - '*/*'

- Accept-Encoding:

- - gzip, deflate

- Connection:

- - keep-alive

- Content-Length:

- - '314'

- Content-Type:

- - application/x-protobuf

- User-Agent:

- - OTel-OTLP-Exporter-Python/1.27.0

- method: POST

- uri: https://telemetry.crewai.com:4319/v1/traces

- response:

- body:

- string: "\n\0"

- headers:

- Content-Length:

- - '2'

- Content-Type:

- - application/x-protobuf

- Date:

- - Tue, 24 Sep 2024 21:57:54 GMT

- status:

- code: 200

- message: OK

-- request:

- body: '{"model": "gemma2:latest", "prompt": "### System:\nYou are test role. test

+ body: '{"model": "llama3.2:3b", "prompt": "### System:\nYou are test role. test

backstory\nYour personal goal is: test goal\nTo give my best complete final

answer to the task use the exact following format:\n\nThought: I now can give

a great answer\nFinal Answer: Your final answer must be the great and the most

@@ -46,7 +10,7 @@ interactions:

explanation of AI\nyou MUST return the actual complete content as the final

answer, not a summary.\n\nBegin! This is VERY important to you, use the tools

available and give your best Final Answer, your job depends on it!\n\nThought:\n\n",

- "options": {}, "stream": false}'

+ "options": {"stop": ["\nObservation:"]}, "stream": false}'

headers:

Accept:

- '*/*'

@@ -55,26 +19,26 @@ interactions:

Connection:

- keep-alive

Content-Length:

- - '815'

+ - '839'

Content-Type:

- application/json

User-Agent:

- - python-requests/2.31.0

+ - python-requests/2.32.3

method: POST

- uri: http://localhost:8080/api/generate

+ uri: http://localhost:11434/api/generate

response:

body:

- string: '{"model":"gemma2:latest","created_at":"2024-09-24T21:57:55.835715Z","response":"Thought:

- I can explain AI in one sentence. \n\nFinal Answer: Artificial intelligence

- (AI) is the ability of computer systems to perform tasks that typically require

- human intelligence, such as learning, problem-solving, and decision-making. \n","done":true,"done_reason":"stop","context":[106,1645,108,6176,1479,235292,108,2045,708,2121,4731,235265,2121,135147,108,6922,3749,6789,603,235292,2121,6789,108,1469,2734,970,1963,3407,2048,3448,577,573,6911,1281,573,5463,2412,5920,235292,109,65366,235292,590,1490,798,2734,476,1775,3448,108,11263,10358,235292,3883,2048,3448,2004,614,573,1775,578,573,1546,3407,685,3077,235269,665,2004,614,17526,6547,235265,109,235285,44472,1281,1450,32808,235269,970,3356,12014,611,665,235341,109,6176,4926,235292,109,6846,12297,235292,36576,1212,16481,603,575,974,13060,109,1596,603,573,5246,12830,604,861,2048,3448,235292,586,974,235290,47366,15844,576,16481,108,4747,44472,2203,573,5579,3407,3381,685,573,2048,3448,235269,780,476,13367,235265,109,12694,235341,1417,603,50471,2845,577,692,235269,1281,573,8112,2506,578,2734,861,1963,14124,10358,235269,861,3356,12014,611,665,235341,109,65366,235292,109,107,108,106,2516,108,65366,235292,590,798,10200,16481,575,974,13060,235265,235248,109,11263,10358,235292,42456,17273,591,11716,235275,603,573,7374,576,6875,5188,577,3114,13333,674,15976,2817,3515,17273,235269,1582,685,6044,235269,3210,235290,60495,235269,578,4530,235290,14577,235265,139,108],"total_duration":3370959792,"load_duration":20611750,"prompt_eval_count":173,"prompt_eval_duration":688036000,"eval_count":51,"eval_duration":2660291000}'

+ string: '{"model":"llama3.2:3b","created_at":"2025-01-02T20:05:52.24992Z","response":"Final

+ Answer: Artificial Intelligence (AI) refers to the development of computer

+ systems capable of performing tasks that typically require human intelligence,

+ such as learning, problem-solving, decision-making, and perception.","done":true,"done_reason":"stop","context":[128006,9125,128007,271,38766,1303,33025,2696,25,6790,220,2366,18,271,128009,128006,882,128007,271,14711,744,512,2675,527,1296,3560,13,1296,93371,198,7927,4443,5915,374,25,1296,5915,198,1271,3041,856,1888,4686,1620,4320,311,279,3465,1005,279,4839,2768,3645,1473,85269,25,358,1457,649,3041,264,2294,4320,198,19918,22559,25,4718,1620,4320,2011,387,279,2294,323,279,1455,4686,439,3284,11,433,2011,387,15632,7633,382,40,28832,1005,1521,20447,11,856,2683,14117,389,433,2268,14711,2724,1473,5520,5546,25,83017,1148,15592,374,304,832,11914,271,2028,374,279,1755,13186,369,701,1620,4320,25,362,832,1355,18886,16540,315,15592,198,9514,28832,471,279,5150,4686,2262,439,279,1620,4320,11,539,264,12399,382,11382,0,1115,374,48174,3062,311,499,11,1005,279,7526,2561,323,3041,701,1888,13321,22559,11,701,2683,14117,389,433,2268,85269,1473,128009,128006,78191,128007,271,19918,22559,25,59294,22107,320,15836,8,19813,311,279,4500,315,6500,6067,13171,315,16785,9256,430,11383,1397,3823,11478,11,1778,439,6975,11,3575,99246,11,5597,28846,11,323,21063,13],"total_duration":1461909875,"load_duration":39886208,"prompt_eval_count":181,"prompt_eval_duration":701000000,"eval_count":39,"eval_duration":719000000}'

headers:

Content-Length:

- - '1662'

+ - '1537'

Content-Type:

- application/json; charset=utf-8

Date:

- - Tue, 24 Sep 2024 21:57:55 GMT

+ - Thu, 02 Jan 2025 20:05:52 GMT

status:

code: 200

message: OK

diff --git a/tests/cassettes/test_agent_with_ollama_gemma.yaml b/tests/cassettes/test_agent_with_ollama_gemma.yaml

deleted file mode 100644

index 86e829fbc..000000000

--- a/tests/cassettes/test_agent_with_ollama_gemma.yaml

+++ /dev/null

@@ -1,397 +0,0 @@

-interactions:

-- request:

- body: !!binary |

- CumTAQokCiIKDHNlcnZpY2UubmFtZRISChBjcmV3QUktdGVsZW1ldHJ5Er+TAQoSChBjcmV3YWku

- dGVsZW1ldHJ5EqoHChDvqD2QZooz9BkEwtbWjp4OEgjxh72KACHvZSoMQ3JldyBDcmVhdGVkMAE5

- qMhNnvBM+BdBcO9PnvBM+BdKGgoOY3Jld2FpX3ZlcnNpb24SCAoGMC42MS4wShoKDnB5dGhvbl92

- ZXJzaW9uEggKBjMuMTEuN0ouCghjcmV3X2tleRIiCiBkNTUxMTNiZTRhYTQxYmE2NDNkMzI2MDQy

- YjJmMDNmMUoxCgdjcmV3X2lkEiYKJGY4YTA1OTA1LTk0OGEtNDQ0YS04NmJmLTJiNTNiNDkyYjgy

- MkocCgxjcmV3X3Byb2Nlc3MSDAoKc2VxdWVudGlhbEoRCgtjcmV3X21lbW9yeRICEABKGgoUY3Jl

- d19udW1iZXJfb2ZfdGFza3MSAhgBShsKFWNyZXdfbnVtYmVyX29mX2FnZW50cxICGAFKxwIKC2Ny

- ZXdfYWdlbnRzErcCCrQCW3sia2V5IjogImUxNDhlNTMyMDI5MzQ5OWY4Y2ViZWE4MjZlNzI1ODJi

- IiwgImlkIjogIjg1MGJjNWUwLTk4NTctNDhkOC1iNWZlLTJmZjk2OWExYTU3YiIsICJyb2xlIjog

- InRlc3Qgcm9sZSIsICJ2ZXJib3NlPyI6IHRydWUsICJtYXhfaXRlciI6IDQsICJtYXhfcnBtIjog

- MTAsICJmdW5jdGlvbl9jYWxsaW5nX2xsbSI6ICIiLCAibGxtIjogImdwdC00byIsICJkZWxlZ2F0

- aW9uX2VuYWJsZWQ/IjogZmFsc2UsICJhbGxvd19jb2RlX2V4ZWN1dGlvbj8iOiBmYWxzZSwgIm1h

- eF9yZXRyeV9saW1pdCI6IDIsICJ0b29sc19uYW1lcyI6IFtdfV1KkAIKCmNyZXdfdGFza3MSgQIK

- /gFbeyJrZXkiOiAiNGEzMWI4NTEzM2EzYTI5NGM2ODUzZGE3NTdkNGJhZTciLCAiaWQiOiAiOTc1

- ZDgwMjItMWJkMS00NjBlLTg2NmEtYjJmZGNiYjA4ZDliIiwgImFzeW5jX2V4ZWN1dGlvbj8iOiBm

- YWxzZSwgImh1bWFuX2lucHV0PyI6IGZhbHNlLCAiYWdlbnRfcm9sZSI6ICJ0ZXN0IHJvbGUiLCAi

- YWdlbnRfa2V5IjogImUxNDhlNTMyMDI5MzQ5OWY4Y2ViZWE4MjZlNzI1ODJiIiwgInRvb2xzX25h

- bWVzIjogWyJnZXRfZmluYWxfYW5zd2VyIl19XXoCGAGFAQABAAASjgIKEP9UYSAOFQbZquSppN1j

- IeUSCAgZmXUoJKFmKgxUYXNrIENyZWF0ZWQwATloPV+e8Ez4F0GYsl+e8Ez4F0ouCghjcmV3X2tl

- eRIiCiBkNTUxMTNiZTRhYTQxYmE2NDNkMzI2MDQyYjJmMDNmMUoxCgdjcmV3X2lkEiYKJGY4YTA1

- OTA1LTk0OGEtNDQ0YS04NmJmLTJiNTNiNDkyYjgyMkouCgh0YXNrX2tleRIiCiA0YTMxYjg1MTMz

- YTNhMjk0YzY4NTNkYTc1N2Q0YmFlN0oxCgd0YXNrX2lkEiYKJDk3NWQ4MDIyLTFiZDEtNDYwZS04

- NjZhLWIyZmRjYmIwOGQ5YnoCGAGFAQABAAASkwEKEEfiywgqgiUXE3KoUbrnHDQSCGmv+iM7Wc1Z

- KgpUb29sIFVzYWdlMAE5kOybnvBM+BdBIM+cnvBM+BdKGgoOY3Jld2FpX3ZlcnNpb24SCAoGMC42

- MS4wSh8KCXRvb2xfbmFtZRISChBnZXRfZmluYWxfYW5zd2VySg4KCGF0dGVtcHRzEgIYAXoCGAGF

- AQABAAASkwEKEH7AHXpfmvwIkA45HB8YyY0SCAFRC+uJpsEZKgpUb29sIFVzYWdlMAE56PLdnvBM

- +BdBYFbfnvBM+BdKGgoOY3Jld2FpX3ZlcnNpb24SCAoGMC42MS4wSh8KCXRvb2xfbmFtZRISChBn

- ZXRfZmluYWxfYW5zd2VySg4KCGF0dGVtcHRzEgIYAXoCGAGFAQABAAASkwEKEIDKKEbYU4lcJF+a

- WsAVZwESCI+/La7oL86MKgpUb29sIFVzYWdlMAE5yIkgn/BM+BdBWGwhn/BM+BdKGgoOY3Jld2Fp

- X3ZlcnNpb24SCAoGMC42MS4wSh8KCXRvb2xfbmFtZRISChBnZXRfZmluYWxfYW5zd2VySg4KCGF0

- dGVtcHRzEgIYAXoCGAGFAQABAAASnAEKEMTZ2IhpLz6J2hJhHBQ8/M4SCEuWz+vjzYifKhNUb29s

- IFJlcGVhdGVkIFVzYWdlMAE5mAVhn/BM+BdBKOhhn/BM+BdKGgoOY3Jld2FpX3ZlcnNpb24SCAoG

- MC42MS4wSh8KCXRvb2xfbmFtZRISChBnZXRfZmluYWxfYW5zd2VySg4KCGF0dGVtcHRzEgIYAXoC

- GAGFAQABAAASkAIKED8C+t95p855kLcXs5Nnt/sSCM4XAhL6u8O8Kg5UYXNrIEV4ZWN1dGlvbjAB

- OdD8X57wTPgXQUgno5/wTPgXSi4KCGNyZXdfa2V5EiIKIGQ1NTExM2JlNGFhNDFiYTY0M2QzMjYw

- NDJiMmYwM2YxSjEKB2NyZXdfaWQSJgokZjhhMDU5MDUtOTQ4YS00NDRhLTg2YmYtMmI1M2I0OTJi

- ODIySi4KCHRhc2tfa2V5EiIKIDRhMzFiODUxMzNhM2EyOTRjNjg1M2RhNzU3ZDRiYWU3SjEKB3Rh

- c2tfaWQSJgokOTc1ZDgwMjItMWJkMS00NjBlLTg2NmEtYjJmZGNiYjA4ZDliegIYAYUBAAEAABLO

- CwoQFlnZCfbZ3Dj0L9TAE5LrLBIIoFr7BZErFNgqDENyZXcgQ3JlYXRlZDABOVhDDaDwTPgXQSg/

- D6DwTPgXShoKDmNyZXdhaV92ZXJzaW9uEggKBjAuNjEuMEoaCg5weXRob25fdmVyc2lvbhIICgYz

- LjExLjdKLgoIY3Jld19rZXkSIgogOTRjMzBkNmMzYjJhYzhmYjk0YjJkY2ZjNTcyZDBmNTlKMQoH

- Y3Jld19pZBImCiQyMzM2MzRjNi1lNmQ2LTQ5ZTYtODhhZS1lYWUxYTM5YjBlMGZKHAoMY3Jld19w

- cm9jZXNzEgwKCnNlcXVlbnRpYWxKEQoLY3Jld19tZW1vcnkSAhAAShoKFGNyZXdfbnVtYmVyX29m

- X3Rhc2tzEgIYAkobChVjcmV3X251bWJlcl9vZl9hZ2VudHMSAhgCSv4ECgtjcmV3X2FnZW50cxLu

- BArrBFt7ImtleSI6ICJlMTQ4ZTUzMjAyOTM0OTlmOGNlYmVhODI2ZTcyNTgyYiIsICJpZCI6ICI0

- MjAzZjIyYi0wNWM3LTRiNjUtODBjMS1kM2Y0YmFlNzZhNDYiLCAicm9sZSI6ICJ0ZXN0IHJvbGUi

- LCAidmVyYm9zZT8iOiB0cnVlLCAibWF4X2l0ZXIiOiAyLCAibWF4X3JwbSI6IDEwLCAiZnVuY3Rp

- b25fY2FsbGluZ19sbG0iOiAiIiwgImxsbSI6ICJncHQtNG8iLCAiZGVsZWdhdGlvbl9lbmFibGVk

- PyI6IGZhbHNlLCAiYWxsb3dfY29kZV9leGVjdXRpb24/IjogZmFsc2UsICJtYXhfcmV0cnlfbGlt

- aXQiOiAyLCAidG9vbHNfbmFtZXMiOiBbXX0sIHsia2V5IjogImU3ZThlZWE4ODZiY2I4ZjEwNDVh

- YmVlY2YxNDI1ZGI3IiwgImlkIjogImZjOTZjOTQ1LTY4ZDUtNDIxMy05NmNkLTNmYTAwNmUyZTYz

- MCIsICJyb2xlIjogInRlc3Qgcm9sZTIiLCAidmVyYm9zZT8iOiB0cnVlLCAibWF4X2l0ZXIiOiAx

- LCAibWF4X3JwbSI6IG51bGwsICJmdW5jdGlvbl9jYWxsaW5nX2xsbSI6ICIiLCAibGxtIjogImdw

- dC00byIsICJkZWxlZ2F0aW9uX2VuYWJsZWQ/IjogZmFsc2UsICJhbGxvd19jb2RlX2V4ZWN1dGlv

- bj8iOiBmYWxzZSwgIm1heF9yZXRyeV9saW1pdCI6IDIsICJ0b29sc19uYW1lcyI6IFtdfV1K/QMK

- CmNyZXdfdGFza3MS7gMK6wNbeyJrZXkiOiAiMzIyZGRhZTNiYzgwYzFkNDViODVmYTc3NTZkYjg2

- NjUiLCAiaWQiOiAiOTVjYTg4NDItNmExMi00MGQ5LWIwZDItNGI0MzYxYmJlNTZkIiwgImFzeW5j

- X2V4ZWN1dGlvbj8iOiBmYWxzZSwgImh1bWFuX2lucHV0PyI6IGZhbHNlLCAiYWdlbnRfcm9sZSI6

- ICJ0ZXN0IHJvbGUiLCAiYWdlbnRfa2V5IjogImUxNDhlNTMyMDI5MzQ5OWY4Y2ViZWE4MjZlNzI1

- ODJiIiwgInRvb2xzX25hbWVzIjogW119LCB7ImtleSI6ICI1ZTljYTdkNjRiNDIwNWJiN2M0N2Uw

- YjNmY2I1ZDIxZiIsICJpZCI6ICI5NzI5MTg2Yy1kN2JlLTRkYjQtYTk0ZS02OWU5OTk2NTI3MDAi

- LCAiYXN5bmNfZXhlY3V0aW9uPyI6IGZhbHNlLCAiaHVtYW5faW5wdXQ/IjogZmFsc2UsICJhZ2Vu

- dF9yb2xlIjogInRlc3Qgcm9sZTIiLCAiYWdlbnRfa2V5IjogImU3ZThlZWE4ODZiY2I4ZjEwNDVh

- YmVlY2YxNDI1ZGI3IiwgInRvb2xzX25hbWVzIjogWyJnZXRfZmluYWxfYW5zd2VyIl19XXoCGAGF

- AQABAAASjgIKEC/YM2OukRrSg+ZAev4VhGESCOQ5RvzSS5IEKgxUYXNrIENyZWF0ZWQwATmQJx6g

- 8Ez4F0EgjR6g8Ez4F0ouCghjcmV3X2tleRIiCiA5NGMzMGQ2YzNiMmFjOGZiOTRiMmRjZmM1NzJk

- MGY1OUoxCgdjcmV3X2lkEiYKJDIzMzYzNGM2LWU2ZDYtNDllNi04OGFlLWVhZTFhMzliMGUwZkou

- Cgh0YXNrX2tleRIiCiAzMjJkZGFlM2JjODBjMWQ0NWI4NWZhNzc1NmRiODY2NUoxCgd0YXNrX2lk

- EiYKJDk1Y2E4ODQyLTZhMTItNDBkOS1iMGQyLTRiNDM2MWJiZTU2ZHoCGAGFAQABAAASkAIKEHqZ

- L8s3clXQyVTemNcTCcQSCA0tzK95agRQKg5UYXNrIEV4ZWN1dGlvbjABOQC8HqDwTPgXQdgNSqDw

- TPgXSi4KCGNyZXdfa2V5EiIKIDk0YzMwZDZjM2IyYWM4ZmI5NGIyZGNmYzU3MmQwZjU5SjEKB2Ny

- ZXdfaWQSJgokMjMzNjM0YzYtZTZkNi00OWU2LTg4YWUtZWFlMWEzOWIwZTBmSi4KCHRhc2tfa2V5

- EiIKIDMyMmRkYWUzYmM4MGMxZDQ1Yjg1ZmE3NzU2ZGI4NjY1SjEKB3Rhc2tfaWQSJgokOTVjYTg4

- NDItNmExMi00MGQ5LWIwZDItNGI0MzYxYmJlNTZkegIYAYUBAAEAABKOAgoQjhKzodMUmQ8NWtdy

- Uj99whIIBsGtAymZibwqDFRhc2sgQ3JlYXRlZDABOXjVVaDwTPgXQXhSVqDwTPgXSi4KCGNyZXdf

- a2V5EiIKIDk0YzMwZDZjM2IyYWM4ZmI5NGIyZGNmYzU3MmQwZjU5SjEKB2NyZXdfaWQSJgokMjMz

- NjM0YzYtZTZkNi00OWU2LTg4YWUtZWFlMWEzOWIwZTBmSi4KCHRhc2tfa2V5EiIKIDVlOWNhN2Q2

- NGI0MjA1YmI3YzQ3ZTBiM2ZjYjVkMjFmSjEKB3Rhc2tfaWQSJgokOTcyOTE4NmMtZDdiZS00ZGI0

- LWE5NGUtNjllOTk5NjUyNzAwegIYAYUBAAEAABKTAQoQx5IUsjAFMGNUaz5MHy20OBIIzl2tr25P

- LL8qClRvb2wgVXNhZ2UwATkgt5Sg8Ez4F0GwFpag8Ez4F0oaCg5jcmV3YWlfdmVyc2lvbhIICgYw

- LjYxLjBKHwoJdG9vbF9uYW1lEhIKEGdldF9maW5hbF9hbnN3ZXJKDgoIYXR0ZW1wdHMSAhgBegIY

- AYUBAAEAABKQAgoQEkfcfCrzTYIM6GQXhknlexIIa/oxeT78OL8qDlRhc2sgRXhlY3V0aW9uMAE5

- WIFWoPBM+BdBuL/GoPBM+BdKLgoIY3Jld19rZXkSIgogOTRjMzBkNmMzYjJhYzhmYjk0YjJkY2Zj

- NTcyZDBmNTlKMQoHY3Jld19pZBImCiQyMzM2MzRjNi1lNmQ2LTQ5ZTYtODhhZS1lYWUxYTM5YjBl

- MGZKLgoIdGFza19rZXkSIgogNWU5Y2E3ZDY0YjQyMDViYjdjNDdlMGIzZmNiNWQyMWZKMQoHdGFz

- a19pZBImCiQ5NzI5MTg2Yy1kN2JlLTRkYjQtYTk0ZS02OWU5OTk2NTI3MDB6AhgBhQEAAQAAEqwH

- ChDrKBdEe+Z5276g9fgg6VzjEgiJfnDwsv1SrCoMQ3JldyBDcmVhdGVkMAE5MLQYofBM+BdBQFIa

- ofBM+BdKGgoOY3Jld2FpX3ZlcnNpb24SCAoGMC42MS4wShoKDnB5dGhvbl92ZXJzaW9uEggKBjMu

- MTEuN0ouCghjcmV3X2tleRIiCiA3M2FhYzI4NWU2NzQ2NjY3Zjc1MTQ3NjcwMDAzNDExMEoxCgdj

- cmV3X2lkEiYKJDg0NDY0YjhlLTRiZjctNDRiYy05MmUxLWE4ZDE1NGZlNWZkN0ocCgxjcmV3X3By

- b2Nlc3MSDAoKc2VxdWVudGlhbEoRCgtjcmV3X21lbW9yeRICEABKGgoUY3Jld19udW1iZXJfb2Zf

- dGFza3MSAhgBShsKFWNyZXdfbnVtYmVyX29mX2FnZW50cxICGAFKyQIKC2NyZXdfYWdlbnRzErkC

- CrYCW3sia2V5IjogImUxNDhlNTMyMDI5MzQ5OWY4Y2ViZWE4MjZlNzI1ODJiIiwgImlkIjogIjk4

- YmIwNGYxLTBhZGMtNGZiNC04YzM2LWM3M2Q1MzQ1ZGRhZCIsICJyb2xlIjogInRlc3Qgcm9sZSIs

- ICJ2ZXJib3NlPyI6IHRydWUsICJtYXhfaXRlciI6IDEsICJtYXhfcnBtIjogbnVsbCwgImZ1bmN0

- aW9uX2NhbGxpbmdfbGxtIjogIiIsICJsbG0iOiAiZ3B0LTRvIiwgImRlbGVnYXRpb25fZW5hYmxl

- ZD8iOiBmYWxzZSwgImFsbG93X2NvZGVfZXhlY3V0aW9uPyI6IGZhbHNlLCAibWF4X3JldHJ5X2xp

- bWl0IjogMiwgInRvb2xzX25hbWVzIjogW119XUqQAgoKY3Jld190YXNrcxKBAgr+AVt7ImtleSI6

- ICJmN2E5ZjdiYjFhZWU0YjZlZjJjNTI2ZDBhOGMyZjJhYyIsICJpZCI6ICIxZjRhYzJhYS03YmQ4

- LTQ1NWQtODgyMC1jMzZmMjJjMDY4MzciLCAiYXN5bmNfZXhlY3V0aW9uPyI6IGZhbHNlLCAiaHVt

- YW5faW5wdXQ/IjogZmFsc2UsICJhZ2VudF9yb2xlIjogInRlc3Qgcm9sZSIsICJhZ2VudF9rZXki

- OiAiZTE0OGU1MzIwMjkzNDk5ZjhjZWJlYTgyNmU3MjU4MmIiLCAidG9vbHNfbmFtZXMiOiBbImdl

- dF9maW5hbF9hbnN3ZXIiXX1degIYAYUBAAEAABKOAgoQ0/vrakH7zD0uSvmVBUV8lxIIYe4YKcYG

- hNgqDFRhc2sgQ3JlYXRlZDABOdBXKqHwTPgXQcCtKqHwTPgXSi4KCGNyZXdfa2V5EiIKIDczYWFj

- Mjg1ZTY3NDY2NjdmNzUxNDc2NzAwMDM0MTEwSjEKB2NyZXdfaWQSJgokODQ0NjRiOGUtNGJmNy00

- NGJjLTkyZTEtYThkMTU0ZmU1ZmQ3Si4KCHRhc2tfa2V5EiIKIGY3YTlmN2JiMWFlZTRiNmVmMmM1

- MjZkMGE4YzJmMmFjSjEKB3Rhc2tfaWQSJgokMWY0YWMyYWEtN2JkOC00NTVkLTg4MjAtYzM2ZjIy

- YzA2ODM3egIYAYUBAAEAABKkAQoQ5GDzHNlSdlcVDdxsI3abfRIIhYu8fZS3iA4qClRvb2wgVXNh

- Z2UwATnIi2eh8Ez4F0FYbmih8Ez4F0oaCg5jcmV3YWlfdmVyc2lvbhIICgYwLjYxLjBKHwoJdG9v

- bF9uYW1lEhIKEGdldF9maW5hbF9hbnN3ZXJKDgoIYXR0ZW1wdHMSAhgBSg8KA2xsbRIICgZncHQt

- NG96AhgBhQEAAQAAEpACChAy85Jfr/EEIe1THU8koXoYEgjlkNn7xfysjioOVGFzayBFeGVjdXRp

- b24wATm42Cqh8Ez4F0GgxZah8Ez4F0ouCghjcmV3X2tleRIiCiA3M2FhYzI4NWU2NzQ2NjY3Zjc1

- MTQ3NjcwMDAzNDExMEoxCgdjcmV3X2lkEiYKJDg0NDY0YjhlLTRiZjctNDRiYy05MmUxLWE4ZDE1

- NGZlNWZkN0ouCgh0YXNrX2tleRIiCiBmN2E5ZjdiYjFhZWU0YjZlZjJjNTI2ZDBhOGMyZjJhY0ox

- Cgd0YXNrX2lkEiYKJDFmNGFjMmFhLTdiZDgtNDU1ZC04ODIwLWMzNmYyMmMwNjgzN3oCGAGFAQAB

- AAASrAcKEG0ZVq5Ww+/A0wOY3HmKgq4SCMe0ooxqjqBlKgxDcmV3IENyZWF0ZWQwATlwmISi8Ez4

- F0HYUYai8Ez4F0oaCg5jcmV3YWlfdmVyc2lvbhIICgYwLjYxLjBKGgoOcHl0aG9uX3ZlcnNpb24S

- CAoGMy4xMS43Si4KCGNyZXdfa2V5EiIKIGQ1NTExM2JlNGFhNDFiYTY0M2QzMjYwNDJiMmYwM2Yx

- SjEKB2NyZXdfaWQSJgokNzkyMWVlYmItMWI4NS00MzNjLWIxMDAtZDU4MmMyOTg5MzBkShwKDGNy

- ZXdfcHJvY2VzcxIMCgpzZXF1ZW50aWFsShEKC2NyZXdfbWVtb3J5EgIQAEoaChRjcmV3X251bWJl

- cl9vZl90YXNrcxICGAFKGwoVY3Jld19udW1iZXJfb2ZfYWdlbnRzEgIYAUrJAgoLY3Jld19hZ2Vu

- dHMSuQIKtgJbeyJrZXkiOiAiZTE0OGU1MzIwMjkzNDk5ZjhjZWJlYTgyNmU3MjU4MmIiLCAiaWQi

- OiAiZmRiZDI1MWYtYzUwOC00YmFhLTkwNjctN2U5YzQ2ZGZiZTJhIiwgInJvbGUiOiAidGVzdCBy

- b2xlIiwgInZlcmJvc2U/IjogdHJ1ZSwgIm1heF9pdGVyIjogNiwgIm1heF9ycG0iOiBudWxsLCAi

- ZnVuY3Rpb25fY2FsbGluZ19sbG0iOiAiIiwgImxsbSI6ICJncHQtNG8iLCAiZGVsZWdhdGlvbl9l

- bmFibGVkPyI6IGZhbHNlLCAiYWxsb3dfY29kZV9leGVjdXRpb24/IjogZmFsc2UsICJtYXhfcmV0

- cnlfbGltaXQiOiAyLCAidG9vbHNfbmFtZXMiOiBbXX1dSpACCgpjcmV3X3Rhc2tzEoECCv4BW3si

- a2V5IjogIjRhMzFiODUxMzNhM2EyOTRjNjg1M2RhNzU3ZDRiYWU3IiwgImlkIjogIjA2YWFmM2Y1

- LTE5ODctNDAxYS05Yzk0LWY3ZjM1YmQzMDg3OSIsICJhc3luY19leGVjdXRpb24/IjogZmFsc2Us

- ICJodW1hbl9pbnB1dD8iOiBmYWxzZSwgImFnZW50X3JvbGUiOiAidGVzdCByb2xlIiwgImFnZW50

- X2tleSI6ICJlMTQ4ZTUzMjAyOTM0OTlmOGNlYmVhODI2ZTcyNTgyYiIsICJ0b29sc19uYW1lcyI6

- IFsiZ2V0X2ZpbmFsX2Fuc3dlciJdfV16AhgBhQEAAQAAEo4CChDT+zPZHwfacDilkzaZJ9uGEgip

- Kr5r62JB+ioMVGFzayBDcmVhdGVkMAE56KeTovBM+BdB8PmTovBM+BdKLgoIY3Jld19rZXkSIgog

- ZDU1MTEzYmU0YWE0MWJhNjQzZDMyNjA0MmIyZjAzZjFKMQoHY3Jld19pZBImCiQ3OTIxZWViYi0x

- Yjg1LTQzM2MtYjEwMC1kNTgyYzI5ODkzMGRKLgoIdGFza19rZXkSIgogNGEzMWI4NTEzM2EzYTI5

- NGM2ODUzZGE3NTdkNGJhZTdKMQoHdGFza19pZBImCiQwNmFhZjNmNS0xOTg3LTQwMWEtOWM5NC1m

- N2YzNWJkMzA4Nzl6AhgBhQEAAQAAEpMBChCl85ZcL2Fa0N5QTl6EsIfnEghyDo3bxT+AkyoKVG9v

- bCBVc2FnZTABOVBA2aLwTPgXQYAy2qLwTPgXShoKDmNyZXdhaV92ZXJzaW9uEggKBjAuNjEuMEof

- Cgl0b29sX25hbWUSEgoQZ2V0X2ZpbmFsX2Fuc3dlckoOCghhdHRlbXB0cxICGAF6AhgBhQEAAQAA

- EpwBChB22uwKhaur9zmeoeEMaRKzEgjrtSEzMbRdIioTVG9vbCBSZXBlYXRlZCBVc2FnZTABOQga

- C6PwTPgXQaDRC6PwTPgXShoKDmNyZXdhaV92ZXJzaW9uEggKBjAuNjEuMEofCgl0b29sX25hbWUS

- EgoQZ2V0X2ZpbmFsX2Fuc3dlckoOCghhdHRlbXB0cxICGAF6AhgBhQEAAQAAEpMBChArAfcRpE+W

- 02oszyzccbaWEghTAO9J3zq/kyoKVG9vbCBVc2FnZTABORBRTqPwTPgXQegnT6PwTPgXShoKDmNy

- ZXdhaV92ZXJzaW9uEggKBjAuNjEuMEofCgl0b29sX25hbWUSEgoQZ2V0X2ZpbmFsX2Fuc3dlckoO

- CghhdHRlbXB0cxICGAF6AhgBhQEAAQAAEpwBChBdtM3p3aqT7wTGaXi6el/4Egie6lFQpa+AfioT

- VG9vbCBSZXBlYXRlZCBVc2FnZTABOdBg2KPwTPgXQehW2aPwTPgXShoKDmNyZXdhaV92ZXJzaW9u

- EggKBjAuNjEuMEofCgl0b29sX25hbWUSEgoQZ2V0X2ZpbmFsX2Fuc3dlckoOCghhdHRlbXB0cxIC

- GAF6AhgBhQEAAQAAEpMBChDq4OuaUKkNoi6jlMyahPJpEgg1MFDHktBxNSoKVG9vbCBVc2FnZTAB

- ORD/K6TwTPgXQZgMLaTwTPgXShoKDmNyZXdhaV92ZXJzaW9uEggKBjAuNjEuMEofCgl0b29sX25h

- bWUSEgoQZ2V0X2ZpbmFsX2Fuc3dlckoOCghhdHRlbXB0cxICGAF6AhgBhQEAAQAAEpACChBhvTmu

- QWP+bx9JMmGpt+w5Egh1J17yki7s8ioOVGFzayBFeGVjdXRpb24wATnoJJSi8Ez4F0HwNX6k8Ez4

- F0ouCghjcmV3X2tleRIiCiBkNTUxMTNiZTRhYTQxYmE2NDNkMzI2MDQyYjJmMDNmMUoxCgdjcmV3

- X2lkEiYKJDc5MjFlZWJiLTFiODUtNDMzYy1iMTAwLWQ1ODJjMjk4OTMwZEouCgh0YXNrX2tleRIi

- CiA0YTMxYjg1MTMzYTNhMjk0YzY4NTNkYTc1N2Q0YmFlN0oxCgd0YXNrX2lkEiYKJDA2YWFmM2Y1

- LTE5ODctNDAxYS05Yzk0LWY3ZjM1YmQzMDg3OXoCGAGFAQABAAASrg0KEOJZEqiJ7LTTX/J+tuLR

- stQSCHKjy4tIcmKEKgxDcmV3IENyZWF0ZWQwATmIEuGk8Ez4F0FYDuOk8Ez4F0oaCg5jcmV3YWlf

- dmVyc2lvbhIICgYwLjYxLjBKGgoOcHl0aG9uX3ZlcnNpb24SCAoGMy4xMS43Si4KCGNyZXdfa2V5

- EiIKIDExMWI4NzJkOGYwY2Y3MDNmMmVmZWYwNGNmM2FjNzk4SjEKB2NyZXdfaWQSJgokYWFiYmU5

- MmQtYjg3NC00NTZmLWE0NzAtM2FmMDc4ZTdjYThlShwKDGNyZXdfcHJvY2VzcxIMCgpzZXF1ZW50

- aWFsShEKC2NyZXdfbWVtb3J5EgIQAEoaChRjcmV3X251bWJlcl9vZl90YXNrcxICGANKGwoVY3Jl

- d19udW1iZXJfb2ZfYWdlbnRzEgIYAkqEBQoLY3Jld19hZ2VudHMS9AQK8QRbeyJrZXkiOiAiZTE0

- OGU1MzIwMjkzNDk5ZjhjZWJlYTgyNmU3MjU4MmIiLCAiaWQiOiAiZmYzOTE0OGEtZWI2NS00Nzkx

- LWI3MTMtM2Q4ZmE1YWQ5NTJlIiwgInJvbGUiOiAidGVzdCByb2xlIiwgInZlcmJvc2U/IjogZmFs

- c2UsICJtYXhfaXRlciI6IDE1LCAibWF4X3JwbSI6IG51bGwsICJmdW5jdGlvbl9jYWxsaW5nX2xs

- bSI6ICIiLCAibGxtIjogImdwdC00byIsICJkZWxlZ2F0aW9uX2VuYWJsZWQ/IjogZmFsc2UsICJh

- bGxvd19jb2RlX2V4ZWN1dGlvbj8iOiBmYWxzZSwgIm1heF9yZXRyeV9saW1pdCI6IDIsICJ0b29s

- c19uYW1lcyI6IFtdfSwgeyJrZXkiOiAiZTdlOGVlYTg4NmJjYjhmMTA0NWFiZWVjZjE0MjVkYjci

- LCAiaWQiOiAiYzYyNDJmNDMtNmQ2Mi00N2U4LTliYmMtNjM0ZDQwYWI4YTQ2IiwgInJvbGUiOiAi

- dGVzdCByb2xlMiIsICJ2ZXJib3NlPyI6IGZhbHNlLCAibWF4X2l0ZXIiOiAxNSwgIm1heF9ycG0i

- OiBudWxsLCAiZnVuY3Rpb25fY2FsbGluZ19sbG0iOiAiIiwgImxsbSI6ICJncHQtNG8iLCAiZGVs

- ZWdhdGlvbl9lbmFibGVkPyI6IGZhbHNlLCAiYWxsb3dfY29kZV9leGVjdXRpb24/IjogZmFsc2Us

- ICJtYXhfcmV0cnlfbGltaXQiOiAyLCAidG9vbHNfbmFtZXMiOiBbXX1dStcFCgpjcmV3X3Rhc2tz

- EsgFCsUFW3sia2V5IjogIjMyMmRkYWUzYmM4MGMxZDQ1Yjg1ZmE3NzU2ZGI4NjY1IiwgImlkIjog

- IjRmZDZhZDdiLTFjNWMtNDE1ZC1hMWQ4LTgwYzExZGNjMTY4NiIsICJhc3luY19leGVjdXRpb24/

- IjogZmFsc2UsICJodW1hbl9pbnB1dD8iOiBmYWxzZSwgImFnZW50X3JvbGUiOiAidGVzdCByb2xl

- IiwgImFnZW50X2tleSI6ICJlMTQ4ZTUzMjAyOTM0OTlmOGNlYmVhODI2ZTcyNTgyYiIsICJ0b29s

- c19uYW1lcyI6IFtdfSwgeyJrZXkiOiAiY2M0ODc2ZjZlNTg4ZTcxMzQ5YmJkM2E2NTg4OGMzZTki

- LCAiaWQiOiAiOTFlYWFhMWMtMWI4ZC00MDcxLTk2ZmQtM2QxZWVkMjhjMzZjIiwgImFzeW5jX2V4

- ZWN1dGlvbj8iOiBmYWxzZSwgImh1bWFuX2lucHV0PyI6IGZhbHNlLCAiYWdlbnRfcm9sZSI6ICJ0

- ZXN0IHJvbGUiLCAiYWdlbnRfa2V5IjogImUxNDhlNTMyMDI5MzQ5OWY4Y2ViZWE4MjZlNzI1ODJi

- IiwgInRvb2xzX25hbWVzIjogW119LCB7ImtleSI6ICJlMGIxM2UxMGQ3YTE0NmRjYzRjNDg4ZmNm

- OGQ3NDhhMCIsICJpZCI6ICI4NjExZjhjZS1jNDVlLTQ2OTgtYWEyMS1jMGJkNzdhOGY2ZWYiLCAi

- YXN5bmNfZXhlY3V0aW9uPyI6IGZhbHNlLCAiaHVtYW5faW5wdXQ/IjogZmFsc2UsICJhZ2VudF9y

- b2xlIjogInRlc3Qgcm9sZTIiLCAiYWdlbnRfa2V5IjogImU3ZThlZWE4ODZiY2I4ZjEwNDVhYmVl

- Y2YxNDI1ZGI3IiwgInRvb2xzX25hbWVzIjogW119XXoCGAGFAQABAAASjgIKEMbX6YsWK7RRf4L1

- NBRKD6cSCFLJiNmspsyjKgxUYXNrIENyZWF0ZWQwATnonPGk8Ez4F0EotvKk8Ez4F0ouCghjcmV3

- X2tleRIiCiAxMTFiODcyZDhmMGNmNzAzZjJlZmVmMDRjZjNhYzc5OEoxCgdjcmV3X2lkEiYKJGFh

- YmJlOTJkLWI4NzQtNDU2Zi1hNDcwLTNhZjA3OGU3Y2E4ZUouCgh0YXNrX2tleRIiCiAzMjJkZGFl

- M2JjODBjMWQ0NWI4NWZhNzc1NmRiODY2NUoxCgd0YXNrX2lkEiYKJDRmZDZhZDdiLTFjNWMtNDE1

- ZC1hMWQ4LTgwYzExZGNjMTY4NnoCGAGFAQABAAASkAIKEM9JnUNanFbE9AtnSxqA7H8SCBWlG0WJ

- sMgKKg5UYXNrIEV4ZWN1dGlvbjABOfDo8qTwTPgXQWhEH6XwTPgXSi4KCGNyZXdfa2V5EiIKIDEx

- MWI4NzJkOGYwY2Y3MDNmMmVmZWYwNGNmM2FjNzk4SjEKB2NyZXdfaWQSJgokYWFiYmU5MmQtYjg3

- NC00NTZmLWE0NzAtM2FmMDc4ZTdjYThlSi4KCHRhc2tfa2V5EiIKIDMyMmRkYWUzYmM4MGMxZDQ1

- Yjg1ZmE3NzU2ZGI4NjY1SjEKB3Rhc2tfaWQSJgokNGZkNmFkN2ItMWM1Yy00MTVkLWExZDgtODBj

- MTFkY2MxNjg2egIYAYUBAAEAABKOAgoQaQALCJNe5ByN4Wu7FE0kABIIYW/UfVfnYscqDFRhc2sg

- Q3JlYXRlZDABOWhzLKXwTPgXQSD8LKXwTPgXSi4KCGNyZXdfa2V5EiIKIDExMWI4NzJkOGYwY2Y3

- MDNmMmVmZWYwNGNmM2FjNzk4SjEKB2NyZXdfaWQSJgokYWFiYmU5MmQtYjg3NC00NTZmLWE0NzAt

- M2FmMDc4ZTdjYThlSi4KCHRhc2tfa2V5EiIKIGNjNDg3NmY2ZTU4OGU3MTM0OWJiZDNhNjU4ODhj

- M2U5SjEKB3Rhc2tfaWQSJgokOTFlYWFhMWMtMWI4ZC00MDcxLTk2ZmQtM2QxZWVkMjhjMzZjegIY

- AYUBAAEAABKQAgoQpPfkgFlpIsR/eN2zn+x3MRIILoWF4/HvceAqDlRhc2sgRXhlY3V0aW9uMAE5

- GCctpfBM+BdBQLNapfBM+BdKLgoIY3Jld19rZXkSIgogMTExYjg3MmQ4ZjBjZjcwM2YyZWZlZjA0

- Y2YzYWM3OThKMQoHY3Jld19pZBImCiRhYWJiZTkyZC1iODc0LTQ1NmYtYTQ3MC0zYWYwNzhlN2Nh

- OGVKLgoIdGFza19rZXkSIgogY2M0ODc2ZjZlNTg4ZTcxMzQ5YmJkM2E2NTg4OGMzZTlKMQoHdGFz

- a19pZBImCiQ5MWVhYWExYy0xYjhkLTQwNzEtOTZmZC0zZDFlZWQyOGMzNmN6AhgBhQEAAQAAEo4C

- ChCdvXmXZRltDxEwZx2XkhWhEghoKdomHHhLGSoMVGFzayBDcmVhdGVkMAE54HpmpfBM+BdB4Pdm

- pfBM+BdKLgoIY3Jld19rZXkSIgogMTExYjg3MmQ4ZjBjZjcwM2YyZWZlZjA0Y2YzYWM3OThKMQoH

- Y3Jld19pZBImCiRhYWJiZTkyZC1iODc0LTQ1NmYtYTQ3MC0zYWYwNzhlN2NhOGVKLgoIdGFza19r

- ZXkSIgogZTBiMTNlMTBkN2ExNDZkY2M0YzQ4OGZjZjhkNzQ4YTBKMQoHdGFza19pZBImCiQ4NjEx

- ZjhjZS1jNDVlLTQ2OTgtYWEyMS1jMGJkNzdhOGY2ZWZ6AhgBhQEAAQAAEpACChAIvs/XQL53haTt

- NV8fk6geEgicgSOcpcYulyoOVGFzayBFeGVjdXRpb24wATnYImel8Ez4F0Gw5ZSl8Ez4F0ouCghj

- cmV3X2tleRIiCiAxMTFiODcyZDhmMGNmNzAzZjJlZmVmMDRjZjNhYzc5OEoxCgdjcmV3X2lkEiYK

- JGFhYmJlOTJkLWI4NzQtNDU2Zi1hNDcwLTNhZjA3OGU3Y2E4ZUouCgh0YXNrX2tleRIiCiBlMGIx

- M2UxMGQ3YTE0NmRjYzRjNDg4ZmNmOGQ3NDhhMEoxCgd0YXNrX2lkEiYKJDg2MTFmOGNlLWM0NWUt

- NDY5OC1hYTIxLWMwYmQ3N2E4ZjZlZnoCGAGFAQABAAASvAcKEARTPn0s+U/k8GclUc+5rRoSCHF3

- KCh8OS0FKgxDcmV3IENyZWF0ZWQwATlo+Pul8Ez4F0EQ0f2l8Ez4F0oaCg5jcmV3YWlfdmVyc2lv

- bhIICgYwLjYxLjBKGgoOcHl0aG9uX3ZlcnNpb24SCAoGMy4xMS43Si4KCGNyZXdfa2V5EiIKIDQ5

- NGYzNjU3MjM3YWQ4YTMwMzViMmYxYmVlY2RjNjc3SjEKB2NyZXdfaWQSJgokOWMwNzg3NWUtMTMz

- Mi00MmMzLWFhZTEtZjNjMjc1YTQyNjYwShwKDGNyZXdfcHJvY2VzcxIMCgpzZXF1ZW50aWFsShEK

- C2NyZXdfbWVtb3J5EgIQAEoaChRjcmV3X251bWJlcl9vZl90YXNrcxICGAFKGwoVY3Jld19udW1i

- ZXJfb2ZfYWdlbnRzEgIYAUrbAgoLY3Jld19hZ2VudHMSywIKyAJbeyJrZXkiOiAiZTE0OGU1MzIw

- MjkzNDk5ZjhjZWJlYTgyNmU3MjU4MmIiLCAiaWQiOiAiNGFkYzNmMmItN2IwNC00MDRlLWEwNDQt

- N2JkNjVmYTMyZmE4IiwgInJvbGUiOiAidGVzdCByb2xlIiwgInZlcmJvc2U/IjogZmFsc2UsICJt

- YXhfaXRlciI6IDE1LCAibWF4X3JwbSI6IG51bGwsICJmdW5jdGlvbl9jYWxsaW5nX2xsbSI6ICIi

- LCAibGxtIjogImdwdC00byIsICJkZWxlZ2F0aW9uX2VuYWJsZWQ/IjogZmFsc2UsICJhbGxvd19j

- b2RlX2V4ZWN1dGlvbj8iOiBmYWxzZSwgIm1heF9yZXRyeV9saW1pdCI6IDIsICJ0b29sc19uYW1l

- cyI6IFsibGVhcm5fYWJvdXRfYWkiXX1dSo4CCgpjcmV3X3Rhc2tzEv8BCvwBW3sia2V5IjogImYy

- NTk3Yzc4NjdmYmUzMjRkYzY1ZGMwOGRmZGJmYzZjIiwgImlkIjogIjg2YzZiODE2LTgyOWMtNDUx

- Zi1iMDZkLTUyZjQ4YTdhZWJiMyIsICJhc3luY19leGVjdXRpb24/IjogZmFsc2UsICJodW1hbl9p

- bnB1dD8iOiBmYWxzZSwgImFnZW50X3JvbGUiOiAidGVzdCByb2xlIiwgImFnZW50X2tleSI6ICJl

- MTQ4ZTUzMjAyOTM0OTlmOGNlYmVhODI2ZTcyNTgyYiIsICJ0b29sc19uYW1lcyI6IFsibGVhcm5f

- YWJvdXRfYWkiXX1degIYAYUBAAEAABKOAgoQZWSU3+i71QSqlD8iiLdyWBII1Pawtza2ZHsqDFRh

- c2sgQ3JlYXRlZDABOdj2FKbwTPgXQZhUFabwTPgXSi4KCGNyZXdfa2V5EiIKIDQ5NGYzNjU3MjM3

- YWQ4YTMwMzViMmYxYmVlY2RjNjc3SjEKB2NyZXdfaWQSJgokOWMwNzg3NWUtMTMzMi00MmMzLWFh

- ZTEtZjNjMjc1YTQyNjYwSi4KCHRhc2tfa2V5EiIKIGYyNTk3Yzc4NjdmYmUzMjRkYzY1ZGMwOGRm

- ZGJmYzZjSjEKB3Rhc2tfaWQSJgokODZjNmI4MTYtODI5Yy00NTFmLWIwNmQtNTJmNDhhN2FlYmIz

- egIYAYUBAAEAABKRAQoQl3nNMLhrOg+OgsWWX6A9LxIINbCKrQzQ3JkqClRvb2wgVXNhZ2UwATlA

- TlCm8Ez4F0FASFGm8Ez4F0oaCg5jcmV3YWlfdmVyc2lvbhIICgYwLjYxLjBKHQoJdG9vbF9uYW1l

- EhAKDmxlYXJuX2Fib3V0X0FJSg4KCGF0dGVtcHRzEgIYAXoCGAGFAQABAAASkAIKEL9YI/QwoVBJ

- 1HBkTLyQxOESCCcKWhev/Dc8Kg5UYXNrIEV4ZWN1dGlvbjABOXiDFabwTPgXQcjEfqbwTPgXSi4K

- CGNyZXdfa2V5EiIKIDQ5NGYzNjU3MjM3YWQ4YTMwMzViMmYxYmVlY2RjNjc3SjEKB2NyZXdfaWQS

- JgokOWMwNzg3NWUtMTMzMi00MmMzLWFhZTEtZjNjMjc1YTQyNjYwSi4KCHRhc2tfa2V5EiIKIGYy

- NTk3Yzc4NjdmYmUzMjRkYzY1ZGMwOGRmZGJmYzZjSjEKB3Rhc2tfaWQSJgokODZjNmI4MTYtODI5

- Yy00NTFmLWIwNmQtNTJmNDhhN2FlYmIzegIYAYUBAAEAABLBBwoQ0Le1256mT8wmcvnuLKYeNRII

- IYBlVsTs+qEqDENyZXcgQ3JlYXRlZDABOYCBiKrwTPgXQRBeiqrwTPgXShoKDmNyZXdhaV92ZXJz

- aW9uEggKBjAuNjEuMEoaCg5weXRob25fdmVyc2lvbhIICgYzLjExLjdKLgoIY3Jld19rZXkSIgog

- NDk0ZjM2NTcyMzdhZDhhMzAzNWIyZjFiZWVjZGM2NzdKMQoHY3Jld19pZBImCiQyN2VlMGYyYy1h

- ZjgwLTQxYWMtYjg3ZC0xNmViYWQyMTVhNTJKHAoMY3Jld19wcm9jZXNzEgwKCnNlcXVlbnRpYWxK

- EQoLY3Jld19tZW1vcnkSAhAAShoKFGNyZXdfbnVtYmVyX29mX3Rhc2tzEgIYAUobChVjcmV3X251

- bWJlcl9vZl9hZ2VudHMSAhgBSuACCgtjcmV3X2FnZW50cxLQAgrNAlt7ImtleSI6ICJlMTQ4ZTUz

- MjAyOTM0OTlmOGNlYmVhODI2ZTcyNTgyYiIsICJpZCI6ICJmMTYyMTFjNS00YWJlLTRhZDAtOWI0

- YS0yN2RmMTJhODkyN2UiLCAicm9sZSI6ICJ0ZXN0IHJvbGUiLCAidmVyYm9zZT8iOiBmYWxzZSwg

- Im1heF9pdGVyIjogMiwgIm1heF9ycG0iOiBudWxsLCAiZnVuY3Rpb25fY2FsbGluZ19sbG0iOiAi

- Z3B0LTRvIiwgImxsbSI6ICJncHQtNG8iLCAiZGVsZWdhdGlvbl9lbmFibGVkPyI6IGZhbHNlLCAi

- YWxsb3dfY29kZV9leGVjdXRpb24/IjogZmFsc2UsICJtYXhfcmV0cnlfbGltaXQiOiAyLCAidG9v

- bHNfbmFtZXMiOiBbImxlYXJuX2Fib3V0X2FpIl19XUqOAgoKY3Jld190YXNrcxL/AQr8AVt7Imtl

- eSI6ICJmMjU5N2M3ODY3ZmJlMzI0ZGM2NWRjMDhkZmRiZmM2YyIsICJpZCI6ICJjN2FiOWRiYi0y

- MTc4LTRmOGItOGFiNi1kYTU1YzE0YTBkMGMiLCAiYXN5bmNfZXhlY3V0aW9uPyI6IGZhbHNlLCAi

- aHVtYW5faW5wdXQ/IjogZmFsc2UsICJhZ2VudF9yb2xlIjogInRlc3Qgcm9sZSIsICJhZ2VudF9r

- ZXkiOiAiZTE0OGU1MzIwMjkzNDk5ZjhjZWJlYTgyNmU3MjU4MmIiLCAidG9vbHNfbmFtZXMiOiBb

- ImxlYXJuX2Fib3V0X2FpIl19XXoCGAGFAQABAAASjgIKECr4ueCUCo/tMB7EuBQt6TcSCD/UepYl

- WGqAKgxUYXNrIENyZWF0ZWQwATk4kpyq8Ez4F0Hg85yq8Ez4F0ouCghjcmV3X2tleRIiCiA0OTRm

- MzY1NzIzN2FkOGEzMDM1YjJmMWJlZWNkYzY3N0oxCgdjcmV3X2lkEiYKJDI3ZWUwZjJjLWFmODAt

- NDFhYy1iODdkLTE2ZWJhZDIxNWE1MkouCgh0YXNrX2tleRIiCiBmMjU5N2M3ODY3ZmJlMzI0ZGM2

- NWRjMDhkZmRiZmM2Y0oxCgd0YXNrX2lkEiYKJGM3YWI5ZGJiLTIxNzgtNGY4Yi04YWI2LWRhNTVj

- MTRhMGQwY3oCGAGFAQABAAASeQoQkj0vmbCBIZPi33W9KrvrYhIIM2g73dOAN9QqEFRvb2wgVXNh

- Z2UgRXJyb3IwATnQgsyr8Ez4F0GghM2r8Ez4F0oaCg5jcmV3YWlfdmVyc2lvbhIICgYwLjYxLjBK

- DwoDbGxtEggKBmdwdC00b3oCGAGFAQABAAASeQoQavr4/1SWr8x7HD5mAzlM0hIIXPx740Skkd0q

- EFRvb2wgVXNhZ2UgRXJyb3IwATkouH9C8Uz4F0FQ1YBC8Uz4F0oaCg5jcmV3YWlfdmVyc2lvbhII

- CgYwLjYxLjBKDwoDbGxtEggKBmdwdC00b3oCGAGFAQABAAASkAIKEIgmJ3QURJvSsEifMScSiUsS

- CCyiPHcZT8AnKg5UYXNrIEV4ZWN1dGlvbjABOcAinarwTPgXQeBEynvxTPgXSi4KCGNyZXdfa2V5

- EiIKIDQ5NGYzNjU3MjM3YWQ4YTMwMzViMmYxYmVlY2RjNjc3SjEKB2NyZXdfaWQSJgokMjdlZTBm

- MmMtYWY4MC00MWFjLWI4N2QtMTZlYmFkMjE1YTUySi4KCHRhc2tfa2V5EiIKIGYyNTk3Yzc4Njdm

- YmUzMjRkYzY1ZGMwOGRmZGJmYzZjSjEKB3Rhc2tfaWQSJgokYzdhYjlkYmItMjE3OC00ZjhiLThh

- YjYtZGE1NWMxNGEwZDBjegIYAYUBAAEAABLEBwoQY+GZuYkP6mwdaVQQc11YuhII7ADKOlFZlzQq

- DENyZXcgQ3JlYXRlZDABObCoi3zxTPgXQeCUjXzxTPgXShoKDmNyZXdhaV92ZXJzaW9uEggKBjAu

- NjEuMEoaCg5weXRob25fdmVyc2lvbhIICgYzLjExLjdKLgoIY3Jld19rZXkSIgogN2U2NjA4OTg5

- ODU5YTY3ZWVjODhlZWY3ZmNlODUyMjVKMQoHY3Jld19pZBImCiQxMmE0OTFlNS00NDgwLTQ0MTYt

- OTAxYi1iMmI1N2U1ZWU4ZThKHAoMY3Jld19wcm9jZXNzEgwKCnNlcXVlbnRpYWxKEQoLY3Jld19t

- ZW1vcnkSAhAAShoKFGNyZXdfbnVtYmVyX29mX3Rhc2tzEgIYAUobChVjcmV3X251bWJlcl9vZl9h

- Z2VudHMSAhgBSt8CCgtjcmV3X2FnZW50cxLPAgrMAlt7ImtleSI6ICIyMmFjZDYxMWU0NGVmNWZh

- YzA1YjUzM2Q3NWU4ODkzYiIsICJpZCI6ICI5NjljZjhlMy0yZWEwLTQ5ZjgtODNlMS02MzEzYmE4

- ODc1ZjUiLCAicm9sZSI6ICJEYXRhIFNjaWVudGlzdCIsICJ2ZXJib3NlPyI6IGZhbHNlLCAibWF4

- X2l0ZXIiOiAxNSwgIm1heF9ycG0iOiBudWxsLCAiZnVuY3Rpb25fY2FsbGluZ19sbG0iOiAiIiwg

- ImxsbSI6ICJncHQtNG8iLCAiZGVsZWdhdGlvbl9lbmFibGVkPyI6IGZhbHNlLCAiYWxsb3dfY29k

- ZV9leGVjdXRpb24/IjogZmFsc2UsICJtYXhfcmV0cnlfbGltaXQiOiAyLCAidG9vbHNfbmFtZXMi

- OiBbImdldCBncmVldGluZ3MiXX1dSpICCgpjcmV3X3Rhc2tzEoMCCoACW3sia2V5IjogImEyNzdi

- MzRiMmMxNDZmMGM1NmM1ZTEzNTZlOGY4YTU3IiwgImlkIjogImIwMTg0NTI2LTJlOWItNDA0My1h

- M2JiLTFiM2QzNWIxNTNhOCIsICJhc3luY19leGVjdXRpb24/IjogZmFsc2UsICJodW1hbl9pbnB1

- dD8iOiBmYWxzZSwgImFnZW50X3JvbGUiOiAiRGF0YSBTY2llbnRpc3QiLCAiYWdlbnRfa2V5Ijog

- IjIyYWNkNjExZTQ0ZWY1ZmFjMDViNTMzZDc1ZTg4OTNiIiwgInRvb2xzX25hbWVzIjogWyJnZXQg

- Z3JlZXRpbmdzIl19XXoCGAGFAQABAAASjgIKEI/rrKkPz08VpVWNehfvxJ0SCIpeq76twGj3KgxU

- YXNrIENyZWF0ZWQwATlA9aR88Uz4F0HoVqV88Uz4F0ouCghjcmV3X2tleRIiCiA3ZTY2MDg5ODk4

- NTlhNjdlZWM4OGVlZjdmY2U4NTIyNUoxCgdjcmV3X2lkEiYKJDEyYTQ5MWU1LTQ0ODAtNDQxNi05

- MDFiLWIyYjU3ZTVlZThlOEouCgh0YXNrX2tleRIiCiBhMjc3YjM0YjJjMTQ2ZjBjNTZjNWUxMzU2

- ZThmOGE1N0oxCgd0YXNrX2lkEiYKJGIwMTg0NTI2LTJlOWItNDA0My1hM2JiLTFiM2QzNWIxNTNh

- OHoCGAGFAQABAAASkAEKEKKr5LR8SkqfqqktFhniLdkSCPMnqI2ma9UoKgpUb29sIFVzYWdlMAE5

- sCHgfPFM+BdB+A/hfPFM+BdKGgoOY3Jld2FpX3ZlcnNpb24SCAoGMC42MS4wShwKCXRvb2xfbmFt

- ZRIPCg1HZXQgR3JlZXRpbmdzSg4KCGF0dGVtcHRzEgIYAXoCGAGFAQABAAASkAIKEOj2bALdBlz6

- 1kP1MvHE5T0SCLw4D7D331IOKg5UYXNrIEV4ZWN1dGlvbjABOeCBpXzxTPgXQSjiEH3xTPgXSi4K

- CGNyZXdfa2V5EiIKIDdlNjYwODk4OTg1OWE2N2VlYzg4ZWVmN2ZjZTg1MjI1SjEKB2NyZXdfaWQS

- JgokMTJhNDkxZTUtNDQ4MC00NDE2LTkwMWItYjJiNTdlNWVlOGU4Si4KCHRhc2tfa2V5EiIKIGEy

- NzdiMzRiMmMxNDZmMGM1NmM1ZTEzNTZlOGY4YTU3SjEKB3Rhc2tfaWQSJgokYjAxODQ1MjYtMmU5

- Yi00MDQzLWEzYmItMWIzZDM1YjE1M2E4egIYAYUBAAEAABLQBwoQLjz7NWyGPgGU4tVFJ0sh9BII

- N6EzU5f/sykqDENyZXcgQ3JlYXRlZDABOajOcX3xTPgXQUCAc33xTPgXShoKDmNyZXdhaV92ZXJz

- aW9uEggKBjAuNjEuMEoaCg5weXRob25fdmVyc2lvbhIICgYzLjExLjdKLgoIY3Jld19rZXkSIgog

- YzMwNzYwMDkzMjY3NjE0NDRkNTdjNzFkMWRhM2YyN2NKMQoHY3Jld19pZBImCiQ1N2Y0NjVhNC03

- Zjk1LTQ5Y2MtODNmZC0zZTIwNWRhZDBjZTJKHAoMY3Jld19wcm9jZXNzEgwKCnNlcXVlbnRpYWxK

- EQoLY3Jld19tZW1vcnkSAhAAShoKFGNyZXdfbnVtYmVyX29mX3Rhc2tzEgIYAUobChVjcmV3X251

- bWJlcl9vZl9hZ2VudHMSAhgBSuUCCgtjcmV3X2FnZW50cxLVAgrSAlt7ImtleSI6ICI5OGYzYjFk

- NDdjZTk2OWNmMDU3NzI3Yjc4NDE0MjVjZCIsICJpZCI6ICJjZjcyZDlkNy01MjQwLTRkMzEtYjA2

- Mi0xMmNjMDU2OGNjM2MiLCAicm9sZSI6ICJGcmllbmRseSBOZWlnaGJvciIsICJ2ZXJib3NlPyI6

- IGZhbHNlLCAibWF4X2l0ZXIiOiAxNSwgIm1heF9ycG0iOiBudWxsLCAiZnVuY3Rpb25fY2FsbGlu

- Z19sbG0iOiAiIiwgImxsbSI6ICJncHQtNG8iLCAiZGVsZWdhdGlvbl9lbmFibGVkPyI6IGZhbHNl

- LCAiYWxsb3dfY29kZV9leGVjdXRpb24/IjogZmFsc2UsICJtYXhfcmV0cnlfbGltaXQiOiAyLCAi

- dG9vbHNfbmFtZXMiOiBbImRlY2lkZSBncmVldGluZ3MiXX1dSpgCCgpjcmV3X3Rhc2tzEokCCoYC

- W3sia2V5IjogIjgwZDdiY2Q0OTA5OTI5MDA4MzgzMmYwZTk4MzM4MGRmIiwgImlkIjogIjUxNTJk

- MmQ2LWYwODYtNGIyMi1hOGMxLTMyODA5NzU1NjZhZCIsICJhc3luY19leGVjdXRpb24/IjogZmFs

- c2UsICJodW1hbl9pbnB1dD8iOiBmYWxzZSwgImFnZW50X3JvbGUiOiAiRnJpZW5kbHkgTmVpZ2hi

- b3IiLCAiYWdlbnRfa2V5IjogIjk4ZjNiMWQ0N2NlOTY5Y2YwNTc3MjdiNzg0MTQyNWNkIiwgInRv

- b2xzX25hbWVzIjogWyJkZWNpZGUgZ3JlZXRpbmdzIl19XXoCGAGFAQABAAASjgIKEM+95r2LzVVg

- kqAMolHjl9oSCN9WyhdF/ucVKgxUYXNrIENyZWF0ZWQwATnoCoJ98Uz4F0HwXIJ98Uz4F0ouCghj

- cmV3X2tleRIiCiBjMzA3NjAwOTMyNjc2MTQ0NGQ1N2M3MWQxZGEzZjI3Y0oxCgdjcmV3X2lkEiYK

- JDU3ZjQ2NWE0LTdmOTUtNDljYy04M2ZkLTNlMjA1ZGFkMGNlMkouCgh0YXNrX2tleRIiCiA4MGQ3

- YmNkNDkwOTkyOTAwODM4MzJmMGU5ODMzODBkZkoxCgd0YXNrX2lkEiYKJDUxNTJkMmQ2LWYwODYt

- NGIyMi1hOGMxLTMyODA5NzU1NjZhZHoCGAGFAQABAAASkwEKENJjTKn4eTP/P11ERMIGcdYSCIKF

- bGEmcS7bKgpUb29sIFVzYWdlMAE5EFu5ffFM+BdBoD26ffFM+BdKGgoOY3Jld2FpX3ZlcnNpb24S

- CAoGMC42MS4wSh8KCXRvb2xfbmFtZRISChBEZWNpZGUgR3JlZXRpbmdzSg4KCGF0dGVtcHRzEgIY

- AXoCGAGFAQABAAASkAIKEG29htC06tLF7ihE5Yz6NyMSCAAsKzOcj25nKg5UYXNrIEV4ZWN1dGlv

- bjABOQCEgn3xTPgXQfgg7X3xTPgXSi4KCGNyZXdfa2V5EiIKIGMzMDc2MDA5MzI2NzYxNDQ0ZDU3

- YzcxZDFkYTNmMjdjSjEKB2NyZXdfaWQSJgokNTdmNDY1YTQtN2Y5NS00OWNjLTgzZmQtM2UyMDVk

- YWQwY2UySi4KCHRhc2tfa2V5EiIKIDgwZDdiY2Q0OTA5OTI5MDA4MzgzMmYwZTk4MzM4MGRmSjEK

- B3Rhc2tfaWQSJgokNTE1MmQyZDYtZjA4Ni00YjIyLWE4YzEtMzI4MDk3NTU2NmFkegIYAYUBAAEA

- AA==

- headers:

- Accept:

- - '*/*'

- Accept-Encoding:

- - gzip, deflate

- Connection:

- - keep-alive

- Content-Length:

- - '18925'

- Content-Type:

- - application/x-protobuf

- User-Agent:

- - OTel-OTLP-Exporter-Python/1.27.0

- method: POST

- uri: https://telemetry.crewai.com:4319/v1/traces

- response:

- body:

- string: "\n\0"

- headers:

- Content-Length:

- - '2'

- Content-Type:

- - application/x-protobuf

- Date:

- - Tue, 24 Sep 2024 21:57:39 GMT

- status:

- code: 200

- message: OK

-- request:

- body: '{"model": "gemma2:latest", "prompt": "### User:\nRespond in 20 words. Who

- are you?\n\n", "options": {}, "stream": false}'

- headers:

- Accept:

- - '*/*'

- Accept-Encoding:

- - gzip, deflate

- Connection:

- - keep-alive

- Content-Length:

- - '120'

- Content-Type:

- - application/json

- User-Agent:

- - python-requests/2.31.0

- method: POST

- uri: http://localhost:8080/api/generate

- response:

- body:

- string: '{"model":"gemma2:latest","created_at":"2024-09-24T21:57:51.284303Z","response":"I

- am Gemma, an open-weights AI assistant developed by Google DeepMind. \n","done":true,"done_reason":"stop","context":[106,1645,108,6176,4926,235292,108,54657,575,235248,235284,235276,3907,235265,7702,708,692,235336,109,107,108,106,2516,108,235285,1144,137061,235269,671,2174,235290,30316,16481,20409,6990,731,6238,20555,35777,235265,139,108],"total_duration":14046647083,"load_duration":12942541833,"prompt_eval_count":25,"prompt_eval_duration":177695000,"eval_count":19,"eval_duration":923120000}'

- headers:

- Content-Length:

- - '579'

- Content-Type:

- - application/json; charset=utf-8

- Date:

- - Tue, 24 Sep 2024 21:57:51 GMT

- status:

- code: 200

- message: OK

-version: 1

diff --git a/tests/cassettes/test_agent_with_ollama_llama3.yaml b/tests/cassettes/test_agent_with_ollama_llama3.yaml

new file mode 100644

index 000000000..beb146254

--- /dev/null

+++ b/tests/cassettes/test_agent_with_ollama_llama3.yaml

@@ -0,0 +1,36 @@

+interactions:

+- request:

+ body: '{"model": "llama3.2:3b", "prompt": "### User:\nRespond in 20 words. Who

+ which model are you?\n\n", "options": {"stop": ["\nObservation:"]}, "stream":

+ false}'

+ headers:

+ Accept:

+ - '*/*'

+ Accept-Encoding:

+ - gzip, deflate

+ Connection:

+ - keep-alive

+ Content-Length:

+ - '156'

+ Content-Type:

+ - application/json

+ User-Agent:

+ - python-requests/2.32.3

+ method: POST

+ uri: http://localhost:11434/api/generate

+ response:

+ body:

+ string: '{"model":"llama3.2:3b","created_at":"2025-01-02T20:07:07.623404Z","response":"I''m

+ an AI designed to assist and communicate with users, utilizing a combination

+ of natural language processing models.","done":true,"done_reason":"stop","context":[128006,9125,128007,271,38766,1303,33025,2696,25,6790,220,2366,18,271,128009,128006,882,128007,271,14711,2724,512,66454,304,220,508,4339,13,10699,902,1646,527,499,1980,128009,128006,78191,128007,271,40,2846,459,15592,6319,311,7945,323,19570,449,3932,11,35988,264,10824,315,5933,4221,8863,4211,13],"total_duration":1076617833,"load_duration":46505416,"prompt_eval_count":40,"prompt_eval_duration":626000000,"eval_count":22,"eval_duration":399000000}'

+ headers:

+ Content-Length:

+ - '690'

+ Content-Type:

+ - application/json; charset=utf-8

+ Date:

+ - Thu, 02 Jan 2025 20:07:07 GMT

+ status:

+ code: 200

+ message: OK

+version: 1

diff --git a/tests/cassettes/test_llm_call_with_ollama_gemma.yaml b/tests/cassettes/test_llm_call_with_ollama_gemma.yaml

deleted file mode 100644

index 9735ae23e..000000000

--- a/tests/cassettes/test_llm_call_with_ollama_gemma.yaml

+++ /dev/null

@@ -1,35 +0,0 @@

-interactions:

-- request:

- body: '{"model": "gemma2:latest", "prompt": "### User:\nRespond in 20 words. Who

- are you?\n\n", "options": {"num_predict": 30, "temperature": 0.7}, "stream":

- false}'

- headers:

- Accept:

- - '*/*'

- Accept-Encoding:

- - gzip, deflate

- Connection:

- - keep-alive

- Content-Length:

- - '157'

- Content-Type:

- - application/json

- User-Agent:

- - python-requests/2.31.0

- method: POST

- uri: http://localhost:8080/api/generate

- response:

- body:

- string: '{"model":"gemma2:latest","created_at":"2024-09-24T21:57:52.329049Z","response":"I

- am Gemma, an open-weights AI assistant trained by Google DeepMind. \n","done":true,"done_reason":"stop","context":[106,1645,108,6176,4926,235292,108,54657,575,235248,235284,235276,3907,235265,7702,708,692,235336,109,107,108,106,2516,108,235285,1144,137061,235269,671,2174,235290,30316,16481,20409,17363,731,6238,20555,35777,235265,139,108],"total_duration":991843667,"load_duration":31664750,"prompt_eval_count":25,"prompt_eval_duration":51409000,"eval_count":19,"eval_duration":908132000}'

- headers:

- Content-Length:

- - '572'

- Content-Type:

- - application/json; charset=utf-8

- Date:

- - Tue, 24 Sep 2024 21:57:52 GMT

- status:

- code: 200

- message: OK

-version: 1

diff --git a/tests/cassettes/test_llm_call_with_ollama_llama3.yaml b/tests/cassettes/test_llm_call_with_ollama_llama3.yaml

new file mode 100644

index 000000000..c1cee2cc8

--- /dev/null

+++ b/tests/cassettes/test_llm_call_with_ollama_llama3.yaml

@@ -0,0 +1,36 @@

+interactions:

+- request:

+ body: '{"model": "llama3.2:3b", "prompt": "### User:\nRespond in 20 words. Which

+ model are you??\n\n", "options": {"num_predict": 30, "temperature": 0.7}, "stream":

+ false}'

+ headers:

+ Accept:

+ - '*/*'

+ Accept-Encoding:

+ - gzip, deflate

+ Connection:

+ - keep-alive

+ Content-Length:

+ - '164'

+ Content-Type:

+ - application/json

+ User-Agent:

+ - python-requests/2.32.3

+ method: POST

+ uri: http://localhost:11434/api/generate

+ response:

+ body:

+ string: '{"model":"llama3.2:3b","created_at":"2025-01-02T20:24:24.812595Z","response":"I''m

+ an AI, specifically a large language model, designed to understand and respond

+ to user queries with accuracy.","done":true,"done_reason":"stop","context":[128006,9125,128007,271,38766,1303,33025,2696,25,6790,220,2366,18,271,128009,128006,882,128007,271,14711,2724,512,66454,304,220,508,4339,13,16299,1646,527,499,71291,128009,128006,78191,128007,271,40,2846,459,15592,11,11951,264,3544,4221,1646,11,6319,311,3619,323,6013,311,1217,20126,449,13708,13],"total_duration":827817584,"load_duration":41560542,"prompt_eval_count":39,"prompt_eval_duration":384000000,"eval_count":23,"eval_duration":400000000}'

+ headers:

+ Content-Length:

+ - '683'

+ Content-Type:

+ - application/json; charset=utf-8

+ Date:

+ - Thu, 02 Jan 2025 20:24:24 GMT

+ status:

+ code: 200

+ message: OK

+version: 1

diff --git a/tests/cli/tools/test_main.py b/tests/cli/tools/test_main.py

index 10c29b920..b06c0b28c 100644

--- a/tests/cli/tools/test_main.py

+++ b/tests/cli/tools/test_main.py

@@ -28,9 +28,10 @@ def test_create_success(mock_subprocess):

with in_temp_dir():

tool_command = ToolCommand()

- with patch.object(tool_command, "login") as mock_login, patch(

- "sys.stdout", new=StringIO()

- ) as fake_out:

+ with (

+ patch.object(tool_command, "login") as mock_login,

+ patch("sys.stdout", new=StringIO()) as fake_out,

+ ):

tool_command.create("test-tool")

output = fake_out.getvalue()

@@ -82,7 +83,7 @@ def test_install_success(mock_get, mock_subprocess_run):

capture_output=False,

text=True,

check=True,

- env=unittest.mock.ANY

+ env=unittest.mock.ANY,

)

assert "Successfully installed sample-tool" in output

diff --git a/tests/crew_test.py b/tests/crew_test.py

index 0cb8f469c..8354f6584 100644

--- a/tests/crew_test.py

+++ b/tests/crew_test.py

@@ -333,16 +333,16 @@ def test_manager_agent_delegating_to_assigned_task_agent():

)

mock_task_output = TaskOutput(

- description="Mock description",

- raw="mocked output",

- agent="mocked agent"

+ description="Mock description", raw="mocked output", agent="mocked agent"

)

# Because we are mocking execute_sync, we never hit the underlying _execute_core

# which sets the output attribute of the task

task.output = mock_task_output

- with patch.object(Task, 'execute_sync', return_value=mock_task_output) as mock_execute_sync:

+ with patch.object(

+ Task, "execute_sync", return_value=mock_task_output

+ ) as mock_execute_sync:

crew.kickoff()

# Verify execute_sync was called once

@@ -350,12 +350,20 @@ def test_manager_agent_delegating_to_assigned_task_agent():

# Get the tools argument from the call

_, kwargs = mock_execute_sync.call_args

- tools = kwargs['tools']

+ tools = kwargs["tools"]

# Verify the delegation tools were passed correctly

assert len(tools) == 2

- assert any("Delegate a specific task to one of the following coworkers: Researcher" in tool.description for tool in tools)

- assert any("Ask a specific question to one of the following coworkers: Researcher" in tool.description for tool in tools)

+ assert any(

+ "Delegate a specific task to one of the following coworkers: Researcher"

+ in tool.description

+ for tool in tools

+ )

+ assert any(